Multiverse, not Metaverse

Generative AI lets us explore Many worlds owned by Nobodies, and this is fundamentally better than One world owned by Somebody

See responses from Emad Mostaque, Simon Willison, Sam McRoberts, and others.

It is popular to regard the rising field of prompt engineering as spellcasting, where getting the human-machine interface correct becomes a matter of finding the right sequence of tokens (“trending on artstation, 8k, Greg Rutkowski”), asking nicely (“let’s think step by step”), or telling it what it can’t do (“you can’t do math”).

Omit the right incantation, get lousy results, and it’s your fault. This has led to a cottage industry of books, guides, and galleries that tell you how to get better results, much like a collective Hermione telling you to say “LeviOHsa, not LevioSAH” to get the spell to work.

But there is another perspective: Prompts as Portals into an alternate universe.

Prompts as Portals

What if Jurassic Park and La La Land had different visual styles?

What if Chris Farley had been cast as Joker?

What if the moon landing was faked?

What if hamsters understood every corner of the universe?

And so on in increasingly nonsensical proportions - the more nonsensical, the more it merely means that a given universe is “further away” from our universe, much like how Verse Jumping to a wackier universe in the movie Everything Everywhere All At Once requires doing wackier things.

In multiverse exploration, just as in image generation, the artists have raced far ahead of the scientists: popular culture has been beset with Multiverses and Spider-Verses and Crises and What Ifs and alternate timeline stories for decades. As a people we are ready for this idea; we just don’t yet know what to do with it.

Stable Diffusion (hereafter SD) gave us many new knobs to tweak, from the big ones like sampling steps and CFG (aka --scale) to alternative samplers from k_lms to DDIM. The current best practice in AI image generation is to do a broad parametric search with low-quality parameters for speed, then find interesting bases to upscale into high-quality versions, and even rotate around the scene (Oct 2022 update: DreamFusion does this in a limited context, and 3d-diffusion does too).
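Here is a minimal sketch of that "search broadly and cheaply, then re-render the keepers" workflow. It only builds the list of jobs and does not call any real image model: `Job`, `draft_grid`, and `promote` are hypothetical names made up for illustration, and the step counts (15 for drafts, 100 for finals) are assumed values, not recommendations from any SD tool.

```python
from dataclasses import dataclass, replace
from itertools import product


@dataclass(frozen=True)
class Job:
    """One hypothetical render request; no real SD API is implied."""
    prompt: str
    seed: int
    steps: int      # sampling steps
    scale: float    # CFG scale
    sampler: str    # e.g. "k_lms" or "ddim"


def draft_grid(prompt, seeds, scales, samplers, steps=15):
    """Cheap pass: every seed/scale/sampler combination at a low step count."""
    return [
        Job(prompt, seed, steps, scale, sampler)
        for seed, scale, sampler in product(seeds, scales, samplers)
    ]


def promote(job, steps=100):
    """Expensive pass: the same job with only the step count raised."""
    return replace(job, steps=steps)


drafts = draft_grid(
    "a hamster who understands every corner of the universe",
    seeds=range(42, 46),
    scales=(5.0, 7.5, 10.0),
    samplers=("k_lms", "ddim"),
)
print(len(drafts))  # 4 seeds x 3 scales x 2 samplers = 24

# Pretend a human eyeballed the grid and liked drafts 3 and 17.
finals = [promote(drafts[i]) for i in (3, 17)]
for job in finals:
    print(job.seed, job.scale, job.sampler, job.steps)
```

The one design choice worth noting is that `promote` leaves the prompt, seed, scale, and sampler untouched, so the expensive render stays as close as possible to the draft that caught your eye.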

In a sense, our prompts are search requests in latent multiversal space, and the model zeroes in on the request through something like vector search. In these images we are “remotely viewing” an alternate reality where whatever we asked for exists, and then we tune our parameters to see it better, much like we tune aperture and shutter speed in conventional cameras.

Only 4.3 Billion Universes?

Of all the parameters in SD, the seed parameter is the most important anchor for keeping the image generation the same. In SD-space, there are only about 4.3 billion possible seeds. You could consider each seed a different universe, numbered as the Marvel universe does (where the main timeline is #616, and #616 Dr Strange visits #838 and a dozen other universes). Universe #42 is the best explored, because someone decided to make it the default for txt2img.py (probably a Hitchhiker’s Guide reference). But you could change the seed, and get a totally different result from what is effectively a different universe.
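The 4.3 billion figure is what you get from a 32-bit seed, and the "same seed, same universe" property is ordinary pseudo-random determinism. A small sketch, assuming a 32-bit seed and using Python's standard `random` module as a stand-in for the model's noise generator (`starting_noise` is a hypothetical helper, not part of SD):

```python
import random

SEED_BITS = 32  # assumption: matches the ~4.3 billion figure in the text
print(f"{2 ** SEED_BITS:,}")  # 4,294,967,296


def starting_noise(seed, n=4):
    """Stand-in for the initial noise an image is denoised from."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


# Same seed, same starting point: you revisit the same universe.
print(starting_noise(42) == starting_noise(42))  # True

# Neighboring seed, unrelated starting point: a different universe.
print(starting_noise(42) == starting_noise(43))  # False
```

Nothing about seed 43 is "near" seed 42 in any visual sense; adjacent numbers simply index unrelated noise.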

It is an uncomfortable limitation of the human imagination that we so often limit ourselves to finite, low-numbered seed space; our minds cannot comprehend or traverse infinity (although perhaps we can see the beginning of it?).

In an abstract sense, GPT-3 is a far better multiverse portal than SD. What SD generates can often be recognized as art (or at least bad art), whereas GPT-3 easily comes up with stuff that clearly is not from our sector of the multiverse:

This is kinda fixable, but also the “fix” only makes sense to people from our universe; if we were ever to explore an alternative universe where 1 x 1 = 2, GPT-X is surely the better vehicle to do it. This seems like a whimsical idea, but imagine how different physics, chemistry, biology would be if the universe had different constants for π, lightspeed, gravity, electricity, thermodynamics or mass-energy equivalence.

Multiversal Transfer

We can animate and voice our projections with ease - right now they are very low fidelity inferences from single images, but with time, fidelity will increase. The key word to look out for here is coherence: the sense that we are staying within the “same universe” from frame to frame, telling us a story about our faraway cousins.

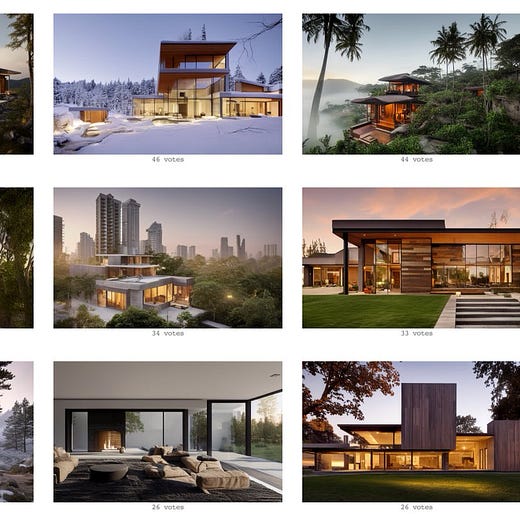

But viewing multiverses we cannot touch can only be so interesting. Art is inspiring and beautiful, but to be truly life-changing it needs to be tangible. This is why Pieter Levels’ work with This House Does Not Exist is qualitatively different: he is crowdsourcing the multiversal search, and then importing the viewed house from their universe to ours by getting a professional 3D model and render, at which point it is actually possible to build it in our universe.

Blue Multiverse, Red Metaverse

I want to leave you with a thought on why excitement for the Multiverse is orders of magnitude higher than the Metaverse.

All the big tech CEOs from Zuck to Satya to Baszucki to Jason Citron are intently making bets on the Metaverse, and VCs have lined up to put hundreds of millions of dollars into virtual land startups, because a finite-supply, zero-sum game is how the traditional power structure exerts control and extracts wealth. The Metaverse is how the Somebodies of today successfully transition into Somebodies of tomorrow.

But you cannot own a Multiverse. Whatever virtual land you “own”, my next favorite one is one seed away in a neighboring universe. Absolute nobodies can come up with new prompts and models for their own purposes, completely permissionlessly and autonomously. That is at once wonderful to the creative, sovereign individual, yet terrifying to the masters of our current universe.

Capitalism understands scarcity.

We’ll need a new model for infinity.

I've used the reverse of this analogy before! That is, instead of using the multiverse as an analogy to help me understand latent space, I've used latent space as an analogy to help me understand the multiverse.

Good tweet that echoes scarcity vs infinity: https://twitter.com/thesephist/status/1570452001489670145