Special discounts up for AIE Melbourne (LS discount) and AIE World’s Fair (group discounts up to 25% - CFPs still open for Autoresearch and Vertical AI) Cya there!

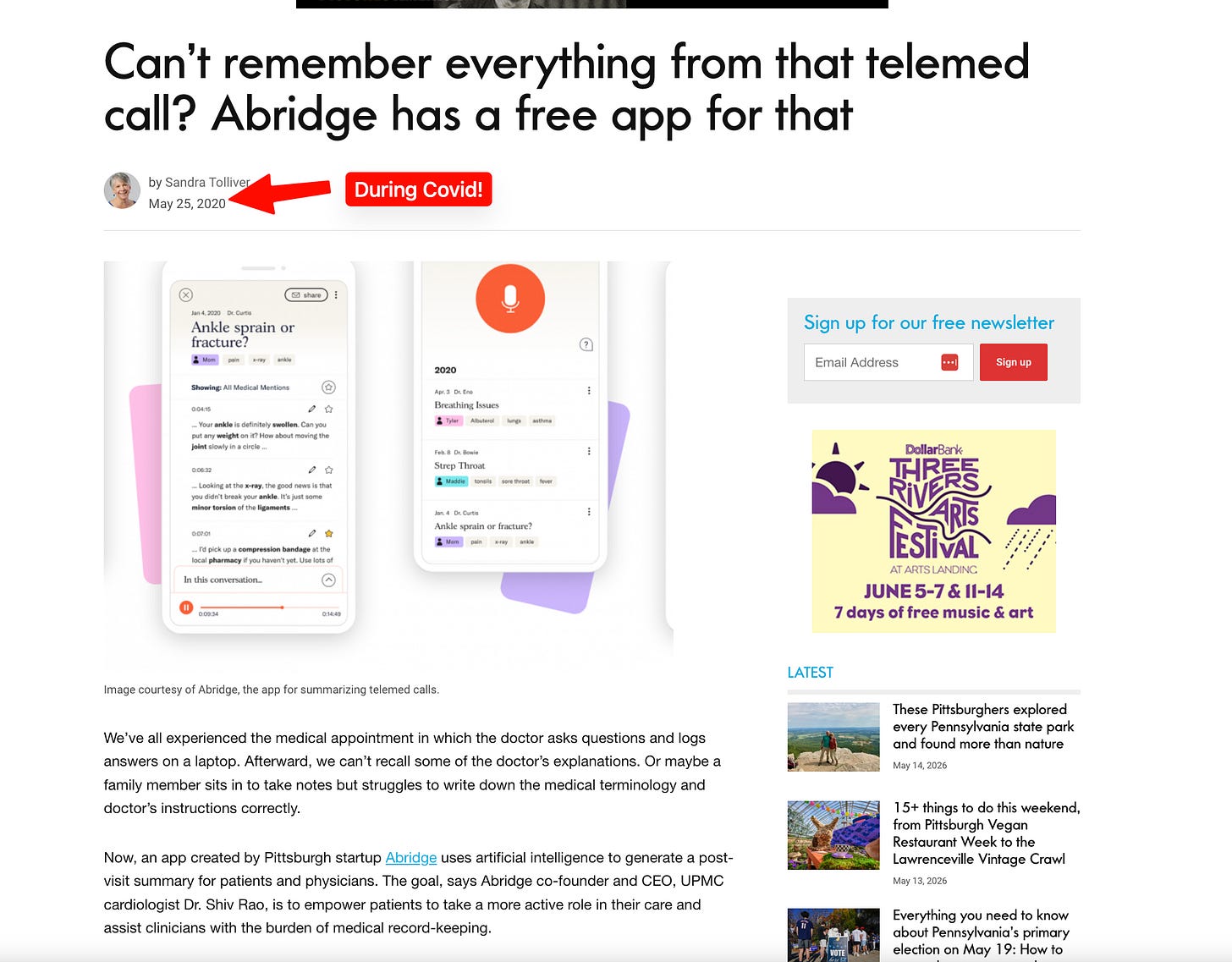

Abridge did not start as a “GPT wrapper”. It was founded in 2018, years before the Cambrian explosion of AI application layer companies. OpenAI launched ChatGPT publicly on November 30, 2022, and by then Abridge had already spent years doing the unglamorous work of building trust for one of the highest-context, most important workflows in healthcare: the conversation between a patient and a clinician.

Abridge’s original wedge was clinical documentation. Listen to the visit, generate the note, reduce the clerical burden, and let clinicians spend more time with patients instead of the EHR. Because Abridge focused on how doctors actually document, how health systems actually buy, how EHR integration actually works, how clinicians verify outputs, and how missing context during a visit turns into downstream friction across billing, prior authorization, quality, and follow-up, the adoption of LLMs became a force multiplier on a workflow already optimized for sensitive context gathering.

The company has scaled fast: Abridge says it is projected to support 80M+ patient-clinician conversations this year across 250 large and complex U.S. health systems, with support for 28+ languages and 50+ specialties. It raised $300M at a $5.3B valuation in June 2025, after a $250M round earlier that year.

Today, Janie Lee and Chaitanya “Chai” Asawa of Abridge join us for another crossover pod with Redpoint’s Jacob Effron (who is on the board of Abridge) to dive into how Abridge is building the clinical intelligence layer for healthcare, starting with ambient documentation, then expanding into clinical decision support, prior authorization, payer/provider/pharma workflows, and eventually real-time agents that act before, during, and after the patient conversation.

We go inside the product, data, infra, evals, workflow, privacy, and org design choices behind bringing AI into one of the highest-stakes enterprise environments, from 100M+ medical conversations and specialty-specific evals to real-time alerts, EHR integration, de-identification, clinician-scientist teams, and why healthcare may solve some of the hardest AI problems first.

We discuss:

Why Abridge started with clinical documentation, “pajama time,” and saving clinicians 10–20 hours a week

The transition from ambient scribe to clinical intelligence layer: save time, save money, and save lives

Why conversations between patients and clinicians may be the most important workflow in healthcare (patient visit summary feature)

Chai’s “healthcare-coded Glean” framing: context is king, but healthcare raises the stakes on safety, evals, and rollout

Why Abridge wants AI to feel like “air conditioning”: always in the background, but only interrupting when it truly matters

The prior authorization example: turning a denied MRI weeks later into real-time guidance while the patient is still in the room

Why payer policies, EHR data, medical literature, and hospital-specific guidelines make the problem hard, and also create the moat

How Abridge thinks about ambient form factors: mobile, desktop, in-room devices, nursing workflows, multimodality, and future AR

The multi-sided healthcare customer: CMIOs, CFOs, CIOs, clinicians, patients, payers, and pharma

The hardest AI problem at Abridge: high-quality, low-latency, low-cost real-time support in a high-stakes clinical setting

When Abridge uses frontier models vs proprietary models, and why its unique data from medical conversations matters

Why “every agent is a coding agent underneath,” and how the EHR can be thought of as a filesystem for healthcare agents

How Abridge approaches personalization across individual doctors, specialties, and health systems

Why “AI slop” is AI without context, and how edits, memories, and clinician preferences create a data flywheel

Abridge’s eval stack: LFDs, LLM judges, in-house clinicians, third-party evaluators, specialty-specific evals, and progressive rollout

HIPAA, PHI, de-identification, one-way anonymization, customer contracts, and learning from healthcare data safely

What changes when you operate at 100M+ conversations: reliability, cost, post-training, model routing, and infrastructure optimization

Why the same clinical conversation can serve doctors, patients, payers, pharma, and future clinical-trial workflows

How Abridge works with EHRs, and why deep interoperability is table stakes for clinician adoption

Why healthcare AI has regulatory tailwinds, why 80/20 does not work here, and why high-stakes domains may drive AI forward

Why Abridge embeds “clinician scientists” into product and eval teams

What Chai learned from Glean about search, quality, and durable AI infrastructure

Why the future of AI infra may look like context layers, event-driven systems, Kafka, Temporal, sockets, CRDTs, and tools built for humans

Why Janie changed her mind on “PRDs are dead,” and why crisp written clarity matters more in complex AI products

How Abridge uses Claude Code, Cursor, and coding agents internally

Abridge:

Website: https://www.abridge.com/

Janie Lee:

LinkedIn: https://www.linkedin.com/in/janiejlee

Chaitanya “Chai” Asawa:

LinkedIn: https://www.linkedin.com/in/casawa

Timestamps

00:00:00 Introduction and what Abridge does

00:02:05 From ambient documentation to clinical intelligence

00:04:04 Clinical decision support and context as king

00:06:57 Alert fatigue, proactive intelligence, and prior authorization

00:12:36 Ambient AI form factors and healthcare customers

00:16:59 The hardest AI problems in healthcare

00:18:26 Frontier models, proprietary data, and model strategy

00:21:07 The EHR as a filesystem for agents

00:24:03 Personalization, memory, and clinician preferences

00:30:40 Evals, LLM judges, and progressive rollout

00:36:47 HIPAA, de-identification, and privacy

00:39:21 100M conversations and operating at scale

00:44:10 EHR integration and the clinical intelligence layer

00:46:39 Healthcare regulation, latency, and high-stakes AI

00:50:11 Clinician scientists and long-tail quality

00:53:04 Lessons from Glean and durable AI infrastructure

00:57:03 The future of agentic healthcare workflows

00:57:34 PRDs, product clarity, and building serious AI products

01:03:11 AI coding tools at Abridge

01:04:06 Outro

Transcript

Introduction: Abridge, Clinical Intelligence, and the Latent Space x Unsupervised Learning Crossover

Swyx [00:00:00]: Okay. This is a special crossover Latent Space Unsupervised Learning pod.

Jacob [00:00:07]: Very excited to do this.

Jacob [00:00:08]: At this point, we get together once a year.

Swyx [00:00:10]: Once a year

Jacob [00:00:11]: And this is a fun occasion to get to do it on.

Swyx [00:00:13]: I really wanted to talk to Abridge but I felt very underqualified because healthcare is not something we cover very intensely. It just so happens that Redpoint are big investors in and supporters of Abridge.

Jacob [00:00:27]: Anytime you want to have a portfolio company on your podcast

Jacob [00:00:29]: Please, by all means.

Swyx [00:00:31]: So we’ll introduce our guests. Chai and Janie, welcome to the pod.

Janie [00:00:34]: Thanks for having us.

Chai [00:00:35]: Thank you.

Janie [00:00:35]: We’re excited to be here.

Chai [00:00:36]: Thank you.

Swyx [00:00:36]: So for listeners, what do you guys do, just to situate you guys in the company?

Janie [00:00:42]: Abridge is a clinical intelligence layer for health systems. We really started with documentation and building for clinicians and as we think about reducing the burden that clinicians have, they’re spending 10 to 20 hours a week on documentation. There’s a massive doctor shortage in the country. We also think that conversations between patients and clinicians are probably the most important workflow in healthcare. It’s where care is given and received but if you think about the 20% of our GDP that goes towards healthcare, almost everything is a derivative of that conversation, whether it’s the claim, the payment, the actual diagnosis given, the treatment. And we’ve started with a conversation to reduce the burden for doctors on documentation but we’re really excited about the path ahead as we become this broader clinical intelligence layer.

Chai [00:01:34]: I’m Chai. I work on clinical decision support at Abridge.

Swyx [00:01:37]: Yes.

Chai [00:01:37]: And so as Janie said, we’re uniquely situated in that we started off with the clinical note. What I’m really excited about, and where we’re expanding, is: what are all the things you can do before the conversation, during the conversation and after the conversation if you did have access to all the context about patients, payer guidelines and medical literature, and could put that together and serve it? Healthcare could look fundamentally different.

Swyx [00:02:01]: And that’s the context engine that you guys have?

Chai [00:02:04]: Yes.

Swyx [00:02:04]: Is that what it’s called? Okay.

Swyx [00:02:05]: So historically, as I understand it, the company started in 2018. A lot of people would be familiar with the AI voice notes form factor, where doctors would ask, “Well, do you consent to being recorded?” It replaces handwriting and what have you. But it sounds like more recently there’s been a big transition in the company. Tell me about the broader transition.

From Documentation to Clinical Intelligence: Save Time, Save Money, Save Lives

Janie [00:02:26]: So from a transition perspective, we really think about our journey in acts. The first act was: how do we help save time? And that’s where a lot of that original product was.

Swyx [00:02:37]: By the way, one of those interesting stats

Swyx [00:02:39]: On your landing page was, doctors spend time after hours.

Janie [00:02:43]: They call it pajama time.

Swyx [00:02:44]: Why is that pajama time?

Janie [00:02:46]: Doctors after work in their pajamas

Swyx [00:02:48]: In their pajamas. Oh

Janie [00:02:49]: At home are just writing and catching up on their notes every day.

Janie [00:02:53]: Some of our favorite customer stories go in a Slack channel we have called Love Stories. We have clinicians telling us, “Abridge has kept us from retiring early,” or “We’re now finally able to

Janie [00:03:06]: go home and eat dinner with our kids for the first time.”

Chai [00:03:08]: Save the marriage in some cases.

Swyx [00:03:10]: One of the quotes was “We’re not divorcing anymore.”

Swyx [00:03:12]: I’m asking, “Why?”

Swyx [00:03:14]: Because they’re working too much.

Janie [00:03:16]: But in terms of where we’re going and where we’re expanding, we really think about our second and third acts. The second is how do we help health systems save and make more money. Health systems are operating with record-low operating margins. It’s getting harder and harder to serve patients, and they have some regulatory tailwinds but also a lot of headwinds coming their way, and AI is ripe for helping on the save-and-make-more-money piece. And then ultimately, how do we help save lives? The fact that our software and our product is opened millions of times a week, before, during and after a patient walks in the room, gives us a massive opportunity with products like clinical decision support, which Chai is building, but so many others too, to improve patient outcomes. It’s probably one of the most important workflows and problems to be going after right now.

From Glean to Healthcare: Context Is King

Jacob [00:04:04]: One thing that’s interesting, Chai, is you came over to Abridge from Glean and clinical decision support, which for our listeners is, in the context of a visit, helping a doctor figure out the right type of care. It’s really a search problem in many ways, going through lots of different data sources. Very analogous to your previous role as one of the earliest engineers over at Glean. I’m sure a lot of our listeners are curious what’s similar about the problems that you’re going after now and what feels different, now that you’re in healthcare.

Chai [00:04:33]: Very similar. Taking a step back, with every wave, there are a lot of very similar patterns that happen across different products. A lot of social networking products look the same. A lot of credit-based products look the same. And we’re seeing something very similar in the agent era with many companies, of course, in Redpoint’s portfolio and so forth. The key insight shared by both companies is that you have amazing models but context is king. Context is what puts them to work. So in a lot of ways I see this as a healthcare-coded version of Glean, but the differences are really interesting. A couple of things come to mind. First and foremost, the rigor of the setting we’re in. The downside risk is extremely high here in healthcare. It can be fatal in some cases: you prescribe something that the patient is allergic to, for example. Whereas at Glean, it’s “Oh, you got the question wrong.” It wasn’t the end of the world in most cases. And so what does that mean? That shapes our evaluation strategy, both offline evaluation and progressive rollout, and there’s a lot more we could go into there. The second thing that comes to mind is vertical versus horizontal. In both cases, there’s a large variance, but because Glean is a much more horizontal company, there’s a wide variance in the personas and companies that you’re working with. We also have a variance of personas: different types of specialties, different hospital systems. But the variance is a little more narrow. So from a product perspective, you’re able to focus far more, especially when you have a maturing technology and you’re building new products that never existed before. It lets you go after them much more easily, especially in healthcare, where so many problems were solved with labor and process that it’s extremely ripe for AI to keep helping augment and enable.
And the final thing that’s really interesting about Abridge specifically, compared to many other companies in the AI era, is the modality we started with: we’re ambient and we’re always listening in the background. Many more AI products will go that way, but it’s how we started. And that’s the greatest form of AI we can create, AI that’s seamless. You’re not looking at your screen. It’s always there. It’s always helping you out and being proactive. It’s the Jarvis vision: at every hackathon I went to over the past decade, there was always a Jarvis competitor. But Abridge very much started from that opportunity and continues to go that way.

Ambient AI and Alert Fatigue: When Should the Product Interrupt?

Jacob [00:06:57]: One thing that is super interesting then from a product perspective is you have this always-on seamless in the background and then you have to decide when you break the wall almost and say, “Hey, clinician, you might not have thought about X,” or whatever it is that you want to do. And in healthcare traditionally there’s been this idea of alert fatigue and a million pop-ups and then a doctor just ignores all of them. It’s probably a pattern that a lot of builders are thinking through now. How do you think about the right way to intervene or to pop up in a doctor visit?

Janie [00:07:26]: It’s such a good question. Alerts are notorious in healthcare specifically. Over 90% of alerts are ignored. The first and most important thing is context is everything, as Chai alluded to and I also think about how do we go from being reactive alerting to really proactive intelligence at the point at which it matters most. One thing we like to say is we want our product to feel like air conditioning. It should be in the background just making things better and if there is something that has great clinical risk and we’re acutely aware that intervening now and not later is incredibly important, we should decide to act. But if you think about proactive versus reactive, instead of alerting a clinician during a visit when they’re with their patient having a pretty serious and sensitive conversation, how do we prep a clinician before they walk into the room with that patient? And so historically, clinicians might have to manually go through charts with a patient that they’ve had over the course of months or years and they’ll try to suss out what are the things they should be doing. You can imagine a world with Abridge. We’ll summarize all of the most recent context for you, tell you based on the reason for a visit the patient is coming in for the types of things you should be discussing. And so you’re going into that conversation prepped rather than walking in cold to that patient visit and then having this product interrupt you five or 10 times throughout the visit. And there might be times where it’s really important to interrupt. We have a product called Prior Authorization and so this is when you may go into a doctor’s office with knee pain. They’ll prescribe you an MRI and so many of us have had this experience before, where in four weeks you’ll get a call saying, “Hey, Sean, that MRI that you were prescribed wasn’t approved and why don’t you come back in? 
We’ll figure it out.” In a world with Abridge, we might choose to quietly but still alert a doctor in that visit. And alert is probably not even the word we would want to use. Before a patient leaves, we would want to tell the doctor, “Hey, Doctor, before Sean leaves, you should ask him, has he had physical therapy and has his pain lasted for more than six weeks? Because the Aetna plan that he’s on in California requires six things. We’ve already confirmed four of them have been met ‘cause we have all the context. But these two last criteria, if you can address with Sean before he leaves the room, we could guarantee that your MRI is approved before you leave.” And so when you think about clinical usefulness, impact to the patient, there are instances in which if we can catch a doctor while the patient is still in the room, as we think about save time, save money, save lives, we get to check all of those boxes. But when doctors have 15 minutes between visits, we have to be really thoughtful about when it matters.
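The prior authorization walkthrough above boils down to a checklist diff: compare a payer policy's criteria against what the available context already confirms, then surface only what is left while the patient is still in the room. Here is a minimal Python sketch of that idea. The criterion keys, the first four policy items, and the function name are invented for illustration and are not Abridge's implementation; only the two outstanding questions come from the conversation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    key: str       # hypothetical identifier for one payer requirement
    question: str  # what the clinician could ask before the patient leaves


def unmet_criteria(policy, confirmed_keys):
    """Return the policy criteria that the available context has not confirmed."""
    return [c for c in policy if c.key not in confirmed_keys]


# Illustrative six-item policy; keys and the first four items are assumptions.
MRI_POLICY = [
    Criterion("knee_exam_documented", "Was a knee exam documented?"),
    Criterion("xray_on_file", "Is a prior X-ray on file?"),
    Criterion("nsaids_tried", "Has the patient tried anti-inflammatories?"),
    Criterion("no_contraindication", "Is there any contraindication to MRI?"),
    Criterion("physical_therapy_tried", "Has the patient had physical therapy?"),
    Criterion("pain_over_six_weeks", "Has the pain lasted more than six weeks?"),
]

# Four criteria already confirmed from chart and conversation context (assumed).
confirmed = {
    "knee_exam_documented",
    "xray_on_file",
    "nsaids_tried",
    "no_contraindication",
}

remaining = unmet_criteria(MRI_POLICY, confirmed)
for criterion in remaining:
    print(criterion.question)
```

Keeping the output to the unmet items only is what makes the nudge short enough to act on within a visit, which is the alert-fatigue point made earlier in the conversation.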

Prior Authorization: Reducing Latency in Care

Chai [00:10:23]: One interesting product opportunity AI has is reducing latency in the world. Prior authorization is an example of where care gets delayed, and great AI can reduce that. And the problem with alerts before was partially a technical problem: the quality of your alerts really matters. They’re going to get ignored if you get alerts that... It’s similar in engineering, where there are noisy alerts that you can’t act on. But if you can make really high-quality alerts with both the context, as Janie said, and really high-quality models, then you can create a whole other game.

Janie [00:10:53]: And I really like that example because it starts to tease apart what makes this so hard and unique. One, to make that prior authorization example possible, think about all the data that you need to have. You need to integrate with the electronic health record to know all of the patient context. Do we have access to your previous labs, previous imaging? And then to match you and to know that you’re on Aetna, we have to collect all of the different payer policies and they vary by state. Some of these payer policies live on websites. Some of them live in unstructured 50-page PDF files.

Jacob [00:11:31]: I thought this episode was

Jacob [00:11:31]: To make sure we didn’t scare people from healthcare.

Janie [00:11:34]: But when you think about the things that make it hard, it also gives you the moat.

Janie [00:11:39]: And then the second is the AI and the model quality we need to be able to hang our hat on. When I worked at Opendoor, I worked on pricing models, and every outlier wiped out the margins of 30. Similarly here in healthcare, the bar for accuracy is so high. And then I’d say the last is that workflow is everything. If insurance companies deploy AI, it typically happens too late, and this is when you have the notorious, comical examples of AIs just fighting each other when it’s too late. But if we can pull forward both the use of AI and the ability to solve problems while the patient’s in the room, you can start to collapse what typically takes weeks or months after your visit, ideally down to minutes or real-time. And it’s where healthcare is both very difficult and extremely rewarding if you can crack it.

Product Form Factors: Mobile, Desktop, In-Room Devices, and AR

Swyx [00:12:36]: Just to get some baseline on the form factors, because I’ve seen some videos on your website and stuff. You guys talk a lot about ambient AI. Is it primarily on the phone? Is there any other form factor that people get Abridge in? Is there an Abridge room setup where it’s always on? I don’t know.

Jacob [00:12:55]: An Abridge podcast studio.

Janie [00:12:58]: Primary form factor is mobile and desktop. Usually

Janie [00:13:00]: Clinicians are walking in and out of rooms with mobile but at the end of the day, when they’re closing out their notes or wanting to prep for the day ahead, they might use desktop. We have been having a lot of really interesting partnership conversations with a lot of these in-room device companies as you think about the power of multimodality and even more data, as you think about all of what is not captured today. It is fascinating to think about, especially even as we go into building and scaling our nursing product. It’s one where nurses constantly, as they’re walking in to check in on a patient for two minutes or maybe even 30 seconds,

Janie [00:13:43]: Starting an Abridge experience is probably going to take longer than the visit. And so what can we do with in-room devices that are always on starts to raise really interesting and fun product questions.

Swyx [00:13:54]: I was thinking, the way in tech companies we have all these Google Meet

Swyx [00:13:58]: And other things, we might as well set up entire rooms with just Abridge tech.

Chai [00:14:02]: Very much. AR glasses and related form factors are also relevant: how do we bring the information to the clinician in real-time without a screen, while still letting them focus on the patient?

Swyx [00:14:18]: Do you think they want that? I’m skeptical of AR, but I’m curious what you’ve tried.

Chai [00:14:26]: Admittedly, it’s not a near-term product roadmap

Chai [00:14:29]: By any means. I’m being far-fetched.

Jacob [00:14:31]: There’s some sick AR stuff for surgeries.

Swyx [00:14:33]: Really?

Jacob [00:14:33]: When people are trying to visualize, you’re about to make an incision but you want to see what the cut might look like or what the body might look like inside, and they can layer in imaging.

Swyx [00:14:43]: That’s cool.

Chai [00:14:45]: At some point in the future.

Janie [00:14:46]: But a lot of our largest customers, at the largest health systems, are integrating already, and so building into it unlocks a lot of product capabilities.

Health Systems, Buyers, Clinicians, Patients, and Payers

Swyx [00:14:57]: And just to establish the terminology. Sorry, I know I’m asking basic questions somewhat for myself but also for the audience who might be

Swyx [00:15:05]: less integrated. When you say health systems, it’s like the Johns Hopkins, the Kaiser Permanentes.

Janie [00:15:09]: Mayos, the Kaisers of the world.

Swyx [00:15:10]: These are your customers, right? And the outcome that you deliver for them is happier doctors, reduced cost of processing, reduced mistakes. It’s weird in a sense that I feel like there’s also, a secondary customer, the customer of the customer and I don’t know if you — do you think about it that way?

Janie [00:15:28]: The other interesting and complex part of building product is we have our buyers, who are the chief medical information officers

Janie [00:15:39]: The chief financial officers, the CIOs of these large health systems. Our users today are clinicians, but if you think about who downstream is impacted, it’s patients. And so with every product we build, we think about who we’re building for, who the secondary user is, and what that means in terms of experience, security, compliance, and the ROI that we have to make tangible. Like you said, time savings is one of them. But CFOs care about a lot more than just time savings. We have to show that for every dollar you put into Abridge, because you have more compliant documentation or because you have fewer queries coming from your billing team, we save or add real dollars to your bottom line or top line. Those are things that we’re constantly thinking about because of the dynamic across all three sets of users.

Chai [00:16:32]: There’s a whole other axis too with the payers and pharma

Chai [00:16:35]: as well. Connecting all these three big stakeholders in healthcare is

Swyx [00:16:39]: Do the payers ever see your data? Sorry, the payers meaning the insurers, right?

Chai [00:16:44]: Yes.

Swyx [00:16:44]: They also see Abridge data?

Chai [00:16:47]: No

Swyx [00:16:47]: Like the direct integration to you guys

Chai [00:16:48]: They wouldn’t see the raw Abridge data but when you’re working together on something like prior authorization, whatever information they need, we’d communicate to them.

Jacob [00:16:59]: That’s cool. I would love to dig into the AI side. You still have a lot of problems on the AI side. And so maybe to start at the highest level, what’s one of the hardest problems you have to solve in AI at Abridge today?

The Hardest AI Problems: Quality, Latency, and Cost

Chai [00:17:11]: To make things simple, let’s build off the prior auth example. So one thing Janie talked about is, okay, this data is all over the place and there’s this combinatorial explosion of procedures, payer policies and even sometimes different health systems. There can be some cross-product of all of these different considerations you have to take into account. But what’s really hard about this problem is doing it real-time in the conversation. In any AI product, usually the three KPIs you care about are quality, latency and cost. Now, what we’re saying is we want to do this real-time in the conversation, guiding the clinician. How do we do it in a way that does not break the bank? But we also need very intelligent models because you’re working with this cross-product of data and all this context layer as well. So you need high intelligence and high quality because you don’t want the alert fatigue, but you also need to be fast and cost-effective. And so that’s where a lot of clever engineering goes. It’s, okay, without getting into all the details here, can you model these policies in some intermediate representation, or are there other things you can do that make this problem tractable? And of course, the Pareto frontier is always changing, but we are also trying to do this now.
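To make the quality, latency and cost trade-off concrete, here is a small Python sketch that computes a Pareto frontier over candidate configurations. An option is on the frontier if no other option is at least as good on all three axes and strictly better on one. The option names and numbers are made up for illustration; nothing here reflects Abridge's actual models or measurements.

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    quality: float    # higher is better (e.g. an eval score between 0 and 1)
    latency_s: float  # lower is better
    cost_usd: float   # lower is better, per request


def dominates(a: Option, b: Option) -> bool:
    """True if a is no worse than b on every axis and strictly better on one."""
    no_worse = (
        a.quality >= b.quality
        and a.latency_s <= b.latency_s
        and a.cost_usd <= b.cost_usd
    )
    strictly_better = (
        a.quality > b.quality
        or a.latency_s < b.latency_s
        or a.cost_usd < b.cost_usd
    )
    return no_worse and strictly_better


def pareto_frontier(options: list[Option]) -> list[Option]:
    """Keep only the options that no other option dominates."""
    return [o for o in options if not any(dominates(p, o) for p in options)]


# Hypothetical candidates; every value below is invented.
candidates = [
    Option("large_model", quality=0.95, latency_s=4.0, cost_usd=0.050),
    Option("small_model", quality=0.80, latency_s=0.5, cost_usd=0.002),
    Option("mid_model", quality=0.90, latency_s=1.5, cost_usd=0.010),
    Option("slow_mid_model", quality=0.85, latency_s=2.0, cost_usd=0.020),
]

frontier = pareto_frontier(candidates)
print([o.name for o in frontier])
```

In this toy data, `slow_mid_model` drops out because `mid_model` beats it on all three axes. The frontier shifts whenever a new model or an engineering trick changes the numbers, which is the sense in which it is "always changing".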

Model Strategy: Third-Party Models, Proprietary Data, and Medical Conversations

Jacob [00:18:26]: What implications has that had for what you take off-the-shelf and say, “You know what? We don’t need to be world-class at X. We’ll just take this from the model providers or from some infrastructure player,” versus where you say, “No, this is where we spend most of our time focused”?

Chai [00:18:38]: This is the fun challenge in AI.

Jacob [00:18:42]: It changes every three months? So

Chai [00:18:42]: Of course, with the shifting landscape, we try to be extremely thoughtful in predicting the trends of where third-party models are going and where we can uniquely go. Sometimes when you talk about AI models, the assumption is that the models are just going to get infinitely better. But I don’t think... Maybe in the grandness of time you could say that, but within every month, every quarter, there are specific ways they’re getting better. They’re training on a lot more coding data to be better coding agents, for example. And so

Chai [00:19:14]: We have to think about where the unique data is that we’re uniquely training on. Or, to step back a little: a proprietary model brings an advantage to us if it can give higher quality, or lower cost and latency for similar quality, very similar to many other companies. And when we can do that is when we have proprietary data. So, for example, we have on the order of eighty million medical conversations, getting close to hundreds of millions now.

Jacob [00:19:44]: It’s insane.

Chai [00:19:45]: This is a unique data set. And it’s very interesting because this data set is effectively a large part of the trace between the patient and the provider. That’s where the quote-unquote debugging happens in healthcare. We have these traces at scale, as what our CEO has even called an exhaust that comes out of our product. And so when you have these traces, that’s how you can train better agents on certain use cases, whether it’s your transcription and diarization use cases or note generation models, and we can do that much cheaper and faster. But we’re always also working with these third-party model providers. We closely collaborate with them and that’s how we predict where the trends are going. The thing that I think about a lot is that I know the model providers are going to train much more on agentic workflows and so forth, so that’s great, because you get a better agentic harness. But the other thing that’s interesting is that, because a large class of the queries the consumer model providers see is healthcare queries, they might optimize to train on a lot of healthcare data to encode the knowledge in the weights. And this is just a great thing for us as well, where the off-the-shelf models can keep getting better at general healthcare information. So our strategy is that we have a constellation of models: we can use something for this, something for that, and at the end of the day we only care about the best product experience.

EHR as File System: Agentic Workflows and Real-Time Interfaces

Jacob [00:21:07]: And you have overall capabilities improving. I’m curious, as these models get better, is there something you look at and say, “Three months ago, we really couldn’t do that, but God, the latest models really allow us to do it”?

Chai [00:21:19]: So here’s something interesting that I’ve been toying with. This wasn’t super obvious a year ago, but now it’s become clearer and clearer that almost every agent is a coding agent underneath the hood. You give it whatever file system, it can write its own code and so forth. So when you think about healthcare and the use case that we have, you can think of the EHR effectively like a file system. It’s a storage of all this information. There’s a lot of information there that cannot fit into the context window, at least of today’s models, and you want to use that context effectively for all these product use cases we’re talking about. And so if you have better agents that can manipulate data, read that data, and treat it as a file system, as we see they’re going and we know model companies are investing this way, then that very directly benefits us.
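One way to picture the "EHR as a file system" framing is a read-only, path-addressed view over a patient record, so that an agent can list and read only the pieces it needs instead of loading the whole chart into its context window. The Python sketch below is purely illustrative: the `RecordFS` class, the `ls` and `cat` method names, the directory layout and the sample data are all assumptions, not a real EHR API or Abridge's design.

```python
from typing import Any


class RecordFS:
    """Read-only, path-addressed view over a nested dict (a stand-in for a chart)."""

    def __init__(self, tree: dict[str, Any]) -> None:
        self._tree = tree

    def _resolve(self, path: str) -> Any:
        node: Any = self._tree
        for part in (p for p in path.strip("/").split("/") if p):
            if not isinstance(node, dict) or part not in node:
                raise FileNotFoundError(path)
            node = node[part]
        return node

    def ls(self, path: str = "/") -> list[str]:
        """List the entries under a directory-like path."""
        node = self._resolve(path)
        if not isinstance(node, dict):
            raise NotADirectoryError(path)
        return sorted(node)

    def cat(self, path: str) -> str:
        """Return the contents of a leaf entry as text."""
        node = self._resolve(path)
        if isinstance(node, dict):
            raise IsADirectoryError(path)
        return str(node)


# Entirely synthetic sample record.
chart = RecordFS({
    "allergies": {"penicillin.txt": "Reaction: hives (reported 2019)"},
    "labs": {"2024-03-01_a1c.txt": "HbA1c 6.1%"},
    "notes": {"2024-03-01_visit.txt": "Knee pain for two months."},
})

print(chart.ls("/"))
print(chart.cat("/allergies/penicillin.txt"))
```

An agent given just these two tools could browse `labs` or `notes` on demand, which is the same navigation pattern coding agents already use on source trees.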

Swyx [00:22:09]: Yeah. Okay, cool. Again, just establishing basic things. But we’re going back to the model stuff. I’m really interested in double-clicking more on the real-time element, which is pretty important for both of you. Is real-time just batches of every one minute, every five minutes? Is that how we do it? Or is there something more native, genuinely real-time in the sense that OpenAI has a real-time API or Gemini has a real-time API?

Chai [00:22:35]: Yeah. So today it is more on a batch basis, but there are interesting

Chai [00:22:41]: Prototypes that we have that are still not fully voice in, text out in that sense. But can you trigger your models, your agents or agentic workflows, at the right times in the conversation?

Chai [00:22:58]: And so you can imagine different techniques to bring this latency down, and you want to bring the feedback loop down as much as you can. And so there’s a lot of clever engineering there without fully... Maybe one day we’ll do full voice in and text out, train a model to do something like that.
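One way to picture "triggering at the right times" rather than on a fixed timer is a small buffer that releases a batch when either a cue word appears or enough new speech has accumulated. This is a sketch under stated assumptions: the `ConversationTrigger` class, the keyword list, and the word threshold are all hypothetical, not the policy described in the episode.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ConversationTrigger:
    """Decide when to run an agent over a live transcript.

    Hypothetical policy: fire when an utterance contains a cue word, or
    when enough new words have accumulated since the last run.
    """

    keywords: tuple[str, ...] = ("allergy", "refill", "referral")
    max_words: int = 50
    _pending: list[str] = field(default_factory=list)

    def feed(self, utterance: str) -> Optional[str]:
        """Add one utterance; return the batch to process, or None."""
        self._pending.append(utterance)
        text = " ".join(self._pending)
        cue_hit = any(k in utterance.lower() for k in self.keywords)
        if cue_hit or len(text.split()) >= self.max_words:
            self._pending.clear()
            return text
        return None
```

The word-count rule is the plain batch mode; the cue rule is what shortens the feedback loop for the moments that matter.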

Swyx [00:23:15]: You do — People don’t want voice in voice out?

Chai [00:23:18]: Now we aren’t creating experiences that are, during the conversation, inter — It’s almost like

Swyx [00:23:25]: Might be too disruptive

Chai [00:23:26]: Too disruptive. Who knows, maybe eventually you could have full voice agents once we improve the quality and the comfort with the technology. But right now that change is much more gradual and it’s more text-focused, text out.

Janie [00:23:42]: And so much of what our product is currently trying to do is allow a clinician to focus on their patient. Maybe at some point, but right now patients and clinicians don’t want a third voice, at least a literal voice, in that room. And so how do we be there with all the context and information ready at hand when there’s the right moment?

Personalization: Individual Doctors, Specialties, and Health Systems

Jacob [00:24:03]: Janie, one thing I’m curious about is how you think about personalization in the product. I imagine every doctor is a special snowflake in their own way, has their own way they like to do things. There are probably a bunch of different approaches you could take to doing that, both within the model layer itself but then also just with clever prompting or engineering. How do you

Jacob [00:24:20]: Deliver on that?

Janie [00:24:21]: It’s such a good question. Personalization is massive for us. We think about personalization at three levels. The first is at the individual level, the second is at the specialty level and the third is at the health system or organization level. To your point, there are a lot of individual preferences. When a note is produced, it is almost a reflection, one that is deeply personal, of a doctor’s work and how they give care. And so do they have preferences on things like style? They might want bullets versus paragraphs, really concise versus comprehensive. They also might have phrases that they really like to use or templates that they want every note to follow. And we see it in our feedback all the time: “We want two spaces in between sentences or I refuse to use this tool.” And so that’s something that we’ve had to build in. And the tricky part is how do you make sure that stylistic preferences don’t interfere with accuracy and quality, and that’s something that we’ve really had to refine and hone over time. Second is at the specialty level. A cardiologist’s note or workflow is going to look very different from a dermatologist’s.

Jacob [00:25:32]: I assume cardiology notes are the highest stakes for you guys, given your CEO is a cardiologist.

Jacob [00:25:36]: It’s “Oh my God, make sure we get this one.”

Janie [00:25:37]: Shiv, our CEO, is still a practicing cardiologist. He rounds once a month. And so he’s our first call when we want quick and easy user feedback too.

Janie [00:25:46]: But specialties require a lot of personalization, both in terms of what the product looks like, so we make sure that as new users onboard, we catch that and the product reflects it. But also on the back end, evals at the specialty level are hard-earned to calibrate and get. What does a really great dermatology note look like? What makes it complete? What makes it compliant and billable is very different than for a primary care doctor. And so it’s not just about what the product experience looks like, but on the back end, tuning and really deepening our understanding for the specialists. What does great output look like? And that’s a problem that we need to calibrate internally, externally, online, offline. It takes lots of cycles but is necessary in a high-stakes environment. And then at the health system level, for products like clinical decision support, you have health systems who’ve spent years or decades refining their best practices and they want to know, “Hey, we love your clinical decision support product, but how do we embed our own hospital guidelines into it to inform clinicians before, during or after a visit what best practices should look like?” And as you think about deepening moats as well, when health systems trust us with that data and allow us to productize it directly into the clinical workflow, it makes us a really great partner to health systems who want to build something that truly meets their needs and their practice guidelines.
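The three levels Janie describes (health system, specialty, individual) can be sketched as layered settings where the more specific layer wins. The function below is a hypothetical illustration: the `resolve_preferences` name, the keys, and especially the `locked` list (a way to stop style choices from overriding anything that touches accuracy or billing) are assumptions, not a description of the product.

```python
def resolve_preferences(org: dict, specialty: dict, individual: dict) -> dict:
    """Merge three preference layers; more specific layers override.

    Keys named in org["locked"] are treated as organization guidelines
    that specialty and individual layers cannot change.
    """
    locked = set(org.get("locked", []))
    merged = {k: v for k, v in org.items() if k != "locked"}
    for layer in (specialty, individual):
        for key, value in layer.items():
            if key in locked:
                continue  # stylistic layers must not override locked settings
            merged[key] = value
    return merged
```

With an organization default of paragraphs and a locked billing setting, a clinician who prefers bullets gets bullets, while an attempt to switch off the locked setting is ignored.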

AI Slop, Memory, and Product Data Flywheels

Chai [00:27:23]: And I want to add onto that. The clinical documentation problem is very similar to AI writing that doesn’t feel like your own, and we call that slop. One framing of slop, the way I describe it, is AI without context. But we have all that context, and the clinicians can have it and can guide it. And so part of the other interesting exhaust for us is memory, which is one of these new systems of record,

Chai [00:27:49]: Almost.

Janie [00:27:50]: And we also have all the edits people make on our product, and when you think about a data flywheel and how we get better over time, that becomes a really powerful mechanism for going deeper in personalization.

Jacob [00:28:04]: It’s interesting. I love this idea of working with systems on the guidelines they built up over a long time. I feel like for so many of the best AI app companies today, the question is: How do you take the expertise that a law firm or a bank has built up over many years and then add that as context, and also as a special sauce, over an AI tool? And so it seems like y’all are really doing that very effectively.

Janie [00:28:24]: We’re now starting to have our customers ask, “What are other customers doing?”

Janie [00:28:28]: “And how are they doing it?”

Janie [00:28:30]: And as we think about having visibility across such a large set of care being delivered right now, that’s a really interesting place we could also partner.

Swyx [00:28:40]: I’m just curious. This may be a nothing question, but how different are health system guidelines from each other? Don’t they all converge to the same thing? And if not, where do they differ?

Chai [00:28:52]: At a really high level, they’re going to talk about very similar things, but the difference is probably more in the details. “Oh, you should refer to specialists only when XYZ conditions are met,” or so forth, and maybe different organizations have different practices and guidelines around that. So at a high level, they’re talking about similar things, but the details are, of course, what shapes the context and the decisions you make.

Swyx [00:29:15]: And this all goes into the context engine and it might affect the notes but maybe not.

Chai [00:29:21]: For these local pathways, we’re definitely thinking about it a little more for our clinical decision support product.

Chai [00:29:26]: So yeah.

Swyx [00:29:27]: Which is your stuff, yeah.

Swyx [00:29:28]: And then the memory, which you raised, just tell us more about that. What have you tried in memory? What’s the structure of the memory? What works? What doesn’t work?

Chai [00:29:38]: There are, of course, many different ways you could do memory: can you bake it into the model weights, or can you do it in some external store? For us, what’s interesting is that the models are rapidly changing, whether in-house or third-party, so with baking it into the model weights, you sometimes worry that it could be a little throwaway. And so you need to find a way to decouple the problem, the preferences, from the underlying models. The thing that’s easiest to start with right now, and that we’re excited about, is having a separate store for memory, where you have, for example, a memory sub-agent that’s working in the background, figuring out which parts of the clinician’s actions are important to remember for the long term. And then you can also imagine background jobs that are running and collating these memories, similar to sleep, of course, and to patterns other products use as well. That’s learning over all the action data we have: again, note edits, the conversations they had and the actual transcripts.
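A minimal sketch of the "separate store plus background consolidation" idea, assuming the simplest possible signal: repeated note edits. The `MemoryStore` class, its method names, and the `min_count` rule are hypothetical stand-ins for whatever the memory sub-agent actually does; the point is only that preferences live outside any model's weights and survive a model swap.

```python
from collections import Counter


class MemoryStore:
    """External preference store, kept separate from any model weights."""

    def __init__(self) -> None:
        self._edits: dict[str, Counter] = {}

    def record_edit(self, clinician_id: str, before: str, after: str) -> None:
        """Log one observed edit, e.g. a phrase the clinician replaced."""
        self._edits.setdefault(clinician_id, Counter())[(before, after)] += 1

    def consolidate(self, clinician_id: str, min_count: int = 2) -> list[str]:
        """Background job: keep only edits seen at least `min_count` times."""
        counts = self._edits.get(clinician_id, Counter())
        return [
            f"Prefer '{after}' over '{before}'"
            for (before, after), n in counts.most_common()
            if n >= min_count
        ]
```

The consolidated strings are plain text, so they can be handed to whichever model is current as part of its context.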

Evals: LFD, LLM Judges, and Clinical Safety

Jacob [00:30:40]: What about evals? How in the world do you... It is such a complex product surface area. We would love to hear you riff on that and also how has that evolved? I’m sure you’ve gotten better at it, so any learnings along the way.

Janie [00:30:50]: From an evals perspective, from day one when we build any new product or feature, we think about what good looks like. There are table-stakes things like clinical safety, but then you start to get deeper into what good quality looks like. When you go into something like our core product, there’s stuff like style and completeness, and there are things like whether this note becomes something that can be billable, which is very high stakes for a health system. We have a number of ways in which we get confidence in this. We have internal, in-house clinicians who do what we call an LFD process to give us our very first pass at whether this is or isn’t a good enough output: look at the effing data.

Jacob [00:31:41]: LFD?

Chai [00:31:42]: That’s why I was smiling. I was “Is Janie going to mention what it stands for?”

Jacob [00:31:46]: I was not... There’s like a million acronyms.

Jacob [00:31:48]: How am I supposed to know that I don’t? So “Oh yeah, of course, an LFD.”

Swyx [00:31:51]: I’ve never heard of LFDs.

Chai [00:31:53]: It’s Abridge for sure.

Janie [00:31:55]: I got through three days and then I had to ask someone.

Janie [00:31:58]: I thought it was just me that didn’t know

Janie [00:32:01]: It’s our internal process.

Swyx [00:32:02]: But “look at the data” is a meme in ML, ‘cause you tend to not look at it. You just want to look at number go up.

Chai [00:32:06]: Exactly.

Swyx [00:32:07]: But yes.

Janie [00:32:08]: So we make sure we look at the data, and then as we think about all of the components of good output, we, one, create LLM judges across all of these, and we make sure, with annotated data and either internal or external evaluators, that these judges are calibrated. And then depending on the stakes, we also work with in-house and third-party evaluators across all of these before we ship any big change. And the goal, in terms of evolution, is how do you go from this process taking months down to weeks, down to days? Some of it is a true science and ML problem. A lot of it is also just hard operational work. Have you planned ahead in terms of what you need? Have you really optimized the capacity that you need across all of the different specialties? Have you gotten a really good sense of which third parties are great to work with for which use cases? This takes a lot of domain expertise and lots of mistakes and errors in figuring that out. And so as much as it is an ML problem, so much of it has also been operational gains that are hugely important, where domain-specific expertise is everything.
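"Calibrating" an LLM judge against annotated data usually comes down to measuring how often the judge and the human annotators agree. One common statistic for that is Cohen's kappa, which corrects raw agreement for chance. The sketch below assumes binary pass/fail labels; the episode does not say which agreement metric Abridge uses, so treat this as one reasonable choice rather than their method.

```python
def cohen_kappa(judge: list[int], human: list[int]) -> float:
    """Agreement between two binary (0/1) label lists, corrected for chance."""
    if len(judge) != len(human) or not judge:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(judge)
    observed = sum(j == h for j, h in zip(judge, human)) / n
    p_judge, p_human = sum(judge) / n, sum(human) / n
    # Probability that both raters agree if each labelled at random
    # with their own observed pass rate.
    expected = p_judge * p_human + (1 - p_judge) * (1 - p_human)
    if expected == 1.0:
        return 1.0  # both raters gave the same single label to every item
    return (observed - expected) / (1 - expected)
```

A kappa near 1 means the judge tracks the clinicians closely; a kappa near 0 means the judge agrees with them no more often than chance would predict, however high the raw agreement looks.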

Specialty-Level Evaluation and Progressive Rollouts

Jacob [00:33:23]: But it’s funny, ‘cause I feel like people talk about healthcare like it’s one giant market and the reality is

Jacob [00:33:26]: It’s, dozens and dozens of sub-markets. And so it feels like in your evals you have to build that up across the board, probably.

Swyx [00:33:34]: And is specialization the primary cardinality at... That’s the word that comes to mind.

Janie [00:33:40]: Sometimes, depending on the product or the use case. If we’re making a note improvement or feature for a particular specialty, definitely, but we have products that are for nurses. We have products that are really aimed at making the document or the output a lot more billable. And so we’ll want to work with coding teams and not necessarily clinicians. And so like

Jacob [00:34:05]: Coding meaning healthcare coding.

Janie [00:34:06]: Yes. Yes.

Jacob [00:34:07]: Not

Chai [00:34:07]: Yes. I see you.

Swyx [00:34:07]: Other kinds.

Janie [00:34:09]: But is this output proportional to the work that was delivered? Is there sufficient documentation to justify the amount that a health system may end up charging? And so, specialty sometimes, but also domain, which is very different across all of the different products that we’re working on. And building out that network is not easy and is where a lot of our operational investments have gone.

Chai [00:34:35]: And I see a lot of analogies to self-driving cars here, where part of it is that we really want progressive rollout of features to test in the real world: is this useful? Is this going to work? One big difference compared to past lives is that before, I’d build a product, maybe I’d alpha it and then I’d GA it the next week, ‘cause I’m like, “Go, move fast, ship,” and whatnot. But the mentality here is that I want to make contact with reality as quickly as possible, but I want a progressive rollout. Because as large as I can make an offline eval set, I want its distribution to match the real-life distribution. And over time, by rolling out early, similar to how Waymo has a tagline, “The world’s most experienced driver,” another thing that can at least linearly increase for us is the size of our evaluation, both offline and online, and it all feeds back.

Janie [00:35:25]: Something that’s been earned over time, speaking of evolution, is just the trust we’ve gotten with customers. Historically, when a lot of these health systems bring on new vendors, their release cycles are quarterly, sometimes twice a year. We’ve gotten our customers onto monthly release cycles, which is pretty fast for health systems. But what has been more exciting over the last, call it, few quarters is that a subset of our customers have said, “We want to innovate with you. We trust you,” and we have a pretty decent chunk of our customers who say, “We’ll develop with you outside of these monthly release cycles. We have a higher tolerance. We know that the stakes are very high but we want to be the first ones using these products, giving you feedback.” And so for a pretty substantial set of our customers, we’ve been able to ship in this gradual way before GA. Something we talk about a lot internally is that trust is earned in drops and lost in buckets, and so we still can’t do what I used to do when I worked at Loom. We had 30 million users. I’d just be rolling out experiments left and right. The bar is still quite high for iterative rollout, but because of the trust we’ve earned, we’re able to learn at pretty high volume very quickly.
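A progressive rollout needs a stable way to decide who sees a feature at 5%, then 25%, then GA. A common pattern, sketched here as an assumption rather than anything described in the episode, is to hash the customer and feature name into a fixed bucket so that raising the percentage only ever adds customers. The `in_rollout` function and its arguments are hypothetical.

```python
import hashlib


def in_rollout(customer_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a customer in or out of a staged rollout."""
    digest = hashlib.sha256(f"{feature}:{customer_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket in [0, 100)
    return bucket < percent
```

Because the bucket depends only on the two names, a customer who opted in at an early stage stays in as the percentage grows, and different features get independent cohorts.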

Privacy, HIPAA, and De-Identification

Swyx [00:36:45]: Your scale is still pretty huge.

Swyx [00:36:47]: We were going to go into scale in a sec. One thing I wanted to follow up on from evals, which, again, just coming from a generalist engineer point of view, just thinking through what people would be scared of in doing this, is the privacy and HIPAA

Jacob [00:37:00]: Elements of this. I have zero experience in that. What do you have to do? What is surprisingly not that bad?

Chai [00:37:06]: So one thing that’s really important here from a compliance perspective is that any of the data we use needs to be de-identified, any real-world data we use as a basis for the online eval sets we’re learning from. And there are very clear government guidelines on what counts as PHI. And so we’ve even built models that can take, for example, a clinical transcript and remove all the key PHI indicators, so you have a scrubbed, de-identified version. And one thing that’s important is that first you’ve got to get confidence in that model in the first place and prove that out, because now you have multiple probabilistic systems on top of each other.

Chai [00:37:46]: But once you have that, then you can train on it, use it for evaluation and so forth, provided you have, and this is one of the cool things you can do from a business side, the right data contracting with your partners.
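To show the shape of a scrub step, here is a deliberately simple rule-based stand-in. Chai describes trained models for this; the regular expressions below are an assumption swapped in for illustration, cover only three identifier formats, and would not meet any de-identification standard on their own. The `scrub` function and `PATTERNS` list are hypothetical names.

```python
import re

# Toy patterns only. They cover a small fraction of identifier types and
# are not a substitute for a validated de-identification model.
PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
    (re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"), "[PHONE]"),
    (re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"), "[DATE]"),
]


def scrub(text: str) -> str:
    """Replace matched identifiers with fixed placeholders.

    No mapping from placeholder back to the original value is kept,
    so the transformation cannot be reversed from the output.
    """
    for pattern, placeholder in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text
```

Using the same placeholder for every match, and storing nothing alongside it, is what makes the output one-way in the sense Jacob asks about next.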

Jacob [00:37:57]: Is the anonymization one way? Once it’s done, you cannot undo it? Or is there someone

Chai [00:38:01]: Yes

Jacob [00:38:02]: Who holds the master key that can... Yeah, okay. So it’s one way.

Chai [00:38:05]: It’s one way. Yeah.

Jacob [00:38:06]: That’s how it works. I just wanted to... Because, there’s a lot of this, learning from feedback and everything that, you would want to debug more but you can’t because you just physically don’t allow yourself to.

Janie [00:38:17]: Some of it’s also written in our customer contracts in terms of who can or can’t access PHI data, how long do we retain it,

Jacob [00:38:27]: Very good

Janie [00:38:27]: Before it gets de-identified. And so we have a pretty high bar for who can access that PHI data, just to make sure that we always respect our customer data and privacy. But that’s something that we partner with our customers on too, to make sure that as we want full, as close to precision as possible in that quality

Janie [00:38:48]: We can still use it.

Jacob [00:38:50]: But it’ll be fascinating to see how that space evolves. Because you think about, I used to work at a company that did a lot of healthcare data in the cancer space, and if you asked the average cancer patient, “Hey, do you want other patients to be able to learn-”

Chai [00:39:03]: Take it.

Jacob [00:39:03]: “... Learn from your experience?”

Chai [00:39:04]: Take it all.

Jacob [00:39:05]: They’re “Please.”

Jacob [00:39:06]: “I’d love, nothing more than for other people to be able to learn from

Jacob [00:39:10]: The experience that I had.” And so in the past it was a lot harder to do that learning. But with this technology, that might really be practical and so it’ll be fascinating to see how that continues to evolve.

Chai [00:39:21]: There’s so much in our data set of 100 million conversations.

Chai [00:39:26]: You can imagine things like insights that you can give to the clinician. How could you have reacted to this? Coaching, or insights around which treatments are effective. Because you have, again, this data source that was never captured before but that’s where intuition or experience is created from, going back to this idea that the conversation is the source of truth.

Operating at Scale: Reliability, Cost, and Token Efficiency

Jacob [00:39:46]: Back to the 100 million conversations, I feel like you have this insane scale that maybe only a few other AI app companies have and everyone else dreams of. So not everyone has had to confront this yet but maybe just talk about some of the challenges of operating at that scale and what, our listeners have to look forward to if they ever get to this level of scale.

Chai [00:40:05]: At larger and larger scale, of course there’s general infrastructure reliability. In any given startup, you’re building the plane while it’s flying, so there’s some notion of that. But what gets interesting on the AI and ML side for sure is that as you get to more and more scale, one, you have the data to first and foremost do this. But you also start thinking about costs and infrastructure in a whole different way at scale versus in a prototype.

Chai [00:40:34]: You can use the most expensive model, you can burn as many tokens as you want but when you’re doing 100 million conversations

Jacob [00:40:41]: Token max on leaderboards are less upsetting than that context.

Chai [00:40:45]: When you’re doing that, that’s where it comes in that we have the data and we also have the team that’s able to post-train on it, and you can optimize for efficiency, especially in areas where you believe there’s less quality headroom left and you don’t expect the off-the-shelf models to go that way, such that you want to do efficiency maximization in terms of compute and tokens.
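The scale argument is easy to see with back-of-the-envelope arithmetic. The helper below just multiplies volume by tokens by price; the token count and per-million-token prices used in the note after it are made-up round numbers for illustration, not figures from Abridge or any provider.

```python
def inference_cost_usd(
    conversations: int,
    tokens_per_conversation: int,
    usd_per_million_tokens: float,
) -> float:
    """Total token spend for a given volume, in US dollars."""
    total_tokens = conversations * tokens_per_conversation
    return total_tokens * usd_per_million_tokens / 1_000_000
```

With those hypothetical inputs, 100 million conversations at 10,000 tokens each cost 10 million dollars at 10 dollars per million tokens and 1 million dollars at 1 dollar per million tokens. At prototype volume the gap between the two prices is pocket change; at this volume it is the reason to post-train a smaller model.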

Jacob [00:41:08]: I feel like you guys live in the future in some way where most use cases today are really just in use case discovery mode, where it’s “God, I really hope I can find something that can get to scale,” and so you’re always going to use the most powerful model. And then the few things that do get to this level of scale, you start to do those optimizations.

Chai [00:41:22]: It’s a natural trajectory where it’s like zero-to-one, we’re not talking about any of these optimizations.

Chai [00:41:26]: But when maybe we’re in the one-to-100 or so forth, then we’re in optimization mode and, what works out really well is you’ve got all this data from zero-to-one that lets you do this.

What Comes Next: The Conversation as the Shared Healthcare Platform

Jacob [00:41:36]: That’s fascinating. I feel like one thing that’s so interesting about the Abridge footprint is that you’re in the doctor-patient visit in real-time. I always like to say, there’s like probably 50 years’ worth of product you could build on top of that. What gets each of you, I don’t know, what are you most excited about building, either in the short term or medium term or even, long down the line?

Janie [00:41:53]: Something that I get really excited about is that the same conversation can serve so many stakeholders. If you think about the conversation, a doctor needs to know what is the documentation, how do I make sure that this fully represents the care I gave? A patient needs to know, “What the heck just happened? This was really overwhelming. What are my next steps?” A payer needs to know, was this the proper and appropriate care given? A pharma company might want to know why isn’t this drug being properly used, or is there a good candidate for this clinical trial that I’m about to run? And where I get excited is that our product, our platform and our infrastructure can be the same across all of those things. What are today separate, very expensive, complex systems that serve each one of these stakeholders in very different ways can start to collapse into a singular platform that enables not just more efficiency across the board but also better outcomes for everyone. And all of us experience healthcare in probably very painful ways, and knowing that there is a world in which we can simplify a lot is really exciting to me, and it all starts with the conversation.

Chai [00:43:15]: It’s interesting. I think of it as very similar to going back to the KPIs that any AI product cares about. How do you increase quality of care? How do you reduce latency to care? And how do you reduce costs? Which is a huge thing in healthcare

Jacob [00:43:28]: They call it the triple aim in healthcare.

Chai [00:43:30]: But it’s very similar to building AI products, and the thing that really excites me is the latency piece. We talked about one example earlier, prior authorization: can you reduce the latency to care? But you can imagine so much more. As soon as a lab value gets updated, do you have a background agent that kicks off and uses all the context to say, “Oh, hey, the patient should do this next,” for example? And it flags that to the clinician, who’s always in the loop, while reducing that latency to care. And then you can imagine, this is much further down the road, even connecting that directly to the patient and the consumer. And so how can you build a bridge to all of these things?
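The "lab value updates, background agent kicks off" pattern is event-driven: a handler subscribes to an event type and returns a flag for a human to review. The sketch below is a toy version under stated assumptions. `EventBus`, `out_of_range_agent`, and the payload fields (`test`, `value`, `ref_low`, `ref_high`, `patient_id`) are hypothetical, the reference range is supplied by the caller, and the handler only produces a message for the clinician rather than acting on its own.

```python
from collections import defaultdict
from typing import Callable, Optional

Handler = Callable[[dict], Optional[str]]


class EventBus:
    """Minimal publish/subscribe dispatcher for background agents."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> list[str]:
        """Run every handler for this event and collect non-empty flags."""
        results = (handler(payload) for handler in self._handlers[event_type])
        return [r for r in results if r is not None]


def out_of_range_agent(lab: dict) -> Optional[str]:
    """Flag a result that falls outside the range supplied with it."""
    if lab["ref_low"] <= lab["value"] <= lab["ref_high"]:
        return None
    return (
        f"Flag for clinician review: {lab['test']} = {lab['value']} "
        f"(patient {lab['patient_id']})"
    )
```

Subscribing `out_of_range_agent` to a `"lab_result"` event means the check runs the moment a result lands, instead of waiting for someone to open the chart.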

EHR Partnerships and the Clinical Intelligence Layer

Jacob [00:44:10]: Very cool. The connections piece is just an ever-growing thing. And one of the key partners is the EHR and I wonder what that relationship is like. Will they, look at this as, something that is valuable enough that they want to own someday?

Janie [00:44:29]: On our partnerships with the EHRs, we know that we have to be extremely close partners with all the EHRs we work with. Being able to pull and push all of the data into the right places is table stakes. If we can’t do that, health systems don’t want to use us. The second thing, and the reality of today, is that clinicians spend a lot of their days in the EHR. So much of what allowed us to win in the largest health systems was very close, direct partnerships with some of the largest electronic health record vendors that allowed us to pull and push data with APIs that weren’t ready out of the box. And clinicians want to save clicks. Anytime we introduce a new product that adds two clicks to their day, they’re like, “We’re not going to use it.”

Janie [00:45:21]: They have 15-minute back-to-back appointments with their patients. They’re spending hours during pajama time doing documentation. Every second and every minute counts, and so we really think about being deeply integrated into the EHR as also table stakes for getting real usage and adoption. And with anything that we build or introduce, we talk about “earn the right” internally a lot, which is that we have to provide so much value or save so much time that people will use us. Those are the two things that are close to us: we know that the product won’t be used unless it is deeply interoperable.

Chai [00:46:01]: And strategically, to your point, it’s what does the EHR want to own versus us? EHRs are really focused on the clinical workflows and so forth, but some of the things that we’re talking about here, I do think these are traditionally outside of that domain, where it’s, oh, connecting payers and providers together with provider policies, or the clinical trial matching, as Janie brought up. And so we position ourselves as building this entirely new clinical intelligence layer across, again, providers, pharma and payers.

Chai [00:46:33]: And so that’s a whole different ballgame that we try to play

Chai [00:46:36]: In combination with them.

Jacob [00:46:37]: But it’s like a different layer of scope.

Healthcare AI Regulation, Technical Depth, and What Changed Their Minds

Jacob [00:46:39]: I’m curious, you are both relative newcomers to healthcare. There are lots of futuristic healthcare AI takes of “Oh, everything will look different.” Now that you’ve been in healthcare for a bit and you live at the edge of AI, what have you changed your mind on as you think about what healthcare looks like in ten, 20 years? Any updates to your mental model from the time being close to the problems?

Chai [00:47:02]: One thing that I

Chai [00:47:04]: Was hesitant about before, and it’s a common thing that people ask me about when I’m trying to recruit engineers, is definitely, oh, healthcare, heavily regulated space. And it is, rightfully so. You want to keep the patients, at the end of the day, safe. But one of the interesting things that surprised me coming to the company is how many really favorable regulatory tailwinds there are as well. The government really wants interoperability between all these systems that we talked about so that agents can access this information. Just in January, the FDA released updated guidance on clinical decision support, which is what I work on. They used to have guidance from like 2022 that required you to mention all these options and do all these other things, but the update is written in a very forward-looking way. And so for me, what’s been really cool is that there’s this very special moment in AI in general, we all know that, but there’s also a special regulatory moment in healthcare.

Janie [00:48:05]: One thing I would call out is for the very reasons things are higher stakes or, potentially considered more difficult in healthcare, it’s where some of the hardest AI problems will get solved first, just because the bar is so high. When I first joined, I was “Oh, this is where we’ll be on the tail end of where, all of the AI innovation will be able to be applied.” But when you think about, zero error evals or multi-step workflows that have really low tolerance, a lot of the innovation will happen here just because we have to or else we can’t ship.

Jacob [00:48:42]: ‘Cause like in other domains, you’d much rather just solve the 80%-is-good-enough problems first

Janie [00:48:46]: 80/20 doesn’t work here

Chai [00:48:48]: And building off that, traditionally, there was a bit of stigma that, oh, healthcare companies are not that interesting from a technical perspective or I’ve seen that or faced that myself. But these are really hard and fun problems from a pure technical perspective beyond just the impact. How do you bring the latency of this thing down and make it really high-quality?

Reducing Latency: Clinical Workflows, Agents, and Implementation Reality

Jacob [00:49:07]: How do you bring the latency of things down?

Chai [00:49:10]: Yeah. So okay, let’s answer the latency question. Hopefully this isn’t too redundant with some of the things I’ve said earlier, but with any latency, you have to ask what is really your bottleneck. In a lot of workflows, sometimes it’s the model itself. And so that’s where our data flywheel, our post-training team and so forth come in: can you make the models far more efficient? So that’s one aspect of latency. But there are whole other aspects of latency where, on top of that, if you use a constellation of different models, it’s like thinking fast and slow. Can you first use a cheap, fast model that triages and hands off to a larger model where you get more intelligence and so forth, and so all these

Chai [00:49:56]: Clever tricks to make it work.
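The "thinking fast and slow" triage can be sketched as a two-stage cascade: ask the cheap model first and escalate only when it reports low confidence. Everything below is an assumption for illustration. The `cascade` function, the confidence threshold, and the two stub models stand in for real inference calls and say nothing about which models Abridge routes between.

```python
from typing import Callable, Tuple


def cascade(
    query: str,
    fast_model: Callable[[str], Tuple[str, float]],
    slow_model: Callable[[str], str],
    threshold: float = 0.8,
) -> Tuple[str, str]:
    """Try the cheap model first; escalate only when it is not confident."""
    answer, confidence = fast_model(query)
    if confidence >= threshold:
        return answer, "fast"
    return slow_model(query), "slow"


# Stub models standing in for real inference calls.
def fast_stub(query: str) -> Tuple[str, float]:
    confident = len(query.split()) <= 5  # pretend short queries are easy
    return f"fast:{query}", (0.95 if confident else 0.4)


def slow_stub(query: str) -> str:
    return f"slow:{query}"
```

The latency win comes from the fraction of traffic the fast path can absorb, so the threshold is the knob that trades speed against how often the larger model gets a second look.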

Chai [00:49:58]: And by the way, we also realize that the Pareto frontier is changing, and so these tricks may not get us to where we want to be in five years, but we need them if we want to build a useful product right now.

Jacob [00:50:11]: Should we go to the quick-fire or you want to ask more about Abridge? We can stuff everything that’s not Abridge into the quick-fire

Swyx [00:50:16]: I don’t mind. I feel like Janie was on the topic of more long-tail stuff, which is

Swyx [00:50:21]: Not the 80/20 thing, and that really matters. And if you have any tips or cool stories or just general approaches that have worked for you, that’s interesting to dig into.

Janie [00:50:32]: One of them is even just how we staff our teams looks different than a traditional software engineering team, I’d say.

Swyx [00:50:40]: Let’s go.

Clinician Scientists, Edge Cases, and Evals at Scale

Janie [00:50:41]: We have a bunch of folks with different roles who are clinicians, and so we have this role called the clinician scientist. I heard one of our leaders refer to them as mutants recently. They are people who’ve had clinical backgrounds, so MDs typically, who are also deeply technical, somewhere on the spectrum from a full-stack engineer all the way to an extremely scrappy prompter. Having each of these people embedded within our teams instantly raises the bar for everything that we build, because not only are they determining whether this product is clinically useful, they’re deeply embedded in our whole evals process. And so when we talk about LFDs, when we talk about what our actual evaluation criteria are, you don’t want Chai or me creating those, because we don’t have clinical backgrounds. That is probably unique to Abridge but has been game changing. And when you think about where the puck is going, with people who have clinical backgrounds and are technical, and where AI tools are going, they just become more and more critical and like the killers of the team. And so that’s one.

Janie [00:51:53]: And then the second is just the scale at which we do evals to catch that long tail up front, before anything ever gets into production. That is something we’ve really started to fine-tune, both in terms of scale and in terms of knowing when we need several hundred versus several thousand offline responses, what helps us make that quick decision, and how to make this less of an art and as much of a science as possible. But that’s also been something we’ve had to tune over time.
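As a rough illustration of the “several hundred versus several thousand” question, one textbook way to size an offline eval set is to ask how many independent samples are needed to see at least one instance of a failure mode that occurs at a given rate. The sketch below is an assumption for illustration only, not Abridge’s method; the function name `samples_to_observe` and the example rates are hypothetical.

```python
import math


def samples_to_observe(failure_rate: float, confidence: float = 0.95) -> int:
    """Smallest n such that at least one failure shows up in n independent
    samples with probability >= confidence.

    Derivation: P(at least one failure) = 1 - (1 - p)^n >= c
                => n >= ln(1 - c) / ln(1 - p)
    """
    if not 0.0 < failure_rate < 1.0:
        raise ValueError("failure_rate must be strictly between 0 and 1")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - failure_rate))


if __name__ == "__main__":
    # Hypothetical failure rates: 1 in 100 versus 1 in 1,000 responses.
    for rate in (0.01, 0.001):
        print(f"rate={rate}: need about {samples_to_observe(rate)} samples")
```

Under these assumptions, a failure that appears in 1% of responses needs a few hundred samples to surface at least once, while a 0.1% failure needs a few thousand, which is one way the hundreds-versus-thousands decision can be made less of an art.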

Swyx [00:52:27]: And you have partners who opted in to give you those evals.

Janie [00:52:31]: So we work either internally or with third parties for offline evals, and then we have customers who also agree to give us a lot of data, whether it’s thumbs up or thumbs down or “choose this or that,” to get us as close to fully confident as possible.

Swyx [00:52:51]: The term that comes to mind is, like, active learning on things where you’re weak.

Swyx [00:52:53]: I feel like it’s a lost art, a lot of the polish that goes into doing something like this.

Janie [00:53:02]: Really.

Chai [00:53:03]: Hundred percent.

Lessons from Glean: Technical Foundations and AI App Infrastructure

Jacob [00:53:04]: Maybe, on a totally unrelated note, Chai, you had a very storied run at Glean before heading over to Abridge. It was one of the early AI app success stories. Reflecting back on that experience, what do you think Glean got most right, maybe most wrong? Curious for your reflections.

Chai [00:53:24]: I attribute Glean’s success really to very strong technical foundations that have stood the test of time. It started with a known problem: finding information at work is hard. The best technology at the time was to build really high-quality search. A lot of times enterprise search startups failed because the quality wasn’t good enough, but the learning that people took away from that was, “Oh, enterprise search is not good enough.” Quality really changes whether something can be useful or not. Similarly, people may have concluded, “Oh, Alexa, voice assistants are not that useful.” But when you have quality, things can change the game. And so Glean’s early foundations, bringing in people who had built search at Google, the best place to have ever built search, and being really creative, with a very concrete problem to solve and the right technical backgrounds, laid the foundation for all of its success for many years to come. What’s always interesting is figuring out how a company adapts in this changing landscape, as we all know and have talked about many times. For Glean, how you put this context layer to use has been the fun part of the challenge over the last few years. You could say that’s been the opportunity for the company as well as the challenge.

Jacob [00:54:46]: Definitely a competitive market. It feels like one at the epicenter of the foundation models and the hyperscalers, so it’ll be interesting to see how it all plays out.

Chai [00:54:55]: When you think about whether you can build something that helps everyone with knowledge work, that is a massive opportunity as well.

Jacob [00:55:02]: My mental model is always that there are a few markets that the foundation model companies have to win, or that are big enough to go after, and it’s probably consumer, code, and that.

Jacob [00:55:11]: And so it will definitely be interesting to see how it plays out. One thing we often think about on the investing side is that the pace of progress in models changes so fast, and so the building patterns adjust so fast. It’s always hard to figure out which pieces of the way people are building today, the infrastructure tools they use, are going to prove persistent versus, okay, six months later we’re doing something completely different because models have improved.

Jacob [00:55:31]: I’m curious, of the stuff you use today, how do you think about the pieces of AI infrastructure software that feel a little bit more persistent?

Chai [00:55:40]: Generally, if you take the thesis that the models are going to be more and more agentic: before, we had to build a lot of scaffolding around them. In previous gigs, we effectively made our own DSL because the models were not capable enough, so you needed to simplify things. You can view it as similar to other agent frameworks. But over time, if the models become more and more agentic and can use the same tools that we already have, whether it’s computer use or writing code themselves in a sandbox, it becomes far more about what the right context layers and tools to give agents are. The other thing I think about is how you build truly event-driven, real-time systems, especially at Abridge, where you’re doing something in real time in the conversation. So there’s a lot of event-driven technology, and by the way, it’s stuff that we’ve always used in the past, whether it’s Kafka, Temporal, sockets, and so forth. How you bring that together is also durable. Or think about the patterns in which humans collaborate with each other in Google Docs: how do you think about CRDTs and so forth when you have conflicts in multi-agent systems? So the things we’ve built for humans are the things that are going to continue to be durable.
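To make the CRDT idea concrete, here is a minimal sketch of one of the simplest CRDTs, a last-writer-wins map, applied to two agents editing the same key. It is an illustrative assumption, not anything described as Abridge’s or Glean’s implementation; the class name `LWWMap`, the agent IDs, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional, Tuple


@dataclass
class LWWMap:
    """Last-writer-wins map. Each key stores (timestamp, writer_id, value).

    Ties on timestamp are broken by writer_id, so every replica resolves a
    conflict the same way regardless of the order in which it sees updates.
    """

    entries: Dict[str, Tuple[int, str, Any]] = field(default_factory=dict)

    def set(self, key: str, value: Any, timestamp: int, writer_id: str) -> None:
        current = self.entries.get(key)
        # Keep the write with the larger (timestamp, writer_id) pair.
        if current is None or (timestamp, writer_id) > current[:2]:
            self.entries[key] = (timestamp, writer_id, value)

    def get(self, key: str) -> Optional[Any]:
        entry = self.entries.get(key)
        return entry[2] if entry is not None else None

    def merge(self, other: "LWWMap") -> "LWWMap":
        """Return a new map holding the winning write for every key."""
        merged = LWWMap(dict(self.entries))
        for key, (timestamp, writer_id, value) in other.entries.items():
            merged.set(key, value, timestamp, writer_id)
        return merged


if __name__ == "__main__":
    # Two hypothetical agents write the same key concurrently.
    replica_a, replica_b = LWWMap(), LWWMap()
    replica_a.set("summary", "draft from agent A", timestamp=1, writer_id="agent-a")
    replica_b.set("summary", "draft from agent B", timestamp=2, writer_id="agent-b")
    print(replica_a.merge(replica_b).get("summary"))
```

The design point is that `merge` gives the same result in either order and is safe to repeat, so replicas converge without a central lock. Last-writer-wins silently discards the losing write, which is why collaborative editors typically use richer CRDTs than this one.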

Jacob [00:56:55]: Just with like 1,000 times the scale of agents running at them instead.

Jacob [00:56:58]: They’re going to really work.

Chai [00:56:58]: So make sure that they scale, of course, and are fast and whatnot. Without a doubt, yes.

How Agentic Does Abridge Become?

Swyx [00:57:03]: Does Abridge become more agentic over time? What does the next, more agentic version look like?

Swyx [00:57:10]: ’Cause you’re already pretty proactive with, like, the notifications.

Chai [00:57:15]: I view that as a piece of being agentic, but I also view it as some of the things we mentioned before: reacting to labs or doing work in the background.

Chai [00:57:25]: And doing even more on behalf of the clinician, who we believe has a super important role to play in terms of patient connection and so forth.

What They Changed Their Minds On: PRDs, Prototypes, and Judgment

Jacob [00:57:34]: I’m curious for both of you, what’s one thing you’ve changed your mind on in AI in the past year?

Janie [00:57:39]: The one I flipped on, and this is much more product-specific, is probably the hotter take: that prototypes are the end-all, be-all and that PRDs are dead.

Janie [00:57:51]: We’ve tried switching, and we continue to evolve the way product is developed. The products that we’re building are extremely complicated and nuanced, and it is very difficult for a prototype to capture the full complexity of what we can or can’t do with this data. Is this actually the right problem to be solving in a world where software has become so cheap? Yes, this is a cool-looking prototype, but should we be spending any of our precious hours here? If so, why? How does this deepen our moat in a world of decreasing moats? Does this require custom implementation from our customer to use? None of that gets captured in a prototype. So we’re continuously evolving the way that we develop product here, but even if it’s not written in the same traditional ways as it was two years ago, as a team we’ve gotten pretty high conviction that in a world of so much noise, crisp written clarity is more important than ever. It might now live in a markdown file that more teams and systems can use as context, but that’s probably one that is much more

Swyx [00:59:06]: So you’re

Janie [00:59:06]: Function specific to me.

Jacob [00:59:08]: I love that.

Swyx [00:59:09]: You’re disagreeing with the consensus

Janie [00:59:10]: That PRDs are dead

Swyx [00:59:11]: That’s great, yeah.

Swyx [00:59:12]: So you are like

Janie [00:59:14]: That prototypes are the thing.

Janie [00:59:14]: We should partner with AI to create great documentation, but first, and probably most important, is strategically answering: why is this problem the one our company and our product should solve? What happens if the next 20 competitors build this? What is our right to win, and does this help us differentiate in any way, or are we just adding noise? It’s important

Swyx [00:59:39]: That’s a high bar. I don’t know if I could answer that

Swyx [00:59:41]: Because a lot of the times the answer is let’s do it first.

Janie [00:59:44]: And when the cost of doing it first is so expensive, and we just talked through the process of getting something out to customers, you need to have a higher bar for whether, as a business, we should invest here. As all of our roles evolve, part of product’s job, or really all of our jobs, becomes asking, should we do this thing? That is worth spending the time on up front. And then, as you think about prototypes, it’s still really valuable to quickly show, “Here are the 20 ways we could do it. Clinician, I would love your feedback, which one resonates more?” Or as you get into deeper fidelity, you can also make the prototypes deeper fidelity and get them as close to production-ready as possible. But beyond that, to get it out to customers, there are a lot of implementation details, security, compliance, edge cases, things that never get caught in a prototype and need to be written out somewhere. And so they look different, but they’re still more important than ever.

Jacob [01:00:52]: It’s interesting. I imagine a lot of that also is like given the context of the stage that Abridge is at.

Jacob [01:00:58]: I feel like for so many early-stage companies, it’s just a desperate race. You throw like 30 things at the wall, you’re like, “Please, something just resonate with my end buyer,” and you find something, and that’s why the prototype-first approach is so powerful. But for you all, anything you’re going to do is across 200 systems, there’s a whole implementation and change management side of things, and you get a few big bullets to fire at what you want those systems to do. And so being really thoughtful about that.

Chai [01:01:25]: It makes a ton of sense and maybe the prototype first takes will all grow into your view of the world when they’re a bit more scaled.

Janie [01:01:32]: The weekend demo versus “it works at the largest health systems” is a massive gap. I don’t think it means we can’t go fast. This is the fastest I’ve built in my career right now, and the

Chai [01:01:47]: Compared to Loom?

Janie [01:01:48]: Given the complexity and the scale of the products we’re trying to build and the problems we’re trying to solve, I’d say yes. Maybe I updated a flow or shipped a new feature pretty quickly, but if you think about some of the products we’re building, we’re trying to collapse prior authorization, things that used to take 45 days across maybe 20 different touch points, into one. I’m building faster than I ever have, and so the thoughtfulness just allows us to go fast at the right things. It sounds contradictory, but that

Chai [01:02:28]: No

Janie [01:02:28]: Thought up front

Chai [01:02:28]: Go slow to go fast.

Janie [01:02:29]: Exactly.

Chai [01:02:30]: It’s interesting. When a lot of things are changing in the AI discourse, sometimes we lose sight of things that have always stood the test of time. Judgment and clarity always matter. As an engineer, sometimes I don’t want a prototype. I want the clarity that comes from writing, and then we build that. And again, for some things, of course, where it’s a small thing, yeah, just ship the prototype. Don’t sweat the details. The nuance that sometimes gets lost in discussion is that we need to recalibrate our judgment, for sure, because the costs and gains have changed, but that doesn’t mean we go all the way to one end of the spectrum or the other.

AI Tools, Claude Code, and Closing Notes

Chai [01:03:11]: Outside of your specific tool, I always like to ask this question, any other AI tools that you guys are enjoying?

Chai [01:03:16]: Claude Code. But that feels like too basic of an answer.

Chai [01:03:20]: Is all of Abridge engineering very built on Claude Code?

Chai [01:03:23]: Yes.

Chai [01:03:23]: Wow.

Chai [01:03:23]: Very much so. I won’t

Chai [01:03:26]: We also have Cursor as well.

Chai [01:03:28]: Many of the

Chai [01:03:29]: I’m just checking the boxes here.

Chai [01:03:30]: Many of the tools are available, but you look at an engineer’s screen, just earlier in the day, and you see six different Claudes running. Sometimes the same person, I’ve seen them on the sofa now with the remote control on mobile as well. So, very much so. One of the interesting things for me is, as a relatively new person to the company, Claude Code helps me onboard much faster, or any of these AI code... And I feel like I learn so much. I do love the memes of “Claude’s going to do this.” So, I’d like to see Claude,

Chai [01:04:00]: The venture equivalent is “I’d like to see Claude go do a company at a billion dollars pre-revenue.” Like

Where to Learn More: Whitepapers, Research, and AbridgeHQ