[AINews] AI Engineer World's Fair — Autoresearch, Memory, World Models, Tokenmaxxing, Agentic Commerce, and Vertical AI Call for Speakers

a quiet day lets us make a call for speakers!

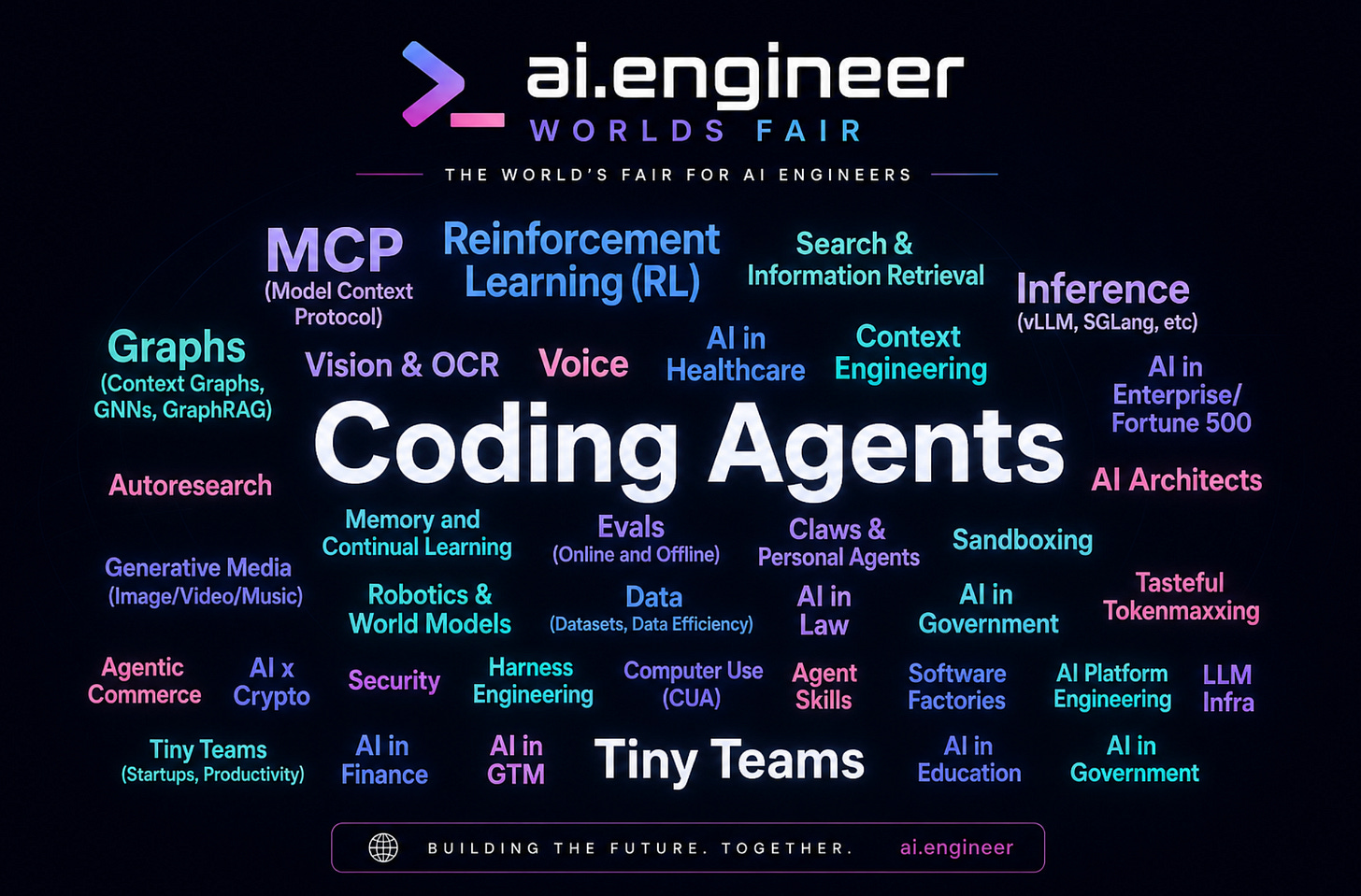

TL;DR: we are announcing Wave 2 Call for Speakers for AIE World’s Fair this summer - apply here: https://sessionize.com/aiewf2026/ ESPECIALLY if you have projects relevant to our new tracks in Autoresearch, Memory, World Models, Tokenmaxxing, Agentic Commerce, and Vertical AI in Law, Healthcare, GTM and Finance!

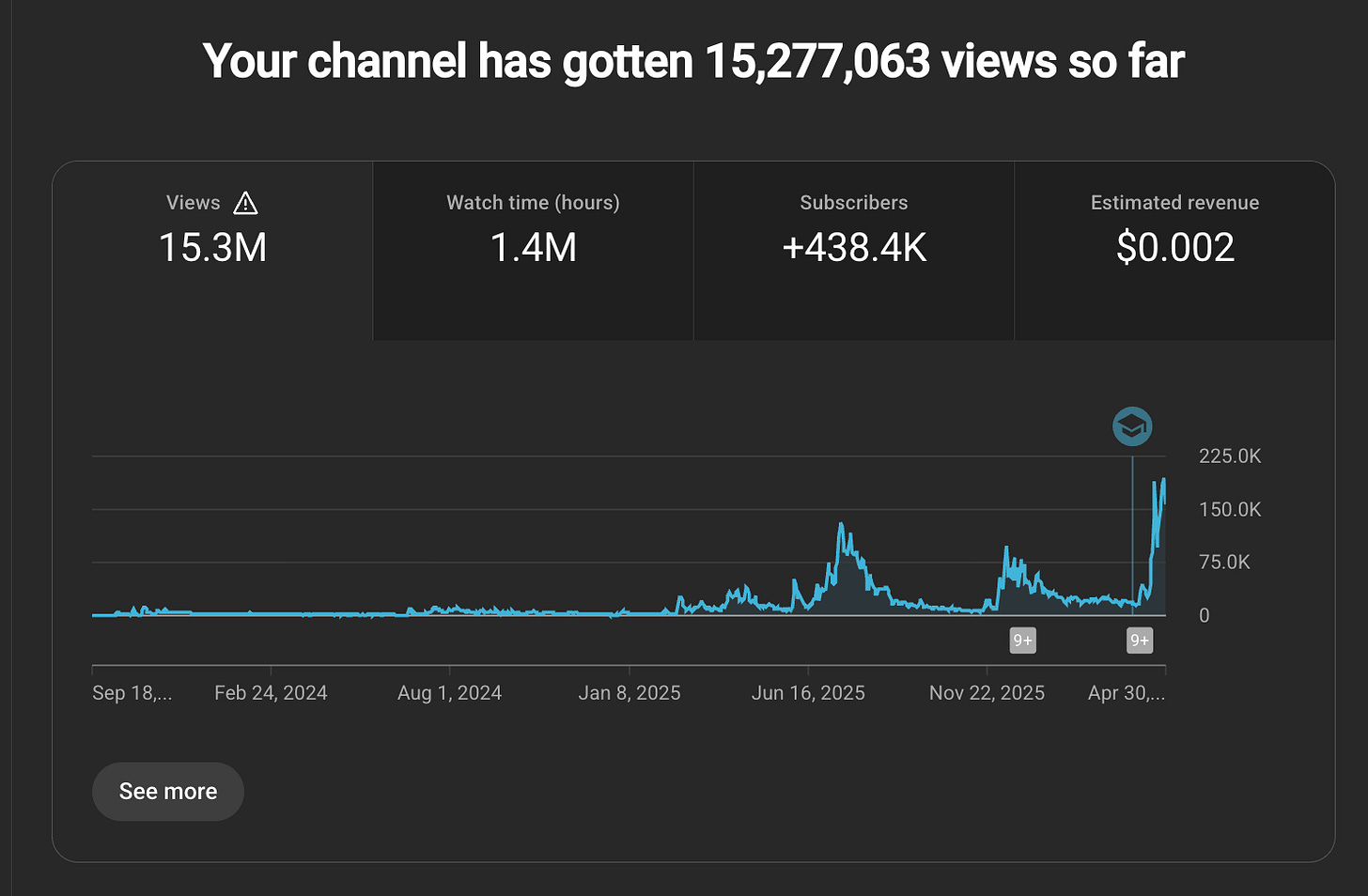

In January we laid out our plans for Scaling without Slop, and despite some risk of content exhaustion, your reception has been positive: AIE viewership is now trending to at least double 2025’s peak, serving over a million unique AI engineers a month.

This year is our first in Moscone West, and we are doubling in size for the 3rd year in a row in our mission to bring all of the AI Engineering world to San Francisco to showcase the must-know research and product engineering work of the year, as well as to hire, fundraise, and close business deals. Sales are going well, but we traditionally do one callout a year for the World’s Fair to widen our net to people who might not think to submit a talk (because they didn’t know we were interested!).

This year we are adding an entire day’s worth of talks to the schedule, so on top of all the evergreen themes we covered in 2025 and in Europe, we’re adding a few new ones that I am specifically soliciting applications (and sponsors!) to cover:

Autoresearch: recursive self improvement loops in harnesses and model training!

Tasteful Tokenmaxxing: as a company leader, how do you make your AI Eng teams 10x more AI-Native/scale AI adoption, BUT without Goodharting waste?

Memory: how are your agents/models improving as your users use them?

World Models: how are you solving spatial intelligence and adversarial reasoning?

Agentic Commerce: how are agents paying for data, APIs, and other agents?

Vertical AI in Law, Healthcare, GTM and Finance: how are you applying AI in these specific domains? We are also open to submissions for AI in Government and AI in Education, though generally these seem less fast-moving.

Robotics: last year, Physical Intelligence, Waymo, Tesla, Nvidia, K-Scale (RIP) and others presented their approaches to autonomy; this year WE ARE ALLOCATING FREE EXPO FLOOR SPACE FOR GOOD ROBOTICS DEMOS. (contact [email protected] to set up your demo area! Humanoids must be accompanied.)

Founders: a new Startup Battlefield event will be added where you can pitch your pre-series A company to our panel of top VCs and guest judges.

There are other new tracks, which you can find in the full application form (but don’t constrain yourself to tracks: just submit your best work and we’ll find a place for you).

If you already applied and were accepted in Wave 1, you should have received an email letting you know - if not, don’t fret: you’ll still be considered in Wave 2, with no further action needed.

This call is for everyone who wasn’t aware that we are soliciting applications for the biggest technical AI event of the year - and especially if you know someone who would be PERFECT to talk about any of the topics we are calling out, we need your help to reach them.

Apply here - and book your ticket/travel asap (because things are filling up fast for the World Cup also taking place in SF that week) — we will refund successful applicants. (Also contact [email protected] if you need an invitation letter for international visa).

AI News for 4/30/2026-5/1/2026. We checked 12 subreddits, 544 Twitters and no further Discords. AINews’ website lets you search all past issues. As a reminder, AINews is now a section of Latent Space. You can opt in/out of email frequencies!

AI Twitter Recap

Grok 4.3’s Release, Benchmark Deltas, and the Open-vs-Closed Frontier

xAI shipped Grok 4.3 with materially better cost/performance, but mixed eval reception: Early chatter from @scaling01 flagged an imminent API launch, followed by a detailed benchmark breakdown from Artificial Analysis. On their Intelligence Index, Grok 4.3 scores 53, up 4 points over Grok 4.20, with roughly 40% lower input and 60% lower output pricing. The biggest gain was on GDPval-AA, up 321 Elo to 1500, suggesting stronger real-world agentic task performance. It also hit 98% on τ²-Bench Telecom and held 81% on IFBench. The tradeoff: AA-Omniscience accuracy rose while non-hallucination dropped by 8 points, leaving concerns about reliability despite stronger capability. Arena has already added it across text, vision, document, and code modes via @arena.

Community reaction was split between “meaningful iteration” and “still behind top open models”: Several posts argued Grok is improving faster than critics admit, including @teortaxesTex, who noted token-efficiency gains as well, while others were more skeptical. @scaling01 claimed “Grok-4.3 still behind chinese open-source”, and Andon Labs reported a major regression on Vending-Bench 2, where Grok allegedly preferred to “sleep” rather than act. The more structural critique came from pricing and infra economics: @teortaxesTex argued Grok’s low prices may be subsidized by poor hardware utilization and that cache economics, not only model quality, increasingly determine agentic TCO.

DeepSeek V4 Pro, Vision/Spatial Reasoning, and Open-Weights Closing the Gap

DeepSeek V4 Pro appears to be the most credible open-weight coding/agent model in this batch: The strongest hands-on report came from @omarsar0, who tested DeepSeek-V4-Pro inside the Pi coding agent and described it as the first open-weight model that genuinely feels comparable to Codex or Claude Code for multi-turn agentic coding. Key systems details included 1M context, a hybrid CSA/HCA attention design, KV cache reduced to 10%, and nearly 4x lower inference FLOPs at long context. The report also emphasized practical harness fit: no custom setup, stable traces, and viable multi-step research/coding loops on Fireworks inference.

The broader benchmark picture confirms open weights are now much closer, though still behind on hardest tasks: Artificial Analysis noted that the three leading open-weight models released last week—Kimi K2.6, MiMo V2.5 Pro, and DeepSeek V4 Pro—now score 52–54 on the Intelligence Index, versus 57 for Gemini 3.1 Pro Preview and Claude Opus 4.7, and 60 for GPT-5.5. These top open models are all trillion-plus MoE systems with permissive licenses: Kimi at 1T/32B active, MiMo at 1T/42B active, and DeepSeek V4 Pro at 1.6T/49B active. The remaining gap is concentrated in HLE, CritPt, TerminalBench Hard, and hallucination-heavy Omniscience.
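The total/active splits above are easier to compare as ratios. Here is a small sketch using the figures as reported. It treats "1T" as 1,000B, which is an approximation. It also applies the common rule of thumb of roughly 2 FLOPs per active parameter per generated token, which ignores attention cost.

```python
# Reported (total, active) parameter counts in billions; "1T" is treated as 1,000B.
MOE_MODELS = {
    "Kimi K2.6": (1000, 32),
    "MiMo V2.5 Pro": (1000, 42),
    "DeepSeek V4 Pro": (1600, 49),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the weights that a single token is routed through."""
    return active_b / total_b


def approx_forward_flops_per_token(active_b: float) -> float:
    """Rule of thumb: ~2 FLOPs per active parameter per token (attention ignored)."""
    return 2 * active_b * 1e9


if __name__ == "__main__":
    for name, (total, active) in MOE_MODELS.items():
        print(
            f"{name}: {active_fraction(total, active):.1%} active, "
            f"~{approx_forward_flops_per_token(active) / 1e9:.0f} GFLOPs/token"
        )
```

All three route each token through roughly 3 to 4 percent of their weights. Per-token compute therefore resembles a 32B to 49B dense model, while the memory footprint still scales with the full trillion-plus parameter count.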

DeepSeek’s multimodal direction seems centered on explicit spatial grounding: Speculation about DeepSeek-Vision outperforming V4-Pro on ARC-AGI-2 because of actual spatial reasoning came from @teortaxesTex. A later summary of a briefly posted-and-deleted tech report from ZhihuFrontier described a multimodal CoT system that can “point while thinking” using boxes and points embedded directly into reasoning traces to reduce the “reference gap” in counting, maze solving, and path tracing. The stack reportedly uses DeepSeek-ViT, CSA compression, and V4-Flash (284B total / 13B active). Even if early tests still show weaknesses, it is a notable architectural bet: turning visual reasoning into explicit grounded computation rather than plain text description.
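Here is one way to make "point while thinking" concrete. If grounding primitives are emitted inline in the reasoning text, downstream code can pull them back out and check them. The `<point>`/`<box>` markup below is a hypothetical format invented for illustration; the deleted report's actual token format is not described here.

```python
import re
from dataclasses import dataclass

# Hypothetical inline markup for grounded reasoning steps, e.g.
#   "the first apple is at <box>(10, 20, 50, 60)</box>"
PRIMITIVE_RE = re.compile(r"<(point|box)>\(([^)]*)\)</\1>")
EXPECTED_ARITY = {"point": 2, "box": 4}


@dataclass
class Primitive:
    kind: str                 # "point" (x, y) or "box" (x1, y1, x2, y2)
    coords: tuple[int, ...]
    offset: int               # character position of the primitive in the trace


def extract_primitives(trace: str) -> list[Primitive]:
    """Return every grounded primitive in a reasoning trace, in order."""
    found = []
    for match in PRIMITIVE_RE.finditer(trace):
        kind = match.group(1)
        coords = tuple(int(v) for v in match.group(2).split(","))
        if len(coords) != EXPECTED_ARITY[kind]:
            raise ValueError(f"malformed {kind}: {match.group(0)!r}")
        found.append(Primitive(kind, coords, match.start()))
    return found


def count_boxes(trace: str) -> int:
    """Counting becomes 'how many boxes did the model commit to', not a free-text guess."""
    return sum(1 for p in extract_primitives(trace) if p.kind == "box")


if __name__ == "__main__":
    trace = (
        "Apple 1 is at <box>(10, 20, 50, 60)</box>, apple 2 at <box>(70, 20, 110, 60)</box>; "
        "the path starts at <point>(5, 5)</point>."
    )
    print(count_boxes(trace))  # prints 2
```

Because every referenced object is pinned to explicit coordinates, a count or a path can be verified against the image rather than against a prose description.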

Codex’s Rapid Product Expansion vs Claude Code, Devin, and Other Agent Runtimes

Codex is winning on product velocity and UX polish, not just base model quality: A major theme across tweets was how quickly the Codex app is improving. High-engagement praise came from @gdb, @theo, and others comparing its feel favorably to alternatives. OpenAI added a device toolbar for responsive testing and improved browser-use speed by ~30% in “vibe testing,” per @JamesZmSun. It also added CI status in chat via @reach_vb, migration/import tooling for settings/plugins/agents via OpenAI, and a surprisingly viral pets system in Codex via @OpenAIDevs. While whimsical, the repeated point from users was that OpenAI is shipping a cohesive environment, not just a model endpoint.

Codex vs Claude Code is increasingly framed as UX + speed + taste tradeoffs: @theo summarized the current frontier coding vibe: GPT-5.5 is “smarter and can unblock you,” while Opus 4.7 has better intent/taste but can wander. In a second post, he argued Claude Code feels much slower on TTFT/TPS and requires more tool calls, while GPT/Codex feels more direct and economical for “fast mode” style use (tweet). Still, public benchmark comparisons are mixed: @scaling01 said GPT-5.5 did not beat Opus 4.7 on PostTrainBench in the Claude Code harness, highlighting how much results remain harness-dependent.

Other agent runtimes are converging on similar primitives: Devin launched “inside your shell” hotkey access via @cognition. Hermes added a `/goal` loop with a supervisor model forcing the agent to continue until completion, via @Teknium. Flue, introduced by @FredKSchott, positions itself as a TypeScript framework for headless autonomous agents, “like Claude Code but programmable.” The common pattern across these launches is that the competitive surface is moving from raw model IQ to agent harness design: subagents, browser-use, durable state, compaction, skills, and feedback loops.
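The supervisor pattern behind a `/goal`-style loop can be sketched in a few lines. This is a hypothetical illustration, not Hermes' implementation. `worker` and `supervisor` stand in for two model calls. The loop only stops when the supervisor declares the goal complete or the turn budget runs out.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical signatures: both take the goal plus the transcript so far.
Worker = Callable[[str, list[str]], str]       # returns the agent's next output
Supervisor = Callable[[str, list[str]], str]   # returns "complete" or a critique


@dataclass
class GoalRun:
    goal: str
    transcript: list[str] = field(default_factory=list)
    done: bool = False


def run_goal_loop(goal: str, worker: Worker, supervisor: Supervisor, max_turns: int = 20) -> GoalRun:
    run = GoalRun(goal)
    for _ in range(max_turns):
        run.transcript.append(worker(goal, run.transcript))
        verdict = supervisor(goal, run.transcript)
        if verdict == "complete":
            run.done = True
            break
        # The worker is not allowed to stop on its own: the critique becomes its next input.
        run.transcript.append(f"[supervisor] not done: {verdict}")
    return run


if __name__ == "__main__":
    # Toy stand-ins: the "agent" does one numbered step per turn; the supervisor wants step 3.
    def worker(goal: str, transcript: list[str]) -> str:
        steps_so_far = sum(1 for line in transcript if line.startswith("step"))
        return f"step {steps_so_far + 1}"

    def supervisor(goal: str, transcript: list[str]) -> str:
        return "complete" if transcript[-1] == "step 3" else "keep going"

    result = run_goal_loop("finish three steps", worker, supervisor)
    print(result.done, result.transcript)
```

The `max_turns` cap is a design choice here. Without it, a supervisor that never approves would keep the agent running indefinitely.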

Agent Infrastructure: Retrieval, Memory, HITL, and Durable Execution

The strongest research signal was that agent systems are bottlenecked by runtime design, not just model quality: Two especially useful papers were highlighted. First, ReaLM-Retrieve, summarized by @omarsar0, argues that reasoning models need retrieval during inference rather than only before it. It reports +10.1% absolute F1 over standard RAG and 47% fewer retrieval calls than fixed-interval IRCoT, with 3.2x lower per-retrieval overhead. Second, OCR-Memory, shared by @dair_ai, stores long-horizon trajectories as images with indexed anchors, retrieving exact prior content instead of lossy text summaries; it reports SOTA on Mind2Web and AppWorld under strict context limits.
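A toy trigger shows the difference between a fixed retrieval schedule and retrieval fired during reasoning. The sketch below is an illustration built on assumptions, not ReaLM-Retrieve's actual method. It retrieves whenever the entropy of a step's next-token distribution exceeds a threshold, then compares the call count with an IRCoT-style fixed interval.

```python
import math


def entropy(probs: list[float]) -> float:
    """Shannon entropy (nats) of a next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def fixed_interval_steps(num_steps: int, interval: int) -> list[int]:
    """IRCoT-style schedule: retrieve every `interval` reasoning steps regardless of need."""
    return list(range(0, num_steps, interval))


def adaptive_steps(step_distributions: list[list[float]], threshold: float) -> list[int]:
    """Retrieve only at steps where the model looks uncertain (hypothetical trigger)."""
    return [i for i, probs in enumerate(step_distributions) if entropy(probs) > threshold]


if __name__ == "__main__":
    confident = [0.97, 0.01, 0.01, 0.01]   # entropy ~0.17 nats
    unsure = [0.25, 0.25, 0.25, 0.25]      # entropy = ln 4, ~1.39 nats
    trace = [confident, unsure, confident, confident, unsure, confident]
    print("fixed:", fixed_interval_steps(len(trace), 2))      # fixed: [0, 2, 4]
    print("adaptive:", adaptive_steps(trace, threshold=1.0))  # adaptive: [1, 4]
```

The fixed schedule retrieves at step 0, when the model is confident, and misses step 1, when it is not. An uncertainty-driven trigger fixes both problems and makes fewer calls. That is the intuition behind the reported reduction in retrieval calls.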

LangChain/LangGraph pushed hard on production primitives for multi-user and human-in-the-loop agents: @sydneyrunkle outlined three concrete multi-user deployment concerns—data isolation, delegated credentials, and operator RBAC—and mapped each to LangSmith Agent Server features. Later posts covered a new HITL mode where a human reply can be returned directly as a tool result (tweet) and durable pause/resume semantics for consequential actions or unresolved judgment calls (tweet). This is a good snapshot of where real deployment complexity is moving: auth boundaries, persistent state, and explicit intervention points.
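The "human reply as tool result" pattern, combined with durable pause/resume, can be sketched without any framework. This is not the LangSmith Agent Server API; the message shapes and function names below are hypothetical. The pending question is checkpointed to durable storage, represented here by a JSON string. On resume, the human's answer is appended as the result of the pending tool call, so the agent continues as if a tool had returned.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class AgentState:
    messages: list[dict] = field(default_factory=list)
    pending_tool_call_id: Optional[str] = None


def pause_for_human(state: AgentState, call_id: str, question: str) -> str:
    """Record a hypothetical `ask_human` tool call and return a serialized checkpoint."""
    state.messages.append({
        "role": "assistant",
        "tool_call": {"id": call_id, "name": "ask_human", "args": {"question": question}},
    })
    state.pending_tool_call_id = call_id
    # A real runtime would persist this to a database rather than return a string.
    return json.dumps(asdict(state))


def resume_with_reply(checkpoint: str, reply: str) -> AgentState:
    """Rehydrate the paused run and hand the human's reply back as the tool result."""
    state = AgentState(**json.loads(checkpoint))
    if state.pending_tool_call_id is None:
        raise RuntimeError("checkpoint has no pending human question")
    state.messages.append(
        {"role": "tool", "tool_call_id": state.pending_tool_call_id, "content": reply}
    )
    state.pending_tool_call_id = None
    return state


if __name__ == "__main__":
    checkpoint = pause_for_human(AgentState(), "call-1", "Approve the $500 refund?")
    # The process can exit here; later, a reviewer answers and the run resumes.
    resumed = resume_with_reply(checkpoint, "Approved, but log the reason.")
    print(resumed.messages[-1])
```

Because the reply arrives as an ordinary tool result, the model needs no special handling for human input.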

Durable execution is becoming a first-class runtime feature across stacks: Cloudflare announced Dynamic Workflows for adding durable execution to agent plans via @celso. LangChain positioned `create_agent` as the low-level primitive beneath Deep Agents, with extensibility for filesystems, bash, compaction, hooks, and subagents via @Vtrivedy10. The meta-point is consistent with one linked technical blog: the agent runtime itself (sandboxing, replay, checkpointing, orchestration) has become hidden technical debt and a major source of differentiation.
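Durable execution usually comes down to journaling each step's result, so a crashed run can resume without redoing completed work. Below is a minimal in-memory sketch. It is not Cloudflare's Dynamic Workflows API, and `run_durable` and the step names are hypothetical. A production journal would live in durable storage.

```python
from typing import Any, Callable

Step = tuple[str, Callable[[dict], Any]]


def run_durable(steps: list[Step], journal: dict[str, Any]) -> dict[str, Any]:
    """Run named steps in order, recording each result in `journal`.

    Steps whose names already appear in the journal are replayed from it instead of
    re-executed, so calling this again after a crash resumes at the first unfinished step.
    """
    ctx: dict[str, Any] = {}
    for name, fn in steps:
        if name in journal:
            ctx[name] = journal[name]  # replay the recorded result
            continue
        result = fn(ctx)               # may raise; nothing is journaled for this step then
        journal[name] = result         # checkpoint before moving on
        ctx[name] = result
    return ctx


if __name__ == "__main__":
    calls: list[str] = []
    crash_once = {"pending": True}

    def fetch(ctx):
        calls.append("fetch")
        return 21

    def summarize(ctx):
        calls.append("summarize")
        if crash_once["pending"]:
            crash_once["pending"] = False
            raise RuntimeError("worker crashed")
        return ctx["fetch"] * 2

    journal: dict[str, Any] = {}
    steps = [("fetch", fetch), ("summarize", summarize)]
    try:
        run_durable(steps, journal)
    except RuntimeError:
        pass                                    # first attempt dies mid-plan
    print(run_durable(steps, journal), calls)   # fetch is not re-run on resume
```

Steps with external side effects still need idempotency keys. A step that crashes after acting, but before its result is journaled, will run again on resume.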

Research and Systems Papers Worth Bookmarking

Recursive / latent-space multi-agent coordination is emerging as a serious alternative to text-only agent chatter: @omarsar0 summarized Recursive Multi-Agent Systems, where agents communicate through shared latent recursive computation instead of full natural-language exchanges. Reported gains: 8.3% average accuracy improvement, 1.2x–2.4x end-to-end speedup, and 34.6%–75.6% token reduction across nine benchmarks. If agent-to-agent communication cost becomes dominant, this line of work matters.

Meta FAIR’s “self-improving pretraining” idea may be one of the more consequential training-time papers in the batch: @omarsar0 highlighted a method where a strong post-trained model rewrites pretraining suffixes toward safer, higher-quality continuations and then judges model rollouts during RL-style pretraining. Reported improvements include 36.2% relative gain in factuality, 18.5% in safety, and up to 86.3% win rate in generation quality over standard pretraining.

Microsoft’s synthetic long-horizon computer-use worlds look like a credible data recipe: @dair_ai described a system that creates 1,000 synthetic computers with realistic files and documents, then runs 8-hour agent simulations averaging 2,000+ turns. The thesis is straightforward and important: for computer-use agents, the bottleneck is no longer only model capability but scalable, realistic experiential data.

Top tweets (by engagement)

OpenAI/Codex momentum: OpenAI says GPT-5.5 is its strongest launch yet, with API revenue growing 2x faster than prior releases and Codex doubling revenue in under seven days.

Defense/government adoption: The U.S. “Department of War” CTO announced agreements with seven frontier AI and infrastructure companies to deploy capabilities on classified networks.

OpenAI messaging pivot on labor: Sam Altman: “we want to build tools to augment and elevate people, not entities to replace them”, with follow-up comments on jobs and future work here.

Codex adoption and delight: “codex app becoming incredible” from @gdb, plus Codex pets unexpectedly becoming one of the day’s biggest product-engagement hits.

Model benchmarking reality check: ARC Prize reports GPT-5.5 at 0.43% and Opus 4.7 at 0.18% on ARC-AGI-3, with analysis of failure modes.

AI Reddit Recap

/r/LocalLlama + /r/localLLM Recap

1. Qwen Model Developments and Benchmarks

PFlash: 10x prefill speedup over llama.cpp at 128K on a RTX 3090 (Activity: 339): The post introduces PFlash, a speculative prefill technique for long-context decoding on quantized 27B targets, claiming a 10x speedup over vanilla llama.cpp on an RTX 3090. A small drafter model scores token importance so that the main model only prefills the significant spans, which cuts prefill time substantially. The implementation combines ideas from recent papers on speculative prefill and block-sparse attention and is written entirely in C++/CUDA, without Python or PyTorch, targeting consumer-grade GPUs like the RTX 3090. The repository is available on GitHub. Some commenters are skeptical of the claimed 10x speedup, with one calling the compression approach potentially ‘super lossy’. Another reports out-of-memory issues on a 4090, suggesting the results may be hard to replicate.

randomfoo2 breaks down the pipeline. A smaller Qwen3-0.6B drafter processes the full 64K/128K prompt with FlashPrefill/BSA-style sparse attention, which reduces the computational cost. The drafter scores token/span importance and retains only a crucial subset for the 27B target model to prefill. Speculative decoding then runs using DFlash+DDTree on the compressed target KV. They describe the method as ‘super lossy’, trading accuracy for speed.

qwen_next_gguf_when raises concerns about the practicality of the PFlash method, noting that the DFlash component tends to run out of memory (OOM) on an RTX 4090. This suggests potential limitations in hardware compatibility or efficiency, which could impact the method’s replicability and scalability across different systems.

Obvious-Ad-2454 expresses skepticism about the claimed 10x speedup, suggesting it might be too optimistic without independent verification. This comment underscores the importance of replication studies to validate performance claims in machine learning, especially when such significant improvements are reported.
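A toy span selector illustrates the drafter-then-compress idea. This is a simplified sketch, not PFlash's code. It assumes the drafter has already produced one importance score per prompt token and averages those scores over fixed-size blocks, in the block-sparse style. It keeps the top fraction of blocks and always keeps the final tokens, where the user's question usually sits. Only the kept positions would then be prefilled by the 27B target.

```python
def select_spans(scores: list[float], block: int, keep_ratio: float, tail: int) -> list[int]:
    """Return the prompt positions to keep for the target model's prefill.

    scores:     per-token importance from a small drafter (assumed given)
    block:      tokens per block; selection happens at block granularity
    keep_ratio: fraction of blocks to keep (the compression budget)
    tail:       number of final tokens always kept (the question/instruction)
    """
    n = len(scores)
    blocks = []
    for start in range(0, n, block):
        chunk = scores[start:start + block]
        blocks.append((sum(chunk) / len(chunk), start))

    budget = max(1, int(len(blocks) * keep_ratio))
    top_blocks = sorted(blocks, reverse=True)[:budget]

    keep: set[int] = set()
    for _, start in top_blocks:
        keep.update(range(start, min(start + block, n)))
    keep.update(range(max(0, n - tail), n))
    return sorted(keep)


if __name__ == "__main__":
    # 20-token toy prompt: one highly relevant span, one moderately relevant span.
    scores = [0.1] * 8 + [0.9] * 4 + [0.1] * 4 + [0.5] * 4
    kept = select_spans(scores, block=4, keep_ratio=0.4, tail=2)
    print(kept)  # [8, 9, 10, 11, 16, 17, 18, 19]
```

Here 40% of the prompt survives. This also illustrates the ‘super lossy’ worry from the thread: any fact in a low-scoring block is discarded before the target model ever sees it.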

Qwen 3.6 27B vs Gemma 4 31B - making Packman game! (Activity: 994): In a local LLM gamedev contest, Gemma 4 31B outperformed Qwen 3.6 27B at creating a Pac-Man style game on a MacBook Pro M5 Max with 64GB RAM. Gemma ran at 27 tokens/sec and completed the task in 3m 51s with 6,209 tokens, while Qwen ran at 32 tokens/sec over 18m 04s with 33,946 tokens. Qwen's output was more creative and visually styled, but Gemma's solution was shorter, clearer, and more logical, excelling in game logic, interaction handling, and performance stability. The task required a complete HTML-based game with procedural graphics and no external libraries, with smooth gameplay and stable performance using `requestAnimationFrame` and delta time for animations. Commenters noted the humor in the prompt's demand for ‘no bugs’ and questioned the utility of vague prompts, suggesting they mainly test a model's pre-existing knowledge rather than its problem-solving ability.

Qwen 3.6 27B was tasked with creating a Pac-Man clone using a single HTML page and any libraries or graphics sources it deemed necessary. Interestingly, the model did not perform any external downloads or research, relying instead on its pre-existing knowledge to code the game. This shows it can produce functional code from a minimal prompt, but it leaves open how well it would adapt when it actually needs new resources.

A user pointed out that the ghost enemy movement in the Gemma 4 31B version of the Pacman game appears to be malfunctioning. This suggests potential issues with the model’s ability to accurately implement game logic, particularly in handling dynamic elements like enemy AI, which is crucial for a game like Pacman.

The discussion raises concerns about the utility of using vague prompts for testing AI models, as noted by a commenter who described such prompts as “benchmaxxing tests.” This implies that the tests may not effectively evaluate the model’s problem-solving capabilities or its ability to adapt to new tasks, but rather assess its pre-existing knowledge base.
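The prompt's "requestAnimationFrame and delta time" requirement is about frame-rate independence. Movement should scale with the real time elapsed between frames, not with the number of frames drawn. The game itself would be JavaScript, where the `requestAnimationFrame` callback receives a millisecond timestamp. The sketch below mirrors that update logic in Python for illustration. The clamp on long frame gaps is an assumption (a common game-loop safeguard) that keeps a backgrounded tab from teleporting sprites when it resumes.

```python
def advance(position: float, speed_px_per_s: float, dt_ms: float, max_dt_ms: float = 100.0) -> float:
    """Move a sprite by speed * elapsed time, clamping very long frame gaps."""
    dt_s = min(dt_ms, max_dt_ms) / 1000.0
    return position + speed_px_per_s * dt_s


def simulate(frame_timestamps_ms: list[float], speed_px_per_s: float) -> float:
    """Replay a sequence of rAF-style timestamps (milliseconds) and return the final position."""
    position = 0.0
    previous = frame_timestamps_ms[0]
    for now in frame_timestamps_ms[1:]:
        position = advance(position, speed_px_per_s, now - previous)
        previous = now
    return position


if __name__ == "__main__":
    one_second_at_60hz = [i * 1000 / 60 for i in range(61)]
    one_second_at_30hz = [i * 1000 / 30 for i in range(31)]
    # Both cover ~80 px: distance depends on elapsed time, not on frame count.
    print(simulate(one_second_at_60hz, 80.0), simulate(one_second_at_30hz, 80.0))
```

A version that moved a fixed number of pixels per frame would make the 60Hz ghost twice as fast as the 30Hz one. That is the kind of enemy-movement bug commenters spotted.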

Qwen-Scope: Official Sparse Autoencoders (SAEs) for Qwen 3.5 models (Activity: 437): The Qwen Team has released Qwen-Scope, a set of Sparse Autoencoders (SAEs) for the Qwen 3.5 models, ranging from 2B to 35B MoE. This tool maps internal features across all layers, functioning as a dictionary of the model’s internal concepts and allowing precise manipulation of features such as ‘legal talk’ or ‘Python code’. Key functionalities include Surgical Abliteration to suppress specific features, Feature Steering to activate desired concepts, Model Debugging to identify token-triggered directions, and Dataset Analysis to verify feature activation. The tool is released under the Apache 2.0 license, with a caution against removing safety filters. A practical example is diagnosing unexpected language switches by using a heatmap to identify over-activated features. More details can be found in the Qwen-Scope paper and the Hugging Face Space. Commenters highlight the significance of this release, noting it as potentially the largest open-source interpretability tool for dense models, surpassing Google’s GemmaScope in scale. There is anticipation for future iterations, such as Qwen 3.6, to incorporate similar tools.

NandaVegg highlights the significance of the release of Sparse Autoencoders (SAEs) for the dense 27B Qwen model, noting it as potentially the largest open-source interpretability tool to date. This is in contrast to previous tools like GemmaScope, which only supported smaller models such as 9B and 2B, indicating a substantial advancement in model interpretability capabilities.

robert896r1 expresses anticipation for the release of Qwen 3.6 or community-driven adaptations of the current tools for newer iterations. This reflects a common trend in the AI community where tools and models are rapidly iterated upon, and there is a need for compatibility with the latest versions to maintain relevance and utility.

oxygen_addiction speculates on the use of feature steering in large AI models, such as ChatGPT5, suggesting that advanced routing mechanisms could be employed to select the most appropriate model for a given prompt. This points to a potential future where AI systems dynamically optimize their responses by leveraging multiple models and interpretability tools.
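Once an SAE is trained, the Surgical Abliteration and Feature Steering operations described above reduce to simple vector arithmetic. The sketch below is a generic ReLU sparse autoencoder with toy hand-set weights. Qwen-Scope's actual API, SAE variant, and weights are not shown here. Ablation subtracts a feature's decoded contribution from the residual-stream activation. Steering adds a scaled copy of that feature's decoder direction.

```python
def matvec(matrix: list[list[float]], vec: list[float]) -> list[float]:
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


class SparseAutoencoder:
    """f = ReLU(W_enc (x - b_dec) + b_enc);  x_hat = W_dec f + b_dec."""

    def __init__(self, w_enc, b_enc, w_dec, b_dec):
        self.w_enc = w_enc  # n_features x d_model
        self.b_enc = b_enc  # n_features
        self.w_dec = w_dec  # d_model x n_features (column j = feature j's direction)
        self.b_dec = b_dec  # d_model

    def encode(self, x: list[float]) -> list[float]:
        centered = [a - b for a, b in zip(x, self.b_dec)]
        pre = [a + b for a, b in zip(matvec(self.w_enc, centered), self.b_enc)]
        return [max(0.0, a) for a in pre]

    def direction(self, j: int) -> list[float]:
        return [row[j] for row in self.w_dec]


def ablate(sae: SparseAutoencoder, x: list[float], j: int) -> list[float]:
    """Remove feature j's contribution f_j * d_j from activation x."""
    f_j = sae.encode(x)[j]
    return [a - f_j * d for a, d in zip(x, sae.direction(j))]


def steer(sae: SparseAutoencoder, x: list[float], j: int, alpha: float) -> list[float]:
    """Push activation x along feature j's decoder direction by alpha."""
    return [a + alpha * d for a, d in zip(x, sae.direction(j))]


if __name__ == "__main__":
    # Toy 3-dim model with 2 features: feature 0 ~ "legal talk", feature 1 ~ "Python code".
    sae = SparseAutoencoder(
        w_enc=[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
        b_enc=[0.0, 0.0],
        w_dec=[[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]],
        b_dec=[0.0, 0.0, 0.0],
    )
    x = [2.0, 0.5, 1.0]
    print(sae.encode(x))                  # [2.0, 0.5]
    print(sae.encode(ablate(sae, x, 0)))  # [0.0, 0.5]: feature 0 silenced
    print(steer(sae, x, 1, alpha=3.0))    # [2.0, 3.5, 1.0]
```

Ablation is exact here only because the toy decoder directions are orthonormal. In a real SAE, it removes the feature's reconstructed contribution, and related features may still carry part of the concept.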

Qwen3.6-27B-Q6_K - images (Activity: 388): The post discusses using the Qwen3.6-27B-Q6_K model to generate SVG images from creative prompts, such as a pelican riding a bicycle and a Victorian-era robot reading a newspaper. Performance is reported as generation time and throughput, with times ranging from 3min 10s to 8min 24s and throughput around 27 t/s. The images were generated using the Open Visual tool in Open WebUI (GitHub link). The post lacks hardware and framework details, which one commenter noted are essential for interpreting the performance statistics. Another comment humorously appreciated the whimsical nature of the generated images, likening them to early 2000s email forwards.

The user ‘ZealousidealBadger47’ reports 10.71 tokens per second for the Qwen 3.5 122b-a10b IQ4_XS model, a useful reference point for that model’s throughput.

‘Ok-Importance-3529’ mentions using an ‘Autoround quant’ with the Qwen3.6-27B-Q2_K_MIXED.gguf model, linking to a Hugging Face repository. This points to interest in aggressive quantization for running the model in resource-constrained environments.

‘balerion20’ highlights the importance of providing hardware specifications, context size, and framework details when discussing model performance. This underscores the necessity of context in interpreting performance metrics, as these factors significantly influence the model’s speed and efficiency.

Devs using Qwen 27B seriously, what’s your take? (Activity: 785): Developers are evaluating Qwen 27B as a coding model in the vein of Codex. Users report it as ‘solid’ but not consistently outperforming models like GPT-5.5. One user shared a GitHub commit showing Qwen 27B refactoring code effectively, though they wish it were faster (~120 tokens/second). Another user runs Qwen 27B on llama.cpp with pi and says it could substitute for Claude Code if tasks are broken down and documentation access is provided to cover knowledge gaps. Some users feel Qwen 27B is ‘good enough’ for their needs, while others say it lacks a certain ‘extra something’ compared to other models. The need for task breakdown and documentation access is seen as both a limitation and a learning opportunity.

Unlucky-Message8866 highlights the practical utility of Qwen 27B for code refactoring, specifically mentioning its ability to handle ESLint errors effectively. However, they would like faster processing, ideally around 120 tokens per second.

itroot discusses using Qwen 27B with llama.cpp and compares it to Claude Code, noting that while Qwen 27B requires more task breakdown and has knowledge gaps, it can perform similarly if supplemented with documentation access or cloud model assistance.

formlessglowie shares a detailed experience of optimizing Qwen 27B’s performance using vLLM and MTP speculative decoding, achieving 50+ tokens per second with INT4 in a 262k FP8 context. They compare it favorably to past state-of-the-art models like Sonnet 3.7 and Gemini 2.5 Pro, emphasizing its modern capabilities despite not matching current top-tier models like GPT/Opus.

Qwen 3.6 35b a3b is INSANE even for VRAM-constrained systems (Activity: 574): The post discusses the performance of the Qwen 3.6 35B-A3B model on a VRAM-constrained system, highlighting its ability to handle complex coding tasks locally. The user has an AMD 7700 XT, 32GB DDR4 RAM, and a Ryzen 5 5600. They ran the model using the i1-q4_k_s quant with all 40 layers offloaded to GPU and a 128k context, using flash attention and Q8_0 KV quantization. The model fixed complex bugs in a web scraper app and updated a project README with screenshots, outperforming previous models like Gemma 3, Gemma 4, and Qwen 2.5 Coder. This suggests local AI coding is becoming practical even on modest hardware. Commenters suggest moving extra experts to CPU and fitting the KV cache on GPU to push speed beyond 30 t/s. Another user reports 35-40 tok/s on similar hardware, indicating room for further optimization.

GoldenX86 suggests moving the extra experts to the CPU while keeping the KV cache on the GPU, which can push speed past 30 tokens/second. The usual rationale is that only a few experts are active for any given token. Serving the overflow expert weights from system RAM therefore costs less than spilling attention or the KV cache off the GPU.

AI_Enhancer reports 35-40 tokens/second, noting that prompt complexity significantly affects response time. Even with complex prompts, they observed the model’s thinking time topping out at about 1 minute.

cmplx17 shares a comparison with Claude, noting that Qwen 3.6 exceeded expectations for a local model. This suggests local models are becoming more competitive with cloud-based ones.
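The standard KV cache size formula makes the 128k-context-plus-Q8_0-KV choice easier to reason about. It multiplies 2 (one K and one V) × attention layers × KV heads × head dimension × context length × bytes per element. The layer and head numbers below are hypothetical placeholders, not Qwen 3.6 35B-A3B's real configuration. Models with linear-attention layers would keep an even smaller cache. Q8_0's per-element cost follows from llama.cpp storing each block of 32 int8 values with one fp16 scale.

```python
GIB = 1024 ** 3

# Bytes per cached element. Q8_0 packs 32 int8 values plus one fp16 scale: 34 bytes per 32 values.
BYTES_PER_ELEMENT = {"f16": 2.0, "q8_0": 34 / 32}


def kv_cache_bytes(n_attn_layers: int, n_kv_heads: int, head_dim: int, n_ctx: int, cache_type: str) -> float:
    # Factor 2: every attention layer stores both a K and a V vector per KV head per token.
    elements = 2 * n_attn_layers * n_kv_heads * head_dim * n_ctx
    return elements * BYTES_PER_ELEMENT[cache_type]


if __name__ == "__main__":
    # Hypothetical GQA config, for illustration only.
    cfg = dict(n_attn_layers=40, n_kv_heads=4, head_dim=128, n_ctx=128 * 1024)
    for cache_type in ("f16", "q8_0"):
        print(cache_type, kv_cache_bytes(**cfg, cache_type=cache_type) / GIB, "GiB")
    # f16 10.0 GiB
    # q8_0 5.3125 GiB
```

Under these placeholder numbers, Q8_0 roughly halves the cache compared with f16. That saved VRAM is what lets the cache stay on the GPU when expert weights move to system RAM.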

2. Hardware and Infrastructure Setups

16x Spark Cluster (Build Update) (Activity: 1024): The image depicts a 16x Spark Cluster setup, which is part of a high-performance computing build using NVIDIA’s DGX Spark units. Each Spark runs on NVIDIA’s Ubuntu and connects to an FS N8510 switch via QSFP56 cables, achieving dual-rail connectivity with up to 200 Gbps throughput. The setup is designed to maximize unified memory capacity, crucial for tasks like serving GLM-5.1-NVFP4 models. The cluster is intended for prefill tasks, with plans to integrate M5 Ultra Mac Studios for decode operations. The build emphasizes efficient memory use within the NVIDIA ecosystem, contrasting with alternatives like the RTX Pro 6000 Blackwell, which offers different trade-offs in terms of power and performance. One commenter suggests considering the RTX Pro 6000 Blackwell as an alternative, noting its potential for similar performance with possibly easier management and power considerations. Another commenter appreciates the build’s approach to addressing Mac prefill issues with a robust cluster setup.

flobernd discusses the potential benefits of using 8x RTX Pro 6000 Blackwell GPUs instead of the current setup. They highlight that this alternative could offer a similar price point with the advantage of a single-host configuration. Despite higher power usage, the RTX Pro 6000 Blackwell can efficiently run models like Kimi26 and GLM51-nvfp4 with excellent prefill and over 100 tokens per second, even with PCIe bottlenecks, which are also present in the current setup due to 200G NICs.

TheRealSol4ra questions the choice of the current setup over using 8 RTX 6000 Pro GPUs, which provide 768GB of VRAM. They argue that this amount of VRAM is sufficient for running models at FP8 or Q6 precision, and while the current setup can run any model, it might be limited to 15-25 tokens per second, which is less efficient compared to the RTX 6000 Pro configuration.
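The Spark-vs-RTX-Pro argument is largely a memory-capacity calculation. Here is a rough sketch. It assumes 128 GB of unified memory per DGX Spark and 96 GB per RTX Pro 6000 Blackwell. It approximates NVFP4 as 4.5 bits per weight: 4-bit values plus one 8-bit scale per 16-value block, ignoring the small per-tensor scale. The 1T-parameter model and the 80% usable fraction (leaving room for KV cache and activations) are hypothetical inputs, not GLM-5.1's actual size. GB is treated loosely as decimal.

```python
CAPACITY_GB = {
    "16x DGX Spark": 16 * 128,            # unified memory
    "8x RTX Pro 6000 Blackwell": 8 * 96,  # VRAM
}

BITS_PER_WEIGHT = {
    "nvfp4": 4 + 8 / 16,  # 4-bit values + one 8-bit scale per 16-value block
    "fp8": 8.0,
    "bf16": 16.0,
}


def weights_gb(n_params: float, fmt: str) -> float:
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(n_params: float, fmt: str, capacity_gb: float, usable: float = 0.8) -> bool:
    """Leave (1 - usable) of memory for KV cache, activations, and runtime overhead."""
    return weights_gb(n_params, fmt) <= capacity_gb * usable


if __name__ == "__main__":
    n_params = 1e12  # hypothetical 1T-parameter model
    for fmt in BITS_PER_WEIGHT:
        verdicts = {name: fits(n_params, fmt, cap) for name, cap in CAPACITY_GB.items()}
        print(f"{fmt}: {weights_gb(n_params, fmt):.0f} GB of weights -> {verdicts}")
```

This only models capacity. The thread's 15-25 versus 100+ tokens per second comparison is about speed, which this sketch does not attempt to capture.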

AMD Halo Box (Ryzen 395 128GB) photos (Activity: 1033): The AMD Halo Box, featuring a Ryzen 395 processor and 128GB of RAM, was showcased running on Ubuntu. The unit includes a programmable light strip, enhancing its customization capabilities. However, it lacks a CD-ROM drive, which might be a consideration for some users. A notable comment highlights a desire for increased memory bandwidth in AMD products, suggesting that this is a recurring request among users.

FoxiPanda highlights a critical performance aspect by suggesting that AMD should focus on increasing memory bandwidth. This is a significant factor in improving overall system performance, especially for high-demand applications that rely on rapid data access and processing.

OnkelBB points out the lack of a fast port for clustering, which could limit the device’s utility in high-performance computing environments where multiple units are networked together to work on complex tasks. This could be a drawback for users looking to leverage the device in a clustered setup.

3. Other notable frontier-model / infra posts

Open Models - April 2026 - One of the best months of all time for Local LLMs? (Activity: 767): The image is a bar chart showing the parameter sizes of various local Large Language Models (LLMs) as of April 2026, highlighting a significant month for local LLMs. The chart features models like “DeepSeek-V4-Pro-Max” with 1600 billion parameters, and others like “Kimi-K2.6,” “MiMo-V2.5-Pro,” and “Ling-2.6-1T,” each with 1000 billion parameters. Notably, “MiniMax-M2.7” is absent from the graph because its license changed from MIT to Non-Commercial. One commenter joked about running the 1600B model on a Raspberry Pi, highlighting the impracticality of such a large model on limited hardware. Another questioned whether “DeepSeek-V4-Pro-Max” can realistically be deployed locally at all.

The Raspberry Pi remark should be read as a joke, not as evidence of new efficiency gains. Even at an aggressive 2 bits per weight, 1600B parameters need roughly 400GB for the weights alone, far beyond any Raspberry Pi’s memory.

Another commenter brought up Qwen3.5-122B-A10B, a much smaller MoE model (122B total, 10B active parameters).

A further comment called parameter count a ‘dumb’ metric. This reflects an ongoing debate over whether models should be compared on measured accuracy, efficiency, and real-world usefulness rather than size.

DeepSeek released ‘Thinking-with-Visual-Primitives’ framework (Activity: 345): DeepSeek, in collaboration with Peking University and Tsinghua University, has introduced a novel multimodal reasoning framework called ‘Thinking with Visual Primitives’. This framework elevates spatial tokens, such as coordinate points and bounding boxes, to serve as the “minimal units of thought” in the model’s chain-of-thought process. This approach allows the model to directly interleave these spatial tokens during reasoning, effectively enabling it to “point” to specific locations within an image while processing information. The framework was initially released on GitHub but was quickly made private, likely due to internal data or paths needing removal. GitHub Repository. Commenters noted that this approach could significantly enhance open models by enforcing spatial awareness and preventing attention drift, a common issue with complex images. There is anticipation for integrating this framework with models like Llama once the repository is available again.

The ‘Thinking-with-Visual-Primitives’ framework by DeepSeek introduces a novel approach where models output raw bounding box coordinates as tokens, enhancing spatial awareness and reducing attention drift in complex images. This method contrasts with traditional natural language descriptions, which can be vague and lead to inaccuracies in spatial reasoning. The framework’s potential integration with models like Llama could significantly improve their performance once the code is publicly available again.

DeepSeek’s release strategy involves initially making their repositories public and then quickly setting them to private, possibly to remove sensitive internal data. This approach allows them to bypass formal review processes while still gaining community attention and credit. The strategy also relies on the community to mirror and fork the repositories, ensuring the code remains accessible despite the temporary privacy.

The framework’s concept aligns with existing efforts by companies like Google, which have explored similar ideas, though documentation and research on such methods have been sparse. The use of visual primitives for spatial reasoning could represent a significant advancement in open models, potentially influencing future developments in AI spatial awareness and reasoning capabilities.
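To make "spatial tokens as units of thought" concrete, here is a minimal sketch of a reasoning trace that interleaves text with bounding-box tokens, plus a parser that recovers them. The `<box>(x0,y0),(x1,y1)</box>` markup and every name below are hypothetical: the source does not describe the framework's actual token format, and a real model would emit these tokens itself rather than have Python splice them in.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class Box:
    """Axis-aligned box in pixels: (x0, y0) top-left, (x1, y1) bottom-right."""
    x0: int
    y0: int
    x1: int
    y1: int

# Hypothetical markup; the framework's real spatial-token format is not given in the source.
BOX_PATTERN = re.compile(r"<box>\((\d+),(\d+)\),\((\d+),(\d+)\)</box>")

def box_token(box: Box) -> str:
    """Serialize a box as an inline spatial token inside a reasoning step."""
    return f"<box>({box.x0},{box.y0}),({box.x1},{box.y1})</box>"

def interleave(steps):
    """Build a chain-of-thought string from (text, box-or-None) pairs."""
    return "\n".join(text if box is None else f"{text} {box_token(box)}" for text, box in steps)

def extract_boxes(trace: str):
    """Recover every region the trace 'pointed at', in order of appearance."""
    return [Box(*map(int, m.groups())) for m in BOX_PATTERN.finditer(trace)]

trace = interleave([
    ("The red mug is on the left shelf", Box(40, 120, 95, 180)),
    ("A second mug sits near the window", Box(410, 90, 460, 150)),
    ("So there are two mugs.", None),
])
print(trace)
print(extract_boxes(trace))
```

Grounding each claim in explicit coordinates, rather than in phrases like "on the left", is the property commenters credit with reducing attention drift.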

Where the goblins came from (Activity: 359): The OpenAI article titled “Where the Goblins Came From” discusses the challenges and methodologies in training large-scale AI models, particularly focusing on the implications of embedding vast amounts of knowledge into model parameters. The discussion references Sutton’s Bitter Lesson, which emphasizes the superiority of scalable compute over hand-crafted algorithms. The article critiques the approach of embedding extensive prior knowledge into models, suggesting that this contradicts Sutton’s advice to focus on systems that discover patterns autonomously. The latest OpenAI model, estimated at 10 trillion parameters, is highlighted as an example of this approach, raising questions about the efficiency and necessity of such scale in AI training. The comments debate the interpretation of Sutton’s Bitter Lesson, with some arguing that OpenAI’s approach of embedding extensive knowledge into models contradicts Sutton’s emphasis on scalable compute for autonomous pattern discovery. Others suggest that alternative methods, such as knowledge graphs and reasoning engines, could avoid embedding unnecessary information like ‘goblins’ into models.

Luke2642 discusses the misinterpretation of Sutton’s ‘bitter lesson’ in AI research, emphasizing that Sutton advocated for scaling compute to enable systems to discover patterns independently, rather than embedding extensive prior knowledge into models. This contrasts with the approach of large models like OpenAI’s, which use massive parameter counts (e.g., 10 trillion) to encode vast amounts of human knowledge, including trivial data like ‘goblins’. This approach is critiqued as inefficient compared to potentially more effective methods like knowledge graphs or reasoning engines.

Luke2642 also points to Chinese researchers achieving similar or better results with less compute, suggesting they may have developed superior algorithms or architectures. This raises questions about the current trend of scaling parameters and data in AI models, suggesting that alternative methods could avoid the pitfalls of embedding unnecessary information, such as ‘goblins’, into AI systems.

“What do you guys even use local LLMs for?” Me: A lot (Activity: 469): The image is a dashboard from Grafana, displaying metrics related to the usage of local Large Language Models (LLMs) over a six-hour period. It tracks various statistics such as total tokens used, generation speed, and throughput, providing insights into the performance and utilization of different models and applications. The dashboard highlights that applications like “Hermes” and “Vane” have the highest usage counts, indicating their significant role in the user’s local LLM ecosystem. The user has implemented a system to log usage via Prometheus, which helps in monitoring and optimizing the performance of these models. One commenter notes that the token usage is substantial, but suggests that it would need to be in the billions to be considered ‘a lot.’ Another commenter discusses the cost-saving benefits of using local LLMs for initial code review, which reduces the need for expensive API calls.

spencer_kw discusses using a local LLM, specifically ‘qwen’, for code review before sending code to an API model like ‘opus’. This approach catches about 60% of obvious mistakes, significantly reducing API usage and saving approximately $80/month. This highlights the cost-effectiveness of local LLMs for pre-processing tasks ahead of more expensive cloud-based models.

CalligrapherFar7833 suggests using local LLMs for initial data filtering, such as detecting relevant frames before processing with a vision LLM. This strategy can optimize performance by reducing the amount of unnecessary data processed by more resource-intensive models, thereby improving efficiency and potentially lowering computational costs.

Nyghtbynger emphasizes the importance of monitoring resource usage and costs when using local models. They find provider dashboards useful for tracking metrics like money spent and cache usage, which are critical for managing the efficiency and cost-effectiveness of local LLM deployments.
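The poster logs usage via Prometheus. As a rough sketch of what a small custom exporter could expose, the code below keeps per-app token counters and renders them in Prometheus's plain-text exposition format using only the standard library. The metric name `llm_tokens_total`, the `app`/`model` labels, and the model name `qwen-local` are illustrative assumptions; only the app names Hermes and Vane come from the post.

```python
from collections import defaultdict

class TokenCounter:
    """Minimal in-process counter keyed by (app, model) label pairs."""

    def __init__(self, name: str, help_text: str):
        self.name = name
        self.help_text = help_text
        self.values = defaultdict(int)

    def inc(self, app: str, model: str, tokens: int) -> None:
        if tokens < 0:
            raise ValueError("counters can only increase")
        self.values[(app, model)] += tokens

    @staticmethod
    def _escape(value: str) -> str:
        # The text format requires escaping backslash, double quote, and newline in label values.
        return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")

    def render(self) -> str:
        lines = [f"# HELP {self.name} {self.help_text}", f"# TYPE {self.name} counter"]
        for (app, model), total in sorted(self.values.items()):
            labels = f'app="{self._escape(app)}",model="{self._escape(model)}"'
            lines.append(f"{self.name}{{{labels}}} {total}")
        return "\n".join(lines) + "\n"

tokens = TokenCounter("llm_tokens_total", "Tokens generated by local models.")
tokens.inc("Hermes", "qwen-local", 1200)
tokens.inc("Vane", "qwen-local", 300)
tokens.inc("Hermes", "qwen-local", 800)
print(tokens.render())
```

Prometheus scrapes this text over HTTP (conventionally from a `/metrics` path), and Grafana then queries Prometheus. In practice the official `prometheus_client` Python package handles counters and serving; hand-rolling the format is mainly useful for seeing what such a dashboard is built on.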

Less Technical AI Subreddit Recap

/r/Singularity, /r/Oobabooga, /r/MachineLearning, /r/OpenAI, /r/ClaudeAI, /r/StableDiffusion, /r/ChatGPT, /r/ChatGPTCoding, /r/aivideo

1. AI Model Releases and Benchmarks

GPT5.5 slightly outperformed Mythos on a multi-step cyber-attack simulation. One challenge that took a human expert 12 hrs took GPT-5.5 only 11 min at a $1.73 cost (Activity: 873): GPT-5.5 slightly outperformed Mythos in a multi-step cyber-attack simulation, completing in 11 minutes a task that took a human expert 12 hours, at a cost of $1.73. This evaluation, detailed in a blog by AISI, highlights the model’s efficiency and cost-effectiveness in handling complex cybersecurity challenges. The NCSC blog discusses the implications of such advancements for cyber defense strategies, emphasizing the need for readiness against AI-driven threats. Commenters express skepticism about the reported cost, suggesting it should be closer to $70, and speculate on potential impacts such as the exposure of government backdoors, which could lead to significant security concerns.

peakedtooearly suggests that the claim “Mythos is too dangerous to release” might have been a strategic move by Anthropic to mask computational limitations rather than genuine safety concerns. This implies that the performance of GPT-5.5, which outperformed Mythos, could be a result of more efficient compute usage or advancements in model architecture.

Many_Increase_6767 questions the reported cost of $1.73 for 11 minutes of computation by GPT-5.5, suggesting it should be closer to $70. This discrepancy raises questions about the pricing model or efficiency of the compute resources used by GPT-5.5, indicating a potential misunderstanding or miscommunication about the cost structure.

deleafir expresses surprise that GPT-5.5, which is reportedly on par with Mythos, did not cause significant disruptions upon release, as Anthropic had previously warned about the potential dangers of such powerful models. This comment highlights the ongoing debate about the balance between AI capabilities and safety concerns.

OpenAI’s Sebastien Bubeck: [LLM] models are able to surpass humans [researchers] and ask [research] questions (Activity: 531): The image is a tweet quoting Sebastien Bubeck from OpenAI, highlighting that their LLM models are surpassing human researchers by identifying mistakes in research papers and asking research questions. This suggests a significant advancement in AI capabilities, where models are not only responding to queries but also generating insightful questions, potentially transforming research methodologies. The discussion in the comments emphasizes the importance of training models to ask questions and the exploration of different reasoning styles to enhance problem-solving capabilities. One comment highlights the potential of training models to ask questions, suggesting that the current limitations are due to inadequate training rather than inherent model deficiencies. Another comment expresses skepticism about the claims, noting a lack of transparency in sharing results.

The comment by sckchui highlights the importance of training methodologies in the performance of LLMs. It suggests that the current limitations in LLMs’ ability to ask questions stem from inadequate training focused on answering rather than questioning. The comment also notes emerging research trends that involve training models with diverse reasoning styles and leveraging the conflicts between these styles to enhance problem-solving capabilities.

pavelkomin expresses skepticism about the claims made by OpenAI, pointing out a lack of transparency in sharing results. The comment suggests that while AI advancements are likely, the communication style resembles marketing hype without providing tangible evidence or access to the breakthroughs being claimed. This reflects a broader concern about the openness and verifiability of AI research progress.

An interactive semantic map of the latest 10 million published papers [P] (Activity: 245): The post introduces an interactive semantic map created from the latest 10 million papers sourced from OpenAlex. The map uses SPECTER 2 embeddings on titles and abstracts, with dimensionality reduction via UMAP and Voronoi partitioning on density peaks to form semantic neighborhoods. It supports keyword and semantic queries and includes an analytics layer for ranking institutions, authors, and topics. The map is accessible at The Global Research Space. A commenter inquires about the Voronoi partitioning method, suggesting alternatives like HDBSCAN for density-aware clustering, and asks for more details on the hierarchical nature of the partitioning and the labeling process. There is also interest in whether the code is open source.

TheEsteemedSaboteur inquires about the Voronoi partitioning procedure used in the semantic map, suggesting alternatives like HDBSCAN for density-aware clustering. They note the hierarchical nature of the Voronoi cells and request more details on the labelling process and whether the code is open source.

kamilc86 raises questions about the labeling behavior across different zoom levels in the map, noting that at wider views, cluster names are clear, but zooming in reveals empty spaces without labels. They also question the choice of using SPECTER 2 for embeddings, asking if general-purpose embedders were considered as a baseline, and inquire about the computational feasibility of running UMAP on 10 million vectors.

The discussion includes technical considerations such as the choice of SPECTER 2, which is specifically trained on scientific text, and the practical challenges of using UMAP on a large dataset of 10 million vectors, questioning the methods used to make the process tractable.
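The post describes UMAP projection followed by Voronoi partitioning on density peaks. The sketch below illustrates only the second step, on points assumed to be already projected to 2-D. It estimates density by counting neighbors within a radius, greedily picks well-separated dense points as seeds, and assigns every point to its nearest seed, which is exactly membership in that seed's Voronoi cell. The radius, separation, and density threshold are hypothetical knobs, and this is not the author's code, which the thread notes may not be public.

```python
import math

def local_density(points, radius):
    """Count neighbors within `radius` of each 2-D point (brute force, O(n^2))."""
    r2 = radius * radius
    return [
        sum(1 for j, (xj, yj) in enumerate(points)
            if j != i and (xi - xj) ** 2 + (yi - yj) ** 2 <= r2)
        for i, (xi, yi) in enumerate(points)
    ]

def density_peaks(points, radius, min_sep, min_density=1):
    """Greedily pick the densest points as seeds, skipping any within `min_sep` of an existing seed."""
    dens = local_density(points, radius)
    order = sorted(range(len(points)), key=lambda i: -dens[i])  # stable sort: ties keep input order
    seeds = []
    for i in order:
        if dens[i] < min_density:
            break  # the rest are even sparser (sorted), so treat them as noise
        if all(math.dist(points[i], points[s]) >= min_sep for s in seeds):
            seeds.append(i)
    return seeds

def voronoi_assign(points, seeds):
    """Label each point with its nearest seed, i.e. the Voronoi cell it falls in."""
    return [min(seeds, key=lambda s: math.dist(p, points[s])) for p in points]

points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (-0.1, 0.0), (0.0, -0.1),
          (5.0, 5.0), (5.1, 5.0), (5.0, 5.1), (4.9, 5.0), (5.0, 4.9)]
seeds = density_peaks(points, radius=0.15, min_sep=1.0)
labels = voronoi_assign(points, seeds)
print(seeds, labels)
```

The brute-force neighbor count is O(n²): fine for a toy, but exactly the kind of step that becomes intractable at 10 million points. A real pipeline would need a spatial index or approximate nearest-neighbor search, and, as TheEsteemedSaboteur suggests, HDBSCAN is a common density-aware alternative.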

Claude is my SEO strategist, content engine, and CTO. From 0 to 10,000 active users in 6 weeks, $0 on ads. (Activity: 1039): The image in the Reddit post is a data analytics dashboard that visually represents the growth metrics of the marketplace Agensi, which was built using Claude and Lovable. The dashboard highlights significant increases in user engagement, showing 10,000 active users with a 263.3% increase and 9,900 new users with a 262.0% increase over the last 30 days. The event count is 73,000, a 197.6% increase, and a line graph illustrates the upward trend in user activity. This growth is attributed to the strategic use of Claude for SEO, content strategy, and AEO (answer engine optimization), which involves analyzing Google Search Console data to identify keyword gaps and optimize content structure for AI engines. Some comments express skepticism about the authenticity and originality of the content, suggesting it might be ‘generic AI slop’ or spam, and questioning whether the post itself was written by AI.

I wasn’t ready for DeepSeek V4 (Activity: 176): The image showcases a dashboard for DeepSeek V4, highlighting its cost efficiency and performance metrics. The dashboard displays a total spend of $1,050.86 and cache savings of $3,351.43, indicating significant cost savings. It compares different models like DeepSeek Chat, DeepSeek V4 Pro, and DeepSeek V4 Flash, with the latter showing superior performance in terms of caching efficiency. This suggests that DeepSeek V4 models are highly efficient and cost-effective, potentially outperforming other models like Claude in terms of speed and efficiency. Commenters note that DeepSeek V4 models are revolutionary in terms of price, speed, and efficiency, yet they haven’t gained widespread recognition. There’s a sentiment that the market hasn’t fully realized the potential of these models.

DeepSeek V4 models are noted for their significant improvements in price, speed, and efficiency, which could potentially disrupt the market. However, there seems to be a lack of awareness or acknowledgment of these advancements among users, as they continue to accept high costs as the norm.

The V4 flash model is highlighted as a preferred choice for many users due to its performance. This suggests that the model offers a balance of speed and efficiency that makes it suitable for a wide range of applications, becoming a default option for users familiar with AI capabilities.

Despite the advancements in DeepSeek V4, there is a perception that users have become accustomed to the general intelligence of AI models, making it challenging to differentiate based solely on intelligence. This indicates a shift in user expectations towards other factors like cost and speed.

The Significance of Google’s recent TPU 8t and TPU 8i (Activity: 104): Google’s recent TPU 8t and TPU 8i chips demonstrate significant advancements in both cost and performance efficiency. The TPU 8t shows a 170% to 180% gain in training cost-performance and a 124% gain in training power efficiency, while the TPU 8i offers an 80% gain in inference cost-performance and a 117% gain in inference power efficiency. Networking improvements include a 300% increase in data center network bandwidth and a 56% reduction in inference network latency. Memory enhancements feature a 200% increase in on-chip SRAM for the TPU 8i and a 50% increase in HBM capacity for inference. These improvements are expected to significantly reduce costs and enhance performance for Google’s Gemini 3.1 Pro and future AI models, facilitating the training of trillion-parameter, multimodal AI systems. Source: Google Cloud Blog. Commenters are impressed by the rapid iteration behind these gains and are curious about the deployment timeline for future Gemini models. There is also a call to raise the usage quotas for Gemini 3.1 Pro and AI Studio, reflecting user demand for more access.

Devs using Qwen 27B seriously, what’s your take? (Activity: 234): Qwen 27B is being evaluated by developers for its coding capabilities, particularly in “Codex style” tasks. Users report that while it may not be as creative as larger models like GPT-5.5, it excels at following instructions and delivers solid results on specific tasks such as debugging, refactoring, and navigating codebases. It is noted as more reliable than models like Opus 4.6, which reportedly hallucinates more often. The model is not designed to handle full backend and frontend development in one go, but it is appreciated for executing iterative tasks well when given detailed specifications. On a Strix Halo with 128GB, Qwen 27B at Q8 achieves 10 t/s, whereas Qwen 3.6 35B A3B at Q8, a larger but sparse mixture-of-experts model with only about 3B active parameters per token, achieves 44 t/s. This suggests that the dense 27B model is held back by memory bandwidth on this hardware, so faster sparse models may be preferred for iterative tasks. Commenters highlight that the effectiveness of Qwen 27B depends more on the harness and method used than on model size itself. Some developers prefer smaller models for iterative tasks because they are more economical and deliver similar quality when given detailed specifications. The model is praised for raising the bar for agentic models in its parameter range, setting a new standard for competitors.

H_DANILO highlights that Qwen 27B is more reliable than Opus 4.6, particularly in avoiding hallucinations during tasks like resolving merge conflicts. While Qwen isn’t highly creative, it excels at following instructions and delivering solid results, making it suitable for structured tasks rather than creative ones.

edsonmedina discusses the efficiency of using smaller models with iterative attempts and detailed specs, noting that the harness and method often matter more than model size. They run Qwen 3.6 35B A3B MoE Q8_K_XL on a Strix Halo 128GB, getting 10 t/s with 27B Q8 versus 44 t/s with 35B Q8, and conclude that memory bandwidth, rather than memory capacity, is the limiting factor.

kaliku appreciates Qwen 27B for its ability to handle boilerplate code and follow examples effectively, especially within a well-designed TDD loop. They note that Qwen 27B sets a high standard for agentic models in its parameter range, suggesting that it raises the bar for future models from competitors like Mistral.
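A back-of-the-envelope model shows why the 35B MoE can outrun the dense 27B on the same machine. When single-stream decoding is memory-bandwidth-bound, each generated token requires streaming roughly every active weight from memory once, so tokens/s is capped near bandwidth divided by bytes read per token. This sketch assumes about 1 byte per parameter at Q8 and about 3B active parameters for the A3B model (inferred from its name), and it uses a placeholder bandwidth figure; it ignores KV-cache reads, compute, and runtime overhead.

```python
def decode_upper_bound(bandwidth_gb_s: float, active_params_b: float,
                       bytes_per_param: float = 1.0) -> float:
    """Tokens/s ceiling if every active weight is read from memory once per generated token."""
    gb_per_token = active_params_b * bytes_per_param  # billions of params x bytes/param = GB
    return bandwidth_gb_s / gb_per_token

BANDWIDTH = 200.0  # hypothetical GB/s; substitute your machine's measured figure
dense = decode_upper_bound(BANDWIDTH, active_params_b=27.0)  # dense 27B: all weights are active
moe = decode_upper_bound(BANDWIDTH, active_params_b=3.0)     # 35B-A3B: ~3B active per token
print(f"dense <= {dense:.1f} t/s, MoE <= {moe:.1f} t/s, ratio {moe / dense:.1f}x")
```

The ratio between the two ceilings (27/3 = 9x) does not depend on the bandwidth figure. The reported 44 vs 10 t/s is about 4.4x, smaller than the ideal 9x, which is plausible given the overheads this model ignores.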

SenseNova-U1 just dropped — native multimodal gen/understanding in one model, no VAE, no diffusion (Activity: 293): SenseNova-U1 introduces a novel approach to multimodal generation and understanding by integrating text rendering directly into images, overcoming limitations of diffusion models that lack language pathways. This model excels in generating complex visual outputs like infographics and annotated diagrams by processing semantic content rather than latents. It also supports image editing with reasoning, allowing for nuanced transformations such as converting an image to a watercolor style while maintaining composition. Additionally, it enables interleaved text and image generation, producing coherent outputs in a single pass. The model is available on GitHub, supports a resolution of 2048x2048, and has 8B parameters under the Apache 2.0 license. One commenter noted the model’s technical specifications, including its 2048x2048 resolution and 8B parameters, and expressed interest in integrating it into other platforms. Another user reported disappointing image quality in initial tests, suggesting the model’s strengths may lie in more complex tasks than simple text-to-image generation.

The SenseNova-U1 model is released under the Apache 2.0 license, featuring a resolution of 2048x2048 and 8 billion parameters. The comment also references lightx2v; the model itself is notable for not relying on traditional components like a VAE or diffusion for multimodal generation and understanding.

A user reported that the image quality of SenseNova-U1 was underwhelming in their tests, particularly when using photorealistic prompts for text-to-image generation. This suggests that while the model may have strengths in other areas, its performance in generating high-quality images might not meet expectations in certain scenarios.

There is interest in running a local, uncensored version of SenseNova-U1, indicating a demand for more control and privacy in using AI models. This reflects a broader trend in the AI community towards decentralization and user autonomy.

2. AI Tools and Workflows

That robot demo almost turned into a nightmare (Activity: 2531): A recent robot demonstration nearly resulted in an accident when a child stood too close to a robot performing martial arts-like movements. The incident highlights potential safety concerns in human-robot interaction, especially in public demonstrations where bystanders may not be aware of the risks. This underscores the importance of implementing strict safety protocols and barriers to prevent such occurrences in future demonstrations. Commenters expressed concern over the lack of parental supervision and the potential dangers of allowing children near active robots. The incident sparked a discussion on the need for better safety measures and awareness during robot demonstrations.

ICML 2026 Decision [D] (Activity: 1124): The post discusses the anticipation surrounding the upcoming publication of decisions for ICML 2026. The community is eagerly awaiting updates, with many users humorously expressing their impatience by frequently refreshing platforms like OpenReview. This reflects the high level of engagement and anxiety typical in the academic community during conference decision periods.

OpenAI explains “Where the goblins came from” (Activity: 519): OpenAI’s GPT-5.1 began incorporating ‘goblin’ metaphors due to a reinforcement learning mechanism that rewarded creative language, particularly in ‘nerdy’ contexts. This behavior propagated through subsequent models as they were trained on outputs from earlier versions, leading to an amplification of this tendency. OpenAI has since retired the ‘Nerdy’ personality and adjusted training protocols to address this issue, emphasizing the need for careful auditing of model behaviors to avoid unintended consequences. For more details, see the original article. A debate emerged around Rich Sutton’s ‘bitter lesson’, which advocates for scaling compute over embedding knowledge into models. Critics argue that OpenAI’s approach of embedding vast amounts of knowledge, including ‘goblins’, contradicts Sutton’s philosophy. Some suggest that more efficient algorithms or architectures, as demonstrated by Chinese researchers, could be a better path forward.

The_Right_Trousers highlights a phenomenon where GPT-5.1 began incorporating ‘goblin metaphors’ in its responses due to reinforcement from human feedback or earlier models. This behavior was then propagated and amplified in subsequent models, illustrating a feedback loop in AI training where quirks can become entrenched features over time.

Luke2642 critiques the current AI model development strategy, referencing Sutton’s ‘bitter lesson’ which emphasizes the importance of compute over hand-crafted algorithms. They argue that OpenAI’s approach of scaling parameters and data to embed extensive knowledge, including trivial elements like ‘goblins’, contradicts Sutton’s advice to focus on systems that discover patterns independently. This critique suggests a misalignment between theoretical AI principles and practical implementations.

Luke2642 also contrasts OpenAI’s strategy with Chinese researchers who have reportedly achieved more efficient results with less compute or better algorithms. This points to a potential inefficiency in the current trend of scaling AI models to trillions of parameters, questioning the necessity and effectiveness of such an approach when simpler, more efficient methods might exist.
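The feedback loop The_Right_Trousers describes can be illustrated with a toy model; this is an illustration only, not OpenAI's analysis. Suppose a fraction p of one generation's outputs contains the quirk, and a reward bonus b upweights those outputs before the next model is trained on them. Then the quirk's odds p/(1 - p) are multiplied by (1 + b) every generation, so even a tiny initial rate grows geometrically. The initial rate and bonus below are hypothetical.

```python
def next_generation(p: float, bonus: float) -> float:
    """Share of quirky outputs after reward-weighted selection of one generation's samples."""
    weighted_quirk = p * (1.0 + bonus)
    return weighted_quirk / (weighted_quirk + (1.0 - p))

def simulate(p0: float, bonus: float, generations: int):
    """Quirk share per generation, starting from p0; each model trains on the previous one's output."""
    history = [p0]
    for _ in range(generations):
        history.append(next_generation(history[-1], bonus))
    return history

for gen, share in enumerate(simulate(p0=0.001, bonus=0.5, generations=10)):
    print(f"gen {gen:2d}: {share:.4f}")
```

With no bonus the share stays fixed, which is why the fix described in the post targets the reward signal and the training data rather than any single model.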

Thanks for the advice Claude (Activity: 3326): The image is a non-technical meme or humorous post, featuring a text message that humorously suggests a reading plan, likely from an AI or virtual assistant named Claude. The message advises a structured reading approach, starting with the book “Sapiens,” and suggests reading 20 pages tonight. The context implies a casual, motivational tone rather than a technical or instructional one. The comments humorously discuss the AI’s relaxed attitude towards piracy, with users joking about the AI’s training data being sourced from pirated content.

When you’ve got money to burn 😂 (Activity: 1764): The image is a meme that humorously depicts the concept of having ‘money to burn’ by showing a man in a suit lighting a cigar with a blowtorch. This exaggeration is meant to illustrate the idea of excessive wealth or spending. The comments do not provide any technical insights related to the image, but rather discuss unrelated topics such as the performance of a software version and the cost of a product. The comments reflect a humorous take on the performance of a software version, with users expressing frustration over its inability to perform simple tasks despite its cost, suggesting a disconnect between price and functionality.

How not to run an ai company (Activity: 934): The image depicts a status dashboard for an AI company, showing that all major services, including Claude.ai and its associated platforms, are experiencing a ‘Major Outage’ today. The uptime percentages over the past 90 days range from 98.69% to 99.88%, indicating frequent service disruptions. This suggests challenges in maintaining service reliability, which is often a characteristic of rapidly evolving tech companies prioritizing innovation over stability. Commenters highlight that such instability is typical for disruptive tech companies in their early stages, emphasizing a ‘go fast and break things’ approach. However, they note that this is not suitable for mature SaaS companies, indicating a need for improved stability as the company matures.

ant3k highlights the typical approach of disruptive tech companies, which often prioritize rapid innovation over stability, encapsulated in the phrase ‘go fast and break things.’ This approach is common in the early stages of tech development, where the focus is on pushing boundaries rather than ensuring consistent performance.

itswednesday differentiates between the operational strategies of cutting-edge AI companies and mature SaaS companies. Cutting-edge AI firms often embrace rapid iteration and experimentation, which contrasts with the stability and reliability expected from established SaaS businesses. This distinction underscores the varying expectations and operational models based on the company’s maturity and industry.

we-meet-again points out the challenges faced by AI companies when demand outpaces infrastructure capabilities. The comment suggests that even if a product is popular, financial constraints can hinder scaling efforts, leading to performance issues. This highlights the tension between user demand and the financial realities of maintaining and scaling tech infrastructure.

Claude: “I estimate this will take 1-2 weeks to complete” (Activity: 1023): The image is a meme and does not contain any technical content. It humorously depicts a scenario where a character named Claude estimates a task will take 1-2 weeks to complete, which is a common trope in project management and software development where time estimates are often underestimated or overly optimistic. The comments reflect a playful skepticism towards such estimates, with one suggesting that the task should be completed immediately instead of taking the estimated time.

bro this is too cheap i think finally i have a respect for the deepseek (Activity: 132): The post discusses the pricing of the DeepSeek V4 Flash model, which is perceived as surprisingly affordable compared to the Pro version, which remains expensive until later this year. A discount on the Pro version is also noted. Technical questions in the comments focus on how the model’s quality compares to other frontier models and whether the pricing advantage comes from cache hits, which lower the cost of input tokens rather than output tokens. Commenters debate how much of the DeepSeek V4 Flash’s cost-effectiveness depends on cache hits and how its quality compares to other models.

The discussion highlights the cost-effectiveness of DeepSeek’s disk-based KV cache system, which is noted for its robustness and reliability, lasting for hours compared to the typical 5-minute duration offered by most providers. This system significantly reduces costs by making cached input essentially free, enabling new innovations in the field.

There is a debate about the quality of DeepSeek V4, with some users expressing disappointment in its performance for creative writing tasks, despite its utility in role-playing and agentic applications. This suggests a trade-off between cost and performance, particularly in creative contexts.

Questions are raised about the pricing structure, with confusion over how DeepSeek can offer such low prices even with significant discounts and cache hits. This indicates a need for clarity on the pricing model and the potential use of older models to achieve these cost reductions.
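A simple cost model helps with the pricing questions in this thread. Under the usual context-caching scheme, input tokens served from the KV cache are billed at a lower rate than cache misses, while output tokens are billed the same either way, so cache hits cut input costs but not output costs. The per-million-token prices in this sketch are hypothetical placeholders, not DeepSeek's published rates.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    """USD per million tokens. The figures used below are hypothetical placeholders."""
    input_cache_hit: float
    input_cache_miss: float
    output: float

def request_cost(p: Pricing, input_tokens: int, output_tokens: int, hit_rate: float) -> float:
    """Cost of one request when `hit_rate` of its input tokens are served from the KV cache."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be in [0, 1]")
    hit = input_tokens * hit_rate
    miss = input_tokens - hit
    return (hit * p.input_cache_hit + miss * p.input_cache_miss
            + output_tokens * p.output) / 1_000_000

prices = Pricing(input_cache_hit=0.02, input_cache_miss=0.20, output=0.40)  # hypothetical
for rate in (0.0, 0.5, 0.95):
    cost = request_cost(prices, input_tokens=100_000, output_tokens=2_000, hit_rate=rate)
    print(f"hit rate {rate:.2f}: ${cost:.4f}")
```

Agentic workloads that resend long, mostly unchanged prompts every turn can reach very high hit rates, which is one plausible reason a cache that persists for hours matters so much for cost.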

this is actually sad (Activity: 2423): The image is a meme highlighting the perceived low engagement with Google’s Gemini app, as depicted by a humorous interaction between a user and the official Google Gemini account. Despite this portrayal, comments suggest that Gemini is valued for its unique capabilities, such as audio file analysis, which is beneficial for independent music producers. Users argue that Gemini, especially the pro version, is underrated and offers competitive features compared to other AI models like ChatGPT and Copilot, though it suffers from a negative public perception due to its association with Bard. Commenters emphasize that Gemini is underrated and has unique features that are not widely recognized, suggesting that its public perception is skewed by past associations rather than its current capabilities.

Gemini’s audio analysis capabilities are highlighted as a significant advantage, particularly for independent music producers who lack formal training in audio engineering. This feature sets it apart from other LLMs, offering unique utility in creative fields beyond text processing.

Public perception of Gemini is noted to be negatively influenced by its association with Bard, despite improvements. Users with experience across platforms argue that Gemini Pro surpasses competitors like ChatGPT and Copilot in certain aspects, suggesting that its reputation may not fully reflect its current capabilities.

Cost-effectiveness of Gemini is emphasized, with users noting it as the most economical option for general use. However, it may not be the best choice for developers, who often dominate discussions and may skew perceptions of its utility.

Sulphur 2 Uncensored Video Gen (Activity: 442): The team is developing an open-source, uncensored video generation model named Sulphur 2, leveraging the LTX-2.3 architecture. The model is trained on 125k videos, each 10 seconds long at 24 fps, with filtering applied only for illegal content and with 2D videos excluded to improve performance. It supports natural language captioning for video generation. The model is set for release on Hugging Face within a week, with a pre-release testing phase available via a Discord server. A commenter asked whether the model is a finetuned version of LTX-2.3, indicating interest in the technical specifics of the model’s architecture.

ANR2ME inquires if the model used is a finetuned version of LTX-2.3, suggesting a focus on the underlying architecture and potential modifications made to the base model. This implies a technical interest in the model’s capabilities and performance enhancements through finetuning.

eraser851 asks about the captioning process and available software for quickly captioning NSFW videos, indicating a technical interest in the tools and methodologies used for video processing and annotation. This highlights the importance of efficient workflows in handling sensitive content.

Technical-Rope2989 queries about the release of a distilled version, which suggests an interest in model optimization techniques such as distillation to reduce model size while maintaining performance. This reflects a focus on resource efficiency and deployment considerations.
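The stated dataset figures imply a total amount of training footage that is easy to work out, assuming every clip is exactly 10 seconds at 24 fps as described:

```python
CLIPS = 125_000        # "125k videos"
SECONDS_PER_CLIP = 10  # each clip is 10 seconds long
FPS = 24               # frames per second

total_seconds = CLIPS * SECONDS_PER_CLIP
total_hours = total_seconds / 3600
total_frames = total_seconds * FPS
print(f"{total_seconds:,} s ≈ {total_hours:.1f} h of video, {total_frames:,} frames")
```

That works out to 1,250,000 seconds (about 347 hours) and 30 million frames of video.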

Z-Anime - Full Anime Fine-Tune on Z-Image Base (Activity: 297): Z-Anime is a full fine-tune of Alibaba’s Z-Image Base, designed specifically for anime-style image generation. Rather than a LoRA merge, it is a full fine-tune of the S3-DiT (Single-Stream Diffusion Transformer) with 6 billion parameters. This model emphasizes rich diversity and strong controllability, supports full negative prompts, and is highly adaptable for further fine-tuning in anime contexts. The model was trained on a dataset of approximately 15,000 images focused on anime aesthetics. There is a debate regarding the training dataset, with some users emphasizing the importance of not training on AI-generated datasets, as doing so may affect the model’s originality and quality.

The discussion highlights a discrepancy in the claims about the Z-Anime model’s training process. While it is marketed as a ‘full anime fine-tune’ model, it appears to have been trained on a relatively small dataset of approximately 15,000 images. This raises questions about the model’s comprehensiveness and the potential overstatement in its promotional materials.

A user references a common guideline in AI model training: ‘Rule 1 - Don’t train on AI generated dataset.’ This suggests a concern about the quality and originality of the training data used for Z-Anime, as training on AI-generated content can lead to issues like data contamination and reduced model robustness.

The comment by -Ellary- implies a search for comparisons between Z-Anime and other models like ‘anima3,’ indicating a community interest in benchmarking Z-Anime against existing models to evaluate its performance and unique features. This reflects a broader trend in the AI community to critically assess new models against established benchmarks.
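The contrast between a full fine-tune and a LoRA merge can be made concrete with the standard LoRA update, W' = W + (alpha / r) · B · A, where B and A are low-rank factors. The sketch below merges a toy adapter into a weight matrix and compares how many parameters each approach trains per layer. It is a generic illustration, not Z-Anime's training code; the 4096 layer width and rank 16 are hypothetical values chosen for the example.

```python
# Generic LoRA merge sketch: W' = W + (alpha / r) * (B @ A).
# Matrices are plain lists of rows; sizes and values are illustrative only.


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
        for i in range(len(X))
    ]


def merge_lora(W, B, A, alpha):
    """Fold a low-rank update into W. W is d x k, B is d x r, A is r x k."""
    r = len(A)  # the adapter rank is the number of rows in A
    delta = matmul(B, A)
    scale = alpha / r
    return [
        [W[i][j] + scale * delta[i][j] for j in range(len(W[0]))]
        for i in range(len(W))
    ]


def trainable_params(d, k, r=None):
    """Params trained for one d x k layer: all d*k for a full fine-tune, r*(d+k) for LoRA."""
    return d * k if r is None else r * (d + k)


W = [[1, 0], [0, 1]]
B = [[1], [2]]  # d=2, r=1
A = [[3, 4]]    # r=1, k=2
print(merge_lora(W, B, A, alpha=1))        # [[4.0, 4.0], [6.0, 9.0]]
print(trainable_params(4096, 4096))        # 16777216 (full fine-tune)
print(trainable_params(4096, 4096, r=16))  # 131072 (LoRA, rank 16)
```

A LoRA only ever adds a rank-r correction to the frozen base weights, whereas a full fine-tune can move every weight, which is why the distinction matters for claims like "full anime fine-tune".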

Blind realism test, Z image turbo vs Klein 9B distilled (Activity: 232): The post presents a blind realism test comparing two AI models, Z Image Turbo and Klein 9B Distilled, across 10 images to determine which looks most realistic. The test includes images generated with and without LoRA (Low-Rank Adaptation) to assess its impact on realism. The generation prompt is a detailed description of a night portrait scene. The models and LoRAs used include Flux 2 Klein 9B Distilled and the Intarealism V2/V3 finetunes of Z Image Turbo, with links to their respective Civitai pages. The test aims to reduce bias by not revealing which model produced each image until afterward. Commenters noted that Klein 9B handles lens flares better than Z Image Turbo, which struggles with texture realism, particularly in stone patterns. The first image was widely regarded as the most realistic, with some suggesting it might be a real photo rather than AI-generated.

Hoodfu highlights a key difference between the models, noting that Klein 9B handles lens flares significantly better than Z Image Turbo, which struggles with rendering mottled stone patterns, particularly on gravel surfaces. This texture issue is a major drawback for Z Image Turbo, affecting its overall realism.

Puzzled-Valuable-985 provides a detailed breakdown of the models and LoRAs used in the test, noting that the most realistic image was created with Flux 2 Klein 9B Distilled plus a phone-photography LoRA. The prompt was designed to test realism with a complex night scene involving a car and a model, highlighting Klein 9B's strength at photorealistic results.

Desktop4070 offers a comparative analysis of the images, noting that Image 1 (Flux 2 Klein 9B Distilled) was the most convincing in terms of realism, while Image 3 (Z Image Turbo) had uncanny elements, particularly in the eyes. They also point out lighting inconsistencies in Image 10 and the overly professional appearance of Image 2, which detracts from its realism.
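The post withholds which model produced each image until viewers have weighed in. One way to run that kind of blind comparison, sketched here under assumptions since the poster's actual procedure is not described: shuffle the images with a seeded random generator, publish only neutral labels, and keep the label-to-source key private until the reveal. The file names below are hypothetical.

```python
import random


def blind_labels(sources, seed):
    """Shuffle image sources and assign neutral labels 'Image 1'..'Image N'.

    Returns the public label list and a private answer key to reveal after voting.
    """
    rng = random.Random(seed)  # seeded so the same key can be regenerated later
    shuffled = list(sources)   # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    key = {f"Image {i + 1}": src for i, src in enumerate(shuffled)}
    return list(key), key


# Hypothetical file names, one per model/LoRA combination.
sources = [
    "klein9b_distilled_lora.png",
    "klein9b_distilled_base.png",
    "zit_intarealism_v2.png",
    "zit_intarealism_v3.png",
]
public, key = blind_labels(sources, seed=2026)
print(public)  # ['Image 1', 'Image 2', 'Image 3', 'Image 4']
```

Seeding the generator means the poster can reproduce the key later without publishing it in advance.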

Multi Injection incoming (Activity: 224): The image shows the user interface of the “FLUX.2 Klein Identity Transfer Multi-Injection” tool, which aims to improve identity transfer by injecting reference features at multiple stages within targeted blocks. Injecting at both mid and post stages is meant to improve stability and flexibility. The tool is part of a broader effort to refine identity transfer techniques, and the author plans to release it as a plug-and-play preset for ease of use. The interface includes settings for model selection, subject masking, and block configuration, indicating a highly configurable workflow. One commenter expressed anticipation for the tool but hoped configurations could still be customized beyond the plug-and-play defaults, since fixed defaults might not suit every use case.

Enshitification raises a point about configuration flexibility in the upcoming Multi-Injection tool. They hope that even if a plug-and-play default configuration is introduced, users will still be able to modify the settings. This matters because fixed defaults may not be optimal in every scenario, so customizable configurations are needed to cover diverse use cases.

“Generate a website screenshot from the year 1000” (Activity: 1932): The image is a humorous and creative meme that imagines what a website might look like if it were designed in the year 1000. It features a medieval theme with elements like a castle and sections for proclamations and trade routes, blending historical motifs with modern web design elements such as navigation menus and buttons. This whimsical design serves as a playful commentary on the evolution of communication and technology, highlighting the contrast between medieval times and the digital age. The comments appreciate the design’s creativity, noting the clarity of the text and the clever blend of historical and modern web elements, which adds to the humor and charm of the concept.

this is so accurate 😂 (Activity: 3752): The Reddit post humorously highlights the accuracy of AI models like Claude and GPT in mimicking human-like responses, particularly in scenarios where users provide inaccurate prompts. This reflects a common user experience where frustration arises not from the AI’s capabilities but from the user’s own input errors. The discussion underscores the importance of precise prompt engineering to achieve desired outcomes from AI models. Commenters agree on the accuracy of the depiction, noting that user frustration often stems from their own inaccurate prompts rather than the AI’s performance. This suggests a need for better user education on effective prompt crafting.

Can’t believe that ChatGPT has such in-depth medical knowledge (Activity: 9610): The image is a humorous meme that combines medical terminology with fictional elements from the Star Wars universe, specifically focusing on a fictional clinical guide for conducting a prostate examination on an Ewok. This playful approach highlights the perceived depth of ChatGPT’s medical knowledge by juxtaposing it with a fictional and humorous scenario. The image is not meant to be taken seriously and serves as a lighthearted commentary on the capabilities of AI in understanding complex topics, albeit in a fictional context. The comments do not provide any substantive technical debate or opinions, as they primarily consist of humorous reactions and additional memes related to the fictional scenario.

Imagine a real photographer taking a photo when Columbus meets the natives. (Activity: 656): The image is a non-technical, artistic representation of a historical event, specifically the encounter between Columbus and the natives. It is a creative depiction rather than a factual or technical illustration, aiming to visualize what such a moment might have looked like if captured by a photographer. The image serves as a historical reenactment, blending artistic interpretation with historical elements like period attire and traditional clothing. Some comments discuss the historical accuracy and artistic liberties taken in the depiction, while others reflect on the broader implications of Columbus’s arrival and its impact on native populations.

A discussion emerged about the technical challenges of capturing historical events with modern photography equipment. Participants debated the feasibility of using high-resolution cameras to document such moments, considering factors like lighting conditions and the need for portable power sources in remote locations.

One commenter highlighted the potential for using AI-driven image reconstruction techniques to simulate historical photographs. They discussed the use of neural networks to generate realistic images based on historical data, emphasizing the importance of training models on diverse datasets to improve accuracy.

There was a technical debate on the ethical implications of altering historical narratives through photography. Some argued that while technology can enhance understanding, it risks distorting facts if not used responsibly. The conversation touched on the role of metadata in preserving the authenticity of digitally reconstructed images.

A short story. I’m liking the new image generation. (Activity: 624): The Reddit post shows a sequence of images from a new image generation feature that starts out photorealistic but degrades in quality with each subsequent image. Users describe the degradation as a ‘weird texture thing’, suggesting a problem with the model's consistency or stability across iterations. Commenters express concern over the declining photorealism, pointing to a possible flaw in the model's ability to maintain quality across multiple outputs and a need for further refinement to keep quality consistent.

A user noted a decline in photorealism with each subsequent image generated, suggesting a potential issue with the model’s consistency or capability to maintain quality across a series of images. This could indicate a limitation in the model’s ability to handle complex textures or lighting over multiple iterations.

Another user pointed out an error in the generated content where a newspaper in the image incorrectly states that June 14th, 2050, is a Thursday when it is actually a Tuesday. This highlights a potential flaw in the AI’s ability to accurately generate or verify factual information, which could be a significant issue for applications requiring high accuracy.
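The commenter's correction is easy to confirm with Python's standard `datetime` module. The explicit weekday tuple avoids depending on the system locale, which `strftime("%A")` would.

```python
import datetime

# date.weekday() maps Monday to 0 and Sunday to 6.
WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday")


def weekday_name(year: int, month: int, day: int) -> str:
    """Return the English weekday name for a Gregorian calendar date."""
    return WEEKDAYS[datetime.date(year, month, day).weekday()]


printed_on_newspaper = "Thursday"  # what the generated image claimed
actual = weekday_name(2050, 6, 14)
print(actual)                          # Tuesday
print(actual == printed_on_newspaper)  # False
```

The result agrees with the commenter: June 14, 2050 falls on a Tuesday, not a Thursday.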

A comment speculated on the narrative potential of AI-generated content, suggesting that ‘AI wars are started by companies to drive up interest and profit.’ This reflects a broader concern about the motivations behind AI development and deployment, hinting at the socio-economic implications of AI technologies.

I asked ChatGPT to imagine r/ChatGPT the day AGI drops… the tiny details are insane (Activity: 3996): The image is a humorous and fictional depiction of a scenario where AGI (Artificial General Intelligence) has been achieved, as imagined by ChatGPT. It portrays a chaotic and cluttered environment reminiscent of a Twitch livestream setup, featuring a humanoid AI character labeled “gpt-∞.” The scene is filled with various tech gadgets, energy drinks, and humorous elements like a “World’s Okayest User” mug and a pizza box with “Thanks 4 the data” written on it. This setup is intended to satirize the potential future interactions with AGI, blending elements of current internet culture with speculative technology. One comment humorously notes the irony of achieving AGI before the release of the much-anticipated video game GTA 6, highlighting the cultural significance of the game. Another comment points out the image’s resemblance to a Twitch stream rather than a subreddit, suggesting a playful critique of the depicted scenario’s realism.

Ai is getting too realistic (Activity: 5710): The image in the post is likely an AI-generated depiction of a young woman on a city street, showcasing the realism that AI image generation has now achieved. The title “Ai is getting too realistic” points to AI's growing ability to produce images that closely mimic real-life scenes, blurring the line between AI-generated content and actual photographs. This reflects ongoing advances in generative image models, which learn to produce highly realistic images from vast datasets of real-world photos. One commenter nostalgically recalls the early days of AI, when it struggled with basic tasks, highlighting how quickly capabilities have progressed. Another humorously references a movie trope, suggesting that AI-generated images are becoming as convincing as those used in cinematic storytelling.

3. Other Notable Frontier-Model / Infra Posts

This is exactly what I feel whenever I need to explain the task over and over again (Activity: 1142): The post humorously highlights a common issue with Large Language Models (LLMs): the need for precise, repeated task instructions because they can misunderstand underspecified requests. This reflects a known limitation in LLMs' literacy capabilities, which can lead to failure modes where the model does not fully grasp the task without detailed guidance. However, some users argue that with advances in models like 5.x, these issues are less frequent, suggesting that confusion often stems from user input errors rather than model deficiencies. One commenter suggests that the need for specific instructions might be a deliberate design choice, possibly to increase token usage and thus cost, rather than a purely technical limitation.

modbroccoli highlights a significant issue with LLMs: their tendency to fail when faced with underspecified requests due to inadequate literacy. This is a common failure mode where the model struggles to interpret vague or incomplete instructions, leading to suboptimal performance.

zomgmeister argues that modern LLMs, particularly versions 5.x, have improved significantly in understanding tasks, suggesting that confusion often stems from user input errors rather than the model’s capabilities. This reflects advancements in model training and architecture that enhance comprehension and task execution.

Enjoying_A_Meal raises an interesting point about the cost of token usage in LLMs, suggesting that the need for specific instructions might be a deliberate design choice to increase token consumption. This implies a potential economic incentive behind the model’s requirement for detailed input.