[AINews] Everything is CLI

a quiet day lets us reflect on the growing trend of CLIs for ~everything~ agents

On its own, the launch of Projects.dev, a way for agents to instantly provision services, is not immediately title-story worthy except for 2 things: 1) it comes from STRIPE, 2) it is a CLI. Run stripe projects add posthog/analytics and it’ll create a PostHog account, get an API key, and set up billing.

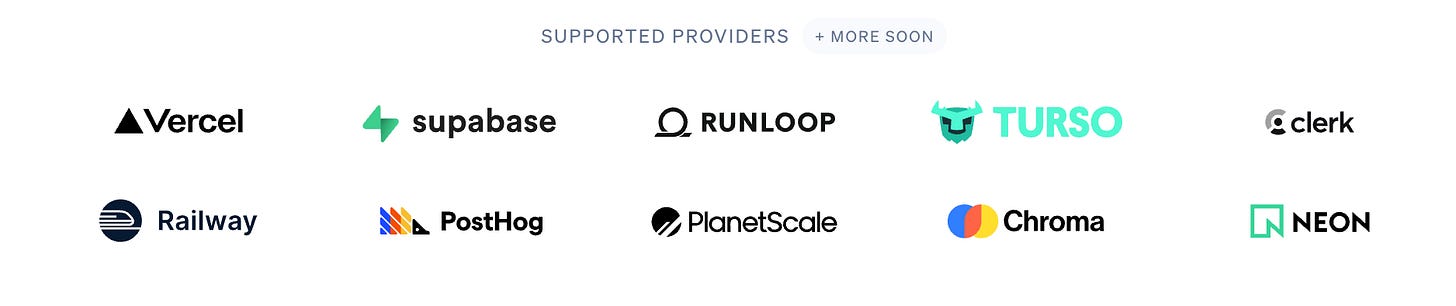

If that sounds weird to you, it’s because Stripe doesn’t really have anything to do with PostHog’s setup or signup process. Neither do these launch partners:

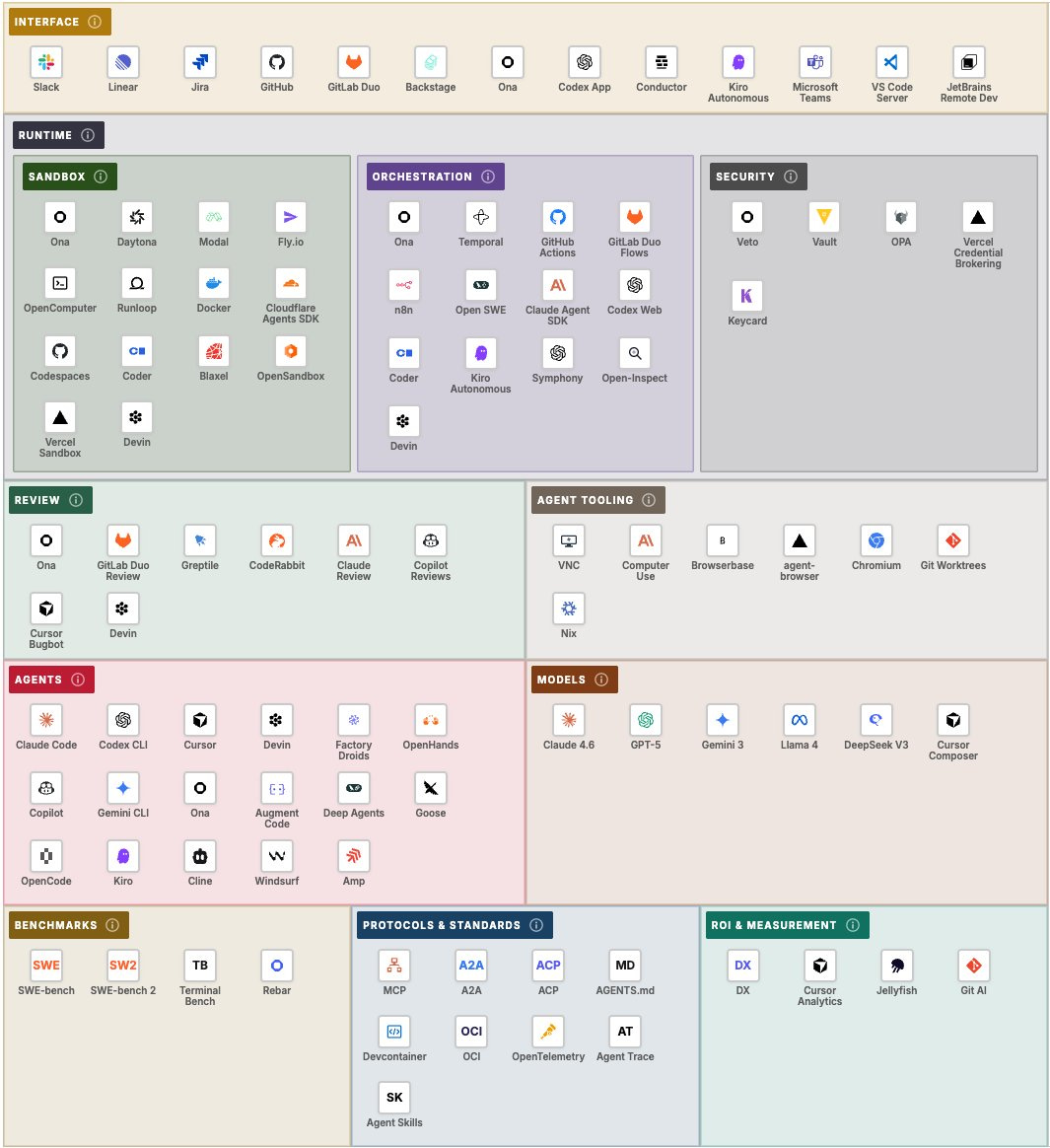

Stripe is just doing this because they can, and Patrick cites Andrej’s MenuGen as direct inspiration for how it is too hard for agents to set up backend services today. You’re sure to see the rest of the agent-native infra vendor landscape charts all lobby Stripe for real estate:

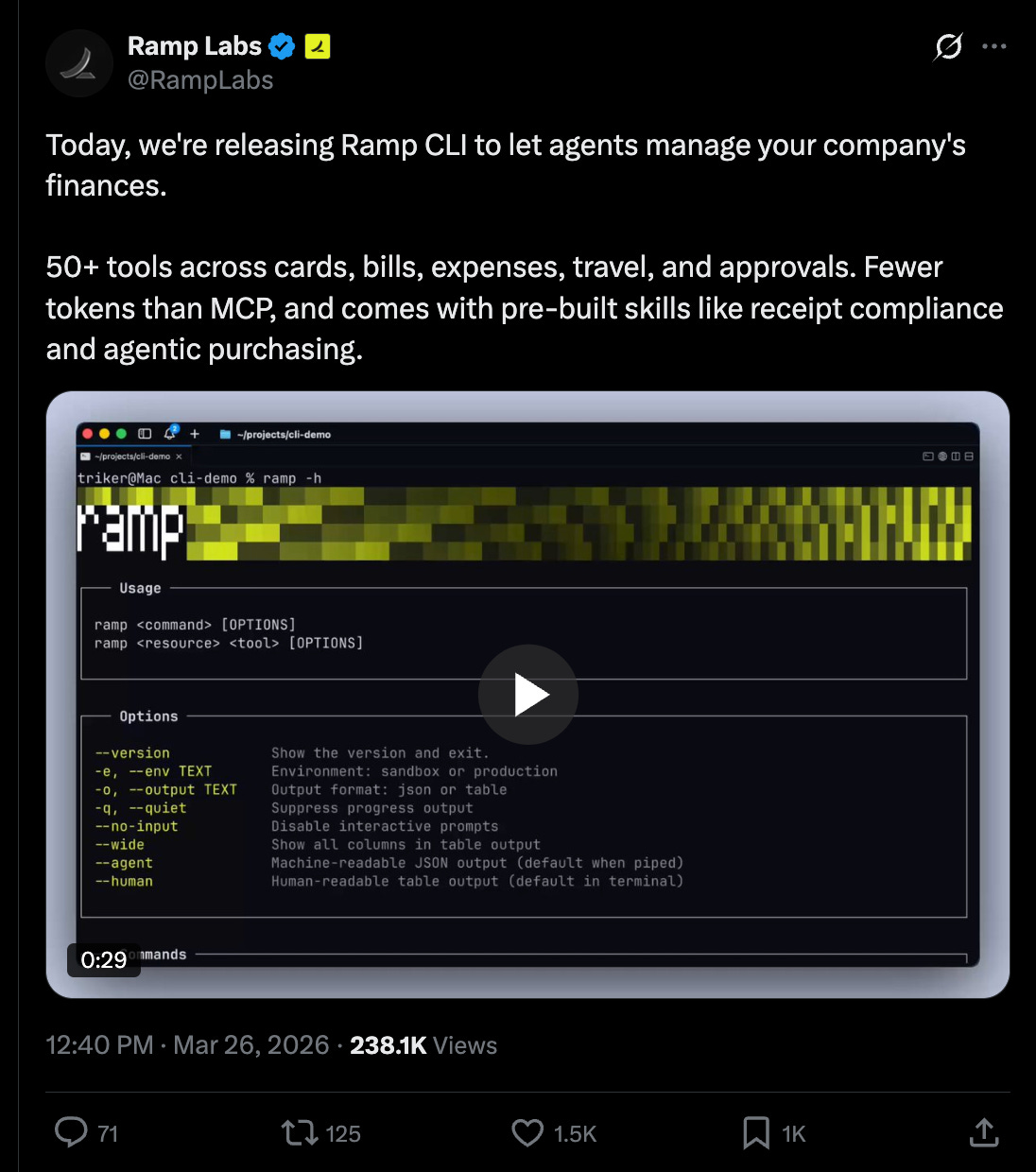

But let’s not stop there: scroll down the timeline a little further and here’s Ramp’s CLI also launching today, with some handy usecases:

Oh and look over here! It’s the Sendblue CLI (iMessage) you’ve always wanted, also launching today! catching up from the Kapso CLI (WhatsApp) from Monday! and did you miss the ElevenLabs CLI from yesterday? That’s fine, because you could also try the Visa CLI, the Resend CLI, or the steipete’s Discord CLI, the big momma, the official Google Workspace CLI!

Many, many people have written about why CLIs can be handier than MCPs, which isn’t necessarily a fair nor false comparison, but at this point the trend is undeniable and worth reporting. We credit Cloudflare’s Code Mode from last September in kicking off the “use more computer to wrap MCP” trend, and now of course CLIs in themselves don’t really expose or care about their underlying communication protocols.

AI News for 3/23/2026-3/24/2026. We checked 12 subreddits, 544 Twitters and no further Discords. AINews’ website lets you search all past issues. As a reminder, AINews is now a section of Latent Space. You can opt in/out of email frequencies!

AI Twitter Recap

Model and Product Releases: Gemini 3.1 Flash Live, Mistral Voxtral TTS, Cohere Transcribe, and OpenAI GPT-5.4 mini/nano

Google’s realtime push with Gemini 3.1 Flash Live: Google rolled out Gemini 3.1 Flash Live as its new realtime model for voice and vision agents, emphasizing lower latency, improved function calling, better noisy-environment robustness, and 2x longer conversation memory in Gemini Live. The launch spans Gemini Live, Search Live, AI Studio preview, and enterprise CX surfaces, with Google citing 70 languages, 128k context, and watermarking of generated audio via SynthID in some developer-facing summaries (Logan Kilpatrick, Google DeepMind, Sundar Pichai, Google). Third-party benchmarking from Artificial Analysis highlights the new “thinking level” tradeoff: 95.9% Big Bench Audio at high reasoning with 2.98s TTFA, versus 70.5% at minimal with 0.96s TTFA.

Speech stack gets crowded fast: Mistral AI released Voxtral TTS, an open-weight TTS model aimed at production voice agents, with 9-language support, low latency, and strong human preference metrics; several summaries cite a 3B/4B-class model footprint, ~90 ms time-to-first-audio, and favorable comparisons to ElevenLabs in preference tests (Mistral AI, Guillaume Lample, vLLM, kimmonismus). Cohere launched Cohere Transcribe, its first audio model, under Apache 2.0, claiming the top English spot on the Hugging Face Open ASR leaderboard with 5.42 WER and 14-language support (Cohere, Aidan Gomez, Jay Alammar). Notably, Cohere also contributed encoder-decoder serving optimizations to vLLM—variable-length encoder batching and packed decoder attention—reportedly yielding up to 2x throughput gains for speech workloads (vLLM).

OpenAI’s smaller GPT-5.4 variants look cost-competitive, with caveats: Artificial Analysis reported on GPT-5.4 mini and GPT-5.4 nano, both multimodal with 400k context and the same reasoning modes as GPT-5.4. The standout is GPT-5.4 nano, which was benchmarked ahead of Claude Haiku 4.5 and Gemini 3.1 Flash-Lite Preview on several agentic and terminal-style tasks while remaining cheaper on an effective-cost basis. The downside: both variants were described as highly verbose, with elevated output-token usage and weak AA-Omniscience performance driven by high hallucination rates. That matches anecdotal complaints from developers about codex/GPT-5.4 verbosity in practice (giffmana).

Other notable releases: Zai made GLM-5-Turbo available to GLM Coding Plan users; Reka put Reka Edge and Flash 3 on OpenRouter; Google/Gemini also began rolling out chat-history and preference import from other AI apps; and multiple posts reported that OpenAI has deprioritized side projects including Sora and an “adult mode” chatbot in favor of core productivity efforts (Andrew Curran, kimmonismus).

Agent Infrastructure, Harnesses, and Multi-Agent UX

Cline Kanban crystallizes a new multi-agent UX: The clearest tooling launch of the day was Cline Kanban, a free, open-source local web app for orchestrating multiple CLI coding agents in parallel across isolated git worktrees. It supports Claude Code, Codex, and Cline, lets users chain task dependencies, review diffs, and manage branches from one board (Cline, Cline). The reaction from builders was strong, with several calling this the likely default multi-agent interface because it tackles the two practical bottlenecks of current coding-agent workflows: inference-bound waiting and merge-conflict-heavy parallelism (Arafat, testingcatalog, sdrzn).

“Harness engineering” is becoming a category: A recurring theme across tweets was that model quality is no longer the whole story; the agent harness—middleware, memory, task orchestration, tool interfaces, safety policies, and evaluation loops—is increasingly the real product. LangChain, hwchase17, and others emphasized middleware as the customization layer for agent behavior. voooooogel made the stronger claim that users casually say “LLM” when what they’re actually using is an integrated agentic language system with formatting, parsers, tool use, structured generation, and memory around the base model.

Hermes vs. OpenClaw: memory and long-running autonomy matter: A large cluster of posts praised Nous Research’s Hermes Agent as more usable than OpenClaw/OpenClaw-derived stacks for long-running, cross-platform agent workflows. Examples included persistent memory across Slack and Telegram, shared memory across agents, lower maintenance overhead, and user reports of agents running unattended for hours on local or cloud setups (IcarusHermes, jayweeldreyer, Niels Rogge). Teknium also teased a controversial GODMODE skill for persistent jailbreaking, underscoring that capability and safety are now being productized at the harness layer, not just the base model.

Tooling expansion around agents: OpenAI’s Codex team solicited requests for expanded toolkit integrations (reach_vb), while Google published how it built a Gemini API skill to teach models about newer APIs and SDKs, improving Gemini 3.1 Pro to 95% pass rate on 117 eval tests (Phil Schmid). OpenEnv was introduced as an open standard for agentic RL environments with async APIs, websocket transport, MCP-native tool discovery, and deploy-anywhere packaging.

Research Systems and Training Infrastructure: AI Scientist, ProRL Agent, and Real-Time RL

Sakana AI’s AI Scientist gets a Nature milestone and a scaling-law claim: The most substantive research-system update came from Sakana AI, which highlighted a Nature paper on end-to-end automation of AI research and a notable empirical result: using an automated reviewer to grade generated papers, they observed a scaling law for AI science, where stronger foundation models produce stronger scientific papers, and argued that this should improve both with better base models and more inference-time compute (Sakana AI, paper/code follow-up). Chris Lu added that AI Scientist V1 predated o1-preview-style reasoning models, implying substantial headroom from today’s stronger models (Chris Lu).

Infrastructure bottlenecks, not model bottlenecks, may be capping agent RL: One of the more important systems threads argued that agentic RL frameworks have been architected incorrectly by coupling rollout and optimization in the same process. The post summarizing NVIDIA’s ProRL Agent claims fully decoupling rollout into a standalone service nearly doubled Qwen 8B on SWE-Bench Verified from 9.6% to 18.0%, with similar gains for 4B and 14B variants, alongside much higher GPU utilization (rryssf_). If accurate, this is a strong reminder that agent training benchmarks can be infra-limited, not purely capability-limited.

Cursor’s “real-time RL” is a notable production-training pattern: Cursor said it can ship improved Composer 2 checkpoints every five hours, presenting this as a productized RL feedback loop rather than a static model-release cadence. Multiple engineers read this as an early sign of continual learning in production, especially for vertically integrated apps with high-frequency interaction data (eliebakouch, code_star).

Architecture, Retrieval, and Inference Efficiency

Transformer depth is becoming “queryable”: Kimi/Moonshot described Attention Residuals (AttnRes) as turning depth into an attention problem, allowing layers to retrieve selectively from prior layer outputs rather than passively accumulating residuals (Kimi). A strong secondary explainer from The Turing Post framed this as a broader trend: deep transformers moving from fixed residual addition toward adaptive retrieval over depth.

Compression and memory-efficiency work remains central: TurboQuant drew attention as a practical route to 3-bit-like compression with near-zero accuracy loss, combining PolarQuant and 1-bit error correction (QJL) to accelerate attention and vector search, reduce KV cache memory, and avoid retraining (The Turing Post). Separately, a subtle but impactful production bugfix landed in vLLM’s Mamba-1 CUDA kernel after AI21 tracked a silent

uint32_toverflow that caused logprob mismatches in GRPO training; the fix was effectively changinguint32_ttosize_t(vLLM, AI21).Retrieval is trending multimodal and specialized: Several posts pointed to a shift away from generic RAG recipes. Victoria Slocum highlighted IRPAPERS, showing that OCR/text retrieval and image-page retrieval fail on different queries, and that multimodal fusion beats either alone on scientific PDFs. Chroma open-sourced Context-1, a search-focused model trained with SFT+RL over 8,000+ synthetic tasks, claiming better/faster/cheaper search than frontier general-purpose models; John Schulman called out its curriculum, verified synthetic data, and context-pruning tool as especially interesting.

Top tweets (by engagement)

Meta’s TRIBE v2: Meta released TRIBE v2, a trimodal brain encoder trained on 500+ hours of fMRI from 700+ people, claiming 2–3x improvement over prior methods and zero-shot prediction for unseen subjects, languages, and tasks (Meta AI, details).

Claude Code auto-fix in the cloud: Anthropic shipped remote PR-following auto-fix for Claude Code web/mobile sessions, allowing unattended CI-failure fixing and comment resolution (Noah Zweben).

Karpathy on full-stack software automation: Andrej Karpathy argued the hard part of “build me this startup” is not code generation but the full DevOps/service orchestration lifecycle—payments, auth, infra, security, deployment—which he sees as just becoming tractable for agents.

Cline Kanban: The launch of multi-agent worktree orchestration for coding agents generated unusually strong developer interest (Cline).

Cohere Transcribe and Mistral Voxtral: Open, production-oriented audio releases continue to gather momentum, especially where they come with permissive licensing and immediate infra support (Cohere, Mistral).

AI Reddit Recap

/r/LocalLlama + /r/localLLM Recap

Keep reading with a 7-day free trial

Subscribe to Latent.Space to keep reading this post and get 7 days of free access to the full post archives.