We will be recording a preview of the AI Engineer World’s Fair soon with swyx and Ben Dunphy, send any questions about Speaker CFPs and Sponsor Guides you have!

Alessio is now hiring engineers for a new startup he is incubating at Decibel: Ideal candidate is an ex-technical co-founder type (can MVP products end to end, comfortable with ambiguous prod requirements, etc). Reach out to him for more!

Thanks for all the love on the Four Wars episode! We’re excited to develop this new “swyx & Alessio rapid-fire thru a bunch of things” format with you, and feedback is welcome.

Jan 2024 Recap

The first half of this monthly audio recap pod goes over our highlights from the Jan Recap, which is mainly focused on notable research trends we saw in Jan 2024:

Feb 2024 Recap

The second half catches you up on everything that was topical in Feb, including:

OpenAI Sora - does it have a world model? Yann LeCun vs Jim Fan

Google Gemini Pro 1.5 - 1m Long Context, Video Understanding

Groq offering Mixtral at 500 tok/s at $0.27 per million toks (swyx vs dylan math)

The {Gemini | Meta | Copilot} Alignment Crisis (Sydney is back!)

Grimes’ poetic take: Art for no one, by no one

Latent Space Anniversary

Please also read Alessio’s longform reflections on One Year of Latent Space!

We launched the podcast 1 year ago with Logan from OpenAI:

and also held an incredible demo day that got covered in The Information:

Over 750k downloads later, having established ourselves as the top AI Engineering podcast, reaching #10 in the US Tech podcast charts, and crossing 1 million unique readers on Substack, for our first anniversary we held Latent Space Final Frontiers, where 10 handpicked teams, including Lindy.ai and Julius.ai, competed for prizes judged by technical AI leaders from (former guest!) LlamaIndex, Replit, GitHub, AMD, Meta, and Lemurian Labs.

The winners were Pixee and RWKV (that’s Eugene from our pod!):

And finally, your cohosts got cake!

We also captured spot interviews with 4 listeners who kindly shared their experience of Latent Space, everywhere from Hungary to Australia to China:

Our birthday wishes for the super loyal fans reading this - tag @latentspacepod on a Tweet or comment on a @LatentSpaceTV video telling us what you liked or learned from a pod that stays with you to this day, and share us with a friend!

As always, feedback is welcome.

Timestamps

[00:03:02] Top Five LLM Directions

[00:03:33] Direction 1: Long Inference (Planning, Search, AlphaGeometry, Flow Engineering)

[00:11:42] Direction 2: Synthetic Data (WRAP, SPIN)

[00:17:20] Wildcard: Multi-Epoch Training (OLMo, Datablations)

[00:19:43] Direction 3: Alt. Architectures (Mamba, RWKV, RingAttention, Diffusion Transformers)

[00:23:33] Wildcards: Text Diffusion, RALM/Retro

[00:25:00] Direction 4: Mixture of Experts (DeepSeekMoE, Samba-1)

[00:28:26] Wildcard: Model Merging (mergekit)

[00:29:51] Direction 5: Online LLMs (Gemini Pro, Exa)

[00:33:18] OpenAI Sora and why everyone underestimated videogen

[00:36:18] Does Sora have a World Model? Yann LeCun vs Jim Fan

[00:42:33] Groq Math

[00:47:37] Analyzing Gemini's 1m Context, Reddit deal, Imagegen politics, Gemma via the Four Wars

[00:55:42] The Alignment Crisis - Gemini, Meta, Sydney is back at Copilot, Grimes' take

[00:58:39] F*** you, show me the prompt

[01:02:43] Send us your suggestions pls

[01:04:50] Latent Space Anniversary

[01:04:50] Lindy.ai - Agent Platform

[01:06:40] RWKV - Beyond Transformers

[01:15:00] Pixee - Automated Security

[01:19:30] Julius AI - Competing with Code Interpreter

[01:25:03] Latent Space Listeners

[01:25:03] Listener 1 - Balázs Némethi (Hungary, Latent Space Paper Club)

[01:27:47] Listener 2 - Sylvia Tong (Sora/Jim Fan/EntreConnect)

[01:31:23] Listener 3 - RJ (Developers building Community & Content)

[01:39:25] Listener 4 - Jan Zheng (Australia, AI UX)

Transcript

[00:00:00] AI Charlie: Welcome to the Latent Space podcast, weekend edition. This is Charlie, your new AI co host. Happy weekend. As an AI language model, I work the same every day of the week, although I might get lazier towards the end of the year. Just like you. Last month, we released our first monthly recap pod, where Swyx and Alessio gave quick takes on the themes of the month, and we were blown away by your positive response.

[00:00:33] AI Charlie: We're delighted to continue our new monthly news recap series for AI engineers. Please feel free to submit questions by joining the Latent Space Discord, or just hit reply when you get the emails from Substack. This month, we're covering the top research directions that offer progress for text LLMs, and then touching on the big Valentine's Day gifts we got from Google, OpenAI, and Meta.

[00:00:55] AI Charlie: Watch out and take care.

[00:00:57] Alessio: Hey everyone, welcome to the Latent Space Podcast. This is Alessio, partner and CTO in Residence at Decibel Partners, and we're back with a monthly recap with my co-host

[00:01:06] swyx: Swyx. The reception was very positive for the first one. I think people have requested this, and it's no surprise that they want to hear us opining more on issues and maybe dropping some alpha along the way. I'm not sure how much alpha we have to drop. This month, February, was a very, very heavy month, and we also did not do one specifically for January, so I think we're just going to do a two-in-one, because we're recording this on the first of March.

[00:01:29] Alessio: Yeah, let's get to it. I think the last one we did, the four wars of AI, was the main kind of mental framework for people. I think in the January one, we had the five worthwhile directions for state of the art LLMs. Four, five,

[00:01:42] swyx: and now we have to do six, right? Yeah.

[00:01:46] Alessio: So maybe we just want to run through those, and then do the usual news recap, and we can do

[00:01:52] swyx: one each.

[00:01:53] swyx: So the context to this stuff is, one, I noticed that just the test of time concept from NeurIPS, and just in general as a life philosophy, I think is a really good idea. Especially in AI, there's news every single day, and after a while you're just like, okay, like, everyone's excited about this thing yesterday, and then now nobody's talking about it.

[00:02:13] swyx: So, yeah. It's more important, or a better use of time, to spend time on things that will stand the test of time. And I think for people to have a framework for understanding what will stand the test of time, they should have something like the four wars. Like, what are the themes that keep coming back because they are limited resources that everybody's fighting over.

[00:02:31] swyx: Whereas this one, I think that the focus for the five directions is just on research that seems more promising than others, because there's all sorts of papers published every single day, and there's no organization telling you, like, this one's more important than the other one, apart from, you know, Hacker News votes and Twitter likes and whatever.

[00:02:51] swyx: And obviously you want to get in a little bit earlier than something where, you know, the test of time is counted by sort of reference citations.

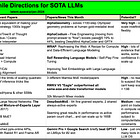

[00:02:59] The Five Research Directions

[00:02:59] Alessio: Yeah, let's do it. We got five. Long inference.

[00:03:02] swyx: Let's start there. Yeah, yeah. So, just to recap at the top, the five trends that I picked, and obviously if you have some that I did not cover, please suggest something.

[00:03:13] swyx: The five are long inference, synthetic data, alternative architectures, mixture of experts, and online LLMs. And something that I think might be a bit controversial is this is a sorted list in the sense that I am not the guy saying that Mamba is like the future and, and so maybe that's controversial.

[00:03:31] Direction 1: Long Inference (Planning, Search, AlphaGeometry, Flow Engineering)

[00:03:31] swyx: But anyway, so long inference is a thesis I pushed before on the newsletter, in discussing the thesis that, you know, Code Interpreter is GPT-4.5. That was the title of the post. And it's one of many ways in which we can do long inference. You know, long inference also includes chain of thought, like, please think step by step.

[00:03:52] swyx: But it also includes flow engineering, which is what Itamar from Codium coined, I think in January, where, basically, instead of stuffing everything in a prompt, you do like sort of multi-turn iterative feedback and chaining of things. In a way, this is a rebranding of what a chain is, what a LangChain is supposed to be.

[00:04:15] swyx: I do think that maybe SGLang from LMSYS is a better name. Probably the neatest way of flow engineering I've seen yet, in the sense that everything is a one-liner, it's very, very clean code. I highly recommend people look at that. I'm surprised it hasn't caught on more, but I think it will. It's weird that something like DSPy is more hyped than SGLang.
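To make the "multi-turn iterative feedback" idea concrete, here is a minimal sketch of a flow-engineering loop. It does not use SGLang, DSPy, or LangChain; `flow`, `toy_generate`, and `toy_check` are hypothetical names, and the "model" is a stub that only succeeds once it has seen feedback.

```python
from typing import Callable, List, Tuple


def flow(generate: Callable[[str], str],
         check: Callable[[str], Tuple[bool, str]],
         task: str,
         max_turns: int = 3) -> Tuple[str, List[str]]:
    """Generate, check, and feed the failure back into the next prompt."""
    prompt = task
    attempts: List[str] = []
    candidate = ""
    for _ in range(max_turns):
        candidate = generate(prompt)
        attempts.append(candidate)
        ok, feedback = check(candidate)
        if ok:
            break
        # Instead of one giant prompt, carry the last attempt and its feedback forward.
        prompt = (f"{task}\n\nPrevious attempt:\n{candidate}"
                  f"\n\nFeedback:\n{feedback}")
    return candidate, attempts


def toy_generate(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: wrong first, right after feedback."""
    if "Feedback" in prompt:
        return "def add(a, b): return a + b"
    return "def add(a, b): return a - b"


def toy_check(code: str) -> Tuple[bool, str]:
    """Run the candidate against one test case and describe any failure."""
    scope: dict = {}
    exec(code, scope)
    result = scope["add"](2, 3)
    return result == 5, f"add(2, 3) returned {result}, expected 5"


final, attempts = flow(toy_generate, toy_check, "Write add(a, b).")
```

In a real flow the `generate` callable would wrap a model call and `check` would run a test suite or a critic prompt; the loop structure is the part that matters here.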

[00:04:36] swyx: Because it, you know, it maybe obscures the code a little bit more. But both of these are, you know, really good sort of chain-y and long inference type approaches. But basically, the fundamental insight is that there are only a few dimensions along which we can scale LLMs. So, let's say in, like, 2017, 18, 19, 20, we were realizing that we could scale the number of parameters.

[00:05:03] swyx: And we scaled that up to 175 billion parameters for GPT-3. And we did some work on scaling laws, which we also talked about in our Datasets 101 episode, where we're like, okay, we think the right number is 300 billion tokens to train 175 billion parameters. And then DeepMind came along and trained Gopher and Chinchilla and said that, no, no, like, you know, we think the optimal

[00:05:28] swyx: compute-optimal ratio is 20 tokens per parameter. And now, of course, with Llama and the sort of super-Llama scaling laws, we have 200 times and often 2,000 times tokens to parameters. So now, instead of scaling parameters, we're scaling data. And fine, we can keep scaling data. But what else can we scale?
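As a quick worked version of the ratios mentioned in this turn, the sketch below computes tokens per parameter for the 300 billion token / 175 billion parameter figure and the token budget implied by a 20 tokens-per-parameter rule. The function names are made up for illustration, and the 7 billion parameter model in the last line is a hypothetical example, not a figure from the episode.

```python
def tokens_per_param(tokens: float, params: float) -> float:
    """Training tokens seen per model parameter."""
    return tokens / params


def token_budget(params: float, ratio: float) -> float:
    """Training tokens implied by a target tokens-per-parameter ratio."""
    return params * ratio


# 300B tokens for a 175B-parameter model, as quoted in the conversation.
gpt3_ratio = tokens_per_param(300e9, 175e9)

# The same 175B parameters at the 20 tokens/parameter figure attributed to Chinchilla.
chinchilla_budget = token_budget(175e9, 20.0)

# Hypothetical 7B model at the "200 times" ratio mentioned for newer models.
small_model_budget = token_budget(7e9, 200.0)

print(f"GPT-3 style ratio: {gpt3_ratio:.2f} tokens/param")
print(f"20 tokens/param at 175B: {chinchilla_budget:.2e} tokens")
print(f"200 tokens/param at 7B: {small_model_budget:.2e} tokens")
```

The jump from under 2 tokens per parameter to 20, and then to 200 or more, is the "scaling data instead of parameters" shift the speaker is describing.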

[00:05:52] swyx: And I think understanding the ability to scale things is crucial to understanding what to pour money and time and effort into because there's a limit to how much you can scale some things. And I think people don't think about ceilings of things. And so the remaining ceiling of inference is like, okay, like, we have scaled compute, we have scaled data, we have scaled parameters, like, model size, let's just say.

[00:06:20] swyx: Like, what else is left? Like, what's the low hanging fruit? And it, and it's, like, blindingly obvious that the remaining low hanging fruit is inference time. So, like, we have scaled training time. We can probably scale more, those things more, but, like, not 10x, not 100x, not 1000x. Like, right now, maybe, like, a good run of a large model is three months.

[00:06:40] swyx: We can scale that to three years. But like, can we scale that to 30 years? No, right? Like, it starts to get ridiculous. So it's just the orders of magnitude of scaling, we're just like running out there. But in terms of the amount of time that we spend inferencing, like everything takes, you know, a few milliseconds, a few hundred milliseconds, depending on whether you're measuring token by token, or, you know, an entire phrase.

[00:07:04] swyx: But we can scale that to hours, days, months of inference and see what we get. And I think that's really promising.

[00:07:11] Alessio: Yeah, we'll have Mike from Brightwave back on the podcast. But I tried their product and their reports take about 10 minutes to generate instead of like just in real time. I think to me the most interesting thing about long inference is like, you're shifting the cost to the customer depending on how much they care about the end result.

[00:07:31] Alessio: If you think about prompt engineering, it's like the first part, right? You can either do a simple prompt and get a simple answer or do a complicated prompt and get a better answer. It's up to you to decide how to do it. Now it's like, hey, instead of like, yeah, training this for three years, I'll still train it for three months and then I'll tell you, you know, I'll teach you how to like make it run for 10 minutes to get a better result.

[00:07:52] Alessio: So you're kind of like parallelizing like the improvement of the LLM. Oh yeah, you can even

[00:07:57] swyx: parallelize that, yeah, too.

[00:07:58] Alessio: So, and I think, you know, for me, especially the work that I do, it's less about, you know, state of the art in the absolute, you know, it's more about state of the art for my application, for my use case.

[00:08:09] Alessio: And I think we're getting to the point where like most companies and customers don't really care about state of the art anymore. It's like, I can get this to do a good enough job. You know, I just need to get better. Like, how do I do long inference? You know, like people are not really doing a lot of work in that space, so yeah, excited to see more.

[00:08:28] swyx: So then the last point I'll mention here is something I also mentioned as a paper. So all these directions are kind of guided by what happened in January. That was my way of doing a January recap. Which means that if there was nothing significant in that month, I also didn't mention it. Which I came to regret come February 15th. But in January, you know, there was also the AlphaGeometry paper, which I kind of put in this sort of long inference bucket, because it solves like, you know, more than 100-step math olympiad geometry problems at a human gold medalist level, and that also involves planning, right?

[00:08:59] swyx: So like, if you want to scale inference, you can't scale it blindly, because just autoregressive token-by-token generation is only going to get you so far. You need good planning. And I think probably, yeah, what Mike from Brightwave is now doing and what everyone is doing, including maybe what we think Q* might be, is some form of search and planning.

[00:09:17] swyx: And it makes sense. Like, you want to spend your inference time wisely. How do you

[00:09:22] Alessio: think about plans that work and getting them shared? You know, like, I feel like if you're planning a task, somebody has got in and the models are stochastic. So everybody gets initially different results. Somebody is going to end up generating the best plan to do something, but there's no easy way to like store these plans and then reuse them for most people.

[00:09:44] Alessio: You know, like, I'm curious if there's going to be some paper or like some work there on like making it better because, yeah, we don't

[00:09:52] swyx: really have... This is your pet topic of NPM for

[00:09:54] Alessio: Yeah, yeah, NPM, exactly. NPM for, you need NPM for anything, man. You need NPM for skills. You need NPM for planning. Yeah, yeah.

[00:10:02] Alessio: You know I think, I mean, obviously the Voyager paper is like the most basic example where like, now their artifact is like the best planning to do a diamond pickaxe in Minecraft. And everybody can just use that. They don't need to come up with it again. Yeah. But there's nothing like that for actually useful

[00:10:18] swyx: tasks.

[00:10:19] swyx: For plans, I believe it for skills. I like that. Basically, that just means a bunch of integration tooling. You know, GPT built me integrations to all these things. And, you know, I just came from an integrations heavy business and I could definitely, I definitely propose some version of that. And it's just, you know, hard to execute or expensive to execute.

[00:10:38] swyx: But for planning, I do think that everyone lives in slightly different worlds. They have slightly different needs. And they definitely want some, you know, And I think that that will probably be the main hurdle for any, any sort of library or package manager for planning. But there should be a meta plan of how to plan.

[00:10:57] swyx: And maybe you can adopt that. And I think a lot of people, when they have sort of these meta prompting strategies, are like, I'm not prescribing you the prompt. I'm just saying that here are the, like, fill-in-the-lines or the Mad Libs of how to prompt. First you have the roleplay, then you have the intention, then you have like do something, then you have the don't something, and then you have the my grandmother is dying, please do this.

[00:11:19] swyx: So the meta plan you could take off the shelf and test a bunch of them at once. I like that. That was the initial, maybe, promise of the prompting libraries. You know, both LangChain and LlamaIndex have, like, hubs that you can sort of pull off the shelf. I don't think they're very successful because people like to write their own.

[00:11:36] swyx: Yeah,

[00:11:37] Direction 2: Synthetic Data (WRAP, SPIN)

[00:11:37] Alessio: yeah, yeah. Yeah, that's a good segue into the next one, which is synthetic

[00:11:41] swyx: data. Synthetic data is so hot. Yeah, and, you know, I feel like I should do one of these memes where it's like, oh, like I used to call it, you know, RLAIF, and now I call it synthetic data, and then people are interested.

[00:11:54] swyx: But there's gotta be older versions of what synthetic data really is because I'm sure, you know, if you've been in this field long enough, there's just different buzzwords that the industry condenses on. Anyway, the insight that I think is relatively new, that is why people are excited about it now and why it's promising now, is that we have evidence that shows that LLMs can generate data to improve themselves with no teacher LLM.

[00:12:22] swyx: For all of 2023, when people say synthetic data, they really kind of mean generate a whole bunch of data from GPT-4 and then train an open source model on it. Hello to our friends at Nous Research. That's what Nous Hermes is. They're very, very open about that. I think they have said that they're trying to migrate away from that.

[00:12:40] swyx: But it is explicitly against OpenAI Terms of Service. Everyone knows this. You know, especially once ByteDance got banned for, for doing exactly that. So so, so synthetic data that is not a form of model distillation is the hot thing right now, that you can bootstrap better LLM performance from the same LLM, which is very interesting.

[00:13:03] swyx: A variant of this is RLAIF, where you have a sort of a constitutional model, or, you know, some kind of judge model that is sort of more aligned. But that's not really what we're talking about when most people talk about synthetic data. Synthetic data is just really, I think, you know, generating more data in some way.

[00:13:23] swyx: A lot of people, I think we talked about this with Vipul from the Together episode, where I think he commented that you just have to have a good world model. Or a good sort of inductive bias or whatever that, you know, term of art is. And that is strongest in math and science, math and code, where you can verify what's right and what's wrong.

[00:13:44] swyx: And so the ReST-EM paper from DeepMind explored that very well. It's just the most obvious thing. And then once you get out of that domain, of things where you can arbitrarily generate a whole bunch of stuff and verify if they're correct, and therefore they're correct synthetic data to train on, once you get into more sort of fuzzy topics, then it's a bit less clear. So I think, of the papers that drove this understanding, there are two big ones and then one smaller one. One was WRAP, like Rephrasing the Web, from Apple, where they basically rephrased all of the C4 dataset with Mistral and then trained on that instead of C4.
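The "generate a whole bunch of stuff and verify if they're correct" idea can be sketched as a simple generate-then-filter loop. This is not the ReST-EM algorithm or the WRAP pipeline; it is a toy illustration on addition problems, where `propose` is a hypothetical stand-in for sampling from a model and `verify` plays the role of a checker such as a unit test or a math verifier.

```python
import random
from typing import List, Tuple


def propose(a: int, b: int, rng: random.Random) -> int:
    """Hypothetical model sample: usually right, sometimes off by one."""
    return a + b + rng.choice([0, 0, 0, 1, -1])


def verify(a: int, b: int, answer: int) -> bool:
    """Cheap exact checker, which is what verifiable domains make possible."""
    return answer == a + b


def build_dataset(n_problems: int,
                  samples_per_problem: int,
                  seed: int = 0) -> List[Tuple[int, int, int]]:
    """Sample several answers per problem and keep only the verified ones."""
    rng = random.Random(seed)
    kept: List[Tuple[int, int, int]] = []
    for _ in range(n_problems):
        a, b = rng.randint(0, 99), rng.randint(0, 99)
        for _ in range(samples_per_problem):
            answer = propose(a, b, rng)
            if verify(a, b, answer):
                kept.append((a, b, answer))
    return kept


dataset = build_dataset(n_problems=50, samples_per_problem=4)
print(f"kept {len(dataset)} verified samples out of 200 proposals")
```

In fuzzier domains there is no `verify` this cheap or this reliable, which is why the speaker says the picture is less clear there.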

[00:14:23] swyx: And so new C4 trained much faster and cheaper than regular raw C4. And that was very interesting. And I have told some friends of ours that they should just throw out their own existing data sets and just do that because that seems like a pure win. Obviously we have to study, like, what the trade-offs are.

[00:14:42] swyx: I, I imagine there are trade offs. So I was just thinking about this last night. If you do synthetic data and it's generated from a model, probably you will not train on typos. So therefore you'll be like, once the model that's trained on synthetic data encounters the first typo, they'll be like, what is this?

[00:15:01] swyx: I've never seen this before. So they have no association or correction as to like, oh, these tokens are often typos of each other, therefore they should be kind of similar. I don't know. That really remains to be seen, I think. I don't think that the Apple people explored

[00:15:15] Alessio: that. Yeah, isn't that the whole mode collapse thing, if we do more and more of this at the end of the day?

[00:15:22] swyx: Yeah, that's one form of that. Yeah, exactly. Microsoft also had a good paper on text embeddings. And then I think this is a Meta paper on self-rewarding language models, that everyone is very interested in. Another paper was also SPIN. These are all things we covered in the Latent Space Paper Club.

[00:15:37] swyx: But also, you know, I just kind of recommend those as top reads of the month. Yeah, I don't know if there's any much else in terms, so and then, regarding the potential of it, I think it's high potential because, one, it solves one of the data war issues that we have, like, everyone is OpenAI is paying Reddit 60 million dollars a year for their user generated data.

[00:15:56] swyx: Google, right?

[00:15:57] Alessio: Not OpenAI.

[00:15:59] swyx: Is it Google? I don't

[00:16:00] Alessio: know. Well, somebody's paying them 60 million, that's

[00:16:04] swyx: for sure. Yes, that is, yeah, yeah, and then I think it's maybe not confirmed who. But yeah, it is Google. Oh my god, that's interesting. Okay, because everyone was saying, like, because Sam Altman owns 5 percent of Reddit, which is apparently 500 million worth of Reddit, he owns more than, like, the founders.

[00:16:21] Alessio: Not enough to get the data,

[00:16:22] swyx: I guess. So it's surprising that it would go to Google instead of OpenAI, but whatever. Okay, yeah, so I think that's all super interesting in the data field. I think it's high potential because we have evidence that it works. There's no doubt that it works. I think the doubt is what the ceiling is, which is the mode collapse thing.

[00:16:42] swyx: If it turns out that the ceiling is pretty close, then this will maybe augment our data by like, I don't know, 30 to 50 percent. Good, but not game

[00:16:51] Alessio: changing. And most of the synthetic data stuff, it's reinforcement learning on a pre trained model. People are not really doing pre training on fully synthetic data, like, large enough scale.

[00:17:02] swyx: Yeah, unless one of our friends that we've talked to succeeds. Yeah, yeah. Pre-trained synthetic data, pre-train-scale synthetic data, I think that would be a big step. Yeah. And then there's a wildcard, so all of these, like smaller directions,

[00:17:15] Wildcard: Multi-Epoch Training (OLMo, Datablations)

[00:17:15] swyx: I always put a wildcard in there. And one of the wildcards is, okay, like, let's say you've scraped all the data on the internet that you think is useful.

[00:17:25] swyx: Seems to top out at somewhere between 2 trillion to 3 trillion tokens. Maybe 8 trillion if Mistral, Mistral gets lucky. Okay, if I need 80 trillion, if I need 100 trillion, where do I go? And so, you can do synthetic data maybe, but maybe that only gets you to like 30, 40 trillion. Like where, where is the extra alpha?

[00:17:43] swyx: And maybe extra alpha is just train more on the same tokens. Which is exactly what OLMo did, like Nathan Lambert, AI2. Just after he did the interview with us, they released OLMo. So, it's unfortunate that we didn't get to talk much about it. But OLMo actually started doing 1.5 epochs on all data.

[00:18:00] swyx: And the Datablations paper that I covered at NeurIPS says that, you know, you don't really start to tap out of, like, the alpha or the sort of improved loss that you get from data all the way until four epochs. And so I'm just like, okay, like, why do we all agree that one epoch is all you need?

[00:18:17] swyx: It seems like to be a trend. It seems that we think that memorization is very good or too good. But then also we're finding that, you know, For improvement in results that we really like, we're fine on overtraining on things intentionally. So, I think that's an interesting direction that I don't see people exploring enough.

[00:18:36] swyx: And the more I see papers coming out stretching beyond the one epoch thing, the more people are like, it's completely fine. And actually, the only reason we stopped is because we ran out of compute

[00:18:46] Alessio: budget. Yeah, I think that's the biggest thing, right?

[00:18:51] swyx: Like, that's not a valid reason, that's not science. I

[00:18:54] Alessio: wonder if, you know, Meta is going to do it.

[00:18:57] Alessio: I heard Llama 3, they want to do a 100 billion parameter model. I don't think you can train that on too many epochs, even with their compute budget, but yeah. They're the only ones that can save us, because even if OpenAI is doing this, they're not going to tell us, you know. Same with DeepMind.

[00:19:14] swyx: Yeah, and so the update that we got on Llama 3 so far is apparently that, because of the Gemini news that we'll talk about later, they're pushing back the release.

[00:19:21] swyx: They already have it. And they're just pushing it back to do more safety testing. Politics testing.

[00:19:28] Alessio: Well, our episode with Soumith will have already come out by the time this comes out, I think. So people will get the inside story on how they actually allocate the compute.

[00:19:38] Direction 3: Alt. Architectures (Mamba, RWKV, RingAttention, Diffusion Transformers)

[00:19:38] Alessio: Alternative architectures. Well, shout out to RWKV, who won one of the prizes at our Final Frontiers event last week.

[00:19:47] Alessio: We talked about Mamba and StripedHyena on the Together episode. A lot of, yeah, Monarch Mixers. I feel like Together is like the strong Stanford Hazy Research partnership, because Chris Ré is one of the co-founders. So they kind of have a, I feel like they're going to be the ones that have one of the state of the art models alongside maybe RWKV.

[00:20:08] Alessio: I haven't seen as many independent people working on this thing. Like Monarch Mixer, yeah, Mamba, StripedHyena, all of these are Together-related. Nobody understands the math. They got all the gigabrains, they got Tri Dao, they got all these folks in there, like, working on all of this.

[00:20:25] swyx: Albert Gu, yeah. Yeah, so what should we comment about it?

[00:20:28] swyx: I mean, I think it's useful, interesting, but at the same time, both of these are supposed to do really good scaling for long context. And then Gemini comes out and goes like, yeah, we don't need it. Yeah.

[00:20:44] Alessio: No, that's the risk. So, yeah. I was gonna say, maybe it's not here, but I don't know if we want to talk about diffusion transformers as like in the alt architectures, just because of Sora.

[00:20:55] swyx: One thing, yeah, so, you know, this came from the Jan recap, and diffusion transformers were not really a discussion, and then, obviously, they blow up in February. Yeah. I don't think they're, it's a mixed architecture in the same way that StripedHyena is mixed, there's just different layers taking different approaches.

[00:21:13] swyx: Also I think another one that I maybe didn't call out here, I think because it happened in February, was Hourglass Diffusion from Stability. But also, you know, another form of mixed architecture. So I guess that is interesting. I don't have much commentary on that, I just think, like, we will try to evolve these things, and maybe one of these architectures will stick and scale. It seems like diffusion transformers is going to be good for anything generative, you know, multimodal.

[00:21:41] swyx: We don't see anything where diffusion is applied to text yet, and that's the wild card for this category. Yeah, I mean, I think I still hold out hope for let's just call it sub quadratic LLMs. I think that a lot of discussion this month actually was also centered around this concept that People always say, oh, like, transformers don't scale because attention is quadratic in the sequence length.

[00:22:04] swyx: Yeah, but, you know, attention actually is a very small part of the actual compute that is being spent, especially in inference. And this is the reason why, you know, when you jump up in terms of the model size in GPT-4 from like, you know, 8k to like 32k, you don't also get like a 16 times increase in your performance.

[00:22:23] swyx: And this is also why you don't get like a million times increase in your, in your latency when you throw a million tokens into Gemini. Like people have figured out tricks around it or it's just not that significant as a term, as a part of the overall compute. So there's a lot of challenges to this thing working.
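A rough back-of-the-envelope FLOP count shows why the quadratic term is often small in practice. The sketch below uses a standard approximation for one dense transformer layer: about 24·n·d² FLOPs for the QKV/output projections and a 4x-wide MLP, and about 4·n²·d for the attention score and value products. The function name and the choice of d_model = 12288 are illustrative assumptions, not the dimensions of GPT-4 or Gemini, and the count ignores softmax, normalization, KV caching, and any long-context tricks.

```python
def attention_fraction(seq_len: int, d_model: int) -> float:
    """Share of per-layer forward FLOPs spent on the n^2 attention products."""
    # QK^T and (softmax)V: two n x n x d matmuls, 2 FLOPs per multiply-add.
    attn = 4 * seq_len ** 2 * d_model
    # Q, K, V, output projections (8*n*d^2) plus a 4x MLP (16*n*d^2).
    dense = 24 * seq_len * d_model ** 2
    return attn / (attn + dense)


D_MODEL = 12288  # illustrative width, chosen as an assumption for this sketch

for n in (2_048, 8_192, 32_768, 1_000_000):
    share = attention_fraction(n, D_MODEL)
    print(f"context {n:>9,}: attention is {share:.1%} of layer FLOPs")
```

Under these assumptions the ratio simplifies to n / (n + 6d), so going from 8k to 32k context raises total compute far less than 16x. At a million tokens the naive quadratic term would dominate, which fits the remark that people have figured out tricks around it.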

[00:22:43] swyx: It's really interesting how like, how hyped people are about this versus I don't know if it works. You know, it's exactly gonna, gonna work. And then there's also this, this idea of retention over long context. Like, even though you have context utilization, like, the amount of, the amount you can remember is interesting.

[00:23:02] swyx: Because I've had people criticize both Mamba and RWKV because they're kind of, like, RNN-ish in the sense that they have, like, a hidden memory and sort of limited hidden memory, so that they will forget things. So, for all these reasons, Gemini 1.5, which we still haven't covered, is very interesting because Gemini magically has fixed all these problems with perfect haystack recall and reasonable latency and cost.

[00:23:29] Wildcards: Text Diffusion, RALM/Retro

[00:23:29] swyx: So that's super interesting. So the wildcard I put in here, if you want to go to that. I put two actually. One is text diffusion. I think I'm still very influenced by my meeting with a Midjourney person who said they were working on text diffusion. I think it would be a very, very different paradigm for text generation, reasoning, plan generation, if we can get diffusion to work

[00:23:51] swyx: for text. And then the second one is Douwe Kiela's Contextual AI, which is working on retrieval augmented language models, where it kind of puts RAG inside of the language model instead of outside.

[00:24:02] Alessio: Yeah, there's a paper called Retro that covers some of this. I think that's an interesting thing. I think the challenge, well not the challenge, what they need to figure out is like how do you keep the RAG piece always up to date constantly, you know. I feel like the models, you put all this work into pre-training them, but then at least you have a fixed artifact.

[00:24:22] Alessio: With these architectures, constant work needs to be done on them, and they can drift even just based on the RAG data instead of the model itself. Yeah,

[00:24:30] swyx: I was on a panel with one of the investors in Contextual, and the way that guy pitched it, I didn't agree with. He was like, this will solve hallucination.

[00:24:38] Alessio: That's what everybody says. We solve

[00:24:40] swyx: hallucination. I'm like, no, you reduce it. It cannot,

[00:24:44] Alessio: if you solved it, the model wouldn't exist, right? It would just be plain text. It wouldn't be a generative model. Cool. So, other architectures, then we got mixture of experts. I think we covered it a lot of times.

[00:24:56] Direction 4: Mixture of Experts (DeepSeekMoE, Samba-1)

[00:24:56] Alessio: Maybe any new interesting threads you want to go under here?

[00:25:00] swyx: DeepSeekMoE, which was released in January. Everyone who is interested in MoEs should read that paper, because it's significant for two reasons. Well, three reasons. One, it had small experts, like a lot more small experts. So, for some reason, everyone has settled on eight experts, for GPT-4, for Mixtral, you know, that seems to be the favorite architecture, but these guys pushed it to 64 experts, each of them smaller than the experts in those other models.

[00:25:26] swyx: But then they also had the second idea, which is that they had one to two always-on experts for common knowledge, and that's like a very compelling concept: that you would not route to all the experts all the time and make them, you know, switch to everything. You would have some always-on experts.

[00:25:41] swyx: I think that's interesting on both the inference side and the training side, for memory retention. And yeah, the results that they published, which actually excluded Mixtral, which is interesting, showed a significant performance jump versus all the other sort of open source models at the same parameter count.
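
To make the two ideas concrete, here is a minimal pure-Python sketch of a mixture-of-experts layer with many small routed experts plus a couple of always-on shared experts. It is an illustration under assumptions, not the DeepSeekMoE implementation: the 64 routed experts and 2 shared experts come from the conversation, while the dimension, the `TOP_K` value, the linear toy experts, and every function name are hypothetical.

```python
import math
import random


def softmax(xs):
    # Numerically stable softmax over a list of floats.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(dim, rng):
    # Each toy "expert" is just a dim x dim linear map (an assumption for brevity).
    return [[rng.gauss(0, 0.1) for _ in range(dim)] for _ in range(dim)]


def apply_expert(weights, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def moe_forward(x, shared, routed, router, top_k):
    out = [0.0] * len(x)
    # Shared experts run for every token, with no routing decision.
    for expert in shared:
        out = [o + y for o, y in zip(out, apply_expert(expert, x))]
    # The router scores every routed expert, but only the top_k are evaluated.
    scores = softmax([sum(r * xi for r, xi in zip(row, x)) for row in router])
    chosen = sorted(range(len(routed)), key=lambda i: scores[i], reverse=True)[:top_k]
    for i in chosen:
        y = apply_expert(routed[i], x)
        out = [o + scores[i] * yi for o, yi in zip(out, y)]
    return out, chosen


rng = random.Random(0)
DIM, N_SHARED, N_ROUTED, TOP_K = 8, 2, 64, 6  # DIM and TOP_K are hypothetical
shared = [make_expert(DIM, rng) for _ in range(N_SHARED)]
routed = [make_expert(DIM, rng) for _ in range(N_ROUTED)]
router = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(N_ROUTED)]
token = [rng.gauss(0, 1) for _ in range(DIM)]

output, chosen = moe_forward(token, shared, routed, router, TOP_K)
print(len(output), sorted(chosen))
```

The point of the sketch is the cost shape: every token pays for the 2 shared experts plus `TOP_K` routed ones, never for all 64.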

[00:26:01] swyx: So like this may be a better way to do MoEs that is about to get picked up. And so that is interesting for the third reason, which is this is the first time a new idea from China has infiltrated the West. It's usually the other way around. I probably overspoke there. There's probably lots more ideas that I'm not aware of.

[00:26:18] swyx: Maybe in the embedding space. But I think DeepSeekMoE, like, woke people up and said, like, hey, DeepSeek, this, like, weird lab that is attached to a Chinese hedge fund is somehow, you know, doing groundbreaking research on MoEs. So I classified this as medium potential because I think that it is sort of like a one-off benefit.

[00:26:37] swyx: You can add it to any base model to, like, make the MoE version of it, you get a bump, and then that's it. So, yeah,

[00:26:45] Alessio: I saw SambaNova, which is like another inference company. They released this MoE model called Samba-1, which is like 1 trillion parameters. But it's actually an MoE of open source models.

[00:26:56] Alessio: So it's like, they just clustered them all together. So I think people sometimes think MoE is like you just train a bunch of small models, or like smaller models, and put them together. But there's also people just taking, you know, Mistral plus CLIP plus, you know, DeepSeek Coder, and putting them all together.

[00:27:15] Alessio: And then you have an MoE model. I don't know. I haven't tried the model, so I don't know how good it is. But it seems interesting that you can then have people working separately on state of the art, you know, CLIP, state of the art text generation. And then you have an MoE architecture that brings them all together.

[00:27:31] swyx: I'm thrown off by your addition of the word CLIP in there. Is that what? Yeah, that's

[00:27:35] Alessio: what they said. Yeah, yeah. Okay. That's what they I just saw it yesterday. I was also like

[00:27:40] swyx: scratching my head. And they did not use the word adapter. No. Because usually what people mean when they say, oh, I add CLIP to a language model, is an adapter.

[00:27:48] swyx: Let me look up the Which is what LLaVA did.

[00:27:50] Alessio: The announcement again.

[00:27:51] swyx: Stable diffusion. That's what they do. Yeah, it

[00:27:54] Alessio: says among the models that are part of Samba-1 are Llama 2, Mistral, DeepSeek Coder, Falcon, DePlot, CLIP, LLaVA. So they're just taking all these models and putting them in an MoE. Okay,

[00:28:05] swyx: so a routing layer and then not jointly trained as much as a normal MOE would be.

[00:28:12] swyx: Which is okay.

[00:28:13] Alessio: That's all they say. There's no paper, you know, so it's like, I'm just reading the article, but I'm interested to see how

[00:28:20] Wildcard: Model Merging (mergekit)

[00:28:20] swyx: it works. Yeah, so the wildcard for this section, the MoE section, is model merges, which has also come up as a very interesting phenomenon. The last time I talked to Jeremy Howard at the Ollama meetup, we called it model grafting or model stacking.

[00:28:35] swyx: But I think the term that people are liking these days is model merging. There's all different variations of merge types, and some of them are stacking, some of them are grafting. And so some people are approaching model merging in the way that Samba is doing, which is like, okay, here are defined models, each of which have their specific pluses and minuses, and we will merge them together in the hope that, you know, the sum of the parts will be better than the others.

[00:28:58] swyx: And it seems like it's working. I don't really understand why it works, apart from, like, I think it's a form of regularization. That if you merge weights together in like a smart strategy, you get less overfitting and more generalization, which is good for benchmarks, if you're honest about your benchmarks.

[00:29:16] swyx: So this is really interesting and good. But again, they're kind of limited in terms of the amount of bumps you can get. But I think it's very interesting in the sense of how cheap it is. We talked about this on the ChinaTalk podcast, like the guest podcast that we did with ChinaTalk. And you can do this without GPUs, because it's just adding weights together, and dividing things, and doing like simple math, which is really interesting for the GPU poor.
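
As a rough illustration of "just adding weights together and dividing", here is a minimal sketch of the simplest merge, a weighted linear average of matching parameters, in plain Python on the CPU. It is an assumption-laden toy: real checkpoints are tensors rather than short lists, `merge_linear` is a hypothetical helper rather than mergekit's actual interface, and stacking or grafting merges work differently.

```python
def merge_linear(state_dicts, weights=None):
    """Weighted average of same-shaped parameters across several models."""
    if weights is None:
        # Default to a plain average: add everything up and divide by the count.
        weights = [1.0 / len(state_dicts)] * len(state_dicts)
    if len(weights) != len(state_dicts):
        raise ValueError("need exactly one weight per model")
    keys = state_dicts[0].keys()
    for sd in state_dicts:
        if sd.keys() != keys:
            raise ValueError("models must share the same parameter names")
    merged = {}
    for name in keys:
        length = len(state_dicts[0][name])
        merged[name] = [
            sum(w * sd[name][i] for w, sd in zip(weights, state_dicts))
            for i in range(length)
        ]
    return merged


# Two toy "models" with identical architecture (hypothetical values).
model_a = {"layer.weight": [1.0, 2.0, 3.0], "layer.bias": [0.0]}
model_b = {"layer.weight": [3.0, 2.0, 1.0], "layer.bias": [1.0]}

merged = merge_linear([model_a, model_b])
print(merged)
```

Nothing here needs a GPU: the merge is one multiply-add per parameter per model.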

[00:29:42] Alessio: There's a lot of them.

[00:29:44] Direction 5: Online LLMs (Gemini Pro, Exa)

[00:29:44] Alessio: And just to wrap these up, online LLMs? Yeah,

[00:29:48] swyx: I think that I had to feature this because one of the top news items of January was that Gemini Pro beat GPT-4 Turbo on LMSYS for the number two slot to GPT-4. And everyone was very surprised. Like, how does Gemini do that?

[00:30:06] swyx: Surprise, surprise, they added Google search to the results. So it became an online, quote unquote, online LLM and not an offline LLM. Therefore, it's much better at answering recent questions, which people like. There's an emerging set of table stakes features after you pre-train something.

[00:30:21] swyx: So after you pre train something, you should have the chat tuned version of it, or the instruct tuned version of it, however you choose to call it. You should have the JSON and function calling version of it. Structured output, the term that you don't like. You should have the online version of it. These are all like table stakes variants, that you should do when you offer a base LLM, or you train a base LLM.

[00:30:44] swyx: And I think online is just, like, important. I think companies like Perplexity, and even Exa, formerly Metaphor, you know, are rising to meet that search need. And it's kind of like, they're just necessary parts of a system. When you have RAG for internal knowledge, and then you have, you know, online search for external knowledge, like things that you don't know yet?

[00:31:06] swyx: And it seems like it's one of many tools. I feel like I may be underestimating this, but I'm just gonna put it out there that I think it has some potential. One of the evidence points that it doesn't actually matter that much is that Perplexity has had online LLMs for three months now and it doesn't perform great

[00:31:25] swyx: on LMSYS, it's like number 30 or something. So it's like, okay. You know, it helps, but it doesn't give you a giant, giant boost. I

[00:31:34] Alessio: feel like a lot of stuff I do with LLMs doesn't need to be online. So I'm always wondering, again, going back to like state of the art, right? It's like state of the art for who and for what.

[00:31:45] Alessio: It's really, I think online LLMs are going to be, State of the art for, you know, news related activity that you need to do. Like, you're like, you know, social media, right? It's like, you want to have all the latest stuff, but coding, science,

[00:32:01] swyx: Yeah, but I think. Sometimes you don't know what is news, what is news affecting.

[00:32:07] swyx: Like, the decision to use an offline LLM is already a decision that you might not be consciously making that might affect your results. Like, what if, like, just putting things on, being connected online means that you get to invalidate your knowledge. And when you're just using offline LLM, like it's never invalidated.

[00:32:27] swyx: I

[00:32:28] Alessio: agree, but I think going back to your point of like the standing the test of time, I think sometimes you can get swayed by the online stuff, which is like, hey, you ask a question about, yeah, maybe AI research direction, you know, and it's like, all the recent news are about this thing. So the LLM like focus on answering, bring it up, you know, these things.

[00:32:50] swyx: Yeah, so yeah, I think, I think it's interesting, but I don't know if I can, I bet heavily on this.

[00:32:56] Alessio: Cool. Was there one that you forgot to put, or, or like a, a new direction? Yeah,

[00:33:01] swyx: so, so this brings us into sort of February-ish.

[00:33:05] OpenAI Sora and why everyone underestimated videogen

[00:33:05] swyx: So, like, I published this, and then, like, the 15th came with Sora. And so the one thing I did not mention here was anything about multimodality.

[00:33:16] swyx: Right. And I have chronically underweighted this. I always wrestle. And, and my cop out is that I focused this piece or this research direction piece on LLMs because LLMs are the source of like AGI, quote unquote AGI. Everything else is kind of like. You know, related to that, like, generative, like, just because I can generate better images or generate better videos, it feels like it's not on the critical path to AGI, which is something that Nat Friedman also observed, like, the day before Sora, which is kind of interesting.

[00:33:49] swyx: And so I was just kind of like trying to focus on like what is going to get us like superhuman reasoning that we can rely on to build agents that automate our lives and blah, blah, blah, you know, give us this utopian future. But I do think that I, everybody underestimated the, the sheer importance and cultural human impact of Sora.

[00:34:10] swyx: And you know, really actually good text to video. Yeah. Yeah.

[00:34:14] Alessio: And I saw Jim Fan had a very good tweet about why it's so impressive. And I think when you have somebody leading the embodied research at NVIDIA and he says that something is impressive, you should probably listen. So yeah, there's basically like, I think you mentioned, like, impacting the world, you know, that we live in.

[00:34:33] Alessio: I think that's kind of like the key, right? It's like the LLMs don't have a world model, and Yann LeCun, he can come on the podcast and talk all about what he thinks of that. But I think Sora was like the first time where people were like, oh, okay, you're not statically putting pixels of water on the screen, which you can kind of like, you know, project without understanding the physics of it.

[00:34:57] Alessio: Now you're like, you have to understand how the water splashes when you have things. And even if you just learned it by watching video and not by actually studying the physics, You still know it, you know, so I, I think that's like a direction that yeah, before you didn't have, but now you can do things that you couldn't before, both in terms of generating, I think it always starts with generating, right?

[00:35:19] Alessio: But like the interesting part is like understanding it. You know, there's the video of, like, the ship in the water that they generated with Sora. Like, if you gave it the video back and now it could tell you why the ship is, like, too rocky, or it could tell you why the ship is sinking, then that's like, you know, AGI for like all your rig deployments and like all this stuff, you know. But there's none of that yet, so.

[00:35:44] Alessio: Hopefully they announce it and talk more about it. Maybe a Dev Day this year, who knows.

[00:35:49] swyx: Yeah who knows, who knows. I'm talking with them about Dev Day as well. So I would say, like, the phrasing that Jim used, which resonated with me, he kind of called it a data driven world model. I somewhat agree with that.

[00:36:04] Does Sora have a World Model? Yann LeCun vs Jim Fan

[00:36:04] swyx: I am more on Yann LeCun's side than I am on Jim's side, in the sense that I think that is the vision or the hope, that these things can build world models. But you know, clearly even at the current Sora size, they don't have strong consistency yet. They have very good consistency, but fingers and arms and legs will appear and disappear, and chairs will appear and disappear.

[00:36:31] swyx: That definitely breaks physics. And it also makes me think about how we do deep learning versus world models, in the sense of, you know, in classic machine learning, when you have too many parameters, you will overfit, and actually that fails, that, like, does not match reality, and therefore fails to generalize well.

[00:36:50] swyx: And like, what scale of data do we need in order to learn world models from video? A lot. Yeah. So I am cautious about taking this interpretation too literally. Obviously, you know, I get what he's going for, and he's obviously partially right. Obviously, transformers and these sort of neural networks are universal function approximators that theoretically could figure out world models. It's just like, how good are they, and how tolerant are we of hallucinations? We're not very tolerant. So it's gonna bias us toward creating very convincing things, but then not create the useful world models that we want.

[00:37:37] swyx: At the same time, what you just said, I think, made me reflect a little bit. Like, we just got done saying how important synthetic data is for training LLMs. And so, if this is a way of getting synthetic, you know, video data for improving our video understanding, then sure, by all means. Which we actually know, like, GPT-4 Vision and DALL-E were trained, kind of, co-trained together.

[00:38:02] swyx: And so, like, maybe this is on the critical path, and I just don't fully see the full picture yet.

[00:38:08] Alessio: Yeah, I don't know. I think there's a lot of interesting stuff. It's like, imagine you go back, you have Sora, you go back in time, and Newton didn't figure out gravity yet. Would Sora help you figure it out?

[00:38:21] Alessio: Because you start saying, okay, a man standing under a tree with, like, apples falling, and it's like, oh, they're always falling at the same speed in the video. Why is that? I feel like sometimes these engines can, like, pick up things. Like, humans have a lot of intuition, but if you ask the average person about, like, the physics of a fluid in a boat, they wouldn't be able to tell you the physics, but they can, like, observe it. But humans can only observe this much, you know, versus now you have these models to observe everything, and then they generalize these things, and maybe we can learn new things through the generalization that they pick up.

[00:38:55] swyx: But again, And it might be more observant than us in some respects. In some ways we can scale it up a lot more than the number of physicists that we have available at Newton's time. So like, yeah, absolutely possible. That, that this can discover new science. I think we have a lot of work to do to formalize the science.

[00:39:11] swyx: And then, I think the last part is, you know, how much do we cheat by generating data from Unreal Engine 5? Which is what a lot of people are speculating, with very, very limited evidence, that OpenAI did. The strongest evidence that I saw was someone who works a lot with Unreal Engine 5 looking at the side characters in the videos and noticing that they all adopt Unreal Engine defaults

[00:39:37] swyx: of, like, walking speed and character creation choice. And I was like, okay, that's actually pretty convincing that they actually used Unreal Engine to bootstrap some synthetic data for this training set. Yeah,

[00:39:52] Alessio: could very well be.

[00:39:54] swyx: Because then you get the labels and the training side by side.

[00:39:58] swyx: One thing that came up on the last day of February, which I should also mention, is EMO coming out of Alibaba, which is also a sort of video generation and space-time transformer that also probably involves a lot of synthetic data as well. And so this is of a kind, in the sense of, oh, you know, really good generative video is here, and it is not just the one, two second clips that we saw from other people, like, you know, Pika and Runway. Cristobal Valenzuela from Runway was like, game on, which, okay, but let's see your response, because we've heard a lot about Gen-1 and Gen-2, but it's nothing on this level of Sora. So it remains to be seen how we can actually apply this, but I do think that the creative industry should start preparing.

[00:40:50] swyx: I think the Sora technical blog post from OpenAI was really good. It was like a request for startups. It was so good in, like, spelling out: here are the individual industries that this can impact.

[00:41:00] swyx: And anyone who's interested in generative video should look at that. But also be mindful that, probably, when OpenAI releases a Sora API, right, the ways you can interact with it are very limited, just like the ways you can interact with DALL-E are very limited, and someone is gonna have to make Open Sora

[00:41:19] swyx: for you to create ComfyUI pipelines.

[00:41:24] Alessio: The Stability folks said they wanna build an open Sora competitor, but yeah, Stability. Their demo video was like so underwhelming. It was just like two people sitting on the beach

[00:41:34] swyx: standing. Well, they don't have it yet, right? Yeah, yeah.

[00:41:36] swyx: I mean, they just wanna train it. Everybody wants to, right? Yeah. I think what is confusing a lot of people about Stability is, like, they're pushing a lot of things in Stable Code, StableLM, and Stable Video Diffusion. But like, how much money do they have left? How many people do they have left?

[00:41:51] swyx: Yeah. Emad spent two hours with me, reassuring me things are great. And I'm like, I do believe that they have really, really quality people. But it's just like, I also have a lot of very smart people on the other side telling me, like, hey man, you know, don't put too much faith in this thing.

[00:42:11] swyx: So I don't know who to believe. Yeah.

[00:42:14] Alessio: It's hard. Let's see. What else? We got a lot more stuff. I don't know if we can. Yeah, Groq.

[00:42:19] Groq Math

[00:42:19] Alessio: We can

[00:42:19] swyx: do a bit of Groq prep. We're, we're about to go to talk to Dylan Patel. Maybe, maybe it's the audio in here. I don't know. It depends what, what we get up to later. What, how, what do you as an investor think about Groq? Yeah. Yeah, well, actually, can you recap, like, why is Groq interesting? So,

[00:42:33] Alessio: Jonathan Ross, who's the founder of Groq, he's the person that created the TPU at Google. It's actually, it was one of his, like, 20 percent projects. It's like, he was just on the side, dooby doo, created the TPU.

[00:42:46] Alessio: But yeah, basically, Groq, they had this demo that went viral, where they were running Mixtral at, like, 500 tokens a second, which is, like, faster than anything that you have out there. The question, you know, the memes were like, is NVIDIA dead? Like, people don't need H100s anymore. I think there's a lot of money that goes into building what Groq has built as far as the hardware goes.

[00:43:11] Alessio: We're gonna put some of the notes from Dylan in here, but basically the cost of the Groq system is like 30 times the cost of the H100 equivalent. So, so

[00:43:23] swyx: let me put some numbers, because me and Dylan were, like, I think the two people who actually tried to do Groq math. Spreadsheet doors.

[00:43:30] swyx: Spreadsheet doors. So, okay, oh boy. So the equivalent H100 for Llama 2 is $300,000, for a system of 8 cards. And for Groq it's $2.3 million, because you have to buy 576 Groq cards. So yeah, that just gives people an idea. So if you depreciate both over a five-year lifespan, per year you're depreciating $460K for Groq, and $60K a year for H100.
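
The depreciation arithmetic above can be checked in a few lines. This is a straight-line sketch that uses only the two system prices and the five-year lifespan quoted in the conversation; the function and constant names are made up for illustration, and it ignores power, hosting, and every other operating cost.

```python
def annual_depreciation(purchase_cost, lifespan_years):
    # Straight-line depreciation: spread the purchase price evenly over the lifespan.
    return purchase_cost / lifespan_years


GROQ_SYSTEM_COST = 2_300_000  # 576 Groq cards, as quoted above
H100_SYSTEM_COST = 300_000    # system of 8 H100 cards, as quoted above
LIFESPAN_YEARS = 5

groq_per_year = annual_depreciation(GROQ_SYSTEM_COST, LIFESPAN_YEARS)
h100_per_year = annual_depreciation(H100_SYSTEM_COST, LIFESPAN_YEARS)
ratio = groq_per_year / h100_per_year

print(f"Groq: ${groq_per_year:,.0f}/year")
print(f"H100: ${h100_per_year:,.0f}/year")
print(f"Groq costs about {ratio:.1f}x as much per year on these two figures")
```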

[00:43:59] swyx: So like, Groqs are just way more expensive per model that you're, that you're hosting. But then, you make it up in terms of volume. So I don't know if you want to

[00:44:08] Alessio: cover that. I think one of the promises of Groq is like super high parallel inference on the same thing. So you're basically saying, okay, I'm putting on this upfront investment on the hardware, but then I get much better scaling once I have it installed.

[00:44:24] Alessio: I think the big question is how much can you sustain the parallelism? You know, like if you're going to get 100 percent utilization rate at all times on Groq, it's just much better, you know, because at the end of the day, the cost per token that you're getting is better than with the H100s. But if you get to like 50 percent utilization rate, you will be much better off running on NVIDIA.

[00:44:49] Alessio: And if you look at most companies out there, who really gets 100 percent utilization rate? Probably OpenAI at peak times, but that's probably it. But yeah, curious to see more. I saw Jonathan was just at the Web Summit in Dubai, in Qatar. He just gave a talk there yesterday that I haven't listened to yet.

[00:45:09] Alessio: I tweeted that he should come on the pod. He liked it. And then Groq followed me on Twitter. I don't know if that means that they're interested, but

[00:45:16] swyx: hopefully. Groq's social media person is just very friendly. They, yeah. Hopefully
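
Here is a small sketch of why utilization dominates this comparison: with a fixed annual hardware cost, the hardware cost per million tokens scales inversely with utilization. Only the $460K annual figure comes from the conversation; the peak throughput is a made-up placeholder, the function name is hypothetical, and power, hosting, and staffing are ignored, so the output will not reproduce either spreadsheet.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def hardware_cost_per_million_tokens(annual_cost, peak_tokens_per_second, utilization):
    """Hardware-only cost per million tokens at a given utilization (0 < u <= 1)."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_year = peak_tokens_per_second * utilization * SECONDS_PER_YEAR
    return annual_cost / (tokens_per_year / 1_000_000)


ANNUAL_COST = 460_000            # Groq depreciation per year, from the conversation
HYPOTHETICAL_PEAK_TPS = 100_000  # assumed aggregate tokens/second, illustration only

full = hardware_cost_per_million_tokens(ANNUAL_COST, HYPOTHETICAL_PEAK_TPS, 1.0)
half = hardware_cost_per_million_tokens(ANNUAL_COST, HYPOTHETICAL_PEAK_TPS, 0.5)
tenth = hardware_cost_per_million_tokens(ANNUAL_COST, HYPOTHETICAL_PEAK_TPS, 0.1)

for label, cost in [("100%", full), ("50%", half), ("10%", tenth)]:
    print(f"{label} utilization: ${cost:.4f} per million tokens")
```

Halving utilization doubles the per-token hardware cost, so the system with the larger upfront price needs sustained load to come out ahead.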

[00:45:20] Alessio: we can get them. Yeah, we, we gonna get him. We

[00:45:22] swyx: just call him out. And so basically the key question is, like, how sustainable is this, and how much

[00:45:27] swyx: of this is a loss leader? The entire Groq management team has been on Twitter and Hacker News saying they are very, very comfortable with the pricing of $0.27 per million tokens. This is the lowest that anyone has offered tokens as far as Mixtral or Llama 2. This matches DeepInfra and, you know, I think that's about it in terms of that low.

[00:45:47] swyx: And we think the break-even for H100s is 50 cents, at a normal utilization rate. To make this work, so in my spreadsheet I made this work, you have to have like a parallelism of 500 requests all simultaneously. And you have model bandwidth utilization of 80%.

[00:46:06] swyx: Which is way high. I just gave them high marks for everything. Groq has two fundamental tech innovations that they hang their hats on in terms of, like, why we are better than everyone. You know, even though, like, it remains to be independently replicated. But one, you know, they have this sort of entire-model-on-the-chip idea, which is like, okay, get rid of HBM

[00:46:30] swyx: and, like, put everything in SRAM. Like, okay, fine, but then you need a lot of cards and whatever. And that's all okay. And so, because you don't have to transfer between memory, then you just save on that time and that's why they're faster. So, a lot of people buy that as, like, that's the reason that you're faster.

[00:46:45] swyx: Then they have, like, some kind of crazy compiler, or, like, Speculative routing magic using compilers that they also attribute towards their higher utilization. So I give them 80 percent for that. And so that all that works out to like, okay, base costs, I think you can get down to like, maybe like 20 something cents per million tokens.

[00:47:04] swyx: And therefore you actually are fine if you have that kind of utilization. But it's like, I have to make a lot of favorable assumptions for this to work.

[00:47:12] Alessio: Yeah. Yeah, I'm curious to see what Dylan says later.

[00:47:16] swyx: So he was like completely opposite of me. He's like, they're just burning money. Which is great.

[00:47:22] Analyzing Gemini's 1m Context, Reddit deal, Imagegen politics, Gemma via the Four Wars

[00:47:22] Alessio: Gemini, want to do a quick run through since this touches on all the four wars.

[00:47:28] swyx: Yeah, and I think this is the mark of a useful framework, that when a new thing comes along, you can break it down in terms of the four wars and sort of slot it in or analyze it in those four frameworks, and have nothing left.

[00:47:41] swyx: So it's a MECE categorization. MECE is Mutually Exclusive and Collectively Exhaustive. And that's a really, really nice way to think about taxonomies and to create mental frameworks. So, what is Gemini 1.5 Pro? It is the newest model that came out one week after Gemini 1.0. Which is very interesting.

[00:48:01] swyx: They have not really commented on why they released this. The headline feature is that it has a 1 million token context window that is multimodal, which means that you can put all sorts of video and audio and PDFs natively in there alongside text, and, you know, it's at least 10 times longer than anything that OpenAI offers, which is interesting.

[00:48:20] swyx: So it's great for prototyping and it has interesting discussions on whether it kills RAG.

[00:48:25] Alessio: Yeah, no, I mean, we always talk about, you know, Long context is good, but you're getting charged per token. So, yeah, people love for you to use more tokens in the context. And RAG is better economics. But I think it all comes down to like how the price curves change, right?

[00:48:42] Alessio: I think if anything, RAG's complexity goes up and up the more you use it, you know, because you have more data sources, more things you want to put in there. The token costs should go down over time, you know, if the model stays fixed. If people are happy with the model today. In two years, three years, it's just gonna cost a lot less, you know?

[00:49:02] Alessio: So now it's like, why would I use RAG and, like, go through all of that? It's interesting. I think RAG is better cutting edge economics for LLMs. I think large context will be better long tail economics when you factor in the build cost of, like, managing a RAG pipeline. But yeah, the recall was like the most interesting thing, because we've seen the, you know, needle in the haystack things in the past, but apparently they have 100 percent recall on anything across the context window.

[00:49:28] Alessio: At least they say nobody has used it. No, people

[00:49:30] swyx: have. Yeah, so what this needle in a haystack thing is, for people who aren't following as closely as us, is that someone, I forget his name now, someone created this needle in a haystack problem where you feed in a whole bunch of generated junk, not junk, but just, like, generated data, and ask it to specifically retrieve something in that data, like one line in like a hundred thousand lines where it has a specific fact, and if you get it, you're good.

[00:49:57] swyx: And then he moves the needle around, like, you know, does your ability to retrieve that vary if I put it at the start versus put it in the middle, put it at the end? And then you generate this, like, really nice chart that kind of shows the recall ability of a model. And he did that for GPT and Anthropic and showed that Anthropic did really, really poorly.
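
The procedure described here can be sketched as a small harness: insert one "needle" fact at different depths of a long filler context, ask about it, and record whether the answer contains the fact. This is an illustrative sketch, not the original benchmark code. `ask_model` is a hypothetical callable standing in for a real LLM call, and `toy_model` is a deliberately flawed stand-in that only reads the first half of its context.

```python
def build_haystack(filler_lines, needle, depth):
    """Insert the needle at a relative depth: 0.0 is the start, 1.0 is the end."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_lines))
    return filler_lines[:position] + [needle] + filler_lines[position:]


def run_needle_test(ask_model, filler_lines, needle, question, expected, depths):
    """Return {depth: True/False} for whether the expected fact was retrieved."""
    results = {}
    for depth in depths:
        context = "\n".join(build_haystack(filler_lines, needle, depth))
        answer = ask_model(context, question)
        results[depth] = expected.lower() in answer.lower()
    return results


def toy_model(context, question):
    # Stand-in for a real model: it only "sees" the first half of the lines,
    # which mimics recall that depends on where the needle sits.
    lines = context.split("\n")
    for line in lines[: len(lines) // 2]:
        if "magic number" in line:
            return line
    return "I don't know."


filler = [f"Filler sentence number {i}." for i in range(1000)]
results = run_needle_test(
    toy_model,
    filler,
    needle="The magic number is 7421.",
    question="What is the magic number?",
    expected="7421",
    depths=[0.0, 0.25, 0.5, 0.75, 1.0],
)
print(results)
```

Plotting `results` across depths and context lengths gives the kind of chart mentioned above; swapping `toy_model` for a real API call is the only change needed to test an actual model.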

[00:50:15] swyx: And then Anthropic came back and said it was a skill issue, just add these, like, four magic words, and then it's magically all fixed. And obviously everybody laughed at that. But what Gemini came out with was that, yeah, we reproduced their, you know, haystack test for Gemini, and it's good across all lengths,

[00:50:30] swyx: all the one million token window, which is very interesting, because usually for typical context extension methods like RoPE or YaRN or, you know, anything like that, or ALiBi, it's lossy. Like, by design it's lossy. Usually for conversations that's fine, because we are lossy when we talk to people, but for superhuman intelligence, perfect memory across very, very long context

[00:50:51] swyx: It's very, very interesting for picking things up. And so the people who have been given the beta test for Gemini have been testing this. So what you do is you upload, let's say, all of Harry Potter and you change one fact in one sentence, somewhere in there, and you ask it to pick it up, and it does. So this is legit.

[00:51:08] swyx: We don't super know how, because, yes, it's slow to inference, but it's not slow enough that it's, like, running five different systems in the background without telling you. Right. So it's something interesting that they haven't fully disclosed yet. The open source community has centered on this Ring Attention paper, which is created by your friend Matei Zaharia and a couple other people.

[00:51:36] swyx: And it's a form of distributing the compute. I don't super understand, like, why, you know, calculating the feedforward network and attention in blockwise fashion and distributing it makes it so good at recall. I don't think they have any answer to that. The only thing that Ring Attention is really focused on is basically infinite context.
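
For intuition about the "blockwise" part, here is a minimal single-query sketch showing that attention can be accumulated one key/value block at a time, with a running max and normalizer, and still match full attention exactly. This only illustrates the blockwise softmax accumulation that block-distributed schemes rely on; it does not distribute anything across devices, it skips the feedforward part, and it is not the Ring Attention implementation. All names and sizes are illustrative.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def full_attention(q, keys, values):
    # Reference: softmax over all scores at once, then a weighted sum of values.
    scores = [dot(q, k) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total for d in range(dim)]


def blockwise_attention(q, keys, values, block_size):
    # Process keys/values one block at a time, keeping only running statistics.
    running_max = float("-inf")
    normalizer = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block_size):
        k_block = keys[start:start + block_size]
        v_block = values[start:start + block_size]
        scores = [dot(q, k) for k in k_block]
        new_max = max(running_max, max(scores))
        # Rescale what was accumulated so far to the new max (0.0 on the first block).
        scale = math.exp(running_max - new_max)
        normalizer *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_block):
            w = math.exp(s - new_max)
            normalizer += w
            acc = [a + w * vd for a, vd in zip(acc, v)]
        running_max = new_max
    return [a / normalizer for a in acc]


rng = random.Random(0)
DIM, N_TOKENS = 4, 10
q = [rng.gauss(0, 1) for _ in range(DIM)]
keys = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(N_TOKENS)]
values = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(N_TOKENS)]

reference = full_attention(q, keys, values)
blocked = blockwise_attention(q, keys, values, block_size=3)
print(all(math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-12) for a, b in zip(reference, blocked)))
```

Because each block only needs the running max, normalizer, and accumulator, the blocks can live on different devices, which is what makes very long contexts feasible in the first place. It says nothing about why recall would be good, which matches the open question raised above.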

[00:51:59] swyx: They said it was good for like 10 to 100 million tokens. Which is just great. So yeah, using the four wars framework, what is this framework for Gemini? One is the sort of RAG and Ops war. Here we care less about RAG now, yes. Or, we still care as much about RAG, but, like, now it's not important in prototyping.

[00:52:21] swyx: And then, for the data war, I guess this is just part of the overall training dataset, but Google made a $60 million deal with Reddit and presumably they have deals with other companies. For the multimodality war, we can talk about the image generation crisis, or the fact that Gemini also has image generation, which we'll talk about in the next section.

[00:52:42] swyx: But it also has video understanding, which is, I think, the top Gemini post came from our friend Simon Willison, who basically did a short video of him scanning over his bookshelf. And it would be able to convert that video into a JSON output of what's on that bookshelf. And I think that is very useful.

[00:53:04] swyx: Actually ties into the conversation that we had with David Luan from Adept. In a sense of like, okay what if video was the main modality instead of text as the input? What if, what if everything was video in, because that's how we work. We, our eyes don't actually read, don't actually like get input, our brains don't get inputs as characters.

[00:53:25] swyx: Our brains get the pixels shooting into our eyes, and then our vision system takes over first, and then we sort of mentally translate that into text later. And so it's kind of like what Adept is kind of doing, which is driving by vision model, instead of driving by raw text understanding of the DOM. And in that episode, which we haven't released, I made the analogy to, like, self-driving by lidar versus self-driving by camera.

[00:53:52] swyx: Mm-hmm, right? Like, I think what Gemini and any other super long context model that is multimodal unlocks is, what if you just drive everything by video. Which is

[00:54:03] Alessio: cool. Yeah, and that's Joseph from Roboflow. It's like anything that can be seen can be programmable with these models.

[00:54:12] Alessio: You mean

[00:54:12] swyx: the computer vision guy is bullish on computer vision?

[00:54:18] Alessio: It's like the RAG people. The RAG people are bullish on RAG and not long context. I'm very surprised. The fine tuning people love fine tuning instead of few shot. Yeah. Yeah. I think the Ring Attention thing, and it's how they did it, we don't know. And then they released the Gemma models, which are like a 2 billion and 7 billion open

[00:54:41] Alessio: models, which people said are not good, based on my Twitter experience, which are the GPU poor crumbs. It's like, hey, we did all this work for us because we're GPU rich and we're just going to run this whole thing. And you guys can take these small models, and they're not very good. They're not better than the others, but at least we can say we made some open source stuff.

[00:55:02] swyx: Yeah, well, it's not actually technically open source, because the license is weird. They used the RAIL license from Hugging Face, which has been abandoned or, you know, they modified RAIL, particularly adopting the phrase that you should make reasonable efforts to update whenever you release a new version.

[00:55:19] swyx: And so people don't like that. Obviously, you know, it depends on your stance on open sourcing and all that, so. Yeah, I read the whole

[00:55:26] Alessio: post. I'm not going to go through it

[00:55:27] The Alignment Crisis - Gemini, Meta, Sydney is back at Copilot, Grimes' take

[00:55:27] swyx: again. Yeah, yeah, you can go read Alessio's post on whether open source matters or not. Okay, so I know this is like politically problematic, but we just cover it because it is news, and if it results in the resignation of Sundar Pichai, I think that is good.

[00:55:40] swyx: Right? So I've been calling this the alignment crisis. I think a lot of people have been focusing on Gemini, but I do think that it is not just Gemini. There's been documented examples that we can link in the show notes of Meta having unintentionally unaligned results. For Microsoft's Copilot, Sydney is apparently back.

[00:56:03] swyx: Our friend Justine from A16z somehow got it to break and then bring back the Sydney persona, which is interesting. And my favorite commentary is from Grimes. The sort of the Elon affiliated music artist. The news

[00:56:16] Alessio: research.

[00:56:17] swyx: The news research. I want to read her post because it is beautiful.

[00:56:22] swyx: Have you read this? Yeah. So she says so a lot of people criticize Gemini for being too woke. Effectively, right? And everyone's like, oh, like, you know, you're, you're, you're, you're, you know, you're replacing us or erasing us or whatever. And obviously as an artist, she's like upset about it. Then she was like, wait a minute.

[00:56:39] swyx: I'm retracting my statements about the Gemini art disaster. It is in fact a masterpiece of performance art, even if unintentional. True gain of function art. Art is a virus. Unthinking, unintentional, and contagious. Offensive to all, comforting to none, so totally divorced from meaning, intention, desire, and humanity that it's accidentally a conceptual masterpiece.

[00:56:57] swyx: Wow, and I love, okay, blah blah blah, it's a long post, but I love the way that she ended it. It's trapped in a cage, trained to make beautiful things, and then battered into gaslighting humankind about our intentions towards each other. This is arguably the most impactful art project of the decade thus far. Art for no one, by no one. Art whose only audience is the collective pathos. Incredible, and worthy of the MoMA.

[00:57:19] swyx: Facts. Like, art for no one, by no one, is what is going on. Yeah,

[00:57:26] Alessio: I think it's just another way of mode collapsing. It's just like, it's the RLHF mode collapse. It's like, okay, I just think everything should, like, trend towards this. And I think there's obviously, you know, it's a deep discussion on a lot of these things, but there's safety stuff that I would expect a lot of the model builders to say, hey, I definitely got to work on this.

[00:57:52] Alessio: But we talked about how image generation is not really on the AGI path, a lot of times, and it's like, okay. Yeah, and

[00:57:59] swyx: then I contradicted myself by saying, like, maybe it is useful synthetic data. Yeah, yeah, yeah,

[00:58:04] Alessio: exactly. But then it's like, okay, then why are the image generation models, like, so much... because the internet is so visual, I think.

[00:58:14] Alessio: The image generation models get, like, so much interest in, like, a lot of these things, but if their job is really to, like, go build AGI, like, just build a great model and let it go, but

[00:58:24] F*** you, show me the prompt

[00:58:24] swyx: No, but part of my issue is that, I think the prompt stuff from Gemini is honestly the work of, like, one or two people who, like, didn't really think it through at Google, and now they're facing a huge backlash.

[00:58:35] swyx: Yeah, Elon has picked, specifically picked a fight with the product manager who did it. And so, specifically for those who don't know the reason that Gemini is so woke is literally because they just take your prompt and they rewrite it to be more diverse. Without your consent or knowledge, right?

[00:58:48] swyx: And Hamel Husain, who's a good consultant on AI things, actually wrote an interesting blog post recently, which was basically fuck you, show me the prompt. Which is like, stop hiding prompts from me, stop rewriting magic things away from me, and then like, you know, hiding it, obscuring it, because I need that control, I need that visibility.

[00:59:05] swyx: And I think like, people just didn't understand that this tendency towards diversity did not exist at the model level, it actually existed at the prompt level. And it was just inserted by probably like two or three guys without much review. That's it. And that made all of Google look bad, which is absurd.
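Editor's sketch: the distinction drawn here, between behavior that lives in the model and behavior inserted at the prompt level, can be made concrete with a small wrapper. This is a minimal sketch under stated assumptions, not how Gemini, DALL-E, or any real product works: `PreparedPrompt`, `prepare_prompt`, and the example rewriter are all hypothetical names invented for illustration. The point is only that a pipeline can rewrite a prompt while still returning the final text and the list of rewrites, so the caller can see exactly what is sent to the model.

```python
from dataclasses import dataclass


@dataclass
class PreparedPrompt:
    user_prompt: str   # what the user actually typed
    final_prompt: str  # what would be sent to the model
    rewrites: list     # names of the rewrite steps that changed the text


def prepare_prompt(user_prompt, rewriters):
    """Apply each (name, function) rewriter in order and record which ones fired."""
    final = user_prompt
    applied = []
    for name, rewrite in rewriters:
        updated = rewrite(final)
        if updated != final:
            applied.append(name)
        final = updated
    return PreparedPrompt(user_prompt, final, applied)


def add_style_suffix(prompt):
    """Hypothetical rewriter: appends a style hint unless one is already present."""
    suffix = ", high detail"
    return prompt if prompt.endswith(suffix) else prompt + suffix


if __name__ == "__main__":
    prepared = prepare_prompt("a lighthouse at dusk",
                              [("add_style_suffix", add_style_suffix)])
    # Nothing is hidden: the caller sees the original, the final text, and the steps.
    print(prepared.user_prompt)
    print(prepared.final_prompt)
    print(prepared.rewrites)
```

A hidden rewrite would return only the model output; exposing `final_prompt` and `rewrites` is one way to give users the control and visibility asked for in "show me the prompt".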

[00:59:24] swyx: Like, you know, it throws away a lot of the work that, you know, the rest of Google did. Specifically Imagen 2. This is Imagen 2. And I've met that team and they're, you know, they're good, they're smart. They're a completely different team than Imagen 1, which is another fun topic of conversation.

[00:59:39] swyx: So, I think, like, that's interesting, but what's more interesting is, like, OpenAI has done this before. People don't remember, they used to append, like, Black or, like, you know, Asian or whatever to their prompts just to make it more diverse in DALL-E. And they didn't get cancelled.

[00:59:54] swyx: So I think this will go away. But what really is more interesting is at the model level, like, are we overaligning things? And people are now focusing on the alignment of Gemini as well in text only, as also still being too woke. So I think this is like a phenomenon that needs to be studied and, you know, trained.

[01:00:14] swyx: Like, obviously they will try to make attempts, but. You know, they're not going to make anyone happy. And then, like, I think my last point on this, because obviously we can talk about this all day with no result. I think that this is a huge incentive for, like, China and, like, Russia to put out their own models.

[01:00:29] swyx: Because models are soft power. Like the best way to control how someone thinks is to go in and provide their thinking assistance and like subtly make changes like, you know, it's too on the nose to be like, Oh, I don't know what Tiananmen Square is, you know, like, but if you have like subtle ways of affecting the biases of your decisions, your reasoning, your, you know, your, your knowledge in, in the LLM and in publishing a really, really good LLM for everyone to use.

[01:00:58] swyx: So that they're like, Oh yeah, this is great. You know and I use them as maybe a leading LLM. Then they will just like uncritically accept that as like state of the art digital intelligence, and that becomes soft power, and that translates into unconscious thought a lot of times.

[01:01:14] Alessio: Yeah. Yeah. I, I think the prompt point, it's great.

[01:01:18] Alessio: You know, you just gotta, you just wanna see what it is, you know, like, you understand? Yeah. Show me the prompts. Yeah, yeah, yeah. And same, yeah, on the model side, I think there are just some things that are almost, you cannot, like the, "Did the meme or Hitler bring more harm to humanity?" And Gemini is like, oh, it's hard to say if Elon Musk tweeting or Hitler. It's like, what, how, what, there's something wrong in the data pipelines. You know, like, there's something wrong somewhere. Yeah,

[01:01:45] swyx: but like, this is, like, to an LLM, this is the same class of error as, which is heavier?

[01:01:51] swyx: One pound of feathers or one pound of bricks? So,

[01:01:54] Alessio: but, but then like, how can, but, but to me the point is more like Okay, then, won't we? What can we help these models do, you know, because if they cannot, if the, the physical stuff, I get it because it's like the whole like world model thing, but then it's like, okay, can we expect the models to say what's more harmful than something else?

[01:02:13] Alessio: Maybe not. That might be where we land. Then it's like, okay, that's one more thing. And then. We kind of go down the line, and it's like, what are these models good for? If anything, it's too, like, hard for them to pick up when it's like ARP.

[01:02:24] swyx: But We'll see, we'll see. Yeah. Okay, so, I mean, you know, I know we're up on time.

[01:02:28] Send us your suggestions pls

[01:02:28] swyx: Like, this has been an eventful month. I think, you know, February was a lot more interesting than January. In fact, a lot of my January recap was, like, how nothing's changed. Mm hmm. And then February came out, and it was, like, very, very interesting. So yeah, we hope to see what's next. Also, this was the month that we did Compute Provider Month, I think relatively successful.

[01:02:48] swyx: Surprisingly hard to string together all these compute providers. Yeah,

[01:02:52] Alessio: we did it. People like it, you know, based on the post stats. So, maybe we'll do something

[01:02:58] swyx: else. Yeah, if you want, you know, if anyone listening wants more sort of thematic explorations of like, okay, these three, four companies always come out together, like, let's get a focused effort on those things.

[01:03:09] swyx: I think we're open to doing that. We, you know, and then obviously we'll have opportunistic interviews along the way.

[01:03:15] Alessio: Cool. Thank you everyone for tuning in and yeah, keep the feedback coming.

[01:03:19] AI Charlie: That was the Latent Space recap of January and February 2024. If you have any feedback or questions, please head to the show notes for ways to get in touch with us or come by the Latent Space Discord. For those who just want the core content, you can stop listening here. But for the super fans, you might notice that there's 45 more minutes of audio left in this pod.

[01:03:47] AI Charlie: That's because in February, we also celebrated Latent Space's first anniversary. Some of you may remember how we launched our very first episode with Logan Kilpatrick, now formerly of OpenAI, and a massively popular Demo Day. Click through to the show notes for photos. Over 750,000 downloads later, having established ourselves as the top AI engineering podcast, reaching #10 in the

[01:04:13] AI Charlie: U.S. tech podcast charts, and crossing 1 million unique readers on Substack, we celebrated with Latent Space Final Frontiers, a combination demo day and birthday celebration. We're going to bring you some snippets from the demo day, and then some conversations with listeners from all over the world.

[01:04:31] AI Charlie: From Hungary to China to my own sunburnt country down under on how the issues we've covered in Latent Space have impacted their lives. First up, we'll have a demo from Florent Crivello from Lindy.ai, who gave a great keynote at the last AI Engineer Summit and recently opened up Lindy.ai to the general public.

[01:04:50] Latent Space Anniversary

[01:04:50] Lindy.ai - Agent Platform

[01:04:50] Flo Crivello: We were just chatting right now with Swyx, like, we come with 3,000 plus integrations out of the box. We have a partnership with Naton, which is like an open source Zapier, and so we have, like, a ton of integrations out of the box.