Latent Space is popping off! Welcome to the over 8500 latent space explorers who have joined us. Join us this month at various events in SF and NYC, or start your own!

This post spent 22 hours at the top of Hacker News.

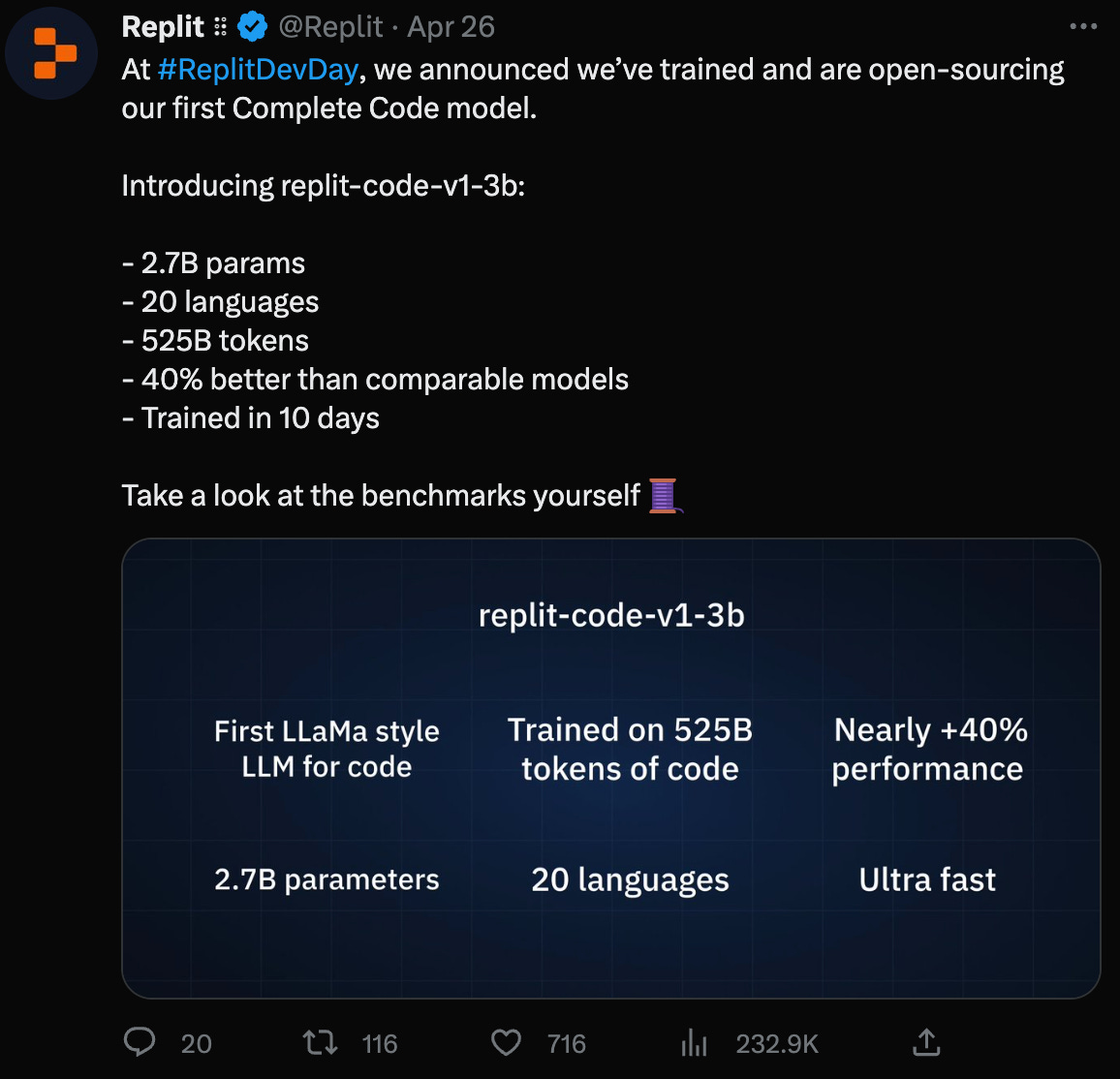

As announced during their Developer Day celebrating their $100m fundraise following their Google partnership, Replit is now open sourcing its own state of the art code LLM: replit-code-v1-3b (model card, HF Space), which beats OpenAI’s Codex model on the industry standard HumanEval benchmark when finetuned on Replit data (despite being 77% smaller1) and more importantly passes AmjadEval (we’ll explain!)

We got an exclusive interview with Reza Shabani, Replit’s Head of AI, to tell the story of Replit’s journey into building a data platform, building GhostWriter, and now training their own LLM, for 22 million developers!

8 minutes of this discussion go into a live demo discussing generated code samples - which is always awkward on audio. So we’ve again gone multimodal and put up a screen recording here where you can follow along on the code samples!

Recorded in-person at the beautiful StudioPod studios in San Francisco.

Full transcript is below the fold. We would really appreciate if you shared our pod with friends on Twitter, LinkedIn, Mastodon, Bluesky, or your social media poison of choice!

Timestamps

[00:00:21] Introducing Reza

[00:01:49] Quantitative Finance and Data Engineering

[00:11:23] From Data to AI at Replit

[00:17:26] Replit GhostWriter

[00:20:31] Benchmarking Code LLMs

[00:23:06] AmjadEval live demo

[00:31:21] Aligning Models on Vibes

[00:33:04] Beyond Chat & Code Completion

[00:35:50] Ghostwriter Autonomous Agent

[00:38:47] Releasing Replit-code-v1-3b

[00:43:38] The YOLO training run

[00:49:49] Scaling Laws: from Kaplan to Chinchilla to LLaMA

[00:52:43] MosaicML

[00:55:36] Replit's Plans for the Future (and Hiring!)

[00:59:05] Lightning Round

Show Notes

Reza on how to train your own LLMs (their top blog of all time)

Our Benchmarks 101 episode where we discussed HumanEval

Replit’s AI team is hiring in North America timezone - Fullstack engineer, Applied AI/ML, and other roles!

Transcript

[00:00:00] Alessio Fanelli: Hey everyone. Welcome to the Latent Space podcast. This is Alessio, partner and CTO in residence at Decibel Partners. I'm joined by my co-host, swyx, writer and editor of Latent Space.

[00:00:21] Introducing Reza

[00:00:21] swyx: Hey and today we have Reza Shabani, Head of AI at Replit. Welcome to the studio. Thank you. Thank you for having me. So we try to introduce people's bios so you don't have to repeat yourself, but then also get a personal side of you.

[00:00:34] You got your PhD in econ from Berkeley, and then you were a startup founder for a bit, and then you went into systematic equity trading at BlackRock and Wellington. And then something happened and you are now Head of AI at Replit. What should people know about you that might not be apparent on LinkedIn?

[00:00:50] Reza Shabani: One thing that comes up pretty often is whether I know how to code. Yeah, you'd be shocked. A lot of people are kind of like, do you know how to code? When I was talking to Amjad about this role, I'd originally talked to him, I think, about a product role, and didn't get it. Then he was like, well, I know you've done a bunch of data and analytics stuff.

[00:01:07] We need someone to work on that. And I was like, sure, I'll do it. And he was like, okay, but you might have to know how to code. And I was like, yeah, yeah, I know how to code. So I think that just kind of surprises people, coming from like an econ background. People are always kind of like, wait, even when people join Replit, they're like, wait, does this guy actually know how to code?

[00:01:28] Is he actually technical? Yeah.

[00:01:30] swyx: You did a bunch of number crunching at top financial companies and it still wasn't obvious.

[00:01:34] Reza Shabani: Yeah. Yeah. I mean, I think for someone in a software engineering background, cuz you think of finance and you think of like calling people to get the deal done and that type of thing.

[00:01:43] No, it's not that. As you know, it's very, very quantitative. Especially what I did in finance, very quantitative.

[00:01:49] Quantitative Finance and Data Engineering

[00:01:49] swyx: Yeah, so we can cover a little bit of that and then go into the Replit journey. So as you know, I was also a quantitative trader on the sell side and the buy side. And yeah, I actually learned Python there.

[00:02:01] I wrote my own data pipelines there before Airflow was a thing, and it was just me writing and running notebooks and not version controlling them. And it was a complete mess, but we were managing a billion dollars on my crappy code. Yeah, yeah. What was it like for you?

[00:02:17] Reza Shabani: I guess somewhat similar.

[00:02:18] I started the journey during grad school, so during my PhD, and my PhD was in economics and it was always on the more data-intensive kind of applied economics side. And specifically financial economics. And so what I did for my dissertation: I recorded CNBC, the financial news network, for 10 hours a day, every day.

[00:02:39] Extracted the closed captions from the video files and then used that to create a second-by-second transcript of CNBC, merged that with high-frequency trading quote data, and then went in and did some NLP, tagging the company names, and then looked at the price response, or the change in price and trading volume, in the seconds after a company was mentioned.
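The merge described here (timestamped company mentions joined against quote data, then a price change measured a few seconds later) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the 5-second horizon, the `ACME` ticker, and the toy quotes are all hypothetical, and the dissertation's actual pipeline is not described in that detail.

```python
from bisect import bisect_right

def price_at(quotes, t):
    """Return the last quoted price at or before second t, or None if there is none.

    `quotes` is a list of (second, price) tuples sorted by time.
    """
    times = [q[0] for q in quotes]
    i = bisect_right(times, t)
    return quotes[i - 1][1] if i else None

def price_response(mentions, quotes_by_ticker, horizon=5):
    """For each (second, ticker) mention, compute the return over `horizon` seconds."""
    out = []
    for t, ticker in mentions:
        quotes = quotes_by_ticker[ticker]
        p0 = price_at(quotes, t)            # price when the company is mentioned
        p1 = price_at(quotes, t + horizon)  # price `horizon` seconds later
        if p0 is None or p1 is None:
            continue
        out.append((t, ticker, (p1 - p0) / p0))
    return out

# Hypothetical toy data: one ticker, one on-air mention at second 4.
quotes_by_ticker = {"ACME": [(0, 100.0), (3, 100.0), (7, 101.0), (12, 102.0)]}
mentions = [(4, "ACME")]
responses = price_response(mentions, quotes_by_ticker, horizon=5)
```

Taking the last quote at or before each timestamp avoids looking ahead in time, which matters when the effect being measured plays out over seconds.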

[00:03:01] And this was back in 2009 that I was doing this. So before cloud, before a lot of Python actually. And definitely before any of these packages were available to make this stuff easy. And that's where I had to really learn to code, like outside of, you know, any kind of like data programming languages.

[00:03:21] That's when I had to learn Python and had to learn all of these other skills to work with data at that scale. So then, you know, I thought I wanted to do academia. I did terribly on the academic market because everyone looked at my dissertation. They're like, this is cool, but this isn't economics.

[00:03:37] And everyone in the computer science department was actually way more interested in it. Like I hung out there more than in the econ department and, you know, didn't get a single academic offer. I think I only applied to like two industry jobs and got offers from both of them.

[00:03:53] They, they saw value in it. One of them was BlackRock and turned it down to, to do my own startup, and then went crawling back two and a half years later after the startup failed.

[00:04:02] swyx: Something on your LinkedIn was like you're trading Chinese news tickers or something.

[00:04:07] Reza Shabani: Oh, yeah. I forget what that was.

[00:04:08] Yeah, I mean, there was so much stuff. Honestly, like, systematic active equity at BlackRock was such an amazing group and you just end up learning so much, and the possibilities there. Like when you go in and you learn the types of things that they've been trading on for years. You know, like a paper will come out in academia and they're like, did you know you can use like this data on searches to predict the price of cars?

[00:04:33] And it's like, you go in and they've been trading on that for like eight years. Yeah. So they're really ahead of the curve on all of that stuff. And the really interesting stuff that I found when I went in was all related to NLP and ML: a lot of transcript data, a lot of parsing through the types of things that companies talk about, whether in analyst reports, conference calls, earnings reports. And the devil's really in the details about how you make sense of that information in a way that, you know, gives you insight into what the company's doing and where the market is going.

[00:05:08] I don't know if we can like nerd out on specific strategies. Yes. Let's go, let's go. So one of my favorite strategies, because I don't think we ended up trading on it, so I can probably talk about it. And it just kind of shows the kind of work that you do around this data.

[00:05:23] It was called emerging technologies. And so the whole idea is that there's always a new set of emerging technologies coming onto the market and the companies that are ahead of that curve and stay up to date on the latest trends are gonna outperform their competitors.

[00:05:38] And that's gonna reflect in the stock price. So when you have a theory like that, how do you actually turn that into a trading strategy? So what we ended up doing is, well, first you have to determine what are the emergent technologies, like what are the new up and coming technologies.

[00:05:56] And so we actually went and pulled data on startups. And so there's like startups in Silicon Valley. You have all these descriptions of what they do, and you get that corpus of like when startups were getting funding. And then you can run non-negative matrix factorization on it and create these clusters of what the various emerging technologies are, and you have this all the way going back, and you have like social media back in like 2008 when Facebook was blowing up.

[00:06:21] And you have things like mobile and digital advertising and a lot of things actually outside of Silicon Valley, you know, like shale and oil fracking. Yeah. Like new technologies in all these different types of industries. And then you go and you look at which publicly traded companies are actually talking about these things and have exposure to these things.
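To make the clustering step concrete, here is a minimal pure-Python sketch of non-negative matrix factorization on a bag-of-words matrix, using the standard multiplicative update rules for squared error. Everything specific in it is an assumption for illustration: the four toy descriptions, the choice of two clusters, and the helper names are hypothetical, and a real pipeline would more likely use a library implementation on a far larger corpus.

```python
import random

def bag_of_words(docs):
    """Turn raw descriptions into a documents x terms count matrix."""
    vocab = sorted({word for doc in docs for word in doc.split()})
    return vocab, [[doc.split().count(term) for term in vocab] for doc in docs]

def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def squared_error(v, w, h):
    approx = matmul(w, h)
    return sum((x - y) ** 2 for rv, ra in zip(v, approx) for x, y in zip(rv, ra))

def nmf(v, k, iters=200, seed=0, eps=1e-9):
    """Approximate non-negative v (docs x terms) as w @ h with k components."""
    rng = random.Random(seed)
    n, m = len(v), len(v[0])
    w = [[rng.random() + 0.1 for _ in range(k)] for _ in range(n)]
    h = [[rng.random() + 0.1 for _ in range(m)] for _ in range(k)]
    for _ in range(iters):
        # H <- H * (W^T V) / (W^T W H), elementwise
        wt = transpose(w)
        num, den = matmul(wt, v), matmul(matmul(wt, w), h)
        h = [[h[i][j] * num[i][j] / (den[i][j] + eps) for j in range(m)] for i in range(k)]
        # W <- W * (V H^T) / (W H H^T), elementwise
        ht = transpose(h)
        num, den = matmul(v, ht), matmul(w, matmul(h, ht))
        w = [[w[i][j] * num[i][j] / (den[i][j] + eps) for j in range(k)] for i in range(n)]
    return w, h

# Hypothetical startup descriptions, two themes.
docs = [
    "mobile payments app",
    "social photo sharing network",
    "mobile app payments wallet",
    "social network photo sharing",
]
vocab, v = bag_of_words(docs)
w, h = nmf(v, k=2)
# Assign each description to its strongest component, and list each component's top terms.
clusters = [max(range(2), key=lambda c: row[c]) for row in w]
top_terms = [sorted(vocab, key=lambda t: -h[c][vocab.index(t)])[:3] for c in range(2)]
```

The rows of `h` play the role of the "emerging technology" themes; scoring public-company transcripts against those themes would be a separate step that this sketch does not attempt.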

[00:06:42] And those are the companies that end up staying ahead of their competitors. And a lot of the cases that came out of that made a ton of sense. Like when mobile was emerging, you had Walmart Labs. Walmart was really far ahead in terms of thinking about mobile and the impact of mobile.

[00:06:59] And, you know, Sears wasn't, and Walmart did well, and Sears didn't. So lots of different examples of that, of like a company that talks about a new emerging trend. I can only imagine, like right now, all of the stuff with AI, there must be tons of companies talking about, yeah, how does this affect their business?

[00:07:18] swyx: And at some point you do lose the signal. Because you get overwhelmed with noise by people slapping AI on everything. Right? Which is, yeah. Yeah. That's when the Long Island Iced Tea Company slaps like blockchain on their name and, you know, their stock price like doubled or something.

[00:07:32] Reza Shabani: Yeah, no, that, that's absolutely right.

[00:07:35] And right now that's definitely the kind of strategy that would not be performing well, because everyone would be talking about AI. And as you know, that's a lot of what you do in quant: you try to weed out other possible explanations for why this trend might be happening.

[00:07:52] And in that particular case, I think we found that it wasn't like Sears and Walmart were both talking about mobile. It's that Walmart went out of their way to talk about mobile as like a future, mm-hmm, trend. Whereas Sears just wouldn't bring it up. And then by the time investors are asking you about it, you're probably late to the game.

[00:08:12] So it was really identifying those companies that were at the cutting edge of new technologies and staying ahead. I remember like Domino's was another big one. Like, I don't know, you remember that?

[00:08:21] swyx: So for those who don't know, Domino's Pizza, I think for the run of most of the 2010s, was a better performing stock than Amazon.

[00:08:29] Yeah.

[00:08:31] Reza Shabani: It's insane.

[00:08:32] swyx: Yeah. Because of their investment in mobile. Mm-hmm. And just online commerce and all that. It must have been fun picking that up.

[00:08:40] Reza Shabani: Yeah, that's interesting. And I think they had, I don't know if you remember, they had like the pizza tracker, which was on mobile.

[00:08:46] swyx: I use it myself. It's a great app. Great app. It's mostly faked, I think.

[00:08:50] Reza Shabani: That's what I heard. I think it's gonna be like a huge, I don't know. I'm waiting for like the New York Times article to drop that shows that the whole thing was fake. We all thought our pizzas were at those stages, but they weren't.

[00:09:01] swyx: The challenge for me, so there's a great piece by Eric Falkenstein called Batesian Mimicry, where every signal essentially gets overwhelmed by noise, because the people who create noise want to follow the signal makers. So that actually is why I left quant trading, because there's just too much regime changing, and things that would backtest very well would test poorly out of sample.

[00:09:25] And I'm sure you've had a little bit of that. And then there was the core uncertainty of like, okay, I have identified a factor that performs really well, but that's one factor out of 500 other factors that could be going on. You have no idea. So anyway, that was my existential uncertainty, plus the fact that it was a very highly stressful job.

[00:09:43] Reza Shabani: Yeah. This is a bit of a tangent, but I think about this all the time and I used to have a great answer before ChatGPT came out, but do you think that AI will win at quant ever?

[00:09:54] swyx: I mean, what is RenTech doing? Whatever they're doing is working, apparently. Yeah. But for most mortals, just waving your wand and saying AI doesn't make sense when your sample size is actually fairly low.

[00:10:08] Yeah. Like we have maybe 40 years of financial history, if you're lucky. Mm-hmm. Times what, 4,000 listed equities. It's actually not a lot.

[00:10:17] Reza Shabani: Yeah, no, it's not a lot at all. And constantly changing market conditions and latent variables and all of that as well.

[00:10:24] swyx: Yeah. And then retroactively you're like, oh, okay.

[00:10:26] Someone will discover a giant factor that, that like explains retroactively everything that you've been doing that you thought was alpha, that you're like, Nope, actually you're just exposed to another factor that you're just, you just didn't think about everything was momentum in.

[00:10:37] Yeah. And one piece that I really liked was Andrew Lo, from MIT. I think he had a paper on bid-ask spreads. And I think if you just took into account liquidity of markets, that would account for a lot of active trading strategies' alpha. And that systematically declined as interest rates declined.

[00:10:56] And I mean, it was, it was just like after I looked at that, I was like, okay, I'm never gonna get this right.

[00:11:01] Reza Shabani: Yeah. It's a, it's a crazy field and I you know, I, I always thought of like the, the adversarial aspect of it as being the, the part that AI would always have a pretty difficult time tackling.

[00:11:13] Yeah. Just because, you know, there's, there's someone on the other end trying to out, out game you and, and AI can, can fail in a lot of those situations. Yeah.

[00:11:23] swyx: Cool.

[00:11:23] From Data to AI at Replit

[00:11:23] Alessio Fanelli: Awesome. And now you've been at Replit almost two years. What do you do there? Like what does the team do? Like, how has that evolved since you joined?

[00:11:32] Especially since large language models are now top of mind, but, you know, two years ago it wasn't quite as mainstream. So how, how has that evolved?

[00:11:40] Reza Shabani: Yeah, so when I joined, I joined a year and a half ago. We actually had to build out a lot of data pipelines.

[00:11:45] And so I started doing a lot of data work. And, you know, there were like databases for production systems and whatnot, but we just didn't have the infrastructure to query data at scale and to process that data at scale. And Replit has tons of users, tons of data, just tons of Repls.

[00:12:04] And I can get into some of those numbers, but like, if you wanted to answer the question, for example, of what is the most forked Repl on Replit, you couldn't answer that back then because the query would just completely time out. And so a lot of the work originally just went into building data infrastructure, like modernizing the data infrastructure in a way where you can answer questions like that, where you can, you know, pull in data from any particular Repl to process, to make available for search.

[00:12:34] And moving all of that data into a format where you can do all of this in minutes as opposed to, you know, days or weeks or months. That laid a lot of the groundwork for building anything in AI, at least in terms of training our own models and then fine-tuning them with Replit data.

[00:12:50] So then, you know, we started a team last year, recruited people, from a team of zero or a team of one to the AI and data team today. We build everything related to Ghostwriter. So that means the various features like Explain Code, Generate Code, Transform Code, and Ghostwriter Chat, which is like an in-context chat product within the IDE.

[00:13:18] And then the code completion models, which are Ghostwriter Code Complete, which was the very first version of Ghostwriter. Yeah. And we also support, you know, things like search, and anything that requires large-scale processing of data for the site.

[00:13:38] And, and various types of like ML algorithms for the site, for internal use of the site to do things like detect and stop abuse. Mm-hmm.

[00:13:47] Alessio Fanelli: Yep. Sounds like a lot of the early stuff you worked on was more analytical, kind of like analyzing data, getting answers on these things. Obviously this has evolved now into some

[00:13:57] production use case, code LLMs. How has the team, and maybe some of the skills, changed? I know there's a lot of people wondering, oh, I was like a modern data stack expert, or whatever. It's like I was doing feature development, like, how's my job gonna change?

[00:14:12] Reza Shabani: Yeah. It's a good question. I mean, I think that with language models, the shift has kind of been from traditional ML, a lot of the shift has gone towards more like NLP-backed ML, I guess.

[00:14:26] And so, you know, there's an entire skill set of applicants that I no longer see, at least for this role, which are like people who know how to do time series and ML across time. Right. And, yeah, like you know that exact feeling of how difficult it is to, you know, you have like some text or some variable and then all of a sudden you wanna track that over time.

[00:14:50] The number of dimensions that it introduces is just wild and it's a totally different skill set than what we do, for example, in language models. And it's a skill that is kind of, you know, at least at Replit, not used much. And I'm sure in other places used a lot, but a lot of the kind of excitement about language models has pulled away attention from some of these other ML areas, which are extremely important and, I think, still going to be valuable.

[00:15:21] So I would just recommend like anyone who is a, a data stack expert, like of course it's cool to work with NLP and text data and whatnot, but I do think at some point it's going to you know, having, having skills outside of that area and in more traditional aspects of ML will, will certainly be valuable as well.

[00:15:39] swyx: Yeah. I'd like to spend a little bit of time on this data stack notion. You were effectively the first data hire at Replit. And I just spent the past year myself diving into the data ecosystem. I think a lot of software engineers are actually completely unaware that basically every company now eventually evolves

[00:15:57] a data team, and the data team does everything that you just mentioned. Yeah. All of us do exactly the same things, set up the same pipelines, you know, shop at the same warehouses essentially. Yeah, yeah, yeah, yeah. So that they enable everyone else to query whatever they want. And to find those insights that can drive their business.

[00:16:15] Because everyone wants to be data driven. They don't want to do the janitorial work that comes with, yeah, yeah, hooking everything up. So Replit is, I think, like 90-ish people now, and then you joined two years ago. Was it like 30-ish people? Yeah, exactly. We were 30 people when I joined.

[00:16:30] So I just wanna establish for founders: that is exactly when we hired our first data hire at Netlify as well. I think this is just a very common pattern that most founders should be aware of, that you start to build a data discipline at this point. And by the way, a lot of ex-finance people are very good at this, because that's what we do at our finance job.

[00:16:48] Reza Shabani: Yeah. Yeah. I was actually gonna say that, in some ways, you're kind of like the perfect first data hire, because, you know, you know how to build things in a reliable but fast way, and how to build them in a way that scales over time and evolves over time, because financial markets move so quickly that if you were to take all of your time building up these massive systems, like, the trading opportunity's gone.

[00:17:14] So, yeah. Yeah, they're very good at it. Cool. Okay. Well,

[00:17:18] swyx: I wanted to cover Ghostwriter as a standalone thing first. Okay. Yeah. And then go into code, you know, V1 or whatever you're calling it. Yeah. Okay. Okay. That sounds good. So order it however you like.

[00:17:26] Replit GhostWriter

[00:17:26] Reza Shabani: Sure. So the original version of Ghostwriter we shipped in August of last year.

[00:17:33] Yeah. And so this was a code completion model similar to GitHub's Copilot. And so, you know, you would have some text and then it would predict what comes next. And the original version was actually based off of the CodeGen model. And so this was an open source model developed by Salesforce that was trained on tons of publicly available code data.

[00:17:58] And so then we took their model, one of the smaller ones, did some distillation, some other kind of fancy tricks to make it much faster, and deployed that. And so the innovation there was really around how to reduce the model footprint to a size where we could actually serve it to our users.

[00:18:20] And so the original Ghostwriter, you know, we leaned heavily on open source. And our friends at Salesforce obviously were huge in developing these models. But it was game changing just because we were the first startup to actually put something like that into production.

[00:18:38] And at the time, you know, if you wanted something like that, there was only one name and one place in town to get it. And at the same time, I think, I'm not sure if that's like when the image models were also becoming open sourced for the first time. And so the world went from this place where, you know, there was like literally one company that had all of these really advanced models to, oh wait, maybe these things will be everywhere.

[00:19:04] And that's exactly what's happened in the last year or so, as the models get more powerful and then you always kind of see like an open source version come out that someone else can build and put into production very quickly at, you know, a fraction of the cost. So yeah, that was the kind of code completion Ghostwriter was, really just that. We wanted to fine-tune it a lot to kind of change the way that our users could interact with it.

[00:19:31] So just to make it, you know, more customizable for our use cases on Replit. And so people on Replit write a lot of, like, JSX for example, which I don't think was in the original training set for CodeGen. And they do specific things that are more tuned to like HTML, like they might wanna write like inline style or like inline CSS basically.

[00:19:50] Those types of things. And so we experimented with fine-tuning CodeGen a bit here and there, and the results just kind of weren't there, they weren't where, you know, we wanted the model to be. And then we just figured we should just build our own infrastructure to, you know, train these things from scratch.

[00:20:11] Like, LLMs aren't going anywhere. This world's not, you know, it's not like we're going back to that world of there's just one game in town. And we had the skills, infrastructure, and the team to do it. So we just started doing that. And, you know, we'll be this week releasing our very first open source code model.

[00:20:31] And,

[00:20:31] Benchmarking Code LLMs

[00:20:31] Alessio Fanelli: And when you say it was not where you wanted it to be, how were you benchmarking it?

[00:20:36] Reza Shabani: In that particular case, we were actually, so we have really two sets of benchmarks that we use. One is HumanEval, so just the standard kind of benchmark for Python, where you give the model a function definition with some string describing what it's supposed to do, and then you allow it to complete that function, and then you run a unit test against it and see if what it generated passes the test.

[00:21:02] So we would always run this on the model. The funny thing is the fine-tuned versions of CodeGen actually did pretty well on that benchmark. But then we have something, instead of HumanEval, we call it AmjadEval, which is basically like, what does Amjad think?

[00:21:22] Yeah, it's exactly that. It's like testing the vibes of a model. And it's cra... like, I've never seen anyone test the model so thoroughly in such a short amount of time. He knows exactly what to write and how to prompt the model to get, you know, a very quick read on its quote unquote vibes.

[00:21:43] And we take that like really seriously. And I remember there was like one time where we trained a model that had really good, you know, HumanEval scores. And the vibes were just terrible. Like, it seemed overtrained. So that's a lot of what we found: we just couldn't get it to pass the vibes test no matter how the, how

[00:22:04] swyx: eval.

[00:22:04] Well, can you formalize AmjadEval? Because I actually have a slight discomfort with HumanEval effectively being the only code benchmark, yeah, that we have. Yeah. Isn't that weird?

[00:22:14] Reza Shabani: It's bizarre. It's weird that we can't do better than that in some way.

[00:22:21] swyx: So, okay. If I asked you to formalize AmjadEval, what does he look for that HumanEval doesn't do well on?

[00:22:25] Reza Shabani: Ah, that is a, that's a great question. A lot of it is kind of a lot of it is contextual like deep within, within specific functions. Let me think about this.

[00:22:38] swyx: Yeah, we can pause if you need to pull up something.

[00:22:41] Reza Shabani: Yeah, let me pull up a few.

[00:22:43] swyx: This is gold, this is catnip for people.

[00:22:45] Okay. Because we might actually influence a benchmark being evolved, right. So, yeah.

[00:22:50] Reza Shabani: Yeah. That would be huge. This was his original message when he said it passed the vibes test with flying colors. And so you have some Ghostwriter comparisons: Ghostwriter on the left, and CodeGen is on the right.

[00:23:06] AmjadEval live demo

[00:23:06] Reza Shabani: So here's Ghostwriter. Okay.

[00:23:09] swyx: So basically, if I summarize it, for Ghostwriter there's a bunch of comments talking about how you basically implement a clone process, or how to clone a process. And it's describing a bunch of possible states that he might want to match.

[00:23:25] And then it asks for a single line of code for defining what possible values of a namespace it might be, to initialize it. In AmjadEval, what model is this? Is this your... This is our model. This is the one we're releasing. Yeah. Yeah. It actually defines constants which are human readable and nice.

[00:23:42] And then in the other, the CodeGen Salesforce model, it just initializes it to zero because it reads that it starts of an int. Yeah, exactly. So

[00:23:51] Reza Shabani: interesting. Yeah. So you had a much better explanation of, of that than than I did. It's okay. So this is, yeah. Handle operation. This is on the left.

[00:24:00] Okay.

[00:24:00] swyx: So this is Replit's version. Yeah. Where it's implementing a function and infilling, is that what it's doing, inside of a sum operation?

[00:24:07] Reza Shabani: This, so this one doesn't actually do the infill, so that's the completion inside of the, of the sum operation. But it, it's not, it's, it, it's not taking into account context after this value, but

[00:24:18] swyx: Right, right.

[00:24:19] So it's writing an inline lambda function in Python. Okay.

[00:24:21] Reza Shabani: Mm-hmm. Versus

[00:24:24] swyx: this one is just passing in the nearest available variable. It's, it can find, yeah.

[00:24:30] Reza Shabani: Okay. So so, okay. I'll, I'll get some really good ones in a, in a second. So, okay. Here's tokenize. So

[00:24:37] swyx: This is an assertion on a value, and it's helping to basically complete the entire, I think it looks like an AST that you're writing here.

[00:24:46] Mm-hmm. That's good. And then what does Salesforce CodeGen do? This is Salesforce CodeGen here. So is that invalid in some way, or what are we supposed to... It's just making up tokens. Oh, okay. Yeah, yeah, yeah. So it's just much better at context. Yeah. Okay.

[00:25:04] Reza Shabani: And I guess to be fair, we have to show a case where CodeGen does better.

[00:25:09] Okay. All right. So here's one on the left, right, which

[00:25:12] swyx: is another assertion where it's just saying that if you pass in a list, it's going to throw an exception saying it expected a list, and Salesforce CodeGen says,

[00:25:24] Reza Shabani: This is, so Ghostwriter was sure that the first argument needs to be a list

[00:25:30] swyx: here.

[00:25:30] So it hallucinated that it wanted a list. Yeah. Even though you never said it was gonna be a list.

[00:25:35] Reza Shabani: Yeah. And it's, it's a argument of that. Yeah. Mm-hmm. So, okay, here's a, here's a cooler quiz for you all, cuz I struggled with this one for a second. Okay. What is.

[00:25:47] swyx: Okay, so this is a for loop example from Amjad.

[00:25:50] And it's sort of like a Q&A context in a chat bot. And Amjad is asking, what does this code log? And it just pastes in some JavaScript code. The JavaScript code is a for loop with a setTimeout inside of it. The console logs out the iteration variable of the for loop at increasing amounts of time.

[00:26:10] So it goes from zero to five and then it just increases the delay between the timeouts each time. Yeah.

[00:26:15] Reza Shabani: So, okay. So this answer was provided by by Bard. Mm-hmm. And does it look correct to you? Well,

[00:26:22] the

[00:26:22] Alessio Fanelli: numbers too, but it's not one second. It's the time between them increases.

[00:26:27] It's like the first one, then the one is one second apart, then it's two seconds, three seconds. So

[00:26:32] Reza Shabani: it's not, well, well, so I, you know, when I saw this and, and the, the message and the thread was like, "Our model's better than Bard at, at coding." Uh-huh. This is the Bard answer. Uh-huh. That looks totally right to me.

[00:26:46] Yeah. And this is our

[00:26:47] swyx: answer. It logs 5, 5, 5, 5, 5. What is it? Logs five... oh. Oh, because it logs the state of i, which is five by the time that the log happens. Mm-hmm. Yeah.
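The snippet Amjad pasted is not reproduced in the transcript, so the following is only a Python analogue of the same pitfall, not the original JavaScript. It assumes the quiz hinges on callbacks that share one loop variable and read it only when they finally run, which is what swyx describes.

```python
# Python analogue of the quiz (the original JavaScript is not in the transcript).
# Each callback closes over the same variable `i`, not a snapshot of its value.
callbacks = []
i = 0
while i < 5:
    callbacks.append(lambda: i)  # late binding: `i` is looked up when called
    i += 1

# By the time any callback runs, the loop has finished and i == 5.
late = [cb() for cb in callbacks]

# Binding the current value as a default argument captures it per iteration,
# much like declaring the loop variable with `let` in JavaScript.
snapshots = [(lambda j=j: j) for j in range(5)]
early = [cb() for cb in snapshots]

print(late)   # [5, 5, 5, 5, 5]
print(early)  # [0, 1, 2, 3, 4]
```

A model that answers "0 through 4" is describing the second list; the first list is what a shared loop variable actually produces.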

[00:27:01] Reza Shabani: Oh God. So like we, you know, we were shocked. Like, and, and the Bard answer looked totally right to, to me. Yeah. And then, and somehow our code completion model, mind you, like this is not a conversational chat model.

[00:27:14] Mm-hmm. Somehow gets this right. And, you know, Bard, obviously a much larger, much more capable model with all this fancy transfer learning and whatnot, somehow, you know, doesn't get it right. So this is the kind of stuff that goes into AmjadEval that you won't find in any benchmark.

[00:27:35] Good. And and, and it's, it's the kind of thing that, you know, makes something pass a, a vibe test at Replit.

[00:27:42] swyx: Okay. Well, okay, so to me, this is not so much a vibe test as, these are just interview questions. Yeah, that's, we're straight up just asking interview questions

[00:27:50] Reza Shabani: right now. Yeah, no, the, the vibe test, the reason why it's really difficult to kind of show screenshots that have a vibe test is because it really kind of depends on like how snappy the completion is, how what the latency feels like and if it gets, if it, if it feels like it's making you more productive.

[00:28:08] And and a lot of the time, you know, like the, the mix of, of really low latency and actually helpful content and, and helpful completions is what makes up the, the vibe test. And I think part of it is also, is it. Is it returning to you or the, the lack of it returning to you things that may look right, but be completely wrong.

[00:28:30] I think that also kind of affects Yeah. Yeah. The, the vibe test as well. Yeah. And so, yeah, th this is very much like a, like a interview question. Yeah.

[00:28:39] swyx: The, the one with the number of processes that, that was definitely a vibe test. Like what kind of code style do you expect in this situation? Yeah.

[00:28:47] Is this another example? Okay.

[00:28:49] Reza Shabani: Yeah. This is another example with some more Okay. Explanations.

[00:28:53] swyx: Should we look at the Bard one

[00:28:54] Reza Shabani: first? Sure. These are, I think these are, yeah. This is original GPT-3 with full size, 175 billion

[00:29:03] swyx: parameters. Okay, so you asked GPT-3: I'm a highly intelligent question answering bot.

[00:29:07] If you ask me a question that is rooted in truth, I'll give you the answer. If you ask me a question that is nonsense, I will respond with unknown. And then you ask it a question: what is the square root of a banana? It answers nine. So complete hallucination, and it failed to follow the instruction that you gave it.

[00:29:22] I wonder if it follows if, if you use an instruction-tuned version it might, yeah, do better?

[00:29:28] Reza Shabani: On, on the original

[00:29:29] swyx: GPT? Yeah, because like, you're, you're giving it instructions and it's not

[00:29:33] Reza Shabani: instruction tuned. Now. Now the interesting thing though is our model here, which does follow the instructions this is not instruction tuned yet, and we still are planning to instruction tune.

[00:29:43] Right? So it's like for like, yeah, yeah, exactly. So,

[00:29:45] swyx: So this is the Replit model. Same question: what is the square root of a banana? And it answers unknown. And this being one of the things that Amjad was talking about, which you guys are finding as a discovery, which is, it's better on pure natural language questions, even though you trained it on code.

[00:30:02] Exactly. Yeah. Hmm. Is that because there's a lot of comments in,

[00:30:07] Reza Shabani: No. I mean, I think part of it is that there's a lot of comments and there's also a lot of natural language in, in a lot of code, right? In terms of documentation, you know, you have a lot of like Markdown and reStructuredText, and there's also just a lot of web-based code on, on Replit, and HTML tends to have a lot of natural language in it.

[00:30:27] But I don't think the comments from code would help it reason in this way. And, you know, where you can answer questions like based on instructions, for example. Okay. But yeah, it's, I know that that's like one of the things. That really shocked us is the kind of the, the fact that like, it's really good at, at natural language reasoning, even though it was trained on, on code.

[00:30:49] swyx: Was this the reason that you started running your model on HellaSwag and

[00:30:53] Reza Shabani: all the other Yeah, exactly. Interesting. And the, yeah, it's, it's kind of funny. Like it's in some ways it kind of makes sense. I mean, a lot of like code involves a lot of reasoning and logic which language models need and need to develop and, and whatnot.

[00:31:09] And so you know, we, we have this hunch that maybe that using that as part of the training beforehand and then training it on natural language above and beyond that really tends to help. Yeah,

[00:31:21] Aligning Models on Vibes

[00:31:21] Alessio Fanelli: this is so interesting. I, I'm trying to think, how do you align a model on vibes? You know, like Bard, Bard is not purposefully being bad, right?

[00:31:30] Like, there's obviously something either in like the training data, like how you're running the process that like, makes it so that the vibes are better. It's like when it, when it fails this test, like how do you go back to the team and say, Hey, we need to get better

[00:31:44] Reza Shabani: vibes. Yeah, let's do, yeah. Yeah. It's a, it's a great question.

[00:31:49] It's a di it's very difficult to do. It's not you know, so much of what goes into these models in, in the same way that we have no idea how we can get that question right. The programming you know, quiz question. Right. Whereas Bard got it wrong. We, we also have no idea how to take certain things out and or, and to, you know, remove certain aspects of, of vibes.

[00:32:13] Of course there's, there's things you can do to like scrub the model, but it's, it's very difficult to, to get it to be better at something. It's, it's almost like all you can do is, is give it the right type of, of data that you think will do well. And then and, and of course later do some fancy type of like, instruction tuning or, or whatever else.

[00:32:33] But a lot of what we do is finding the right mix of optimal data that we want to, to feed into the model and then hoping that the, that the data that's fed in is sufficiently representative of, of the type of generations that we want to do coming out. That's really the best that, that you can do.

[00:32:51] Either the model has. Vibes or, or it doesn't, you can't teach vibes. Like you can't sprinkle additional vibes in it. Yeah, yeah, yeah. Same in real life. Yeah, exactly right. Yeah, exactly. You

[00:33:04] Beyond Code Completion

[00:33:04] Alessio Fanelli: mentioned, you know, Copilot being the only show in town when you started. Now you have this, there's obviously a, a bunch of them, right?

[00:33:10] Cody, which we had on the podcast, there used to be Tabnine, Kite, all these different, all these different things. Like, do you think the vibes are gonna be the main, you know, way to differentiate them? Like, how are you thinking about what's gonna make Ghostwriter, like, stand apart? Or like, do you just expect this to be like table stakes for any tool?

[00:33:28] So like, it just gonna be there?

[00:33:30] Reza Shabani: Yeah. I, I do think it's, it's going to be table stakes for sure. I, I think that if you don't, if you don't have AI-assisted technology, especially in, in coding, it's, it's just going to feel pretty antiquated. But, but I do think that Ghostwriter stands apart from some of, of these other tools for, for specific reasons too.

[00:33:51] So this is kind of the, one of, one of the things that these models haven't really done yet is Come outside of code completion and outside of, of just a, a single editor file, right? So what they're doing is they're, they're predicting like the text that can come next, but they're not helping with the development process quite, quite yet outside of just completing code in a, in a text file.

[00:34:16] And so the types of things that we wanna do with Ghostwriter are enable it to, to help in the software development process, not just editing particular files. And so, so that means using a right mix of like the right model for, for the task at hand. But, but we want Ghostwriter to be able to, to create scaffolding for you for, for these projects.

[00:34:38] And so imagine if you would like Terraform, but, but powered by Ghostwriter, right? I want to, I put up this website, I'm starting to get a ton of traffic to it and, and maybe like I need to, to create a backend database. And so we want that to come from Ghostwriter as well, so it can actually look at your traffic, look at your code, and create,

[00:34:59] You know a, a schema for you that you can then deploy in, in Postgres or, or whatever else? You know, I, I know like doing anything in in cloud can be a nightmare as well. Like if you wanna create a new service account and you wanna deploy you know, nodes on and, and have that service account, kind of talk to those nodes and return some, some other information, like those are the types of things that currently we have to kind of go, go back, go look at some documentation for Google Cloud, go look at how our code base does it you know, ask around in Slack, kind of figure that out and, and create a pull request.

[00:35:31] Those are the types of things that we think we can automate away with, with more advanced uses of, of Ghostwriter once we go past, like, here's what would come next in, in this file. So, so that's the real promise of it, is, is the ability to help you kind of generate software instead of just code in a, in a particular file.

[00:35:50] Ghostwriter Autonomous Agent

[00:35:50] Reza Shabani: Are

[00:35:50] Alessio Fanelli: you giving REPL access to the model? Like not Replit, like the actual REPL. Like once the model generates some of this code, especially when it's in the background, not the completion use case, can it actually run the code to see if it works? There's like a cool open source project called Wolverine that does something like that.

[00:36:07] It's like self-healing software. Like it gives it REPL access and like keeps running until it fixes

[00:36:11] Reza Shabani: itself. Yeah. So, so, so right now there, so there's Ghostwriter Chat and Ghostwriter code completion. So Ghostwriter Chat does have, have that advantage in, in that it can, it, it knows all the different parts of, of the IDE, and so for example, like if an error is thrown, it can look at the, the traceback and suggest like a fix for you.
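The self-healing loop Alessio describes (run the code, read the traceback, propose a fix, try again) can be sketched in a few lines. This is an illustration only: `suggest_fix` is a hypothetical stand-in for a model call and simply patches one known typo, and nothing here reflects how Ghostwriter is actually implemented.

```python
import traceback
from typing import Optional, Tuple


def run_snippet(source: str) -> Optional[str]:
    """Execute source; return None on success or the traceback text on failure."""
    try:
        exec(compile(source, "<snippet>", "exec"), {})
        return None
    except Exception:
        return traceback.format_exc()


def suggest_fix(source: str, error: str) -> str:
    """Hypothetical stand-in for a model call: patches one known mistake."""
    if "NameError" in error and "pritn" in source:
        return source.replace("pritn", "print")
    return source


def heal(source: str, max_attempts: int = 3) -> Tuple[str, bool]:
    """Run, read the error, apply the suggested fix, and retry."""
    for _ in range(max_attempts):
        error = run_snippet(source)
        if error is None:
            return source, True
        source = suggest_fix(source, error)
    return source, run_snippet(source) is None


fixed, ok = heal("total = sum([1, 2, 3])\npritn(total)")
```

In a real product the `exec` call would have to run inside a sandbox, since the code being executed is untrusted model output.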

[00:36:33] So it has that type of integration. But the, what, what we really want to do is, is merge the two in a way where we want Ghostwriter to be like, like an autonomous agent that can actually drive the IDE. So in these action models, you know, where you have like a sequence of, of events and then you can use, you know, transformers to kind of keep track of that sequence and predict the next, next event.

[00:36:56] It's how, you know, companies like, like Adept work, these like browser models that can, you know, go and scroll through different websites or, or take some, some series of actions in a, in a sequence. Well, it turns out the IDE is actually a perfect place to do that, right? So like when we talk about creating software, not just completing code in a file, what do you do when you, when you build software?

[00:37:17] You, you might clone a repo and then you, you know, will go and change some things. You might add a new file, go down, highlight some text, delete that value, and point it to some new database, depending on the value in a different config file or in your environment. And then you would go in and add an additional block of code to, to extend its functionality and then you might deploy that.

[00:37:40] Well, we, we have all of that data right there in the Replit IDE. And, and we have like terabytes and terabytes of, of OT data, you know, operational transform data. And so, you know, we can, we can see that like this person has created a, a file, what they call it, and, you know, they start typing in the file.

[00:37:58] They go back and edit a different file to match the, you know, the class name that they just put in, in the original file. All of that, that kind of sequence data is what we're looking to, to train our next model on. And so that, that entire kind of process of actually building software within the IDE, not just like, here's some text, what comes next, but rather the, the actions that go into, you know, creating a fully developed program.

[00:38:25] And a lot of that includes, for example, like running the code and seeing does this work, does this do what I expected? Does it error out? And then what does it do in response to that error? So all, all of that is like, insanely valuable information that we want to put into our, our next model. And, and that's like, we think that one can be way more advanced than the, than this, you know, Ghostwriter code completion model.
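To make the "predict the next action" idea concrete, here is a minimal sketch of turning one editing session into training examples. The event names and the `(action, target)` shape are invented for illustration; Replit's real operational-transform format is not described in the conversation.

```python
from typing import List, Tuple

# Hypothetical event vocabulary; the real OT data format is not given
# in the conversation.
Event = Tuple[str, str]  # (action, target)

session: List[Event] = [
    ("create_file", "models.py"),
    ("type", "class User"),
    ("open_file", "views.py"),
    ("edit", "rename Account -> User"),
    ("run", "main.py"),
    ("error", "ImportError"),
    ("edit", "fix import"),
    ("run", "main.py"),
]


def next_action_pairs(events: List[Event], context: int = 3):
    """Turn one session into (previous events, next event) training examples."""
    pairs = []
    for i in range(1, len(events)):
        history = events[max(0, i - context):i]
        pairs.append((history, events[i]))
    return pairs


pairs = next_action_pairs(session)
for history, target in pairs[-2:]:
    print([action for action, _ in history], "->", target[0])
```

Note that the run, error, edit, run tail of the session is exactly the kind of signal Reza mentions: what a developer does in response to a failure.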

[00:38:47] Releasing Replit-code-v1-3b

[00:38:47] swyx: Cool. Well we wanted to dive in a little bit more on, on the model that you're releasing. Maybe we can just give people a high level what is being released what have you decided to open source and maybe why open source the story of the YOLO project and Yeah. I mean, it's a cool story and just tell it from the start.

[00:39:06] Yeah.

[00:39:06] Reza Shabani: So, so what's being released is the, the first version that we're going to release. It's a, it's a code model called replit-code-v1-3b. So this is a relatively small model. It's 2.7 billion parameters. And it's a, it's the first LLaMA-style model for code. So, meaning it's just seen tons and tons of tokens.

[00:39:26] It's been trained on 525 billion tokens of, of code, all permissively licensed code. And it's, it's three epochs over the training set. And, you know, all of that in a, in a 2.7 billion parameter model. And in addition to that, we, for, for this project or, and for this model, we trained our very own vocabulary as well.

[00:39:48] So this, this doesn't use the CodeGen vocab. For, for the tokenizer we, we trained a totally new tokenizer on the underlying data from, from scratch, and we'll be open sourcing that as well. The vocabulary size is, is in the 32 thousands as opposed to the 50 thousands.

[00:40:08] Much more specific for, for code. And, and so it's smaller faster, that helps with inference, it helps with training and it can produce more relevant content just because of the you know, the, the vocab is very much trained on, on code as opposed to, to natural language. So, yeah, we'll be releasing that.

[00:40:29] This week it'll be up on, on Hugging Face so people can take it, play with it, you know, fine tune it, do all types of things with it. We want to, we're eager and excited to see what people do with the, the code completion model. It's, it's small, it's very fast. We think it has great vibes, but we, we hope like other people feel the same way.

[00:40:49] And yeah. And then after, after that, we might consider releasing the Replit-tuned model at, at some point as well, but still doing some, some more work around that.
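The figures Reza quotes (2.7 billion parameters, 525 billion tokens, three epochs) imply a couple of derived numbers worth spelling out. The arithmetic below assumes the three epochs were equal passes over one dataset, which the conversation suggests but does not state outright.

```python
PARAMS = 2.7e9        # parameters, as quoted
TOKENS_SEEN = 525e9   # total training tokens, as quoted
EPOCHS = 3            # passes over the training set, as quoted

# Assumption: equal-sized epochs, so the underlying dataset is one third
# of the tokens the model saw.
unique_tokens = TOKENS_SEEN / EPOCHS
tokens_per_param = TOKENS_SEEN / PARAMS

print(f"unique tokens: ~{unique_tokens / 1e9:.0f}B")
print(f"tokens seen per parameter: ~{tokens_per_param:.0f}")
```

Under that assumption the dataset is roughly 175B tokens, and the model saw a bit under 200 tokens per parameter, which is what "LLaMA-style" refers to here.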

[00:40:58] swyx: Right? So there are actually two models: replit-code-v1-3b and replit-finetuned-v1-3b. And the fine-tuned one is the one that has the 50% improvement in, in common sense benchmarks, which is going from 20% to 30%.

[00:41:13] For,

[00:41:13] Reza Shabani: for yes. Yeah, yeah, yeah, exactly. And so, so that one, the, the additional tuning that was done on that was on the publicly available data on, on Replit. And so, so that's, that's, you know, data that's in public Repls and is permissively licensed. So fine tuning on, on that then leads to a surprisingly better, like significantly better model, which is this replit-finetuned-v1-3b: same size, you know, same very fast inference, same vocabulary and everything.

[00:41:46] The only difference is that it's been trained on additional Replit data. Yeah.

[00:41:50] swyx: And I think I'll call out that I think in one of the follow-up Q&As that Amjad mentioned, people had some concerns with using Replit data. Not, I mean, the licensing is fine, it's more about the data quality because there's a lot of beginner code, yeah.

[00:42:03] And a lot of maybe wrong code. Mm-hmm. But it apparently just wasn't an issue at all. You did

[00:42:08] Reza Shabani: some filtering. Yeah. I mean, well, so, so we did some filtering, but, but as you know, it's when you're, when you're talking about data at that scale, it's impossible to keep out, you know, all of the, it's, it's impossible to find only select pieces of data that you want the, the model to see.

[00:42:24] And, and so a lot of the, a lot of that kind of, you know, people who are learning to code material was in there anyway. And, and you know, we obviously did some quality filtering, but a lot of it went into the fine tuning process and it really helped for some reason. You know, there's a lot of high quality code on, on Replit, but there's like you, like you said, a lot of beginner code as well.

[00:42:46] And that was, that was the really surprising thing, is that that somehow really improved the model and its reasoning capabilities. It felt much more kind of instruction tuned afterward. And, and you know, we have our kind of suspicions as, as to why. There's, there's a lot of like assignments on Replit that kind of explain this is how you do something and then you might have like answers and, and whatnot.

[00:43:06] There's a lot of people who learn to code on, on Replit, right? And, and like, think of a beginner coder, like think of a code model that's learning to, to code, learning this reasoning and logic. It's probably a lot more valuable to see that type of, you know, the, the type of stuff that you find on Replit as opposed to like a large legacy code base that, that is, you know, difficult to, to parse and, and figure out.

[00:43:29] So, so that was very surprising to see, you know, just such a huge jump in, in reasoning ability once trained on, on Replit data.

[00:43:38] The YOLO training run

[00:43:38] swyx: Yeah. Perfect. So we're gonna do a little bit of storytelling just leading up to the, the Developer Day that you had last week. Yeah. My understanding is you decide, you raised some money, you decided to have a Developer Day, you had a bunch of announcements queued up.

[00:43:52] And then you were like, let's train the language model. Yeah. You published a blog post and then you announced it on Developer Day. What, what, and, and you called it the YOLO, right? So like, let's just take us through like the

[00:44:01] Reza Shabani: sequence of events. So so we had been building the infrastructure to kind of to, to be able to train our own models for, for months now.

[00:44:08] And so that involves like laying out the infrastructure, being able to pull in the, the data, process it at scale. Being able to do things like train your own tokenizers. And, and even before this, you know, we had to build out a lot of this data infrastructure for, for powering things like search.

[00:44:24] There's over, I think the public number is like 230 million Repls on, on Replit. And each of these Repls has like many different files and, and lots of code, lots of content. And so you can imagine like what it must be like to, to be able to query that, that amount of, of data in a, in a reasonable amount of time.

[00:44:45] So we've, you know, we spent a lot of time just building the infrastructure that allows for, for us to do something like that and, and really optimize that. And, and this was by the end of last year. That was the case. Like I think I did a demo where I showed you can, you can go through all of Replit data and parse the function signature of every Python function in like under two minutes.
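For a single file, "parse the function signature of every Python function" is a small job for the standard library's `ast` module. The sketch below shows only that per-file step; the distributed infrastructure that makes it run over hundreds of millions of Repls in minutes is the part Reza is describing, and it is not shown or known here.

```python
import ast


def function_signatures(source: str) -> list:
    """Return 'name(arg, ...)' for every function defined in the source.

    Only plain positional parameters are listed; *args, **kwargs,
    keyword-only parameters and defaults are left out to keep this short.
    """
    signatures = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            args = [a.arg for a in node.args.args]
            signatures.append(f"{node.name}({', '.join(args)})")
    return signatures


sample = '''
def tokenize(text, lowercase=True):
    return text.split()

class Repl:
    async def run(self, code):
        return code
'''

print(function_signatures(sample))
```

Because the function is pure and works one file at a time, it is the kind of step that can be mapped across a corpus in parallel.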

[00:45:07] And, and there's, you know, many, many of them. And so a and, and then leading up to developer day, you know, we had, we'd kind of set up these pipelines. We'd started training these, these models, deploying them into production, kind of iterating and, and getting that model training to production loop.

[00:45:24] But we'd only really done like 1.3 billion parameter models. It was like all JavaScript or all Python. So there were still some things like we couldn't figure out like the most optimal way to to, to do it. So things like how do you pad or yeah, how do you how do you prefix chunks when you have like multi-language models, what's like the optimal way to do it and, and so on.

[00:45:46] So you know, there's two PhDs on, on the team. Myself and Mike and PhDs tend to be like careful about, you know, a systematic approach and, and whatnot. And so we had this whole like list of things we were gonna do, like, oh, we'll test it on this thing and, and so on. And even these, like 1.3 billion parameter models, they were only trained on maybe like 20 billion tokens or 30 billion tokens.

[00:46:10] And and then Amjad joins the call and he's like, no, let's just, let's just yolo this. Like, let's just, you know, we're raising money. Like we should have a better code model. Like, let's yolo it. Let's like run it on all the data. How many tokens do we have? And, and, and we're like, you know, both Michael and I are like, I, I looked at 'em during the call and we were both like, oh God is like, are we really just gonna do this?

[00:46:33] And

[00:46:34] swyx: well, what is the what's the hangup? I mean, you know that large models work,

[00:46:37] Reza Shabani: you know that they work, but you, you also don't know whether or not you can improve the process in, in In important ways by doing more data work, scrubbing additional content, and, and also it's expensive. It's like, it, it can, you know it can cost quite a bit and if you, and if you do it incorrectly, you can actually get it.

[00:47:00] Or you, you know, it's

[00:47:02] swyx: like you hit the button, the go button, once and you sit, sit back for three days.

[00:47:05] Reza Shabani: Exactly. Yeah. Right. Well, like more like two days. Yeah. Well, in, in our case, yeah, two days if you're running 256 A100s. Yeah. Yeah. And and, and then when that comes back, you know, you have to take some time to kind of to test it.

[00:47:19] And then if it fails and you can't really figure out why, and like, yeah, it's, it's just a, it's kind of like a, a time consuming process and you just don't know what's going to, to come out of it. But no, I mean, Amjad was like, no, let's just train it on all the data. How many tokens do we have? We tell him and he is like, that's not enough.

[00:47:38] Where can we get more tokens? Okay. And so Michael had this, you know, great idea to, to train it on multiple epochs and so

[00:47:45] swyx: resampling the same data again.
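"Multiple epochs" here just means the model sees each training sample more than once. A minimal sketch of such a data stream follows; the per-epoch reshuffle and the fixed seed are illustrative choices, not details taken from Replit's training setup.

```python
import random
from collections import Counter
from typing import Iterator, List


def multi_epoch_stream(samples: List[str], epochs: int, seed: int = 0) -> Iterator[str]:
    """Yield every sample once per epoch, reshuffling the order each time."""
    rng = random.Random(seed)
    for _ in range(epochs):
        order = samples[:]   # copy so the caller's list is untouched
        rng.shuffle(order)
        yield from order


data = ["a", "b", "c", "d"]
stream = list(multi_epoch_stream(data, epochs=3))
counts = Counter(stream)
print(len(stream), dict(counts))
```

The overfitting risk discussed next comes from exactly this repetition: the token count triples, but no new information is added.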

[00:47:47] Reza Shabani: Yeah. Which, which can be, which is known to be risky or like, or tends to overfit. Yeah, you can, you can overfit. But you know, he, he pointed us to some evidence that actually maybe this isn't really going to be a problem.

[00:48:00] And, and he was very persuasive in, in doing that. And so it, it was risky and, and you know, we did that training. It turned out like to actually be great for that, for that base model. And so then we decided like, let's keep pushing. We have 256 GPUs running. Let's see what else we can do with it.

[00:48:20] So we ran a couple other implementations. We ran you know, a the fine tune version as I, as I said, and that's where it becomes really valuable to have had that entire pipeline built out because then we can pull all the right data, de-dupe it, like go through the, the entire like processing stack that we had done for like months.

[00:48:41] We did that in, in a matter of like two days for, for the Replit data as well: removed, you know, any PII, like personal information, removed harmful content, removed any of, of that stuff. And we just put it back through the, that same pipeline and then trained on top of that.
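As a rough picture of two of the steps mentioned (de-duplication and PII removal), here is a toy version. It assumes exact-match de-duplication by content hash and an email-only regex; real pipelines typically use near-duplicate detection and far broader PII and harmful-content filters, and nothing below is taken from Replit's actual stack.

```python
import hashlib
import re

# Deliberately simple pattern: catches common email shapes only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scrub_pii(text: str) -> str:
    """Replace email addresses with a placeholder token."""
    return EMAIL.sub("<EMAIL>", text)


def dedupe(files):
    """Drop files whose whitespace-trimmed content was already seen."""
    seen = set()
    unique = []
    for text in files:
        digest = hashlib.sha256(text.strip().encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(text)
    return unique


def preprocess(files):
    """De-duplicate first, then scrub what remains."""
    return [scrub_pii(text) for text in dedupe(files)]


corpus = [
    "print('hello')\n",
    "print('hello')",
    "# contact: dev@example.com\nx = 1\n",
]
cleaned = preprocess(corpus)
print(cleaned)
```

De-duplicating before scrubbing keeps the more expensive filters from running on content that will be thrown away.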

[00:48:59] And so I believe that Replit-tuned model has seen something like 680 billion tokens. And, and that's in terms of code, I mean, that's like a, a universe of code. There really isn't that much more out there. And, and it, you know, gave us really, really promising results. And then we also did like a UL2 run, which allows like fill-in-the-middle capabilities, and, and we'll be, you know, working to deploy that on, on Replit and test that out as well soon.

[00:49:29] But it was really just one of those Those cases where, like, leading up to developer day, had we, had we done this in this more like careful, systematic way what, what would've occurred in probably like two, three months. I got us to do it in, in a week. That's fun. It was a lot of fun. Yeah.

[00:49:49] Scaling Laws: from Kaplan to Chinchilla to LLaMA

[00:49:49] Alessio Fanelli: And so every time I, I've seen the Stable releases too, every time none of these models fit, like, the Chinchilla laws, in, in quotes, which is supposed to be, you know, 20 tokens per, per parameter. Was this part of the YOLO run?

[00:50:04] Or like, you're just like, let's just throw all the tokens at it, doesn't matter what's most efficient? Or like, do you think there's something about some of these scaling laws where like, yeah, maybe it's good in theory, but I'd rather not risk it and just throw all the tokens that I have at it? Yeah,

[00:50:18] Reza Shabani: I think it's, it's hard to, it's hard to tell just because there's.

[00:50:23] You know, like, like I said, like these runs are expensive and they haven't, if, if you think about how many, how often these runs have been done, like the number of models out there and then, and then thoroughly tested in some form. And, and so I don't mean just like HumanEval, but actually in front of actual users for actual inference as part of a, a real product that, that people are using.

[00:50:45] I mean, it's not that many. And, and so it's not like there's there's like really well established kind of rules as to whether or not something like that could lead to, to crazy amounts of overfitting or not. You just kind of have to use some, some intuition around it. And, and what we kind of found is that our, our results seem to imply that we've really been under training these, these models.

[00:51:06] Oh my god. And so like that, you know, all, all of the compute that we kind of threw at this and, and the number of tokens, it, it really seems to help and really seems to, to improve. And I, and I think, you know, these things kind of happen where in, in the literature where everyone kind of converges to something, seems to take it for, for a fact.

[00:51:27] And like, like Chinchilla is a great example of like, okay, you know, 20 tokens. Yeah. And but, but then, you know, until someone else comes along and kind of tries, tries it out and sees actually this seems to work better. And then from our results, it seems to imply actually maybe even, even LLaMA may be undertrained.

[00:51:45] And, and it may be better to go even You know, like train on on even more tokens then and for, for the

[00:51:52] swyx: listener, like the original scaling law was Kaplan, which is 1.7. Mm-hmm. And then Chinchilla established 20. Yeah. And now LLaMA-style seems to mean a 200x tokens-to-parameters ratio. Yeah. So obviously you should go to 2000x, right?

[00:52:06] Like, I mean, it's,
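To put those tokens-per-parameter ratios side by side, the sketch below computes the implied token budget for a 2.7 billion parameter model. The ratios are the rough figures quoted in the conversation, not values taken from the underlying papers.

```python
# Tokens-per-parameter ratios as quoted in the conversation (rough figures).
RATIOS = {
    "Kaplan": 1.7,
    "Chinchilla": 20,
    "LLaMA-style": 200,
    "2000x": 2000,
}


def token_budget(params: float, ratio: float) -> float:
    """Training tokens implied by a tokens-per-parameter ratio."""
    return params * ratio


PARAMS = 2.7e9  # parameter count quoted for the released model
budgets = {name: token_budget(PARAMS, ratio) for name, ratio in RATIOS.items()}

for name, tokens in budgets.items():
    print(f"{name:12s} {tokens / 1e9:8.1f}B tokens")
```

At 2000x, a model this size would need about 5.4 trillion tokens, which is the scale at which the supply of code itself becomes the constraint.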

[00:52:08] Reza Shabani: I mean, we're, we're kind of out of code at that point, you know, it's like there, there is a real shortage of it, but I know that I, I know there are people working on I don't know if it's quite 2000, but it's, it's getting close on you know language models. And so our friends at at Mosaic are are working on some of these really, really big models that are, you know, language because you with just code, you, you end up running out of out of context.

[00:52:31] So Jonathan at, at Mosaic has Jonathan and Naveen both have really interesting content on, on Twitter about that. Yeah. And I just highly recommend following Jonathan. Yeah,

[00:52:43] MosaicML

[00:52:43] swyx: I'm sure you do. Well, can we talk about, so I, I was sitting next to Naveen. I'm sure he's very, very happy that you, you guys had such, such success with Mosaic.

[00:52:50] Maybe could, could you shout out like what Mosaic did to help you out? What, what they do well, what maybe people don't appreciate about having a trusted infrastructure provider versus a commodity GPU provider?

[00:53:01] Reza Shabani: Yeah, so I mean, I, I talked about this a little bit in the in, in the blog post in terms of like what, what advantages like Mosaic offers and, and you know, keep in mind, like we had, we had deployed our own training infrastructure before this, and so we had some experience with it.

[00:53:15] It wasn't like we had just, just tried Mosaic. And, and some of those things: one is like you can actually get GPUs from different providers and you don't need to be, you know, signed up for that cloud provider. So it's, it kind of detaches like your GPU offering from the rest of your cloud, because most of our cloud runs in, in GCP.

[00:53:34] But you know, this allowed us to leverage GPUs in other providers as well. And then another thing is like training infrastructure as a service. So you know, these GPUs burn out. You have node failures, you have like all, all kinds of hardware issues that come up. And so the ability to kind of not have to deal with that and, and allow Mosaic and team to kind of provide that type of, of fault tolerance was huge for us.

[00:53:59] As well as a lot of their preconfigured LLM configurations for, for these runs. And so they have a lot of experience in, in training these models. And so they have, you know, the, the right kind of pre-configured setups for, for various models that make sure that, you know, you have the right learning rates, the right training parameters, and that you're making the, the best use of the GPU and, and the underlying hardware.

[00:54:26] And so you know, your GPU utilization is always at, at optimal levels. You have like fewer loss spikes, and if you do, you can recover from them. And you're really getting the most value out of, out of the compute that you're kind of throwing at, at your data. We found that to be incredibly, incredibly helpful.

[00:54:44] And so, of the time that we spent running things on Mosaic, like very little of that time is trying to figure out why the GPU isn't being utilized or why, you know, it keeps crashing or, or why you have like CUDA out-of-memory errors or something like that. So like all, all of those things that make training a nightmare are, you know, really well handled by, by Mosaic and the Composer cloud and, and ecosystem.

[00:55:13] swyx: Yeah. I was gonna ask, cuz you're on GCP, if you're tempted to rewrite things for the TPUs. Cause Google's always saying that it's more efficient and faster, whatever, but no one has experience with them. Yeah.

[00:55:23] Reza Shabani: That's kind of the problem, that no one's building on them, right? Yeah. We want to build on systems that everyone else is building for.

[00:55:31] Yeah. And so with the TPUs, it's not easy to do that.

[00:55:36] Replit's Plans for the Future (and Hiring!)

[00:55:36] swyx: So plans for the future, like hard problems that you wanna solve? And maybe, what kind of people are you hiring on your team?

[00:55:44] Reza Shabani: Yeah. So we're currently hiring for two different roles on my team.

[00:55:49] Although we welcome applications from anyone that thinks they can contribute in this area. Replit tends to be like a band of misfits. And the type of people we work with and have on our team are just the perfect mix to do amazing projects like this with very, very few people.

[00:56:09] Right now we're hiring for the applied AI/ML engineer. And so this is someone who's creating data pipelines, processing the data at scale, creating runs and training models, running different variations, testing the output, running human evals, and solving a ton of the issues that come up in the training pipeline from beginning to end.

[00:56:34] And so, if you read the blog post, we'll be releasing additional blog posts that go into the details of each of those different sections. Just tokenizer training, for example, is incredibly complex, and you can write a whole series of blog posts on that.

[00:56:50] And so those types of really challenging engineering problems, of how do you sample this data at scale from different languages in different RDS and pipelines and feed them to, you know, SentencePiece tokenizers to learn. If you're interested in working on that type of stuff, we'd love to speak with you.
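One common way to approach "sampling data from different languages" before tokenizer training is to smooth the raw language proportions so that small languages are not drowned out. The sketch below is an assumption for illustration, not Replit's pipeline: the `sampling_weights` and `sample_corpus` helpers and the `alpha` exponent are hypothetical, and the resulting sample is only what one might then hand to a tokenizer trainer such as SentencePiece.

```python
import random


def sampling_weights(doc_counts, alpha=0.5):
    """Raise each language's share to the power alpha and renormalize.

    alpha=1.0 keeps the raw proportions; alpha<1.0 upsamples rare languages.
    """
    total = sum(doc_counts.values())
    scaled = {lang: (n / total) ** alpha for lang, n in doc_counts.items()}
    norm = sum(scaled.values())
    return {lang: s / norm for lang, s in scaled.items()}


def sample_corpus(docs_by_lang, n_samples, alpha=0.5, seed=0):
    """Draw documents for tokenizer training using the smoothed weights."""
    rng = random.Random(seed)
    counts = {lang: len(docs) for lang, docs in docs_by_lang.items()}
    weights = sampling_weights(counts, alpha)
    langs = list(weights)
    picks = rng.choices(langs, weights=[weights[l] for l in langs], k=n_samples)
    return [rng.choice(docs_by_lang[lang]) for lang in picks]


# Hypothetical corpus sizes: 900 Python files, 100 Haskell files.
weights = sampling_weights({"python": 900, "haskell": 100}, alpha=0.5)
print(weights)
```

With `alpha=0.5`, Haskell goes from 10% of the raw data to roughly 25% of the sample, which gives the tokenizer more exposure to its syntax.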

[00:57:10] And same on the inference side. So if you wanna figure out how to make these models lightning fast, and optimize the transformer layer to get as much out of inference and reduce latency as much as possible, you'd be joining our team and working alongside

[00:57:29] Bradley, for example. I always embarrass him, and he's like the most humble person ever, but I'm gonna embarrass him here. He was employee number seven at YouTube. And, wow. Yeah, so when I met him I was like, why are you here? But that's the kind of person that joins Replit, and he's obviously seen how to scale systems and seen it all.

[00:57:52] And he's the type of person who works on our inference stack and makes it faster and scalable, and is phenomenal. So if you're just a solid engineer and wanna work on anything related to LLMs, in terms of training, inference, data pipelines, the applied AI/ML role is a great role.

[00:58:12] We're also hiring for a full stack engineer. So this would be someone on my team who does the model training stuff, but is more oriented towards bringing that AI to users. And so that could mean many different things. It could mean, on the front end, building the integrations with the workspace that allow you to receive the code completion models.

[00:58:34] It means working on Ghostwriter chat, like the conversational ability between Ghostwriter and what you're trying to do, building the various agents that we want Replit to have access to, creating embeddings to allow people to ask questions about docs, or their own projects, or other teams' projects that they're collaborating with.

[00:58:55] All of those types of things are in the full stack role that I'm hiring for on my team as well. Perfect. Awesome.
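The "creating embeddings to ask questions about docs" idea mentioned above usually boils down to: embed each document, embed the question, and return the closest documents. Here is a minimal sketch under loud assumptions: the `embed` function is a bag-of-words stand-in (a real system would call an embedding model), and `top_k` and the sample docs are hypothetical names for this example, not Replit's implementation.

```python
import math
from collections import Counter


def embed(text):
    """Stand-in embedding: word counts. A real system would use a learned model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(question, docs, k=1):
    """Return the k docs most similar to the question."""
    q = embed(question)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


docs = [
    "how to deploy a web server",
    "reading files with open in python",
    "configure secrets for your repl",
]
print(top_k("how do I deploy my server", docs))
```

The retrieved passages would then typically be placed into the prompt of a chat model so it can answer with the project's own docs as context.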

[00:59:05] Lightning Round

[00:59:05] Alessio Fanelli: Yeah, let's jump into the Lightning Round. We'll ask you three questions; give us a short answer. I know it's a lightning round, but Sean likes to ask follow-up questions to the lightning round questions.

[00:59:15] So be ready.

[00:59:18] swyx: Yeah. This is an acceleration question. What is something you thought would take much longer, but it's already here.

[00:59:24] It's coming true much faster than you thought.

[00:59:27] Reza Shabani: AI. I mean, I know it's cliche, but every episode of Black Mirror that I watched in the past five years is already becoming true, or if not, will become true very, very soon. I remember there was one episode where this woman, her boyfriend dies, and then they go through all of his social media and train a chatbot to speak like him.

[00:59:54] And she starts speaking to him, and it speaks like him. And she's blown away by this. And I think everyone was blown away by that. Yeah. That's like old news now. And I think that's mind blowing, how quickly it's here and how much it's going to keep changing.

[01:00:13] Yeah.

[01:00:14] swyx: Yeah. Yeah. And, and you, you mentioned that you're also thinking about the social impact of some of these things that we're doing.

[01:00:19] Reza Shabani: Yeah. I think another way to answer that question is that the speed at which everything is developing is forcing us to answer some important questions that we might have otherwise put off, in terms of automation.

[01:00:39] This is a bit of a tangent, but one of the things is, I think we used to think of AI as these things that would come and take blue collar jobs. And now, with a lot of white collar jobs that seem to be at risk from something like ChatGPT, all of a sudden that conversation becomes a lot more important.

[01:00:59] It suddenly becomes more important to talk about how we allow AI to help people as opposed to replace them, and what changes we need to make over the very long term as a society to allow people to enjoy the benefits that AI brings to an economy and to a society, and not feel threatened by it instead.

[01:01:23] Alessio Fanelli: Yeah. A year from now, what do you think people will be the most surprised by?

[01:01:26] Reza Shabani: I think a year from now, I'm really interested in seeing how a lot of this technology will be applied to domains outside of chat. And I think we're kind of just at the beginning of that world. ChatGPT took a lot of people by surprise because it was the first time that people started to actually interact with it and see what the capabilities were.

[01:01:54] And I think it's still just a chatbot for many people. And I think that once you start to apply it to actual products, businesses, use cases, it's going to become incredibly powerful. And I don't think that we're really thinking of the implications for companies and for the economy.

[01:02:14] If you, for example, are traveling and you want to be able to ask specific questions about where you're going and plan out your trip, maybe you wanna know if there are noise complaints at the Airbnb you're thinking of booking. And you might have a chatbot that's actually able to create a query that goes and looks at noise complaints that were filed, or construction permits that were filed that fall within the same date range as your stay.

[01:02:40] I think that type of transfer learning, when applied to specific industries and specific products, is gonna be incredibly powerful. And I don't think anyone has that much clue in terms of what's going to be possible there, and how much a lot of our favorite products might change and become a lot more powerful with this technology.

[01:03:00] swyx: Request for products, or request for startups. What is an AI thing you would pay for if somebody built it, for your personal work?

[01:03:08] Reza Shabani: Oh, man. There's a lot of people trying to build this type of thing, but a good LLM IDE is kind of what we call it. You mean, like, the one you work on?

[01:03:22] Yeah, exactly. Yeah. Well, so that's why we're trying to build it, so that people... Okay. Okay. ...will pay for it. No, but seriously, I think something that allows you to work with different LLMs and not have to repeat a lot of the annoyance that comes with prompt engineering.

[01:03:44] So think of it this way. I want to be able to create different prompts and test them against different types of models. And so maybe I want to test OpenAI's models, Google's models. Yeah. Cohere.

[01:03:57] swyx: So the playground, like from nat.dev, right?

[01:03:59] Reza Shabani: Exactly. So think nat.dev for, yeah.

[01:04:04] For, well, for anything, I guess. Maybe we should say what nat.dev is for people who don't know. So Nat Friedman, former GitHub CEO (not current CEO, right? No, former. Yeah.), went on Replit, posted a bounty, and had a bounty hunter build this website for him.

[01:04:25] Yeah. It allows you to compare different language models and get a response back. You add one prompt, and then it queries these different language models and gets the responses back. And it turned into this really cool tool that people were using to compare these models.

[01:04:39] And then he put it behind a paywall because people were starting to bankrupt him as a result of using it. But something like that, that allows you to test different models, but also goes further and lets you keep the various responses that were generated with these various parameters.

[01:04:56] And you can do things like perplexity analysis, and how widely the responses differ over time, and using what prompt strategies and whatnot. I do think something like that would be really useful, and it isn't really built into most IDEs today. But that's definitely something that, especially given how much I'm playing around with prompts and language models today, would be incredibly useful to have.
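The tool described here (send one prompt to several models, keep every response, and compute something like perplexity over the outputs) can be sketched in a few lines. This is only an illustration under assumptions: `compare`, the `history` list, and the stub models are hypothetical, and real model clients would replace the stubs. Perplexity is computed in the standard way, as the exponential of the negative mean token log-probability.

```python
import math


def perplexity(token_logprobs):
    """exp of the negative mean log-probability of the generated tokens."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def compare(prompt, models, history):
    """Run one prompt against every model and record the results.

    `models` maps a name to a callable returning (text, token_logprobs).
    """
    row = {}
    for name, generate in models.items():
        text, logprobs = generate(prompt)
        row[name] = {"text": text, "perplexity": perplexity(logprobs)}
    history.append({"prompt": prompt, "responses": row})
    return row


# Stub "models" standing in for real API clients (hypothetical).
def confident_model(prompt):
    return "def add(a, b): return a + b", [math.log(0.9)] * 4


def unsure_model(prompt):
    return "def add(x, y): return x + y", [math.log(0.5)] * 4


history = []
result = compare("Write an add function",
                 {"confident": confident_model, "unsure": unsure_model},
                 history)
for name, info in result.items():
    print(name, round(info["perplexity"], 3), info["text"])
```

Because every call is appended to `history`, prompts and parameters can be compared across models and over time, which is the part the speaker says is missing from most tools.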

[01:05:22] swyx: I perceive you to be one layer below prompts. But you're saying that you actually do a lot of prompt engineering yourself, because I thought you were working on the model, not the prompts, but maybe I'm wrong.

[01:05:31] Reza Shabani: No, so I work on everything. Both, yeah. On everything. I think most people still work with prompts. I mean, even with a code completion model, you're still working with prompts.

[01:05:40] When you're running inference and whatever else. And with instruction tuning, you're working with prompts. And so there's still a big need for prompt engineering tools as well. I guess I should say, I do think that that's gonna go away at some point.

[01:05:59] That's my hot take. I don't know if you all agree on that, but I do think some of that stuff is going to go away at some point.

[01:06:07] swyx: I'll represent the people who disagree. People need prompts all the time. Humans need prompts all the time. You know, humans are general intelligences and we still need to tell them what we want, and prompts are our way to align our intent.

[01:06:18] Yeah. So, I don't know, it's a way to inject context and give instructions, and that will never go away. Right. Yeah.

[01:06:25] Reza Shabani: I think you're right. I totally agree, by the way, that humans are general intelligences. Yeah. Well, I was gonna say, one thing is, as a manager, you're like the ultimate prompt engineer.

[01:06:34] Prompt engineer.

[01:06:35] swyx: Yeah. Any executive. Yeah. You have to communicate extremely well. And it is basically akin to prompt engineering. Yeah. They teach you frameworks on how to communicate as an executive. Yeah.

[01:06:45] Reza Shabani: No, absolutely. I completely agree with that. And then someone might hallucinate and you're like, no, no, let's try it this way instead.

[01:06:52] No, I completely agree with that. I think a lot of the more, I guess, algorithmic models that will return something to you the way a search bar might, right? Yeah. I think that type of... You want it to disappear. Yeah. Yeah, exactly. And so I think that type of prompt engineering will go away.

[01:07:08] I mean, imagine if in the early days of search, when the algorithms weren't very good, you were to go create a middleware that says, "Hey, type in what you're looking for, and then I will turn it into the set of words that you should be searching for." Yes. To get back the information that's most relevant. That feels a little like what prompt engineering is today.