Welcome to the almost 3k latent space explorers that joined us last month! We’re holding our first SF listener meetup with Practical AI next Monday; join us if you want to meet past guests and put faces to voices! All events are in /community.

Who among you regularly click the ubiquitous 👍 /👎 buttons in ChatGPT/Bard/etc?

Anyone? I don’t see any hands up.

OpenAI has told us how important reinforcement learning from human feedback (RLHF) is to creating the magic that is ChatGPT, but we know from our conversation with Databricks’ Mike Conover just how hard it is to get just 15,000 pieces of explicit, high quality human responses1.

We are shockingly reliant on good human feedback. Andrej Karpathy’s recent keynote at Microsoft Build on the State of GPT demonstrated just how much of the training process relies on contractors2 to supply the millions of items of human feedback needed to make a ChatGPT-quality LLM (highlighted by us in red):

But the collection of good feedback is an incredibly messy problem. First of all, if you have contractors paid by the datapoint, they are incentivized to blast through as many as possible without much thought. So you hire more contractors and double, maybe triple, your costs. Ok, you say, lets recruit missionaries, not mercenaries. People should volunteer their data! Then you run into the same problem we and any consumer review platform run into - the vast majority of people send nothing at all, and those who do are disproportionately representing negative reactions. More subtle problems emerge when you try to capture subjective human responses - the reason that ChatGPT responses tend to be inhumanly verbose, is because humans have a well documented “longer = better” bias when classifying responses in a “laboratory setting”.

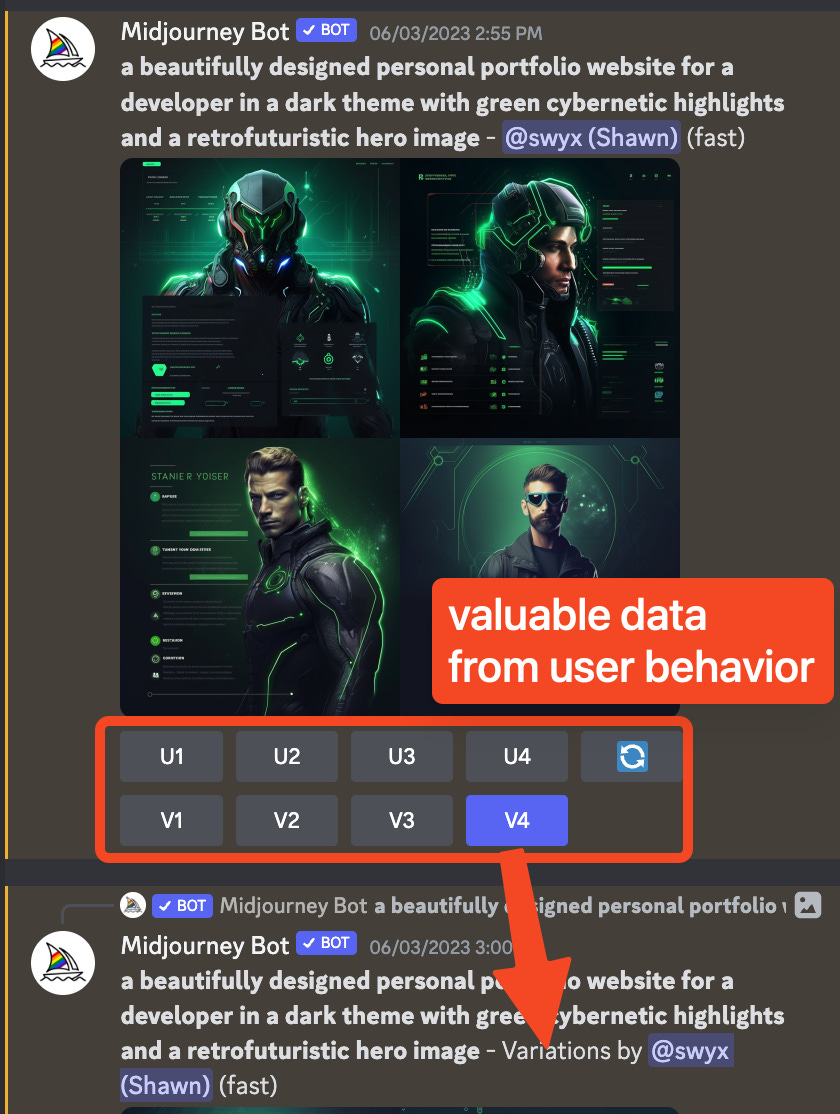

The fix for this, of course, is to get out of the lab and learn from real human behavior, not artificially constructed human feedback. You don’t see a thumbs up/down button in GitHub Copilot nor Codeium nor Codium. Instead, they work an implicit accept/reject event into the product workflow, such that you cannot help but to give feedback while you use the product. This way you hear from all your users, in their natural environments doing valuable tasks they are familiar with. The prototypal example in this is Midjourney3, who unobtrusively collect 1 of 9 types of feedback from every user as part of their workflow, in exchange for much faster first draft image generations:

The best known public example of AI product telemetry is in the Copilot-Explorer writeup, which checks for the presence of generated code after 15-600 second intervals, which enables GitHub to claim that 40% of code is generated by Copilot.

This is fantastic and “obviously” the future of productized AI. Every AI application should figure out how to learn from all their real users, not some contractors in a foreign country. Most prompt engineers and prompt engineering tooling also tend to focus on pre-production prototyping, but could also benefit from A/B testing their prompts in the real world.

In short, AI may need Analytics more than Analytics needs AI.

Amplitude’s Month of AI

This is why Amplitude is going hard on AI - and why we recently spent a weekend talking to Jeffrey Wang, cofounder and chief architect at Amplitude, and Joe Reeve, head of AI, recording a live episode at the AI + Product Hackathon where 150+ hackers gathered to compete for over $22.5k in prizes from Amplitude, New Relic, LanceDB, AWS, and more.

To put things in perspective, Amplitude is a legendary YC alum with $238M of revenue in 2022 — our first guests representing the AI efforts of a public company!

We chatted about how they have been approaching AI in their product (“question to chart” BI, text field autofill, instrumenting Amplitude with Amplitude), some of the issues they’ve had with different models, and the importance of first-party data in the world of LLMs. Another topic that came out of the Q&A was this idea of almost an “AmplitudeGPT”; rather than using language to simply generate a query, you could have these models investigate reasons for why certain behavior is happening in your user base. It was a really good discussion, and hope you all enjoy listening to it!

Sections

[00:00:47] Amplitude's founding story and pivot

[00:03:28] Amplitude as an AI company and opportunities

[00:07:14] Limitations and challenges with using AI models

[00:10:56] Using Amplitude's product to build Amplitude - instrumenting AI

[00:12:32] Existing ML models in Amplitude's product and customer use cases

[00:15:50] “A/Z testing” and adaptable products

[00:19:33] The future of analytics and dashboards

[00:21:03] Optimizing for metrics in chatbots and AI products

[00:26:22] Using general models vs. fine-tuned models

[00:30:24] The importance of models vs. data - Amplitude's data set

[00:39:00] Lightning Round + Q&A

Show Notes

Transcript

Editor’s note: all timestamps are 1 minute behind because we hadn’t yet added the intro before making these. Sorry about that!

Alessio: Thank you everyone for coming. Hopefully, some of you have listened to the podcast before, if you haven't, we focus on AI research and application. So we don't focus on “AI is going to kill us all”. We don't think about virtual girlfriends. We don't think about all of these more societal things. We're focused on models: how do you build them? How do you train them? How do you use them in production? What are some of the limitations on getting these things from demos to things that millions of users use? And obviously, a lot of you are building things. Otherwise, you wouldn't be here. And some of you have been building things for a long time, and now have a new paradigm that you want to build on top of. So I'm excited to dive in here. And maybe, I mean, I'm sure most people know you, but maybe you want to do intros and give a little background. [00:00:47]

Jeffrey: Sure. Yeah, hey, everyone, met you all this morning, but I'm Jeffrey. I'm one of the co-founders and Chief Architect here at Amplitude. Been working on this product analytics thing, helping people understand user behavior data and make great product decisions and build better products for the last decade or so. And obviously, AI is a technology that we've been leveraging for a long time, but the recent trends are particularly exciting. And yeah, we have a lot of thoughts on how to apply that to our space, what we're doing in our product, and what we think the future of AI and product development and product data is. So excited to talk through some of those. [00:01:20]

Joe: Yeah, I'm Joe, Joe Reeve. I've got a background in sort of startups and tech, been professional software engineer since I was 16, quit college. And at the moment, I'm running sort of AI R&D efforts here at Amplitude. Super excited about all the new stuff, but also all the stuff that Amplitude's been doing for a long time and how we're sort of getting renewed interest and excitement and abilities to push that even further forwards. [00:01:44]

Swyx: So I think it's useful for people listening on the podcast and also some people here. Can you contextualize Amplitude as an AI company? Like what does that mean to you? What unique opportunities do you guys have? [00:02:02]

Jeffrey: Sure, yeah, happy to speak to that. So, you know, if we think about the fundamental thing that our customers of Amplitude try to do, it's they want to look at their product data and they want to figure out how do I make my product better? And the really cool thing about product data is that one, it's often like very high fidelity, right? Digital products compared to, you know, let's say physical products before them have way more information about what's going on. And so that's why product data is, you know, even a thing at all, right? You finally have that feedback loop of, hey, I built this thing. This is how people are using it. Now let me learn from that and make my product better. Now, one of the downsides of that is that the data is massive. If you look at any of the internet scale products out there, they generate enormous amounts of data. And the ability of humans to kind of sift through that data is obviously limited. At Amplitude, we try to give people as many tools, whether AI or not, in order to process that. But at the end of the day, if you could get from the data and what user behavior is happening in your product to the insights of how to make your product better without as much manual work, that's kind of the holy grail of product analytics. And so in some sense, Amplitude has always been a company on the path to AI because figuring out how to make your product better from data is ultimately an AI problem. And so we're kind of just solving all the barriers in the way, like getting data in first, building good models for short-term things. And long-term, it's always been about, hey, how can you take product data and automatically make your product better as fast as possible? [00:03:28]

Alessio: So that's the future of Amplitude. And a lot of people here probably want to start companies and whatnot. So maybe you want to give a 60 seconds of why you started Amplitude and what the story was like and maybe the first three to six months, what the challenges were. [00:03:42]

Jeffrey: Yeah, of course. It's funny that we talk about this because the start of Amplitude is actually almost more AI than the current state. And so actually my two co-founders, Spencer and Curtis, they went through YC originally with not Amplitude, but SonaLite, which was a text-by-voice company. So it was kind of before the era of Siri and those types of technologies where they wanted to build something that would read text messages to them, that's easy, but also do voice recognition so that you could send text messages, say when you're driving, without having to pull out your phone. And so they worked on it and it was really popular back when they were doing it. After they finished YC, they realized the big innovation that they needed to figure out in order to make that successful was being really good at voice recognition, which was a different problem. They're awesome software engineers, but they don't come from an ML background. And so it's like, okay, are we going to spend the next five years solving voice recognition? Not really the thing that they had in mind when they were building product. But one thing that they happened to stumble upon as they were working on that was they spent a lot of time thinking about, hey, what was hard about that product? What made users churn? What made users really love it and engage? And they built a bunch of analytics tools to help them understand that. And they were really kind of shocked that those tools didn't exist out there in the market or they were like much more primitive than they wanted. And it turns out a bunch of other people in their YC batch felt the same. And they were like, hey, that analytics thing you're building, we want that. For you to text by voice, we want your analytics product. And so they're like, okay, fine. We will pivot, natural language and voice recognition isn't really our thing. And so we'll do distributed systems and analytics instead. That's where I came in. I'm a distributed systems and analytics guy. And so I happened to get in touch with them just through some mutual friends at the time. And then, yeah, we kind of went on it. The funny thing about a lot of things in technology is that the most forward thinking companies with respect to a lot of technologies are gaming companies. And so a lot of AmpliG's early start was either gaming companies or companies with founders that came from gaming backgrounds, where in gaming people have always been very, very rigorous about product data and optimizing engagement loops and all of that. And so they look for the best tools. We went to Zynga 15 years ago. It's like, that's where product analytics originated. And so a lot of those founders of new startups who had left Zynga were like, hey, that thing that you're building, that's trying to figure out patterns and user data and use that to make better products. That is exactly what we want after leaving Zynga. And then from there, that was Amplitude.

Swyx: Yeah, I think famously other gaming companies would be like Slack, right? Mr. Butterfield tried to make a gaming company and failed and made Flickr. Then he tried to make another gaming company and failed and made Slack. And now look out to see what he does next. Discord as well. That's right. [00:06:34]

Jeffrey: Yeah, people who come from gaming backgrounds are very rigorous in their product thinking. [00:06:39]

Swyx: That's interesting. Alessio, you have a background in games? [00:06:43]

Alessio: Yeah, in playing them, not in building them. So I will not fall into an enterprise company by doing that. Let's talk about R&D today and some of the ideas that you're working through, like some of the limitations that you run through. I think the most interesting thing about hackathons is you come with an idea and then you kind of hit a wall trying to build it. And then that takes you into another path. Like what are maybe funny things that you learn in terms of like the limitations of these models or like the missing infrastructure for using them? [00:07:14]

Joe: So we've got a couple of different frames for thinking about this. There's AI that we're putting into our products and then us knowing that our customers want to put AI into their products. So there's the, how do we support our customers in their product development using AI? But how do we do that ourselves? And this is a great opportunity for us to learn the challenges our customers are gonna see. And so the first thing there is let's just start from the beginning, assume we want to add AI to our product, which maybe isn't the best place to start, but let's just assume we want to. How do we start ideating opportunities to put stuff into our product? So we sort of came up with this framework where we look at our product and we think about what are the collaboration touch points? So where are the points that a human might hand off to another human? And then think where can we replace one of those humans with the machine? So instead of thinking of some AI, amorphous AI, LLM, whatever, we're thinking actually, what if we had a robot that we were collaborating, not just a human, not just some sort of thing that spits out numbers. So collaborating. Then there's thinking of these as tools. So this is like your auto-suggest, on your mobile keyboard or spell check or something. How do you integrate this stuff as deeply into your product? So what are the friction points that users go through? Maybe they check lots of boxes. Is there a way we can pre-check those boxes we can get? So that's the feature embedding really deeply into the tool you've already got, the product you've already got. And then you step back and think, okay, what's a tool? So a tool is like ChatGPT, where you go there, it's an AI powered tool. It's not necessarily connected to your product, but it's a supplementary tool that you add. So there's a sort of ideation process there that we went through. And we sort of landed on a couple. And one of the key things that Amplitude does is help our customers, one, collect data in like a standard and sort of queryable way. And then we help them query it and get insights out of that data. So we were thinking, what's the feature there? How do we embed that? But also what's the collaboration point? And you might be a product manager asking an analyst, hey, please help me. Let's have a conversation about this. I don't know what questions to ask, but you also might just be about to go click the big create button and fill in a bunch of fields. And can we fill in a bunch of the fields for you? So we went to what to us seemed like one of the most obvious places. And we built a text box. Surprise, surprise with LLMs. We've got a text box. You can type in a question, type in anything about your data that you want to know, and then it'll spit back a chart, which is kind of neat. And we hit a bunch of problems there with LLMs hallucinating, losing context, even within the context windows, not really sort of recalling everything within the context window. So we sort of did a bunch of experimentation and realized if we split this down to seven different questions, so instead of saying, generate me a chart and a query for this one question, let's split that into lots of sub queries, like what kinds of events should I use? How should I display this? What should I call it? Rather than asking you all of that in one go. But then we had another problem where we have one query that a user makes that actually spins out seven different queries. So how do we monitor this? We can't just say one performance metric. You know, RLHF, you can't just say yes or no. Was the query response good? Because it might've failed for one of seven reasons. And maybe multiple of them failed or maybe some of them failed and then maybe they've hallucinated. And so we're getting code errors where an enum is not being matched. So we've had lots of sort of issues going all the way down there that we've had to figure out from first principles and sort of a really exciting way for us to understand what our customers are going through. [00:10:56]

Swyx: So I wanna be clear. So you've described your exploration and how you think about products. What have you released so far? I just wanna get an idea of what has been shipped. [00:11:08]

Joe: Sure. So in terms of LLM stuff, this, we call it question to chart internally. This ask a question, get a chart out. This, we've started rolling out to customers already. So last week, actually, started rolling out to our AI design partners a sign that we had signed up, which is a really exciting process. Actually, a lot of customers are just so excited to work with us and try it out and see how they can break it. So that's something we rolled out recently, which is built in LLM. It's the first piece built on LLM that we're working on. But we've also had a bunch of long-term ML, sort of traditional ML models that we've been running and products that we've been running with customers that help them predict what their users are gonna do. Because we've got this massive behavioral data set, best behavioral data set in the world. So we can train these awesome models and help our customers predict what their users are gonna do. So they can share the more relevant content or now is the right time to ask people if they want to upgrade or they want to rate your app or that sort of thing. [00:12:05]

Swyx: Yeah, there is a little bit of a contrast, conflicts, because you already had all these ML models in-house and you're spinning up a new AI team and you're like, no, let's do all of this with GPT-3. Are the existing ML researchers saying like, no, this is a complete misuse of text generation? Or are they excited about it? Is it unlocking new things? [00:12:32]

Joe: Yeah, actually, it's the combining these things. So we're able to use the traditional ML to shorten the fields, to narrow the number of things we need to pass into the LLMs. Because the LLMs can do a lot more of the reasoning, but we can make sure that the context we're providing is much more specific and generally much better by using the traditional ML models. [00:12:53]

Swyx: Yeah, okay. And then the pain points that you're experiencing are hallucination. And then also like the multi-query thing. What do you think you wish for? Or what do you think you're thinking about to solve those pain points? [00:13:06]

Joe: So right now we're instrumenting with our own product. So we're instrumenting groups of inferences and individual inferences, which means we can then create charts that show how often they fail, why they fail, how often we need to retry to get good answers.

Swyx: So amplitude using amplitude. [00:13:23]

Joe: Exactly. To build amplitude. [00:13:24]

Swyx: Yeah, exactly. [00:13:25]

Joe: Well, I mean, we're a product company. What else would we do? [00:13:29]

Swyx: That is the second part of what you're saying, right? Which is, first of all, you want AI in the amplitude products. Second, people are shipping AI products with amplitude. You wanna talk a little bit more about what you're seeing there? [00:13:39]

Joe: Yeah. I guess the key thing here is, for a lot of people is, okay, I can build the thing that calls OpenAI's API and then gives a response back. I'm nervous that I'm gonna be giving incorrect answers. I'm nervous that I don't really know how to measure whether the answers are incorrect. And I'm nervous that I'm not gonna be able to improve over time. So a lot of people we actually hear are nervous of giving thumbs up, thumbs down buttons because they're implying to their users that they're gonna be using this to improve the results. But they actually have no idea how to use that to improve the results in a meaningful way. And particularly when you've got multiple queries going off for one request, you've gotta then fine tune lots of different things in parallel. So it gets to be quite a technically complex sort of problem if you're not using great tooling that already exists for it. So that's, and then you have the extra layer of, I'm getting a bad result. I've tweaked my prompt template that I'm sending off to OpenAI. And now, has the result got better or worse? [00:14:35]

Swyx: I don't know. [00:14:36]

Joe: I don't know how to measure that. Except by thumbs up, thumbs down, which is a difficult measure in the first place. So that's where we can start saying, measuring the behavior of users once we've generated something for them. So have they gone and shared this content? Have they used this content? They actually gotten any value out of it? Not just have they pressed thumbs up. We can actually measure, are they getting value? Are they throwing it away from their behavior? But then using that through the Amplitude product, we can then tie that through to A-B tests, which is another product that Amplitude has. So then suddenly we start, and we're not doing this yet. This is sort of next on our list, is to start putting these prompts into our A-B test variants. So then we make a tweak in the UI, and it goes off, fires on the original, the control and our variant, our new variant. See, does it get fewer or more errors? Does it get fewer or more thumbs up, thumbs down? [00:15:30]

Alessio: Have you thought about, I don't know, A-Z testing, I guess? Like one of the limitations has been, well, people can only write so much copywrite to test, but now with these generative models, you can actually generate a lot of copy. And like you go to on-demand test more and more and more copy. Have you seen any maybe fun customer stories? Like can you, anything there? [00:15:50]

Jeffrey: Yeah, so actually there's a very good example of this. I don't know if I can share the actual customer, but actually from before the LLM days, where they literally generated the versions of the copy themselves, and they made their product basically adapt, you know, multi-arm bandit style of like, hey, here's all these different variations, like just go figure out the best one. At an internal hackathon, maybe two months ago, I built a prototype of what you're talking about, which is, okay, now replace the copy generation with an LLM. So just constantly generating new variations, and then multi-arm banditing to figure out which one's the best. I think that is probably the future of copywriting, where it's like, you don't actually need a whole lot of manual work anymore. It can, almost everything can happen automatically. And it's kind of the micro example in my head of this concept that we really like, which is self-improving products, where, you know, at some point, you know, someone has to say, hey, I'm gonna build a product that does this, you know, like a newsreader or something. But then, you know, after you have that, like the title of the newsreader, like the description of the sections, your navigation, all of that, in theory, you know, if you can give it some structure that the AI can play with, the LLM can manipulate all of that for you, and then use, you know, A-B testing, multi-arm bandits and all of that to kind of figure out what's best. And that generative AI kind of makes that last piece of like, what are my options possible? And that's super exciting for us. And we wanna be there, you know, to help you measure that, help you deploy that, and make that like the way people build products in the future. [00:17:14]

Alessio: I think I've talked about this on the podcast, but this idea of like just-in-time UIs, you know, like each type of user wants to interact in a different way. And like, what you're building is a way of that, right? Like, Amplitude has been really like dashboard-driven, kind of like a diagram-driven, showing the user flow. Now each user can say, hey, I don't really want the table. I just want the charts. Or like, I don't want the charts. I just want the data. What do you think about the future of like dashboards and like BI in general? But like, the analysts used to come up with like what you should be seeing. Now each user can ask their own questions. [00:17:47]

Jeffrey: Yeah, like the future of analytics, I think, is, you know, can go a few different paths. One thing that I want to, you know, counter against the whole LLM trend a little bit is I think when you get into really important and specific questions, you know, let's say you're writing like some complicated SQL or even code, you know, code and SQL are good because they're very specific, right? You can define your semantics very precisely. And that's something that I think, you know, when people start thinking about like natural language questions, they kind of take for granted. They're like, oh yeah, why doesn't it just, you know, figure out the precise semantics from my very ambiguous words? It's like, well, it's actually, in some senses it's possible, right? Because the precise semantics are not captured by your ambiguous natural language words. And so the way we think about it, at least today, you know, who knows what's going to change in the future is like natural language is a great interface to like get started. If you don't know what the underlying data looks like, if you don't know like what questions you should be asking, it is a very, very expressive way to start, get started. It's much easier than manipulating a bunch of things, much, much easier than writing SQL and all of that. But like once you kind of know what you want, it's very hard to like make it precise. It's actually easier to make SQL or code precise than it is natural language. And so that's a little bit of what we're thinking right now. So we think, you know, for sure the way that maybe many people will interface with analytics and data will turn into natural language because maybe the precision doesn't matter to them. But like at the end of the day, when you're trying to get, you're trying to sum up your revenue or something, it's like, you want to know that it's right. And you want to know the semantics that go into that. And like, that's why, you know, that's part of why data is hard. The semantics really do matter. They can make a huge difference in the output. And so there's a boundary there that I'm curious where it will push over time, but I don't think it's quite there yet. [00:19:33]

Joe: I think this is where models sort of can become more embedded as features rather than go off and do this thing, create this analysis for me and then come back, the collaborator model. Then we're saying this field, I'm not sure what should go in there. Can you make a suggestion? And then I'm going to go and refine it over time. So it's the sort of autofill, but guessing autofill, but then you still, you can tweak everything. This is one of the core design sort of principles that we've come up is yes, you've got to be able to explain what the model's doing. And as a human, I need to understand, a user I need to understand what is the model doing and why is it doing it? But I also need to be able to tweak it once it's done it. I don't want to feel like I've just said go and then I can't stop it and it's going to go off and do stuff. And that's sometimes how things like AutoGPT can feel. It's going and it's costing me OpenAI tokens and I have no idea what's going on. So yeah, I think a key thing is servicing all the individual things the model's doing and allowing users to tweak it, stop it, retry while it's going. [00:20:33]

Swyx: For me, one of the most challenging questions is something I think you guys have maybe thought about a lot which is chat. Ideally you want, like you could say naively, for example, you want to optimize time in app, but actually that's a sign of failure if the chat session is longer than it should be. Do you have any advice on, I'm sure you've dealt with this before pre AI era, but like what do you advise AI hackers to optimize for? Like what analytics should people be looking at? [00:21:03]

Jeffrey: Yeah, our general kind of philosophy as a company is to work with customers to identify north star metrics. Right, and like time in app is not good primarily because it doesn't actually correlate with your business outcomes most of the time. And to be fair, sometimes it does. Like if you're a social media app, maybe it does correlate really well and maybe it's not a bad metric then. But for a lot of other products, right, if you're trying to do the search, for example, or like time on search, like nobody wants that. It's like, yeah, what is your success rate? You know, how many, do you get them to come back and search in the future? Like that's much more interesting than the time of your session. And so, because you know, each time you can serve apps, right, that's your business. And so it's like, if you choose a metric that's well correlated with your business outcomes, then that's at least the first step to getting that right and not getting caught up in other vanity metrics that sound like they could be good to increase, but then, you know, they can sometimes lead to negative business outcomes, you know, and then you get the worst. You've optimized the wrong metric the whole time. And that's where tying in AI and product analytics makes a lot of sense. And it's really important because product analytics, these companies that are like our customers that are trying out building features that are LMs and they're not sure what to optimize for, optimize for the same thing you're already optimizing for. You're already measuring conversions. You're measuring how much value, hopefully, your customers are getting out of your product. So continue doing that and maybe find a way to tie the LLM feature to that and sort of through A-B tests and that sort of thing. And then on the chat specifically, chat is obviously for a business maybe rolling out a chat box based on LLMs. It can be really scary. And that's another sort of mental model of framing we've been thinking around is we find LLMs right now are most useful either when you come from, either when you have a narrow input space and a broad output space, because you can be very, you know exactly what format of data, what kind of data is gonna be passed in. That's probably not coming directly from a user. It's probably coming from a button click or a toggle switch or something. And then you can have a general output and you can provide templates and that sort of thing. And then the other way is broad input space, narrow output space. So that's free form text box. And you can provide a bunch of sort of clamping, framing, validation on the output to make sure that you're not spewing out, you know, poems about Hitler or whatever it is. You know, you can be really, really deliberate when you've got a small output space. Chat is large input space, large output space, which is really, really scary. If you're, as a company, you're not selling a chat product, you're selling a, you know, an analytics product with maybe a chat support bot or something. [00:23:37]

Swyx: Yeah, I think this is one of those opportunities. I always try to raise the awareness of this, that Copilot I think did a really interesting metric or North Star, which was how much code is kept or retained by the user. And for people who are Googling along, you can actually look for this blog post about reverse engineering Copilot internals. And they actually set up custom metrics around, you know, 30 seconds after a code snippet is accepted, one minute, two minute, three minute, all the way to five minutes. And you can sort of see it construct a curve of how long Copilot suggestions stick around. And from there, they can actually make statements like this, you know, evaluate the success of the products. It's pretty cool. [00:24:18]

Joe: One of the really nice things we found actually, we accidentally did this. So our chart building interface, heavily instrumented. It's a, we're Amplitude. So we instrument our product. We also, it's one of the main tools that our customers use. So it's really, really well instrumented. And so when we tied chart creation through asking a question through an LLM, and then we tied that to a chart, an output chart, we then automatically were able to tie every time someone edits any of the parameters to that generation. So then we know, we have really detailed RLHF data for, yeah, you got everything apart from the metric, right? But you got everything apart from this event that shouldn't have been there, because that's the one that got removed. So similar to the Copilot there. [00:25:00]

Alessio: And I want to make sure we open it up for questions, but like one last thing is about, everybody knows that small is beautiful. And when you think about what models to use and some of the parameters, like there's costs, there's latency, there's like accuracy. How do you think about using, you know, GPT-4 and some of those models versus using smaller ones that are fine-tuned? What are the trade-offs? [00:25:23]

Joe: Yeah, I guess right now we're very much in the, let's explore, let's try everything and just iterate as fast as possible, which is what general models are great for. We do have some smaller, not even fine-tuned, some smaller models that we've sort of borrowed from Hugging Face that we run internally for more specific tasks. And that's often sort of selecting specific values before we pass it to a general model right now, just because the general models are much easier to communicate with and they understand most of the words we use. It's not like we use a word and suddenly we get random outputs for no reason, the sort of gold magic up type thing. So they're generally less susceptible to that. So that's why we're iterating heavily on the general models. I think we absolutely have to move to some more specific models, particularly given inference on fine-tuned open AI models gets more expensive and slower the more you do it. So yeah, that's definitely a thing we're looking at and we're doing some internal stuff, but it's the next step or one of the next steps. [00:26:22]

Jeffrey: Yeah, to give a pseudo example of that, one of the hard things to help users within Amplitude is picking the right event to analyze. It's kind of your fundamental unit of analysis. And when a user comes in and let's say that's the first time they're using Amplitudes, someone else in their company has set up the product, so they don't know what the events are. Right now in Amplitude you get this massive dropdown and it's like, all right, there's a thousand things, like which one is the one I'm looking for. And sometimes the names are good and sometimes they're not. But one thing we did was, okay, yeah, feed that into open AI. Hey, tell me which event type best matches like this user's intent. That's like pretty good at that, right? So it's all language stuff, but it's a little bit slow and it's a little bit expensive to do that every time. And so we kind of fell back to, once we validated that that works, kind of fell back to a more traditional embedding-based approach. It's like, all right, compute all those embeddings. That's more work upfront because you have to go through your database of all of these things and you got to commit like that engineering work, but it's like you validate with the general model because it's just easy. It takes like an hour to figure out that it works. And then it's like, all right, can we do the same thing with embeddings? That's way faster, way cheaper and still has reasonable quality. Embeddings also have a nice quality that you can get like magnitude of things, whereas LLMs aren't great at giving you like, hey, it matches this much. It's kind of, you can ask it for an order and that's decent, but like, yeah, anything beyond that is pretty challenging. [00:27:42]

Alessio: How do you think about the importance of the model versus the data, right? There's like a lot of companies that have a lot of data, but not a lot of AI expertise or companies that are just using off the shelf model. How should companies think about how much data to collect? What data is meaningful? What isn't, any thoughts there? [00:27:59]

Jeffrey: Yeah, I think it's safe to say that both are really important, right? Like the evolution of LLMs really was a lot of model innovation. And so I don't want to downplay that. At the same time, I think the future of AI applications and doing really cool things with it will be in the data, partially because like, you know, ChatGPT has done such a huge advance, right? The LLMs model space has advanced like crazy in the last year. And so I think a lot of the untapped potential will be in data in the future. One thing that's particularly interesting to us is like we have a pretty unique data set, actually. It's a lot of first party behavior data, right? So if you're, you know, if you're Square, for example, you instrumented like the way that people interact with Square Cash and the wallet and the, you know, the checkout system. And like, those are very specific things. Like Square can't look elsewhere in the world for that stuff. And that's really interesting because, you know, to build models of user behavior, you need user behavior data. And it turns out there's not actually a lot of examples of user behavior data out there in the world. And so to Joy's point earlier about, you know, we have one of the best user behavior data sets in the world. And so if we want to build a model around that, I think it would be a super interesting one. So if you take an analogy to what ChatGPT does, it basically takes a bunch of language examples and it, you know, learns a bunch of abstract concepts, like how to, you know, prove math things or how to render in JavaScript. It's like, wow, that's very astonishing. They kind of prove, it's almost like a proof of concept to the world that if you train a sufficiently good, you know, transformer self-attention type model with a sufficiently large data set of, you know, hundreds of gigabytes of internet text, you'll learn really interesting abstract concepts. And so we want to apply that to our data set, right? Cat GPG is great because it's a proof of concept. If it didn't exist, you know, I would have told you, yeah, you can spend $10 million training this model on a data set, you'd probably not get anything interesting because we just have no idea. But because it exists, it kind of proves to the world that if you do this correctly, there is a ton of interesting value. And so that's what I think. And so, you know, amplitude is just one example of a very interesting data set that you will train something that's, you know, fundamentally very different from GPT or any LLM out there. And there's lots of other data sets out there. And I think that's where a lot of the interesting things will come once this kind of, this phase of like rapid model evolution kind of tapers out a little bit. And you'll see a lot of the more interesting applications there. [00:30:24]

Swyx: So I've never thought about this much, but you guys must do it a lot. Like what is the ethics or best practices around training on user data when they don't know they're being watched? Like, I mean, presumably they're fine with tracking and events, but like, do we tell them that we're going to train on their data? Is it okay? [00:30:50]

Joe: I guess there are a couple of things. One is PII. Doesn't go anywhere near the stuff, right? PII with strip and like, that's just a really important thing. [00:30:58]

Swyx: You still need an identifier for streams. [00:31:02]

Joe: Yeah, yeah. But in terms of training models, we don't want any of that to go in there because then you might accidentally, you know, like, hello, ChatGPT, please hallucinate me a social security number. That's dangerous. [00:31:11]

Swyx: Also PII makes it into prompts a lot. [00:31:14]

Joe: Sure, that's true. So then you have to strip that from your... So we have some experiments where we're stripping PII that is in places that shouldn't be, you know, descriptions of things. Sometimes people copy paste big long lists of email addresses into charts and things. But some of these things are actually pretty surprisingly easy to detect and strip out. So we can do that. And we have some layers that are stripping out that sort of replacing them with tokens. So the LLMs can still operate on them. But in terms of training this data, all that training is happening internally and we're not putting any sort of private data, personally identifiable information in. I don't know if there's anything you wanted to add there. Yeah, yeah. [00:31:54]

Jeffrey: We certainly think about this a lot and our customers think about a lot. Like when I think about user privacy with respect to tracking, there's kind of this big spectrum. Around the one end, it's like literally track nothing and, you know, the end of story. And like for people like that, I mean, that's cool. You know, they're not gonna use Amplitude. They may not like us very much. You know, that is what it is. And then on the other end of the spectrum is like, we're gonna track you across the entire internet and sell your data to everyone. And like, that's obviously bad. And like, there's lots of good reasons to think that's bad. First party behavioral data, I think is actually probably almost as far. Fully anonymized first party behavior data would be like kind of the minimum. It's like web server logs with no IP, no identifier, nothing. The problem is that you can't do a lot of interesting behavioral analysis without that. You can't tell if, you know, this person that came on this day was the same one that purchased later. And so like, you can't actually, it's much harder to make your product better if you don't have that. And so, you know, we're kind of set at this place where we have, you know, like pseudo anonymized first party data. And like, we don't sell the data. You don't mix data from, you know, different places on the internet through Facebook cookies or things like that. And, you know, our philosophy is like, that is actually the most important data to build a better product. It's not the most important data to advertise, which is why Facebook and Google do what they do, but it's the most important data to build a better products. And it kind of strikes the right balance between yeah, totally tracking everything that you're doing and like not having any information to make your product better. [00:33:19]

Swyx: Yeah, cool. And I think we're going to go to audience questions. So let's start warming them up soon. But I think we have some lightning round questions [00:33:29]

Joe: The audience is thinking of questions while we go. [00:33:31]

Alessio: The first one is, what's something that already happened in AI that you thought would take much longer to be here? [00:33:39]

Jeffrey: I don't know what the constraints on our lightning round, but I think maybe creativity is the best word where it's, you know, with the image generation stuff, text generation, you know, one thing that still blows my mind, I used to be a competitive like math guy and like there's this international math Olympiad problem in one of the papers and it solves it. And I'm just like, wow, I can solve this when I was spending all my life doing this thing. Like that level of creativity really blew my mind. And what's the takeaway? It's like maybe the takeaway is that creativity is not as, you know, as not as high entropy or high dimensional as we think it is, which is kind of interesting takeaway. But yeah, that one definitely surprised me. [00:34:21]

Joe: I guess there's something actually that maybe answering the inverse question that a lot of my friends were surprised happened quickly. And I was like, this is just braindead obvious. I've got a lot of friends in the AI safety space. So they're worried that in particular, X-risk, right, extinction risk, that AI is going to kill the human race. And they were like, oh no, what if an AI escapes containment and gets access to the internet? And then we get an LLM and the first thing we do is like, hey, also GPT, here's the internet. [00:34:48]

Swyx: So you thought, it's happening faster than you thought. [00:34:53]

Joe: Well, it's happening faster than, to me it makes sense, because I'm like one of the guys connecting it to the internet. And I'm like, I'm surprised that other people were surprised it was going to be so fast. [00:35:01]

Swyx: Yeah, so a bit of context, Joe and I, we've been adjacent to the EA community and they have like smoothly migrated to the X-risk community very quickly after SBF. [00:35:13]

Joe: Yeah, after SBF, yeah, that was fun. [00:35:16]

Swyx: Okay, so next question, exploration. What do you think is the most interesting unsolved question in AI? What's next? [00:35:30]

Joe: I guess like, is it going to keep getting better at the same rate? Is it going to, and that's just a super important question that's going to change. Like, depending on that answer, 50 startups are going to pivot or not pivot, right? [00:35:43]

Swyx: Which is what's next, literally. [00:35:45]

Joe: Literally, what's next? Like in a year's time, are the models similarly better than they have been so far? Or are we about to taper off or are we about to continue going linearly? [00:35:58]

Jeffrey: Yeah, I'll throw one out that is not necessarily about AI, but like, what's intelligence, right? And if you ask people 20, 30 years ago, maybe even longer now, it's like, yeah, chess. Chess is intelligence. And then chess got solved and like, ah, that's just brute force. And it's like, well, you know, creating creative images and writing, that's intelligence. Well, it's like, that's solved too. Maybe it's just, you know, if you have enough parameters, you can capture that. So like, what is intelligence? What does it mean to have an AGI? What does that actually mean? And then what the implications that are on for our understanding of humans and our brains. I've always thought that, you know, everyone is just a stochastic machine. And so, you know, is everything consistent in my mind?

Swyx: Free will and illusion. Exactly. [00:36:43]

Joe: I guess maybe like the scaling piece is like that intelligence as you scale is gets more and more expensive on the traditional stuff. But then there's something I think I saw yesterday on Hacker News. It was people actually getting a brain to play tic-tac-toe. Like by a brain, I mean, stem cells grown into brain tissue. And they were able to train it. And like that to me is very significant because suddenly the like metal computers limitations is not applied. And then now we've got all this intelligence. What is intelligence stuff on a squishy wet computer? That makes it even harder to ask and even harder to draw lines. [00:37:18]

Swyx: Yeah. Yeah. So famously, you know, language models are so much more inefficient than wet computers, as you say. And so if you can merge that, you know, the human brain runs on 30 Watts of power as it is my favorite fact. We're not anywhere close to that yet. [00:37:36]

Alessio: Before we get into Q&A, one last takeaway that you want everybody to think about. [00:37:41]

Jeffrey: Yeah, I'll do the one that we actually repeat in Inside Amplitude very often, not about AI, but I think it applies, which is it's early. It's sometimes hard to realize that when things are happening so fast, especially in the Bay Area, but like the ramifications of AI or in our case, product data and all that are gonna play out over the next many decades. And that's just, you know, we're very fortunate to be at the beginning of it. And so yeah, take advantage of it and keep reminding yourself that it's early. [00:38:15]

Joe: I guess mine would be, let humans be good at doing human things. Let machines be good at doing machine things and let machines be good at doing machine things and help humans be good at doing human things. And like, if you don't do that, then you're gonna be building something that's either not useful or it's very scary. So yeah, get machines helping humans, not the other way around. [00:38:39]

Swyx: Get machines helping humans. All right. With that, I think we're all gonna open up to questions. We're gonna toss you the mic. [00:38:45]

Audience #1: Yeah, hey, thanks for the insight into how you guys implemented your AI, you know, question asking chatbot and how have you converted into seven sub queries and then generate the data out. I've just, I got a peak my interest about how you guys exactly do it. Like Alessio asked, like, what exactly is the model that you guys are using? Are you converting it into your, what are these queries that you generate from a single English language? Is it possible to go a little deeper just from a curiosity perspective? [00:46:34]

Joe: So we have a custom query engine. So it's not SQL or anything that we're generating. We're generating a custom query output. So I guess the types of questions range. So things like chart type, are we doing a segmentation chart, a line chart or are we doing a funnel chart? You know, the number goes down over time or up over time or between a conversion between two events and there are various other types or metrics or, and then there's also the name. What should we name this chart that answers this question? So the way that's implemented in practice, you could use something like Lang chain to sort of chain these things together. But in our experience, I think Lang chain's a great tool for certain things and definitely really great for prototyping, but we found it quite restrictive. So we've ended up building sort of an internal, it's a very, very small wrapper, internal, we use TypeScript as well, framework that allows us to basically just write in code and infer within what we call a transaction, an inference transaction, which gets monitored as one, but then also all the individual inferences within it get monitored. So it's a bit like when you're writing a database transaction with most sort of, at least in the node ecosystem, the JavaScript ecosystem, where you sort of get a transaction object that you can operate on, and then you return your, or you return, you sort of commit your transaction. So we've got an interface like that, so we can just write pure TypeScript, await this response or await these responses. And then we've got a switch case. If it's a segmentation chart, go and do these with these queries. And then each of those inferences can be a different model. So we think in the future, maybe we have one query where we have some GPT-4 responses. We want some text responses. Maybe we also want to generate an image from that same query together, and then that gets bundled. So I don't know if that answers your question.

Audience #1: Yeah, I think so. Yeah, thank you. I think so. You said in future, you're going to use GPT-4. What are you using right now for? [00:48:33]

Joe: Right now, everything's GPT-3.5. We're moving around, and I think probably for some of the prompts, we'll use something like DaVinci. Some we might use GPT-4. Some we'll be using internal ones. And we also want to be able to degrade gracefully if a customer has told us they don't want us to send anything to OpenAI, then we can degrade to some internal models that maybe are some of the open source models that have been trained on smaller datasets. [00:48:57]

Audience #1: Gotcha, makes sense. Thank you. [00:48:58]

Jeffrey: Yeah, I think to add to that a little bit, the key is breaking down the problem sufficiently, because if you break down the problem enough, you can also provide it with some examples, which is super helpful, right? You know, GPT is quite good at zero shot, but within the context of our specific domain, it doesn't know what's going on. And so being able to break down the problem to, hey, select the type of chart. Don't generate me an entire chart definition. Select me the type of chart, and then select me the specific metric based on their query, and then giving it some examples. Select me the events and properties that I want to look at. By breaking it down and having very, very contextual prompts with respect to those examples, you get a lot higher quality output than trying to generate, like, you know, if you imagine generate, like, hey, generate me a whole SQL query with all, you know, here's like the schema of all my tables, now generate it entirely. It's like, it actually struggles with stuff like that, because it's just like kind of too much information and computation to come out of language. Now, maybe GPT-5 will be different, but like, that's the state of the art today. [00:49:57]

Swyx: I'll ask a follow-up to Joe. So you mentioned, you mentioned trying LangChain, but not needing it for production. Any other comments on tooling that are out there that's interesting to you? Do you use a embedding database, for example, or do you just use a regular database? [00:50:18]

Joe: Yeah, so we've actually been running embedding sort of similarity or vector search in production for multiple months, maybe even almost a year, and just like straight up Postgres, but now we're using PG Vector, which actually Jeffrey could probably speak more to about that decision and what that was like. [00:50:40]

Swyx: So this is a pretty hot take. At Amplitude scale, all you need is Postgres? [00:50:46]

Joe: We'd use many things other than Postgres. But I mean, we, this isn't rolled out for all customers and it's not necessarily getting sort of hit with a lot of traffic. And so the scale here is very different. Our usage scale is very different to our ingestion. [00:51:04]

Swyx: Yeah, yeah, yeah. [00:51:06]

Jeffrey: Just to clarify that a little bit more, we're not putting individual end user vectors or end event vectors. We're putting in taxonomies. So if I'm DoorDash, my taxonomy is add to cart, checkout, purchase, browse. That's the cardinality. And so that's actually small. It's on the order of tens of millions. And so yeah, you use stuff that in Postgres, no problem. Now, when we talk about large behavioral models or like actually embedding events, there are many, many trillions of those. And yeah, Postgres probably doesn't work there. [00:51:41]

Swyx: Yeah, actually I wanted to comment on this slightly before, which is separating taxonomies from the actual data is one way you protect your customers against prompt injection. It's something that Simon Willison has been talking about where you want to have like query for one thing, but essentially no knowledge of the actual underlying data, just the taxonomy. So it's good practice. [00:52:00]

Audience #2: Yeah, so you talked about a model which would be trained on user behavior data like amplitude GPT. It really piqued my interest and what capabilities would emerge? What do you think that you would find and what would be the first thing you would ask the model? That's a good question. [00:52:23]

Jeffrey: So we've thought about this a little bit and I think the, right, these are sequence, token prediction models. And so at the very least, I would hope for a much better, we have a predictions feature right now, which says, hey, given what a user has done over the last 90 days, do we think they're gonna belong to this cohort in the future or not? So that cohort might be people who churn, people who purchase, people who upsell, whatever the customer wants. We think it would be much better at tasks like that, right, because if it just has a very good understanding of behavioral patterns and what's gonna come next, it would be able to do that. That's exciting, but not that exciting. If I'm trying to think about like the analogies to what we see in LLMs, it's like, okay, yeah, what is the behavioral equivalent of like learning physics concepts, right? It's like, oh, I don't actually know, but it might be this understanding of patterns of sessions and how that like, for example, categorizing users in a unsupervised way seems like a very simple output for a model that understands user behavior, right? Here's all the users and if you wanna discriminate them by their ability to achieve some outcome in the future, like here's the best way to separate that group and here's why, right? Be able to explain at that level and that would be super powerful for customers, right? A lot of times what our customers do is, hey, these people came back the next day and these people didn't, why? What was different about them? And so we have a bunch of heuristics to do that, but at the end, there's something like, causal impact is like one of the holy grails of product analytics. It's like, what was the causation behind some observed difference in behavior? And I think, yeah, a large behavioral model will be much better at assessing that and be able to give you potentially interpretable ways of answering that question that are like really hard to do, really hard, really computationally intensive, really like noisy, distilling causation correlation is obviously super hard. Those are some of the examples. The other one that I am, I don't know if I'm optimistic about it, but we really interesting is, one of the things that amplitude requires today is manual instrumentation, right? You have to decide, hey, this clicking of a button, this viewing of page, these are important things. I'm naming them in this way. There's a lot of popular tools out there that kind of just record user sessions or like track DOM events automatically. There's a lot of problems with those tools because the data is incredibly noisy. It's just so noisy, right? A lot of times you just can't actually interpret it. And so it's like, oh, it's great because I don't need to do any work. But like, well, you also don't get anything out of it. It's possible that a behavioral model would be able to actually understand what's going on there by understanding your user behavior in a correctly modeled and correctly labeled sense, and then figuring out. I don't know if that's possible. I think that would make everyone's lives a lot easier if you could somehow ask behavioral questions of data without having to instrument. All of our customers would love that, but also all of them are instrumenting because they know that's definitely not possible today. [00:55:26]

Audience #2: This is really interesting. You're looking forward to the future. If you're gonna build it, it's gonna be amazing, yeah. [00:55:31]

Jeffrey: That's the goal, that's the goal. [00:55:33]

Audience #2: Awesome. [00:55:34]

Swyx: Thanks for listening. [00:56:09]

More recently, Yannic Kilcher mobilized his massive YouTube audience to generate 600k datapoints for OpenAssistant.

Some of them in Kenya, with an outsourcing company coincidentally named Sama (no relation. But Leila Janah is an inspiring social entrepreneur more people should remember)

Rumored to be making >$200m/year with a team of 11 and a community of 17.1million on their Discord!